Abstract

Massive open online courses (MOOCs) can attract very large numbers of students from different parts of the world, which makes learning analytics especially challenging. In this framework, predicting students’ performance is a key issue, but the high level of heterogeneity affects understanding and measurement of the causal links between performance and its drivers, including motivation, attitude to learning, and engagement, with different models recommended for the formulation of appropriate policies. Using data for the FedericaX EdX MOOC platform (Federica WebLearning Centre at the University of Naples Federico II), we exploit a consolidated composite-based path model to relate performance to engagement and learning. The model addresses heterogeneity by analysing gender, age, country of origin, and course design differences as they affect performance. Results reveal subgroups of students requiring different learning strategies to enhance final performance. Our main findings were that differences in performance depended mainly on learning for male students taking instructor-paced courses, and on engagement for older students (> 32 years) taking self-paced courses.

1 Introduction

Measuring student performance is especially challenging for massive open online courses (MOOCs), as their very distinctive structure and delivery have a great impact on how students approach MOOCs and how they perform. Unlike school and university courses, MOOCs are open and free and there is almost always no obligation to complete. MOOCs are also novel in that they are delivered online and have non-traditional structures that combine traditional lectures with learning and assessment materials, while interactions with classmates and teachers mainly take place through forums and discussion groups. In this framework, studying and predicting student performance is a challenge. Performance is a complex issue that depends on motivation, attitude, and engagement (Carannante et al. 2020; de Barba et al. 2016; Moore and Wang 2021; Phan et al. 2016; among others). For MOOCs, massive by definition, this complexity is greater, as it results from great sociodemographic and cultural heterogeneity among students. This suggests that the use of a single model may easily lead to biased outcomes.

While several studies refer to the importance of student sociodemographics in evaluating performance, most focus only on descriptions (Deng et al. 2020) or on their predictive effects on performance (Li 2019; Rizvi et al. 2019; Williams et al. 2018). Few studies have analysed the impact of sociodemographics on engagement, learning, and performance (Gameel and Wilkins 2019; Hood et al. 2015; Krasodomska and Godawska 2020; Shao and Chen 2020), and the strength and direction of the causal relationship between engagement, learning, and performance have received little attention. Hence, a clear gap is evident in our understanding of how sociodemographic variables for MOOC students affect the causal relationship between engagement, learning, and performance.

The aim of the paper is to investigate the impact of sociodemographics on engagement, learning, and performance, focusing on group effects, i.e., on the study of possible differences according to the personal characteristics of students. We accordingly extend the study by Carannante et al. (2020), who used composite-based path modelling (CB-PM) (Esposito Vinzi et al. 2010; Hair et al. 2016; Wold 1985) to measure the main factors affecting MOOC student performance. Starting from a conceptualization of performance and its main drivers (engagement and learning), as discussed in Carannante et al. (2020), we analysed differences in the structural model according to student profile (gender, age, and country of origin) and course instructional design. Our ultimate aim was to analyse heterogeneity in student performance from the perspective of learning and engagement behaviour while controlling for sociodemographic profiles. Our analysis points to the existence of student subgroups requiring different engagement and learning strategies to enhance their final performance.

We addressed this heterogeneity problem in a structural model using the methodologically grounded multigroup analysis (MGA) (Hair et al. 2017) and pathmox analysis (Lamberti et al. 2016; Lamberti et al. 2017), which we also comment on in terms of strengths and weaknesses. As data we used tracked log files of students enrolled in two MOOCs offered by FedericaX, the EdX MOOC platform of the Federica WebLearning Centre at the University of Naples Federico II.

The paper is organized as follows. In Sect. 2, we conceptualize students’ performance and its main drivers, namely, engagement and learning, review the effect of sociodemographics, and establish our research hypotheses. In Sect. 3, we describe the data and the methodologies. In Sect. 4, we report our main findings and the tests for assessing heterogeneity. Finally, Sect. 5 contains our discussion and possible lines of further research.

2 Theoretical background

2.1 MOOC student engagement, learning, and performance

Social-cognitive theory (Dweck 1986) suggests that academic performance is strictly related to students’ behaviour and their desire to achieve a particular goal. Behaviour has been defined differently according to contexts and using constructs such as learning, engagement, and self-efficacy (Carannante et al. 2020; Fenollar et al. 2007; Kahu 2013; Guàrdia et al. 2006; Tezer et al. 2018; van Dinther et al. 2021). In this study, we exploited the conceptual model established by Carannante et al. (2020), as framed in social-cognitive theory, that relates student performance to two main drivers: engagement and learning.

Carannante et al. (2020) reviewed several definitions, conceptualizations, and measurements from the literature regarding engagement and learning as key drivers underpinning student performance.

Engagement, which reflects students’ involvement in a course, has been defined using behavioural, emotional, cognitive, socio-political and holistic perspectives. According to Tani et al. (2021) and Azevedo (2015), behavioural engagement is reflected in actions taken in order to learn; the emotional aspect covers feelings related to coursework, teachers, and the institution; the cognitive aspect covers the desire to acquire skills; the socio-political aspect considers the socio-political context influencing students such as institutional culture; and finally, the holistic aspect includes all previous perspectives. Adopting the emotional perspective on engagement, we quantify how the student approaches the MOOC taking into account time-based dimensions: regularity (how time is spent on the platform and how a learning roadmap is organized), and procrastination (failure to organize learning).

Learning is the process of acquiring and modifying knowledge, skills, values, and preferences. Following Azevedo (2015), we analyse this construct by considering frequency-based actions (number of study activities), time-based actions (time spent studying), and interactions (forum participation and social learning aspects) that underpin learning (Lee 2002; Reed and Oughton 1997; Song et al. 2014).

Performance has a broad range of definitions but, in relation to MOOCs, is typically reflected in course completion (Conijn et al. 2018), whether in submitting a final exam, earning a course certificate, or showing a particular attitude in the final week of the course (Allione and Stein 2016; Conijn et al. 2018; Ramesh et al. 2014). However, considering that many students do not complete MOOCs, it is important to understand whether they at least manage to achieve some learning. Following Carannante et al. (2020), we therefore define performance according to correct responses to a teacher questionnaire.

Concerning the causal relationship among these three constructs, we consider that engagement and learning are both positively related to performance, as already documented by Carannante et al. (2020), and supported by several other authors (Conijn et al. 2018; de Barba et al. 2016; Hadwin et al. 2007; Lan and Hew 2020; Maya-Jariego et al. 2020; Moore and Wang 2021; Phan et al. 2016; Tuckman 2005; You 2016; Vermunt 2005).

2.2 MOOC student heterogeneity

While there are several possible sources of heterogeneity that determine MOOC student performance, here we focus on gender, age, and country of origin (as sociodemographic factors), and course design. We design and formulate our research hypotheses below, each supported by a brief review of the literature and subsequently verified in our empirical analysis.

2.2.1 Gender

Gender differences have been widely investigated in the education literature, including, more recently, in the MOOC framework. Several studies show that men and women approach online education differently in terms of motivation, study habits, and communication behaviours, and, accordingly, perform differently (e.g., Deng et al. 2020; Gameel and Wilkins 2019; Rizvi et al. 2019; Sullivan 2001; Taplin and Jegede 2001). The gender effect in MOOCs is also reflected in a different use of technologies. For example, men use the internet more for entertainment and functional purposes, while women use it more to enrich interpersonal communication (Weiser 2000). Another example is blogging, which women use for human interaction purposes, and men for information purposes (Lu et al. 2010). A gender effect has been observed regarding the impact of engagement and learning on online course performance, even if findings do not always agree on the type of effect (Deng et al. 2020; Gameel and Wilkins 2019; Lietaert et al. 2015; Wang et al. 2011). However, as reported by Veletsianos (2021), gender differences can be identified in the way male and female students approach online courses. Male students tend to be more task-oriented and outcome-focused, while female students tend to procrastinate less and are more likely to study at fixed times and locations. According to this literature review, we hypothesize as follows:

H1a

The effect of engagement on performance is greater in women students.

H1b

The effect of learning on performance is greater in men students.

2.2.2 Age

Online learners are heterogeneous in terms of age (Dabbagh 2007), and it is widely recognized that age determines different approaches to learning. Williams et al. (2018) reported a positive association between older students and MOOC participation. This difference, suggesting greater motivation in older students, is because they are more goal-oriented and self-directed. However, younger students are more dynamic and responsive to technological innovation, while younger online learners are also reported to perform significantly better in knowledge tests (Lim et al. 2006). Deng et al. (2020) reported how age is frequently investigated from a descriptive perspective, with few extant studies analysing the effect of age on the causal relationship between engagement, learning, and performance. Guo and Reinecke (2014) observed a significant effect of age on behavioural engagement, with older learners engaging more with learning materials and more likely to follow non-linear, self-defined learning paths than younger learners. Timms et al. (2018) found that older students reported higher levels of engagement with the course. Pellizzari and Billari (2012), in contrast, show that younger students tend to be more learning-oriented, to devote more time to studying, and to perform better at university. Accordingly, we hypothesize as follows:

H2a

The effect of engagement on performance is greater in older students.

H2b

The effect of learning on performance is greater in younger students.

2.2.3 Country of origin

Differences in cultural values and educational systems undoubtedly lead to different perceptions of learning depending on the country of origin (Gameel and Wilkins 2019; Shafaei et al. 2016). The literature has extensively analysed differences in the learning styles of students from different cultures, with some researchers focusing on foreign students educated in Western countries. Zhao et al. (2005) found that foreign students are more engaged than domestic students in educational activities. For first-year foreign students at an Australian university, Ramsay et al. (1999) highlighted difficulties in understanding lectures, mainly due to language. In an analysis of five MOOCs offered in English and Arabic taken by students from several regions (Europe, North America, Asia and the Pacific, Arab states, Latin America, and the Caribbean), Gameel and Wilkins (2019) found significant differences in engagement: Arab students, for instance, showed lower engagement than North American and European students, but greater engagement than Latin American and Caribbean students. Deng et al. (2020) also found significant differences among students from different countries. In particular, they found that European students were less engaged than North American and Asian students. Accordingly, we hypothesize as follows:

H3

The effect of engagement on performance is less in European students than in students from other countries.

2.2.4 Course design

According to Endedijk et al. (2016), the instructional design of a MOOC is crucial to completion. Approaches proposed to improve learner satisfaction and performance can mainly be traced back to two broad categories: classical instructor-paced learning (characterized by fixed schedules, deadlines, and extensive interaction with teachers), and self-paced learning (greater learner control that largely removes the time-driven focus of fixed schedules). In investigating the impact on student behaviour of both approaches, Watson et al. (2018) found that the self-paced approach is more likely to result in greater learning satisfaction and gains than the instructor-paced approach. In highlighting the importance of MOOC course design, Fianu et al. (2018) showed that course design predicted usage, while Goopio and Cheung (2020) showed how poor course design explained high dropout rates. Concerning the effect of course design on the relationship between learning, engagement, and performance, the literature is limited. However, as indicated by Lim (2016), self-paced courses, while meeting the flexibility and learning needs of many students, tend to be associated with procrastination. Thus, greater student diligence and regularity in self-paced courses may be necessary to ensure adequate performance. Accordingly, we hypothesize as follows:

H4

The effect of engagement on performance is greater in students attending self-paced courses.

3 Research design

3.1 Data

Since our analysis refers to all students registered for FedericaX, the edX MOOC platform of the Federica WebLearning Centre at the University of Naples Federico II, no sampling method was applied.

Courses are offered in instructor-paced versions and self-paced versions. The instructor-paced courses, usually integrated into an on-site course delivered in blended mode, are strictly scheduled, with fixed dates regarding assignments, materials, and exams, and a deadline for learners to complete the course and obtain certification. For the self-paced courses, since all course materials are available as soon as the course starts and there are no due dates for assignments and exams, learners can progress through the MOOC at their own pace and obtain a pass grade even without completing all the course materials.

Given that the study was focused on comparing MOOC students by gender, age, and country of origin, and in terms of course design, the study was limited to students attending the same MOOC platform. As pointed out by Ngah et al. (2022), results would not be comparable if different populations were sampled, as they would have different settings.

As drivers of student performance, engagement and learning were measured using 17 indicators. Learning was measured by the number of actions undertaken to acquire knowledge. Engagement, representing the emotional perspective, was quantified by how learners approached the MOOC. Both are complex constructs that were modelled as second-order constructs built on lower-order dimensions, namely, frequency-based actions, time-based actions, and interactions for the learning construct, and regularity and non-procrastination for the engagement construct. The outcome, performance, was measured from the rate of correct responses to a teacher questionnaire.

A complete description of the indicators is available in the original article by Carannante et al. (2020). Here we consider only a subset of the original data: students registered for the MOOC but not active were excluded from our analysis, and some indicators (average backward rate, average time spent on video, delay, rate of return, rate of videos watched, rate of rewatching, lesson ordering) were excluded due to low reliability (i.e., the correlations between these indicators and constructs were too low). Univariate statistics, reported in Appendix Fig. 4, show the typical skewed distribution of online courses.

As reported by Ngah et al. (2022), sample size is crucial for quantitative studies based on a composite-based path modelling (CB-PM) approach. According to the partial least squares (PLS) literature, sample size is determined by model complexity and is calculated based on the power of analysis (Ngah et al. 2019). As proposed by Gefen et al. (2011), and according to the table developed by Green (1991), with a power of 80%, moderate effect size, and p = 0.05, the minimum sample size is 85. Thus, since our sample included 3578 students, sample size was not an issue. Furthermore, regarding the sources of heterogeneity (gender, age, country of origin, and course design), the smallest segment was composed of students from Africa and Oceania in the country of origin variable (n = 756, 21.13%), again higher than the threshold of 85, and therefore also confirming an adequate sample size for the subsamples.
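The subsample check described above is simple arithmetic, sketched here in Python. The three figures are those reported in the text; the constant and function names are illustrative only.

```python
# Figures reported in the text; names are illustrative.
TOTAL_STUDENTS = 3578
SMALLEST_SEGMENT = 756   # students from Africa and Oceania
MIN_SAMPLE_SIZE = 85     # threshold quoted from Green (1991)


def meets_minimum(n: int, threshold: int = MIN_SAMPLE_SIZE) -> bool:
    """Return True when a (sub)sample reaches the minimum size."""
    return n >= threshold


share = 100 * SMALLEST_SEGMENT / TOTAL_STUDENTS
print(f"smallest segment: {share:.2f}% of the sample")
print("full sample adequate:", meets_minimum(TOTAL_STUDENTS))
print("smallest segment adequate:", meets_minimum(SMALLEST_SEGMENT))
```

Because the smallest segment already exceeds the threshold, every other segment of the four variables does as well.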

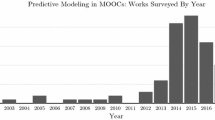

As sources of heterogeneity (see Fig. 1), we considered student gender, age, and country of origin, along with course design (instructor-paced or self-paced). About two-fifths of the students were male (40.55%), most were aged between 26 and 32 years (48.69%), and most attended a self-paced course (73.09%). According to country of origin, 31.11% were Asian, 26.10% were North American, 21.66% were European, and 21.13% were from countries in Africa and Oceania.

3.2 Methods

To estimate the causal relationship between the engagement, learning, and performance constructs, we combine PLS path modelling (PLS-PM) (Hair et al. 2016; Wold 1985) with the repeated indicators approach (Bradley and Henseler 2007). PLS-PM is a component-based approach to structural equation modelling (SEM). It connects observed variables with latent variables through a system of linear relationships (Esposito Vinzi et al. 2010). Each latent variable (also called a construct) is estimated using specific linear combinations of observed variables (also called indicators or manifest variables). Simultaneously, the relationships between constructs are quantified by applying a set of sequential multiple linear regressions. Two models are computed: the measurement (outer) model, relating observed variables to latent variables, and the structural (inner) model, reflecting the strength and direction of relationships among the latent variables.
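As a compact summary of the two sub-models, and assuming that each construct is estimated as a weighted composite of its standardized indicators, the model for the three constructs considered here can be written as follows. The notation ($w_{jk}$ for outer weights, $\beta_1$ and $\beta_2$ for path coefficients, $\zeta$ for the structural residual) is introduced here for illustration and does not come from the original text.

```latex
% Measurement (outer) model: construct j as a weighted composite of its K_j indicators
\hat{\xi}_j = \sum_{k=1}^{K_j} w_{jk}\, x_{jk}

% Structural (inner) model: performance explained by engagement and learning
\xi_{\mathrm{performance}} = \beta_{1}\, \xi_{\mathrm{engagement}} + \beta_{2}\, \xi_{\mathrm{learning}} + \zeta
```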

Each set of indicators can be related to its own latent variable in either a reflective or formative way. Indicators are reflective when it is hypothesized that the latent variable causes the indicators, while indicators are formative when the latent variable is generated (i.e., formed) by the indicators. Reflective indicators need to be highly correlated, but not formative indicators, as each indicator describes a different aspect of the latent variable.

PLS-PM, whose use has recently increased in higher education research (Ghasemy et al. 2020), was considered suitable for our research as it meets three main requirements: (1) the research is exploratory; (2) the main goal is prediction; and (3) the data are not normally distributed. PLS-PM maximizes explained variance without strong assumptions (Hair et al. 2016), unlike covariance-based path modelling, which estimates latent variables as common factors explaining co-variation between the associated indicators (Sarstedt et al. 2016), thereby reflecting strong assumptions regarding data distribution and sample size.

In our analysis, as established in the conceptual model of Carannante et al. (2020), engagement and learning are second-order latent variables, i.e., their indicators are themselves latent variables. In the absence of directly measured indicators, we therefore need to identify them using a specific strategy. Following Carannante et al. (2020), we used the repeated indicator approach, which uses all the manifest variables of the first-order latent variables as items for the second-order latent variables. In our application, as indicated by Carannante et al. (2020), the second-order constructs exploit the regularity and non-procrastination manifest variables for engagement, and the frequency-based activities, time-based activities, and interaction manifest variables for learning.
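The block construction behind the repeated indicator approach can be sketched in a few lines of Python. The indicator names below are hypothetical placeholders (the actual indicators are described in Carannante et al. 2020); only the construct hierarchy is taken from the text.

```python
# Hypothetical indicator names, two per first-order construct for brevity.
first_order_blocks = {
    "regularity": ["reg_1", "reg_2"],
    "non_procrastination": ["nonproc_1", "nonproc_2"],
    "frequency_based": ["freq_1", "freq_2"],
    "time_based": ["time_1", "time_2"],
    "interaction": ["inter_1", "inter_2"],
}

# First-order constructs underlying each second-order construct (from the text).
hierarchy = {
    "engagement": ["regularity", "non_procrastination"],
    "learning": ["frequency_based", "time_based", "interaction"],
}


def repeated_indicators(hierarchy, blocks):
    """Give each second-order construct all the manifest variables of its
    first-order constructs, in order."""
    return {
        second: [ind for first in firsts for ind in blocks[first]]
        for second, firsts in hierarchy.items()
    }


second_order_blocks = repeated_indicators(hierarchy, first_order_blocks)
for construct, indicators in second_order_blocks.items():
    print(construct, indicators)
```

Each manifest variable therefore appears twice in the model specification: once in its first-order block and once in the block of the corresponding second-order construct.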

The effects of the categorical variables were analysed using both MGA and pathmox analysis. MGA (Hair et al. 2017) investigates the effect of a categorical variable in CB-PM through three sequential steps: (1) the dataset is divided into groups according to the levels of the categorical variable; (2) a specific PLS-PM model is estimated for each group; and (3) differences between group coefficients are evaluated. While the PLS-PM literature describes several tests to compare differences (Hair et al. 2017), we used PLS-MGA (Henseler et al. 2009). As for pathmox analysis (Lamberti et al. 2016, 2017), this identifies segments distinguished by different relationships among constructs. Binary segmentation produces a tree with different models in each resulting node. The whole dataset is associated with the root node, and is then, through an iterative procedure, recursively partitioned to identify levels of the categorical variable yielding the two most significantly different CB-PMs, which are then associated with the two child nodes detected at each step. The two models are compared using a global comparison test based on Fisher’s F-test for equality in regression models, as proposed by Lebart et al. (1979). Partitioning ends (1) when no further significant differences are detected; (2) when tree depth reaches a fixed maximum level; or (3) when the tree generates child nodes with a small number of observations (in general, less than 20% of the original sample). Unlike MGA, this technique allows several categorical variables to be analysed simultaneously to identify the most different subgroups. Furthermore, the selection order of the categorical variables during partitioning yields a ranking that reveals the relative importance of variables in defining groups.
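The comparison of the two child-node models can be illustrated with a Chow-type F statistic for equality of regression coefficients. The Python sketch below is a simplified stand-in, not the pathmox implementation: it assumes construct scores are already available, covers only the single structural equation of this model (performance on engagement and learning, with an intercept), evaluates one candidate split rather than searching over all of them, and uses simulated data. All function names are hypothetical.

```python
import random


def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def ssr(rows):
    """Residual sum of squares of an OLS fit of performance on an intercept,
    engagement, and learning. Each row is (engagement, learning, performance)."""
    X = [[1.0, eng, lrn] for eng, lrn, _ in rows]
    y = [perf for _, _, perf in rows]
    k = 3
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(k)]
    beta = solve(xtx, xty)
    return sum((yi - sum(b * v for b, v in zip(beta, r))) ** 2 for r, yi in zip(X, y))


def split_f_statistic(group_a, group_b, k=3):
    """Chow-type F statistic for H0: both groups share the same k coefficients."""
    pooled = ssr(group_a + group_b)
    separate = ssr(group_a) + ssr(group_b)
    n = len(group_a) + len(group_b)
    return ((pooled - separate) / k) / (separate / (n - 2 * k))


def simulate(n, b_eng, b_learn, rng):
    """Simulated construct scores (illustrative only, not the study data)."""
    rows = []
    for _ in range(n):
        eng, lrn = rng.gauss(0, 1), rng.gauss(0, 1)
        rows.append((eng, lrn, b_eng * eng + b_learn * lrn + rng.gauss(0, 0.5)))
    return rows


rng = random.Random(0)
f_different = split_f_statistic(simulate(300, 0.1, 0.8, rng), simulate(300, 0.8, 0.1, rng))
f_same = split_f_statistic(simulate(300, 0.2, 0.6, rng), simulate(300, 0.2, 0.6, rng))
print(f"F (different paths): {f_different:.1f}")
print(f"F (same paths):      {f_same:.1f}")
```

A large F statistic indicates that the two candidate child nodes have clearly different path coefficients. In a tree-building procedure of this kind, the statistic would be computed for every admissible binary split and the most significant one retained.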

Before applying MGA in PLS-PM, we evaluated measurement invariance to verify that each latent variable was measured in the same way across the groups emerging at the different levels (sociodemographic variables and course design). Measurement invariance ensures that a dissimilar group-specific model estimate does not depend on diverse content and meanings of latent variables across groups. A specific procedure to verify measurement invariance in the PLS-PM framework, proposed by Henseler et al. (2016), is measurement invariance of composite models (MICOM), which involves three hierarchical steps, as follows: (1) configural invariance, which ensures the same latent variable specifications, exists when latent variables are equally parameterized and estimated across groups; (2) compositional invariance means that latent variable scores measure the same construct across groups; and (3) equality of latent variable mean values and variances ensures that data can be pooled across groups. If all three steps are confirmed, then full measurement invariance is established; if only the first two steps are confirmed, then partial measurement invariance is established. Confirmation of steps (1) and (2), i.e., at least partial measurement invariance, is a necessary condition for performing MGA. A practical guideline for applying the MICOM procedure is provided by Hair et al. (2017), while Henseler et al. (2016) provide more details on methodological aspects.
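The hierarchical logic of the three MICOM steps can be summarized as a small decision function. This is only a sketch of the decision rule as stated above; the function name and return labels are illustrative, and the three inputs are assumed to be the outcomes of the corresponding tests.

```python
def micom_outcome(configural: bool, compositional: bool,
                  equal_means_variances: bool) -> str:
    """Map the outcomes of the three hierarchical MICOM steps to the level
    of measurement invariance."""
    if not (configural and compositional):
        # Steps (1) and (2) are the necessary condition for MGA.
        return "none"
    if equal_means_variances:
        return "full"      # data can be pooled across groups
    return "partial"       # group comparison through MGA is admissible


def mga_admissible(outcome: str) -> bool:
    """MGA requires at least partial measurement invariance."""
    return outcome in ("partial", "full")


print(micom_outcome(True, True, False))   # partial
```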

4 Results

Our exploration of possible sources of heterogeneity in MOOC student performance started with the results of the PLS-PM model, as proposed by Carannante et al. (2020), estimated for the whole sample of students (the global model). We then tested the hypotheses defined in Sect. 2.2 to determine if the effect of engagement and learning on performance varies according to gender (H1a, H1b), age (H2a, H2b), country of origin (H3), and course design (H4).

4.1 Global analysis

Before estimating the model, we analysed the indicator relationships to prevent possible problems related to common method bias (CMB). Following Lamberti (2021), we performed Harman’s one-factor test, loading all principal constructs into a principal component factor analysis with the number of factors set to one; CMB is not considered a concern when this single factor explains less than 50% of the variance. The absence of CMB was confirmed, as the single factor explained 40% of the variance.
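A minimal sketch of Harman’s one-factor test follows, assuming that the single factor is taken as the first principal component of the indicators’ correlation matrix, so that its share of variance is the largest eigenvalue divided by the number of indicators. The data are simulated and the function names are hypothetical; the largest eigenvalue is approximated by power iteration to keep the example dependency-free.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation between two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def first_factor_share(columns, iterations=500):
    """Share of total variance captured by the first principal component of
    the correlation matrix (largest eigenvalue / number of indicators)."""
    k = len(columns)
    corr = [[pearson(columns[i], columns[j]) for j in range(k)] for i in range(k)]
    v = [1.0] * k
    for _ in range(iterations):  # power iteration towards the leading eigenvector
        w = [sum(corr[i][j] * v[j] for j in range(k)) for i in range(k)]
        norm = math.sqrt(sum(c * c for c in w))
        v = [c / norm for c in w]
    eigenvalue = sum(v[i] * sum(corr[i][j] * v[j] for j in range(k)) for i in range(k))
    return eigenvalue / k


# Simulated indicators (illustrative only, not the study data).
rng = random.Random(1)
n, k = 500, 6
common = [rng.gauss(0, 1) for _ in range(n)]
biased = [[f + 0.3 * rng.gauss(0, 1) for f in common] for _ in range(k)]
independent = [[rng.gauss(0, 1) for _ in range(n)] for _ in range(k)]

for label, data in (("shared method factor", biased),
                    ("independent indicators", independent)):
    share = first_factor_share(data)
    print(f"{label}: first factor explains {share:.0%}; CMB concern: {share > 0.5}")
```

Under this reading, the 40% reported in the text falls below the 50% threshold, which is the basis for concluding that CMB is absent.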

We estimated the model depicted in Fig. 2 as a global model, used in the following subsections to conduct MGA and pathmox analysis. The model, consistent with results reported in Carannante et al. (2020), confirms that learning (β = 0.622, p < 0.001) was the main driver of performance, while engagement had a smaller impact (β = 0.203, p < 0.001). Considering reflective latent variables, we observed satisfactory results for unidimensionality, internal consistency, and discriminant validity (see Appendix Tables 6, 7, 8 for details). The coefficient of determination, R² = 0.626, reflected adequate model predictive power.

We next explored the effect of sociodemographic variables, given that engagement and learning may define different student performance subgroups.

4.2 Multigroup analysis

Following Hair et al. (2017), we ensured that engagement, learning, and performance were defined using the same set of indicators in all groups using a reflective scheme (configural invariance). Furthermore, we verified that engagement, learning, and performance scores measured the same constructs across groups (compositional invariance), which, from a technical perspective, meant calculating the correlations between scores, which should be 1 if the scores measure the same constructs across groups. We then compared these with the confidence intervals (CIs) obtained through permutation of the groups. The fact that all score correlations fell within the permutation-based CIs guaranteed compositional invariance. Finally, we tested for equal means and variances across the groups, comparing means and variances with the CIs obtained through permutation of the groups, and finding differences that, in most cases, confirmed the existence of different segments according to gender, age, country of origin, and course design. Results of the invariance procedure, steps (2) and (3), are reported in Appendix Tables 9, 10, 11 and 12.
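The permutation logic used in these checks can be sketched for the simplest case, the comparison of mean composite scores between two groups. The Python example below is an illustration under stated assumptions: it takes composite scores as given, uses simulated data, and covers only the means comparison of step (3); the function name and defaults are hypothetical. The comparison of variances and the score correlation of step (2) follow the same pattern with a different statistic.

```python
import random


def mean(values):
    return sum(values) / len(values)


def permutation_interval(scores_a, scores_b, n_permutations=1000, level=0.95, seed=0):
    """Observed difference in mean scores and the permutation-based interval
    for that difference when group labels are reassigned at random."""
    rng = random.Random(seed)
    observed = mean(scores_a) - mean(scores_b)
    pooled = list(scores_a) + list(scores_b)
    n_a = len(scores_a)
    diffs = []
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # random reassignment of observations to groups
        diffs.append(mean(pooled[:n_a]) - mean(pooled[n_a:]))
    diffs.sort()
    tail = (1 - level) / 2
    lower = diffs[int(tail * n_permutations)]
    upper = diffs[int((1 - tail) * n_permutations) - 1]
    return observed, lower, upper


# Simulated composite scores (illustrative only, not the study data).
rng = random.Random(42)
group_a = [rng.gauss(0, 1) for _ in range(200)]
group_b_shifted = [rng.gauss(1, 1) for _ in range(200)]

obs, lo, hi = permutation_interval(group_a, group_b_shifted)
print(f"difference {obs:.2f}, permutation interval [{lo:.2f}, {hi:.2f}]")
print("equal means supported:", lo <= obs <= hi)
```

An observed difference outside the permutation interval, as in the simulated shifted group, indicates unequal means across groups, which corresponds to partial rather than full measurement invariance.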

Following Cheah et al. (2023), we verified measurement model properties for each segment defined by the levels of the categorical variables. Results are available as supplementary materials posted on the journal website.

Results for each of the considered variables in the MGA are presented in Table 1 (H1a and H1b, gender), Table 2 (H2a and H2b, age), Table 3 (H3, country of origin), and Table 4 (H4, course design). As drivers of student performance, for gender we found significant differences for both engagement and learning (p = 0.041 and p = 0.038, respectively); learning was significantly more important for men than for women, while the reverse occurred with engagement. For age (H2a and H2b), we found no significant differences. For country of origin (H3), we found differences between North American and European students for engagement, which was significantly greater for North American students (p = 0.037), but was not significant for Europeans. Comparing European students with students from other countries, engagement and learning were also both significantly different (p = 0.05 and 0.038, respectively): learning was more important for European students, while engagement was more important for students from other countries. Finally, for course design (H4), we found significant differences for both learning and engagement (p = 0.035 and p = 0.024, respectively), with learning significantly more important for instructor-paced students, and engagement significantly more important for self-paced students.

Summarizing, the MGA evidence supported H1a and H1b (gender), H3 (country of origin), and H4 (course design), but not H2a and H2b (age). However, although there were important differences in the relationships between engagement, learning, and performance when we considered sociodemographic variables and course design, our analysis provided only a partial picture of the effects of heterogeneity, as the variables were considered only one at a time, whereas they could simultaneously exert effects on specific subgroups of students. Furthermore, we could not identify the specific aspects that differentiated students according to the four variables. Therefore, to address these issues, we performed pathmox analysis.

4.3 Pathmox analysis

Results for the pathmox analysis, carried out using the categorical variables (gender, age, country of origin, and course design) as input variables for segmentation, are shown in Fig. 3 and Table 5. The root node reflects the global model estimated for the whole sample (n = 3578), while the four terminal nodes identify four different local models, labelled LM4, LM5, LM6, and LM7, and described further below.

The algorithm selected course design as the variable with the greatest discriminant power (p < 0.001), i.e., there was a clear distinction between instructor-paced students (Fig. 3 left, LM2) and self-paced students (Fig. 3 right, LM3). Instructor-paced students were further differentiated by gender (p < 0.001), defining two groups: men (LM4) and women and undeclared gender (LM5), while self-paced students were further differentiated by age (p < 0.001), as younger and older than 32 years (LM6 and LM7, respectively). The CB-PMs associated with the four LMs are presented in Table 5. Results show that, in defining performance, and unlike the finding for the whole sample, engagement in instructor-paced MOOCs did not have a significant impact on males (LM4), while engagement and learning were both crucial for females and undeclared gender (LM5). In this model, the difference in the effect of learning and engagement was lower than in the global model (0.069 vs. 0.302). In self-paced MOOCs, younger students (LM6) presented similar effects for learning and engagement, while for older students (LM7), engagement was more important than learning. Both the global model and the local models show satisfactory R² values.

Summarizing, pathmox ranks the variables by discriminant power in the order course design, gender, and age, with MOOC student performance differing depending on the identified subgroups. The most significant differences were found for male instructor-paced students (LM4) and older self-paced students (LM7); for LM4, only learning significantly affected performance, while for LM7, engagement especially marked performance. Pathmox therefore complemented the MGA by extending the comparison reflected in Fig. 1 to different student subgroups.

5 Discussion

To evaluate whether MOOC performance was affected by gender, age, country of origin, and course design as possible sources of heterogeneity, we used a twofold approach based on MGA and pathmox analysis. Each categorical variable (gender, age, country of origin, and course design) was analysed using MGA to explore differences between groups and to test for effects on the causal learning-engagement-performance chain, while the most important sources of heterogeneity in shaping differences between students were identified using pathmox analysis. Our research therefore reflects an innovative focus on the heterogeneity effect in the relationship between engagement, learning, and performance in MOOC students.

MGA allowed us to analyse the effect of each variable on performance and to check the extent to which our results confirm previous research findings. Regarding gender, we found the learning construct to be more important for men than women, corroborating findings from traditional education environments (Lietaert et al. 2015; Wang et al. 2011), but contradicting findings for MOOCs (Deng et al. 2020; Gameel and Wilkins 2019; Williams et al. 2018). Age, surprisingly, had no significant effect on the relationship between engagement, learning, and performance. As for country of origin, learning was more relevant for European than for North American and Oceanian/African students. While our findings confirm that country is relevant (Gameel and Wilkins 2019; Zhao et al. 2005), a direct comparison with published studies was not possible given the highly specific contexts of those studies. Finally, concerning course design, learning was found to be more relevant for students following instructor-paced courses. Interestingly, for this group, engagement was not significant, while both learning and, to a lesser extent, engagement were significant for self-paced courses. These findings partially corroborate evidence reported by Rai et al. (2017) and Watson et al. (2018).

Pathmox analysis allowed us to simultaneously analyse the effects of the four variables and so segment students into subgroups. The most critical source of heterogeneity was course design, with the sociodemographic variables having only a minor impact. For instructor-paced MOOCs, gender was the most important sociodemographic variable, whereas age was most important for self-paced MOOCs.

Note that MGA and pathmox analysis are not directly comparable methods, as they tackle heterogeneity differently. The difference regarding age is explained by the fact that pathmox identified this difference only after partitioning between the two course designs, while country of origin was evident in MGA but not in pathmox partitioning. This issue may be due to the limited depth of the tree, set to two to avoid generating a large number of terminal nodes that would be difficult to interpret. Our results indicate that the country discriminant power was less than that of the other sociodemographic variables. Concerning the student subgroups, differences in performance depend primarily on learning, most especially for male students taking instructor-paced courses, while engagement was important for older students (> 32 years) taking self-paced courses.

5.1 Theoretical and practical implications

From a theoretical perspective, our research makes several important contributions. As far as we are aware, it is the first study to consider the effect of heterogeneity on the causal link between learning, engagement, and performance. In particular, our research contributes to the literature on the effect of country of origin on MOOC student performance, as we found that learning is more important for European than for North American and Oceanian/African students. Finally, the fact that our analysis indicates course design to be a key aspect opens up discussion on how to better design e-learning policies to meet student expectations, given that student behaviours may reflect varying sources of heterogeneity. Indeed, to enhance performance in MOOCs, course designers should not only take into account the different characteristics of students, but should also offer adapted courses or complement online courses with specific strategies shaped by student profiles. Gender and country of origin also affect student performance, as engagement was found to be lower in male European and self-paced students. For these profiles, for instance, behavioural learning strategies regarding time management, test-taking, help-seeking, and homework management could be combined with systematic assessment of the students’ experience as a means of ensuring that they are investing enough time and energy in educationally purposeful activities.

5.2 Limitations

The main limitation of our study is that our findings are based on data for a single specific platform (the Federica WebLearning Centre of the University of Naples Federico II), so differences can be expected for research in other contexts. Second, other heterogeneity sources, e.g., different course topics, would undoubtedly provide more insights into the relationships between engagement, learning, and student performance.

6 Conclusion

In this article, by demonstrating that sociodemographic variables affect MOOC student performance and its drivers, learning and engagement, we highlight the importance of considering heterogeneity among MOOC students. Our results indicate that learning is the more important driver of student performance in MOOCs. Our findings also suggest that sociodemographic heterogeneity is a key factor to consider, as the relationships between the drivers (learning and engagement) and student performance vary across student segments. Course designers could therefore consider customizing MOOCs according to students’ profiles.

Notes

Students from Africa and Oceania were merged in a single group labelled “Others” due to the small number of cases. This classification was justified by similar average performance values for students from those two geographical regions.

References

Allione, G., Stein, R.M.: Mass attrition: an analysis of Drop Out from Principles of Microeconomics MOOC. J. Econ. Educ. 47(2), 174–186 (2016)

Azevedo, R.: Defining and measuring engagement and learning in science: conceptual, theoretical, methodological, and analytical issues. Educ. Psychol. 50(1), 84–94 (2015)

Bradley, W., Henseler, J.: Modeling Reflective Higher-order Constructs using Three Approaches with Pls Path Modeling: A Monte Carlo Comparison (2007) https://hdl.handle.net/2066/160877

Carannante, M., Davino, C., Vistocco, D.: Modelling students’ performance in moocs: a multivariate approach. Stud. High. Educ. (2020). https://doi.org/10.1080/03075079.2020.1723526

Cheah, J.H., Amaro, S., Roldán, J.L.: Multigroup analysis of more than two groups in PLS-SEM: a review, illustration, and recommendations. J. Bus. Res. (2023). https://doi.org/10.1016/j.jbusres.2022.113539

Conijn, R., Van den Beemt, A., Cuijpers, P.: Predicting student performance in a blended MOOC. J. Comput. Assist. Learn. 34(5), 1–14 (2018). https://doi.org/10.1111/jcal.12270

Dabbagh, N.: The online learner: characteristics and pedagogical implications. Contemp. Issues Technol. Teacher Educ. 7(3), 217–226 (2007)

de Barba, P.G., Kennedy, G.E., Ainley, M.D.: The role of students’ motivation and participation in predicting performance in a MOOC. J. Comput. Assist. Learn. 32, 218–231 (2016). https://doi.org/10.1111/jcal.12130

Deng, R., Benckendorff, P., Gannaway, D.: Linking learner factors, teaching context, and engagement patterns with MOOC learning outcomes. J. Comput. Assist. Learn. 36(5), 688–708 (2020). https://doi.org/10.1111/jcal.12437

Dweck, C.S.: Motivational processes affecting learning. Am. Psychol. 41(10), 1040–1048 (1986). https://doi.org/10.1037/0003-066X.41.10.1040

Endedijk, M.D., Brekelmans, M., Sleegers, P., et al.: Measuring students’ self-regulated learning in professional education: bridging the gap between event and aptitude measurements. Qual. Quant. 50, 2141–2164 (2016). https://doi.org/10.1007/s11135-015-0255-4

Esposito Vinzi, V., Chin, W.W., Henseler, J., Wang, H.: Handbook of Partial Least Squares: Concepts, Methods and Applications. Springer, Berlin Heidelberg (2010)

Fianu, E., Blewett, C., Ampong, G.O.A., Ofori, K.S.: Factors affecting MOOC usage by students in selected Ghanaian Universities. Sci. Educ. 8(2), 70 (2018)

Fenollar, P., Román, S., Cuestas, P.J.: University students’ academic performance: an integrative conceptual framework and empirical analysis. J. Educ. Psychol. 77(4), 873–891 (2007). https://doi.org/10.1348/000709907X189118

Gameel, B.G., Wilkins, K.G.: When it Comes to MOOCs, where you are from Makes a Difference. Comput. Educ. 136, 49–60 (2019). https://doi.org/10.1016/j.compedu.2019.02.014

Gefen, D., Rigdon, E.E., Straub, D.: Editor’s comments: an update and extension to SEM guidelines for administrative and social science research. MIS Quart. iii–xiv (2011). https://doi.org/10.2307/23044042

Ghasemy, M., Teeroovengadum, V., Becker, J.M., Ringle, C.M.: This fast car can move faster: a review of PLS-SEM application in higher education research. High. Educ. 80(6), 1121–1152 (2020). https://doi.org/10.1007/s10734-020-00534-1

Guàrdia, J., Freixa, M., Peró, M., et al.: Factors related to the academic performance of students in the statistics course in psychology. Qual. Quant. 40, 661–674 (2006). https://doi.org/10.1007/s11135-005-2072-7

Goopio, J., Cheung, C.: The MOOC dropout phenomenon and retention strategies. J. Teach. Travel Tour. (2020). https://doi.org/10.1080/15313220.2020.1809050

Green, S.B.: How many subjects does it take to do a regression analysis. Multivar. Behav. Res. 26(3), 499–510 (1991). https://doi.org/10.1207/s15327906mbr2603_7

Guo, P.J., Reinecke, K.: Demographic Differences in how Students Navigate through MOOCs. In: Proceedings of the first ACM conference on Learning@ scale conference (pp. 21–30) (2014). https://doi.org/10.1145/2556325.2566247

Hair Jr, J.F., Sarstedt, M., Ringle, C., Gudergan, S.P.: Advanced Issues in Partial Least Squares Structural Equation Modeling. Sage publications. (2017)

Hair Jr, J.F., Hult, G.T.M., Ringle, C., Sarstedt, M.: A Primer on Partial Least Squares Structural Equation Modeling (PLS-SEM). Sage publications. (2016)

Hadwin, A.F., Nesbit, J.C., Jamieson-Noel, D., Code, J., Winne, P.H.: Examining trace data to explore self-regulated learning. Metacogn. Learn. 2, 107–124 (2007)

Henseler, J., Ringle, C., Sarstedt, M.: Testing measurement invariance of composites using partial least squares. Int. Mark. Rev. 33(3), 405–431 (2016)

Henseler, J., Ringle, C., Sinkovics, R.R.: The use of partial least squares path modeling in international marketing. Adv. Int. Mark. 20, 273–319 (2009). https://doi.org/10.1108/S1474-7979(2009)0000020014

Henderikx, M.A., Kreijns, K., Kalz, M.: Refining success and dropout in massive open online courses based on the intention–behavior gap. Distance Educ. 38(3), 353–368 (2017). https://doi.org/10.1080/01587919.2017.1369006

Hintze, J.L., Nelson, R.D.: Violin plots: a box plot-density trace synergism. Am. Stat. 52(2), 181–184 (1998). https://doi.org/10.1080/00031305.1998.10480559

Hood, N., Littlejohn, A., Milligan, C.: Context counts: how learners’ contexts influence learning in a MOOC. Comput. Educ. 91, 83–91 (2015). https://doi.org/10.1016/j.iheduc.2015.12.003

Kahu, E.R.: Framing student engagement in higher education. Stud. High. Educ. 38(5), 758–773 (2013)

Kizilcec, R.F., Pérez-Sanagustín, M., Maldonado, J.J.: Self-regulated learning strategies predict learner behavior and goal attainment in massive open online courses. Comput. Educ. 104, 18–33 (2017). https://doi.org/10.1016/j.compedu.2016.10.001

Krasodomska, J., Godawska,: E-learning in accounting education: the influence of students’ characteristics on their engagement and performance. J. Account. Educ. (2020). https://doi.org/10.1080/09639284.2020.1867874

Lamberti, G., Banet Aluja, T., Sanchez, G.: The pathmox approach for pls path modeling: discovering which constructs differentiate segments. Appl. Stoch. Models. Bus. Ind. 33(6), 674–689 (2017). https://doi.org/10.1002/asmb.2270

Lamberti, G., Banet Aluja, T., Sanchez, G.: The pathmox approach for PLS path modeling segmentation. Appl. Stoch. Models. Bus. Ind. 32(4), 453–468 (2016). https://doi.org/10.1002/asmb.2168

Lamberti, G.: Hybrid multigroup partial least squares structural equation modelling: an application to bank employee satisfaction and loyalty. Qual. Quant. (2021). https://doi.org/10.1007/s11135-021-01096-9

Lamberti, G., Aluja Banet, T., Rialp Criado, J.: Work climate drivers and employee heterogeneity. Int. J. Hum. Resour. Manag. 33(3), 472–504 (2022). https://doi.org/10.1080/09585192.2020.1711798

Lan, M., Hew, K.F.: Examining learning engagement in MOOCs: a self-determination theoretical perspective using mixed method. Int. J. Educ. Technol. (2020). https://doi.org/10.1186/s41239-020-0179-5

Lebart, L., Morineau, A., Fenelon, J.P.: Traitement des Donnees Statistiques. Dunod, Paris (1979)

Lee, J.: Racial and ethnic achievement gap trends: Reversing the Progress Toward Equity? Educ. Res. 31(1), 3–12 (2002). https://doi.org/10.3102/0013189X031001003

Li, K.: MOOC learners’ demographics, self-regulated learning strategy, perceived learning and satisfaction: a structural equation modeling approach. Comput. Educ. 132, 16–30 (2019). https://doi.org/10.1016/j.compedu.2019.01.003

Lietaert, S., Roorda, D., Laevers, F., Verschueren, K., De Fraine, B.: The gender gap in student engagement: the role of teachers’ autonomy support, structure, and involvement. Br. J. Educ. Psychol. 85(4), 498–518 (2015). https://doi.org/10.1111/bjep.12095

Lim, D.H., Morris, M.L., Yoon, S.W.: Combined effect of instructional and learner variables on course outcomes within an online learning environment. Interact. Learn. Environ. 5(3), 255–269 (2006)

Lim, J.M.: Predicting successful completion using student delay indicators in undergraduate self-paced online courses. Distance Educ. 37(3), 317–332 (2016). https://doi.org/10.1080/01587919.2016.1233050

Lu, H.P., Lin, J.C.C., Hsiao, K.L., Cheng, L.T.: Information sharing behaviour on blogs in Taiwan: effects of interactivities and gender differences. Inf. 36(3), 401–416 (2010)

Maya-Jariego, I., Holgado, D., González-Tinoco, E., Castaño-Muñoz, J., Punie, Y.: Typology of motivation and learning intentions of users in MOOCs: the MOOCKNOWLEDGE study. Educ. Technol. Res. Dev. 68(1), 203–224 (2020)

Moore, R.L., Wang, C.: Influence of learner motivational dispositions on MOOC completion. Comput. Educ. 33(1), 121–134 (2021). https://doi.org/10.1007/s12528-020-09258-8

Ngah, A.H., Kamalrulzaman, N.I., Mohamad, M., et al.: Do science and social science differ? Multi-group analysis (MGA) of the willingness to continue online learning. Qual. Quant. (2022). https://doi.org/10.1007/s11135-022-01465-y

Ngah, A.H., Ramayah, T., Ali, M.H., Khan, M.I.: Halal transportation adoption among pharmaceuticals and comestics manufacturers. J. Islamic Mark. 11(6), 1619–1639 (2019). https://doi.org/10.1108/JIMA-10-2018-0193

Pellizzari, M., Billari, F.C.: The younger, the better? Age-related differences in academic performance at university. J. Popul. Econ. 25, 697–739 (2012). https://doi.org/10.1007/s00148-011-0379-3

Phan, T., McNeil, S.G., Robin, B.R.: Students’ patterns of engagement and course performance in a massive open online course. Comput. Educ. 95, 36–44 (2016)

Rai, L., Yue, Z., Yang, T., Shadiev, R., Sun, N.: General Impact of MOOC Assessment Methods on Learner Engagement and Performance. In 2017 10th International Conference on Ubi-media Computing and Workshops (Ubi-Media) (pp. 1–4). IEEE. (2017)

Ramesh, A., Goldwasser, D., Huang, B., Iii, H.D., Getoor, L.: Learning Latent Engagement Patterns of Students in Online Courses. In Proceedings of the Twenty-Eighth AAAI Conference on Artificial Intelligence, pp. 1272–1278. AAAI Press. (2014)

Ramsay, S., Barker, M., Jones, E.: Academic adjustment and learning processes: a comparison of international and local students in first-year university. High. Educ. Res. 18(1), 129–144 (1999). https://doi.org/10.1080/0729436990180110

Rizvi, S., Rienties, B., Khoja, S.A.: The role of demographics in online learning; a decision tree based approach. Comput. Educ. 137, 32–47 (2019). https://doi.org/10.1016/j.compedu.2019.04.001

Robertson, M., Line, M., Jones, S., Thomas, S.: International students, learning environments and perceptions: a case study using the Delphi technique. High. Educ. Res. 19(1), 89–102 (2000). https://doi.org/10.1080/07294360050020499

Reed, W.M., Oughton, J.M.: Computer experience and interval-based hypermedia navigation. J. Res. Technol. Educ. 30(1), 38–52 (1997). https://doi.org/10.1080/08886504.1997.10782212

Sarstedt, M., Hair, J.F., Ringle, C.M., Thiele, K.O., Gudergan, S.P.: Estimation issues with PLS and CBSEM: where the bias lies!. J. Bus. Res. 69(10), 3998–4010 (2016). https://doi.org/10.1016/j.jbusres.2016.06.00

Shao, Z., Chen, K.: Understanding individuals’ engagement and continuance intention of MOOCs: the effect of interactivity and the role of gender. Internet Res. (2020). https://doi.org/10.1108/INTR-10-2019-0416

Shafaei, A., Nejati, M., Quazi, A., Von der Heidt, T.: ‘When in Rome, do as the Romans do’ Do international students’ acculturation attitudes impact their ethical academic conduct? High. Educ. 71(5), 651–666 (2016). https://doi.org/10.1007/s10734-015-9928-0

Song, L., Singleton, E.S., Hill, J.R., Koh, M.H.: Improving online learning: student perceptions of useful and challenging characteristics. Internet High. Educ. 7(1), 59–70 (2014). https://doi.org/10.1016/j.iheduc.2003.11.003

Sullivan, P.: Gender differences and the online classroom: male and female college students evaluate their experiences. Commun. Coll. J. Res. Pract. 25, 805–818 (2001). https://doi.org/10.1080/106689201753235930

Tani, M., Gheith, M.H., Papaluca, O.: Drivers of student engagement in higher education: a behavioral reasoning theory perspective. High. Educ. 82(3), 499–518 (2021)

Taplin, M., Jegede, O.: Gender differences in factors influencing achievement of distance education students. Open Learn. 16(2), 133–154 (2001). https://doi.org/10.1080/02680510120050307

Tezer, M., Yildiz, E.P., Uzunboylu, H.: Online authentic learning self-efficacy: a scale development. Qual. Quant. 52(Suppl 1), 639–649 (2018). https://doi.org/10.1007/s11135-017-0641-1

Timms, C., Fishman, T., Godineau, A., Granger, J., Sibanda, T.: Psychological engagement of university students: learning communities and family relationships. J. Appl. Res. High. Educ. 10(3), 243–255 (2018). https://doi.org/10.1108/JARHE-09-2017-0107

Tuckman, B.W.: Relations of academic procrastination, rationalizations, and performance in a web course with deadlines. Psychol. Rep. 96(3), 1015–1021 (2005). https://doi.org/10.2466/pr0.96.3c.1015-1021

van Dinther, M., Dochy, F., Segers, M.: Factors affecting students’ self-efficacy in higher education. Educ. Res. Rev. 6(2), 95–108 (2011). https://doi.org/10.1016/j.edurev.2010.10.003

Veletsianos, G., Kimmons, R., Larsen, R., Rogers, J.: Temporal flexibility, gender, and online learning completion. Dist. Educ. 42(1), 22–36 (2021). https://doi.org/10.1080/01587919.2020.1869523

Vermunt, J.D.: Relations between student learning patterns and personal and contextual factors and academic performance. High. Educ. 49(3), 205–234 (2005)

Wang, M.T., Willett, J.B., Eccles, J.S.: The assessment of school engagement: examining dimensionality and measurement invariance by gender and race/ethnicity. J. Sch. Psychol. 49(4), 465–480 (2011). https://doi.org/10.1016/j.jsp.2011.04.001

Watson, W.R., Yu, J.H., Watson, S.L.: Perceived attitudinal learning in a self-paced versus fixed-schedule MOOC. Educ. Media Int. 55(2), 170–181 (2018). https://doi.org/10.1080/09523987.2018.1484044

Weiser, E.B.: Gender differences in internet use patterns and internet application preferences: a two-sample comparison. Cyberpsychology 3(2), 167–178 (2000)

Williams, K.M., Stafford, R.E., Corliss, S.B., Reilly, E.D.: Examining student characteristics, goals, and engagement in massive open online courses. Comput. Educ. 126, 433–442 (2018). https://doi.org/10.1016/j.compedu.2018.08.014

Wold, H.: Partial least squares. In S. Kotz and N. Johnson (Eds.). Enc. of Stat. Scien. John Wiley & Sons (1985)

Xiong, Y., Li, H., Kornhaber, M.L., Suen, H.K., Pursel, B., Goins, D.D.: Examining the relations among student motivation, engagement, and retention in a MOOC: a structural equation modeling approach. Glob. Educ. Rev. 2(3), 23–33 (2015)

Yukselturk, E., Bulut, S.: Gender differences in self-regulated online learning environment. J. Educ. Techno. Soc. 12(3), 12–22 (2009)

You, J.W.: Identifying significant indicators using LMS data to predict course achievement in online learning. Internet High Educ. 29, 23–30 (2016). https://doi.org/10.1016/j.iheduc.2015.11.003

Zhao, C.M., Kuh, G.D., Carini, R.M.: A comparison of international student and American student engagement in effective educational practices. J. Higher Educ. 76(2), 209–231 (2005). https://doi.org/10.1080/00221546.2005.11778911

Funding

This work was supported by grant #870691-INVENT funded by the EU H2020 program.

Author information

Contributions

Authors contributed equally.

Ethics declarations

Conflict of interest

The authors report there are no competing interests to declare.

Appendix

Indicators are reported as violin plots (Hintze and Nelson 1998), as these simultaneously depict the full distribution and number of data considered. The height of each violin indicates the range of the detected values, while the width indicates the position of the peak. In the separate panels, the colours reflect the six subdimensions used to measure learning, engagement, and performance. Thus, indicators related to frequency-based actions, time-based actions, and interaction are coloured green, light blue, and red, respectively, while regularity, non-procrastination, and performance are coloured dark blue, yellow, and violet, respectively. All indicators are highly skewed, with long tails on the right of the distributions (Fig. 4).

Tables 6, 7, and 8: measurement model reliability. Cross-loadings, bootstrap confidence intervals (CIs) calculated with 500 repetitions, composite reliability (CR), and average variance extracted (AVE) results for the first-order constructs are reported in Table 6. Results are acceptable, as, according to Esposito Vinzi et al. (2010), CR should be greater than 0.7, AVE should be greater than 0.5, and loadings should be higher than 0.7 and significantly higher on their own constructs than on the other constructs. Loadings, bootstrap CIs, CR, and AVE results for the second-order constructs are reported in Table 7. Loading values for both constructs are lower than 0.7 (explained by the particular nature of the indicators used in the analysis) but are significant, CR is higher than 0.7 for both constructs, and AVE is higher than 0.5 for engagement and, although lower than 0.5 for learning, is still close to the threshold. Given that CR is higher than 0.7, convergent validity can still be considered adequate (Fornell and Larcker, 1981). Results for the Fornell-Larcker matrix, reported in Table 8, indicate that discriminant validity is assured.
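As a worked illustration of the thresholds above, the following Python sketch computes AVE and CR from standardized loadings using the usual formulas: AVE is the mean of the squared loadings, and CR is the squared sum of the loadings divided by that quantity plus the summed error variances (1 minus each squared loading). The loading values, correlations, and function names are hypothetical and are not taken from Tables 6 to 8; the pairwise Fornell-Larcker check is likewise a simplified version of the full matrix comparison.

```python
import math


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """CR = (sum l)^2 / ((sum l)^2 + sum(1 - l^2)) for standardized loadings."""
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - l ** 2 for l in loadings)
    return squared_sum / (squared_sum + error_variance)


def fornell_larcker_holds(ave_a, ave_b, correlation):
    """True if sqrt(AVE) of both constructs exceeds their absolute correlation."""
    return min(math.sqrt(ave_a), math.sqrt(ave_b)) > abs(correlation)


# Hypothetical loadings: one construct clearly above the thresholds, and one
# with all loadings below 0.7 (AVE below 0.5, yet CR still above 0.7).
strong = [0.80, 0.75, 0.90]
weak = [0.65, 0.68, 0.66, 0.69]

for name, loadings in (("strong", strong), ("weak", weak)):
    print(name,
          round(average_variance_extracted(loadings), 3),
          round(composite_reliability(loadings), 3))
```

The second set of loadings mirrors the situation described for the learning construct: individual loadings and AVE fall short of their thresholds while CR remains above 0.7.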

Tables 9, 10, 11, and 12: MICOM testing of measurement model invariance, steps (2) and (3). Each table reports the observed score correlation (SC), the 5% confidence interval (CI), the observed score difference in means (SDM) between the compared groups, and the log-ratio of score variances (LSV) for the groups, with their corresponding 95% CIs (obtained by group permutation). Compositional invariance is verified when the SC value falls within the CI, and full measurement invariance is verified when the SDM and LSV values fall within the CI. Note that in the case of Table 12 (course design), the SC is lower than the threshold, although the observed deviation occurs in the third decimal. According to Lamberti et al. (2022), the correlation is not too low for MGA and so the compositional invariance of the constructs is globally accepted.
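The step (3) check can be sketched in Python as a permutation test on composite scores. The sketch below computes the observed SDM and LSV for two groups, then builds 95% permutation intervals by repeatedly shuffling group membership. It is a minimal illustration under assumptions: in an actual MICOM analysis the scores come from the PLS model estimated on the pooled data, whereas here they are arbitrary numbers, and the function name `micom_step3`, its parameters, and the percentile rule are hypothetical choices.

```python
import math
import random
import statistics


def micom_step3(scores_a, scores_b, n_perm=1000, seed=0):
    """Permutation check of equal means (SDM) and equal variances (LSV)."""
    rng = random.Random(seed)

    def sdm_and_lsv(a, b):
        sdm = statistics.fmean(a) - statistics.fmean(b)
        lsv = math.log(statistics.variance(a) / statistics.variance(b))
        return sdm, lsv

    observed_sdm, observed_lsv = sdm_and_lsv(scores_a, scores_b)

    pooled = list(scores_a) + list(scores_b)
    n_a = len(scores_a)
    perm_sdm, perm_lsv = [], []
    for _ in range(n_perm):
        rng.shuffle(pooled)  # random reassignment of group membership
        sdm, lsv = sdm_and_lsv(pooled[:n_a], pooled[n_a:])
        perm_sdm.append(sdm)
        perm_lsv.append(lsv)

    def summarize(observed, permuted):
        ordered = sorted(permuted)
        lower = ordered[int(0.025 * len(ordered))]
        upper = ordered[int(0.975 * len(ordered)) - 1]
        return {"observed": observed, "ci": (lower, upper),
                "within_ci": lower <= observed <= upper}

    return {"sdm": summarize(observed_sdm, perm_sdm),
            "lsv": summarize(observed_lsv, perm_lsv)}


# Hypothetical scores: same spread in both groups, but shifted means.
group_a = [float(i) for i in range(50)]
group_b = [x + 100.0 for x in group_a]
result = micom_step3(group_a, group_b, n_perm=500, seed=1)
print(result["sdm"]["within_ci"], result["lsv"]["within_ci"])
```

With these toy scores, equality of variances is compatible with the permutation interval while equality of means is not, which under the rule stated above would indicate that full measurement invariance does not hold.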

About this article

Cite this article

Cristina, D., Giuseppe, L. & Domenico, V. Assessing heterogeneity in MOOC student performance through composite-based path modelling. Qual Quant 58, 2453–2477 (2024). https://doi.org/10.1007/s11135-023-01760-2

DOI: https://doi.org/10.1007/s11135-023-01760-2