Abstract

It is rather easy to identify the leading universities in a country: there are various established methods and indicators of excellence. It is generally more challenging to find ‘the second best’ universities that have the potential to become leaders, ‘the firsts’. In Russia, such an attempt has been made. The ‘Pillar Universities’ program was carried out in 2016–2020, in two stages. This paper analyzes the initial stage of the project and its outcomes. We investigate how the program affected the output of the universities from a bibliometric point of view. The results, obtained by bibliometric methods, are encouraging. Publication output grew faster than Russia’s average. Multidisciplinarity and both domestic and international collaboration also increased. Universities that had no papers in the top journals started publishing their research there. The overall effect of the ‘pillar project’ is found to be positive. Bibliometrics is widely used for assessing higher education institutions and is free from local peculiarities, which allows the observations of this study to be used in a broader context.

1 Introduction

The task of identifying the leading universities in a country has been addressed frequently, and a number of methods and approaches exist to accomplish it. One may focus, for example, on highly cited papers (e.g. Tijssen et al. 2002; Pislyakov and Shukshina 2014; Abramo and D’Angelo 2015) or other indicators of excellence. Another widely used way to find the leaders is rankings (e.g. Olcay and Bulu 2017; Antonova and Sushchenko 2020). For example, different academic university rankings (QS, THE, CWUR, etc.) were used for the selection and evaluation of Chinese universities in Project 211, Project 985 and the Double First Class Universities Initiative (Borsi et al. 2022), as well as for decisions on additional funding of local higher education institutions in China (Tang 2022). Generally, these rankings combine bibliometric scores with other ‘reputational’, ‘prestige’ or ‘excellence’ metrics. Some of them use as a criterion the number of Nobel laureates among alumni/staff or the number of papers published in Nature and Science (Liu and Cheng 2005).

Despite rather abundant criticism of the rankings (e.g. Bekhradnia 2016), they are quite useful in finding ‘the best of the best’. However, it is more challenging to find ‘the second best’ universities, those that deserve additional governmental help and budget because, with such support, they may become the most prominent ones and enter the first league of higher education institutions. The problem is that the usual excellence metrics are often not applicable: there are no Nobel winners, no Nature/Science papers, not even top-cited papers. It is a more difficult task to find firsts among seconds than to find firsts among all (Footnote 1).

This paper analyzes the performance of the ‘second best’ universities that were chosen for the ‘pillar program’ in Russia. To set this program in context, a brief description of the system of Russian universities is given below.

The Russian government is trying to reform universities in order to invest public money more effectively in the country’s science-dependent technologies and innovations (Schiermeier 2010). As recent results show, this is a real problem for the country (Lancho-Barrantes et al. 2021). ‘Economics of knowledge’ is the goal of reforming and transforming higher education in Russia (e.g. Dadasheva et al. 2016). This requires classifying institutions, determining their stronger and weaker characteristics, and establishing some hierarchy.

During the period of study, higher education institutions in Russia were classified as follows (see Fig. 1):

- Moscow State University and Saint Petersburg State University are the main national universities of Russia. In 2009 they were officially granted the status of the leading classical universities in Russia.

- The ‘Federal Universities’ were established in 2006. There are now 10 such universities. They are deeply integrated with the regional governments and industries.

- There are 29 ‘National Research Universities’; the program started in 2009. Their mission is to combine education with scientific research. The idea is that this connection can help Russian higher education institutions become the leading organizations on the market.

More details on these three types of universities may be found in Skvortsov et al. (2013).

- The famous ‘5–100’ project, initiated by the government in 2013 (Rodionov et al. 2015; Ivanov et al. 2016; Block and Khvatova 2017; Guskov et al. 2018), included 21 universities by 2020. The aim is not only for at least five universities to enter the top 100 of world university rankings (hence the name ‘5–100’), but also to evoke the ‘Triple Helix’ (e.g. Leydesdorff 2000; Choi et al. 2015; for the Russian case, Leydesdorff et al. 2015) and stimulate interaction between education, science, and industry. Project ‘5–100’ is not strictly linked to the classification of universities and includes, among others, five Federal Universities and twelve National Research Universities.

Finally, in 2015 the Russian Ministry of Education and Science started to form a new type of higher education institution, ‘the Pillar Universities’ (Footnote 2). The aim of this project is to create strong educational and research centers specially oriented to the needs of the regions. These institutions were not conceived as the most prominent national leaders but, indeed, as ‘pillars’ of the whole higher education system which guarantee a high-quality and reliable educational context all around Russia. They are not leaders but those who follow right behind the leaders, the second best.

We should note that the task of finding ‘the second best’ universities is somewhat easier in the context of Russia. University science is concentrated in the two capitals, Moscow and St. Petersburg. But even when we exclude those cities, the provincial universities are still highly unequal in terms of scientific performance. For example, Markusova et al. (2005) found that 75% of all grants were received by only 30 “provincial” universities.

The ‘pillar’ status was granted in two stages. The main subject of the present paper is the 11 pillar universities of the first stage, which received this status in 2016 (Fig. 1).

Pillar universities are regional centers of education, so further in the text we denote each first stage participant by the city where it is situated:

- Volgograd = Volgograd State Technical University.
- Samara = Samara State Technical University.
- Tyumen = Tyumen Industrial University.
- Voronezh = Voronezh State Technical University.
- Kirov = Vyatka State University (Kirov).
- Rostov = Don State Technical University (Rostov-on-Don).
- Omsk = Omsk State Technical University.
- Krasnoyarsk = Siberian State Aerospace University (Krasnoyarsk).
- Ufa = Ufa State Petroleum Technological University.
- Orel = Orel State University.
- Kostroma = Kostroma State University.

The geography of these universities is visualized in Fig. 2. It should be noted that establishing a pillar university involved joining two different universities of the same city, generally by merging or incorporating one of them into the other. Their budgets were also merged. The components of these mergers are listed in the Appendix.

The additional public money each pillar university received at the start in 2016 ranged from 100 to 150 mln rubles, that is, $1.6–2.5 mln (Arzhanova et al. 2017). As an estimate, this is about 4.5–6.7% of the average budget of these universities, though the percentage may vary greatly across institutions. The largest share of this extra budget (33%) was allocated to R&D, accompanied by the introduction of a new ‘effective contract system’ with an evaluation framework for faculty and researchers. Since the additional funding is not very large, expert and information support from the government, as well as the ‘pillar’ status itself, play important roles.

The Pillar project is now completed, as the development of the educational system in Russia has entered a new phase: the Priority-2030 program has started (Ministry of Science and Higher Education of the Russian Federation 2022). In total, 121 universities have received additional funding. The results of the Pillar project were incorporated into this new multilevel system; 64% of the universities included in this program are regional ones.

Until recently, the pillar universities project was analyzed either from the self-reports of its participating institutions, or from monitoring documents of the Ministry of Education and Science, or from the local ‘Interfax National university ranking’ (Arzhanova et al. 2017; Surovitskaya 2017). This is the first study of the pillars’ performance based on the internationally recognized bibliometric databases of Clarivate (ex-Thomson Reuters IP). There also exists a special national citation database, the Russian Index of Science Citation, RISC (Moskaleva et al. 2018), which indexes thousands of Russian journals, but we were motivated by the desire to assess the ‘pillar project’ against the highest scholarly standards. To do this we chose the Web of Science database as our instrument. Moreover, we took only its ‘main’ components/indexes, as explained in the next section. The question is: how do ‘the second best’ publish in the best science journals?

The aim of this paper is to analyze “the pillars case in Russia” in such a way that its methods and results may be useful in a broader context. We demonstrate that a proper evaluation framework is important because it promotes competition among universities and effective resource allocation in any region. An “objective” bibliometric method can give us more robust estimates of the progress of scientific research in the universities under study. It is an external, bias-free alternative to self-reports or government monitoring documents.

Our research hypothesis is that organizational and financial support and, most of all, the accompanying evaluation procedures applied to the “second best” universities will lead to growth in publication output, better quality of publication venues, and a rise in international collaboration. This will be revealed in observable, objective, and measurable bibliometric indicators. Whether this hypothesis is true is an interesting and important research question, because the opposite position is that there is “no reason to support research and additionally finance not-the-best universities, this will not have any positive effect; only the best of the best should be supported”.

2 Data and methods

Bibliometric data were gathered in August 2018. The Web of Science (WoS) platform, with its Science Citation Index Expanded and Social Sciences Citation Index databases, was used as the data source. The main point of this research is to evaluate pillar universities against rigorous international standards of quality. We omitted the conference proceedings and book citation indexes because they contain different types of documents. The Arts & Humanities Citation Index (AHCI) was also not included, as humanities literature is hard to assess correctly with bibliometric indicators (Thelwall and Delgado 2015; Hammarfelt and Haddow 2018), although there are attempts “to measure research impact [of Arts and Humanities] in a much wider sense” (Donovan and Gulbrandsen 2018). Additionally, there are no impact factors (IF) for AHCI journals, while we need IF data in our study. The same is true for another WoS journal database, the Emerging Sources Citation Index, which is a sort of preliminary limbo for journals on their way to ‘the main’ WoS indexes. We take IFs from the Journal Citation Reports database, and some supplementary data from the Essential Science Indicators and InCites databases (all from Clarivate).

We calculate indicators for two years, 2013 and 2017. This makes it possible to track the progress of the pillar universities as they became ‘pillars’. Five years are enough to observe the evolution of the universities receiving their new status. Only the “Article” and “Review” document types were considered; all other documents were not taken into account. The total number of papers of pillar universities between 2013 and 2017 satisfying the selection criteria is 2246. We use the “whole counting” method (Gauffriau et al. 2007) in attributing a paper to a pillar university: a paper belongs to the university if at least one author indicated this university as at least one of their affiliations. This means that authors’ contributions are not fractionalized, and an article may belong to several institutions simultaneously. In the output indicators totaled over all universities, such papers are counted once, so there is no double counting. However, as we will see, there are only two such papers in the analysis: inter-pillar collaboration is almost non-existent.
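The whole counting rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the paper identifiers and affiliation sets are invented toy data, and the function names `whole_counts` and `total_output` are hypothetical, not part of the study's actual tooling.

```python
from typing import Dict, Set

# Hypothetical toy records: each paper maps to the set of institutions
# named in its author affiliations. IDs and data are invented.
papers: Dict[str, Set[str]] = {
    "P1": {"Samara", "Omsk"},                     # shared by two pillars
    "P2": {"Samara", "Moscow State University"},  # one pillar + non-pillar
    "P3": {"Kostroma"},
}
PILLARS: Set[str] = {"Samara", "Omsk", "Kostroma"}


def whole_counts(papers: Dict[str, Set[str]], units: Set[str]) -> Dict[str, int]:
    """Whole counting: every unit named on a paper gets full credit (1)."""
    counts = {unit: 0 for unit in units}
    for affiliations in papers.values():
        for unit in affiliations & units:
            counts[unit] += 1
    return counts


def total_output(papers: Dict[str, Set[str]], units: Set[str]) -> int:
    """Group total: a paper shared by several units is counted only once."""
    return sum(1 for affiliations in papers.values() if affiliations & units)


counts = whole_counts(papers, PILLARS)
total = total_output(papers, PILLARS)
print(counts, total)
```

In this toy data the per-university counts sum to more than the group total, because paper P1 is credited in full to both Samara and Omsk but enters the total once.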

As explained in the Introduction, the inauguration of the new type of universities in Russia, the ‘pillar universities’, was accompanied by the merging of several smaller higher education institutions. That is, in 2017 one pillar university was the result of integrating universities that were separate in 2013. To make bibliometric indicators comparable, we sum up the 2013 output of all components of the future pillar university, which was established later, in 2016.

The main difficulty in obtaining publication statistics was the absence in WoS of a single complete record for each university, its organization profile. For the majority of organizations in the study we had to find alternative spellings of their affiliations in the papers (and consequently in the database). There were up to ten different versions for one institution. The search string used, for example, for Don State Technical University (Rostov) was

OG = (Don State Technical University) OR OO = (BRANCH FGBOU VO DON STATE TECH UNIV OR DONSKOY STATE ENGN UNIV OR DON STATE TECH UNIV OR DON STATE TECH UNIV SHAKHTY OR DSTU OR ROSTOV STATE ACAD AGR MACHINERY IND)

We also contacted Clarivate and sent them our advanced search strings to help them improve the organization profile records in WoS.
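As an illustration of how such a search string can be assembled from a list of affiliation spellings, here is a minimal Python sketch. The helper `build_affiliation_query` is hypothetical; only the `OG` and `OO` field tags and the name variants themselves are taken from the example above, and the sketch does not query Web of Science.

```python
from typing import Iterable


def build_affiliation_query(preferred_name: str, variants: Iterable[str]) -> str:
    """Combine a preferred organization name (OG) with raw variants (OO)."""
    cleaned = [v.strip() for v in variants if v.strip()]
    query = f"OG = ({preferred_name})"
    if cleaned:
        query += " OR OO = (" + " OR ".join(cleaned) + ")"
    return query


# Name variants copied from the search string quoted in the text.
dstu_variants = [
    "BRANCH FGBOU VO DON STATE TECH UNIV",
    "DONSKOY STATE ENGN UNIV",
    "DON STATE TECH UNIV",
    "DON STATE TECH UNIV SHAKHTY",
    "DSTU",
    "ROSTOV STATE ACAD AGR MACHINERY IND",
]
dstu_query = build_affiliation_query("Don State Technical University", dstu_variants)
print(dstu_query)
```

Keeping the variants in a list makes it easier to review and extend them per institution before pasting the resulting string into the advanced search form.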

3 Results and discussion

3.1 Output

Figure 3 shows the progress of publication output of the organizations that became pillar universities in 2016. Recall that for the earlier period the data for all components of the future pillar universities are merged, so this is not extensive growth simply caused by the consolidation of organizations. Generally, over the whole time interval the observed growth is close to linear (in absolute numbers). This means that the universities did not start their progress only after becoming ‘pillars’: the institutions chosen for the first stage were already “ripe” and demonstrating success. Those who had been strengthening their research before becoming pillars were supported. A similar effect was observed by Turko et al. (2016) for the Russian ‘5–100’ universities right before the start of that program.

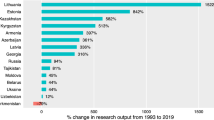

The total number of Russian WoS publications also grew during this period, but the progress of the pillar universities outpaces even that of Russia. In 2013 the papers of these 11 universities had a 1.15% share of total Russian output, while in 2017 this share reached 1.50%. On the other hand, this is far less than, for example, the share of the 21 universities of the ‘5–100’ project (33.6% in 2017).

The individual performance of each of the pillars is shown in Fig. 4. Not one of the 11 has demonstrated a decline in publications in the prominent international journals. The leader has changed: instead of Volgograd in 2013, five years later the maximum number of WoS papers was published by the pillar from Samara. What is probably even more important is that the “weak” institutions of 2013, with no more than 10 publications in WoS, show the strongest progress: Kostroma (6→23) and Tyumen (10→39). Both have left the last places they occupied in the 2013 ranking.

In terms of relative growth, if we consider only universities with more than 10 papers in 2013 (to exclude outliers), the most striking progress is shown by Rostov (273%), Omsk (235%), and Kirov (143%), which is equivalent to 39.0%, 35.3%, and 24.8% yearly growth respectively. The low base effect should be taken into account here, but not as an aspect which cancels the advantage. If a university doubles its publications from 10 to 20, this is evidence of serious structural changes in its policy, performance and targets. The most moderate increase in output is found in Volgograd: only 4 extra papers (5.8%).
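The conversion from total growth to yearly growth can be checked with a short Python sketch. It assumes compound growth over four yearly intervals (2013 to 2017); this assumption is inferred from the figures rather than stated in the text, and the function name is hypothetical.

```python
def annual_growth_rate(total_growth_pct: float, periods: int = 4) -> float:
    """Compound yearly growth (in %) equivalent to a total growth (in %).

    Assumes `periods` equal yearly intervals; 4 corresponds to 2013 -> 2017.
    """
    if periods <= 0:
        raise ValueError("periods must be positive")
    ratio = 1 + total_growth_pct / 100          # e.g. 273% growth -> x3.73
    return (ratio ** (1 / periods) - 1) * 100


for city, growth in [("Rostov", 273), ("Omsk", 235), ("Kirov", 143)]:
    print(f"{city}: {annual_growth_rate(growth):.1f}% per year")
```

Under this assumption the computed rates agree with the reported 39.0%, 35.3% and 24.8% to within about 0.1 percentage point; small deviations are expected because the total growth percentages are themselves rounded.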

To compare universities with each other, we should normalize output by the number of their faculty and staff. Is better total performance generally explained by the greater size of an institution? To answer this question, we juxtapose the gross number of publications of each university and its ratio to the number of faculty members (Fig. 5).

Figure 5 shows that higher publication output is generally due to higher productivity of researchers, not their number. There are only three slight deviations from the pattern ‘the higher the total number of papers, the more papers are produced per faculty member’: Samara, Rostov and Kostroma. We may suppose that better total performance of a pillar university is achieved by higher research activity, not by its size. This result is rather unexpected and indicates that better production may be achieved even in smaller institutions (Footnote 3). For example, positions 2–5 in the ranking by total number of papers are occupied by universities with fewer than 1000 faculty members, while ranks 6–8 are held by pillars with 1000+ faculty members. Another clue to this effect may be that publication output is linked to the number of researchers who wish (or are able) to publish their papers at the international level, not to the total number of faculty. This adds some evidence to our research hypothesis, demonstrating that proper government support may increase university performance in an intensive manner rather than extensively, by merely inflating the staff.

Four pillar universities were organized in regions where there already was a ‘grand’ university, either a Federal University or a member of the ‘5–100’ program. Table 1 compares publication statistics and their dynamics for ‘pillars’ and ‘grands’.

Predictably, the ‘grand’ universities play a leading role, producing the largest number of papers in their regions. But in some cases (Rostov) the progress in output is more pronounced for a smaller pillar university (with the reservations on low base effect discussed above).

3.2 Disciplines

To investigate the evolution of the disciplinary patterns of pillar universities’ publications, we use the 22 broad fields of science from the Essential Science Indicators database. This method allows us to attribute one unique subject to each paper. The results for 2013 and 2017 publications are shown in Fig. 6.

First of all, it is well known that the disciplinary structure of Russian scholarly output differs from that of world science. The focus is on physics, engineering, materials science and chemistry (Moed et al. 2018). This dominance of natural sciences over life sciences in Russia (see Zitt and Bassecoulard 2005, p. 428; Glänzel 2001, p. 77) can also be traced in grant applications (Markusova et al. 2004), although recently the situation has begun to change (Nature 2016).

In this sense the output of the pillar universities is in line with all-Russian trends (Fig. 6). The most important difference is the leading position of chemistry, while in Russia physics usually comes first. Approximately every third high-quality paper by a pillar university is published in a chemistry journal.

In 2013–2017, almost all fields of science showed an increase in the absolute number of papers (the surprising exception is physics), and the leaders remained the same. However, the disciplinary structure has changed significantly. The share of physics has decreased in favor of materials science, engineering, and mathematics. The proportion of Earth sciences has slightly declined too. But the most drastic growth is in the “others” category, primarily due to an increase in social sciences and computer science publications. There were only 2 papers in social sciences in the 2013 output of the 11 pillar universities. In 2017, there are 21 such articles. For computer science these numbers are 5 and 15, respectively.

Importantly, the disciplinary output of the pillars has become more diverse. First, there were 7 research fields without a single paper in 2013. In 2017 only 4 remained (still no WoS papers in neuroscience, immunology, molecular biology/genetics, and economics). Second, the Herfindahl-Hirschman index for the distribution of papers across disciplines has fallen from 2512 to 2015 (by 19.8%). This means that the concentration, or unevenness, of the disciplinary structure has decreased. Pillar universities have become more multidisciplinary, which may be considered a success. More papers are published in new, previously untouched research areas.
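For readers unfamiliar with the Herfindahl-Hirschman index, the following Python sketch shows the standard definition on the 0–10000 scale (the sum of squared percentage shares), which matches the magnitude of the values reported above. The function names are hypothetical and the per-field paper counts behind Fig. 6 are not reproduced here; only the relative decline between the two reported index values is checked.

```python
from typing import Sequence


def hhi(counts: Sequence[float]) -> float:
    """Herfindahl-Hirschman index: sum of squared percentage shares.

    Ranges from 10000/n (papers spread evenly over n fields) up to
    10000 (all papers in a single field).
    """
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must sum to a positive number")
    return sum((100 * c / total) ** 2 for c in counts)


def relative_decline_pct(before: float, after: float) -> float:
    """Relative decrease from `before` to `after`, in percent."""
    return (before - after) / before * 100


# Relative decline between the two index values reported in the text.
decline = relative_decline_pct(2512, 2015)
print(f"{decline:.1f}%")
```

A lower index means that papers are spread more evenly across fields, which is why the decline is read as growing multidisciplinarity.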

3.3 Publication venues

At the moment, it is rather early to count citations received by papers published in 2017. Moreover, we would face the problem of disciplinary normalization, since citation practices and averages differ between fields of science. Still, we may use the visibility of the journal where a paper was published as a proxy for the paper’s quality. This visibility may be estimated by the IF from the Journal Citation Reports database. Grančay et al. (2017) cite both critics of this approach (Seglen 1997; Callaway 2016) and arguments in favor of it (Yuret 2016). They finally conclude that “publishing in a journal with high IF is a certain mark of excellence.”

To assess the multidisciplinary output of universities correctly, we divide journals into four quartiles in each WoS subject category. This means that a journal’s rank is compared against its peers, titles from the same discipline. Such a quartile approach for WoS or Scopus rankings is widely adopted in the literature (Bordons and Barrigón 1992; Chinchilla-Rodríguez et al. 2015; Olmeda-Gómez and Moya-Anegón 2016; Ho et al. 2022). If a journal is assigned to different quartiles in different categories, we use the highest quartile.
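The "highest quartile" rule can be sketched in Python as follows. The quartile boundaries used here (equal quarters of the IF-ranked list within a category) are a simplifying assumption and may differ in detail from the way Journal Citation Reports assigns quartiles; the function names and the example ranks are hypothetical.

```python
from typing import Iterable, Tuple


def quartile(rank: int, n_journals: int) -> int:
    """Quartile (1 = top) of a journal ranked `rank` of `n_journals` by IF.

    Assumption: the ranked list is cut into four equal parts.
    """
    if not 1 <= rank <= n_journals:
        raise ValueError("rank must be between 1 and n_journals")
    # Integer ceiling of 4 * rank / n_journals, avoiding float rounding.
    return (4 * rank + n_journals - 1) // n_journals


def best_quartile(positions: Iterable[Tuple[int, int]]) -> int:
    """Highest (numerically smallest) quartile over all subject categories.

    `positions` holds one (rank, n_journals) pair per category.
    """
    return min(quartile(rank, n) for rank, n in positions)


# Hypothetical journal listed in two categories: 30th of 100 and 5th of 40.
print(best_quartile([(30, 100), (5, 40)]))
```

Here the journal falls in Q2 of the first category and Q1 of the second, so under the rule above it is treated as a Q1 journal.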

The distribution of pillar universities’ publications across journal quartiles is shown in Fig. 7. To ensure consistency, the same year of Journal Citation Reports is used (2017). The five-year progress is evident. The share of papers published in the lowest quartile has decreased. Notably, the share of publications in the top 25% of journals has grown 2.4-fold, from 5.3 to 12.5%. Only 7 out of 11 institutions succeeded in publishing their papers in the highest quality journals in 2013. In 2017, each university has at least two papers in Q1. Conversely, while in 2013 one university (Kostroma) published all its papers in Q4, in 2017 the maximum share of a pillar’s publications in the lowest quartile is 77% (Ufa).

Though the advancements are striking, there is still a long way to go. According to the InCites database, the share of papers published in Q1 journals for Russia as a whole is 26% (year 2017). For Moscow State University, the biggest Russian higher education institution, this value equals 35%; for the biggest social sciences university, the Higher School of Economics, it is 32%. Kostroma has become the champion among the pillars, with 22% of its papers in the top journals (2017).

3.4 Collaboration

As shown in Table 2, the intra-national (‘domestic’) collaboration of pillar universities strengthened over the five years 2013–2017. Almost all pillars started to write a higher percentage of papers in coauthorship with other Russian organizations. The choice of partners is most often based on geography: the most active partnership tends to occur between institutions from the same city. However, there are some exceptions, such as the pairs Samara-Moscow (850 km apart), Kirov-Kazan (300 km) and Kostroma-Ivanovo (90 km). We also found that the leading partners of pillar universities are either other universities or institutes of the Russian Academy of Sciences, in almost equal proportion.

Strikingly, inter-pillar collaboration is almost zero. There were no papers coauthored by two pillar universities in 2013, and only two such papers in 2017 (both in the field of chemistry, Samara-Omsk and Samara-Ufa). One possible explanation is the desire to gain from collaboration and, consequently, the search for stronger coauthors. If this is true, then pillar universities do not consider each other sufficiently beneficial and scientifically strong partners. Another hypothesis is related to mere geography, as said above. Pillars were intentionally scattered throughout Russia, so that even the distance between Orel and Voronezh, the closest pair of cities, is more than 250 km.

To investigate this peculiarity in more detail, we checked whether collaboration could be found in national journals not indexed in the Web of Science. We used the most comprehensive Russian national scientific database, eLIBRARY.RU (Moskaleva et al. 2018; Akoev et al. 2018). Some studies (Pislyakov et al. 2019) have demonstrated that Russian universities generally collaborate quite extensively in domestic journals. Results for our case are shown in Table 3. It is obvious that inter-pillar collaboration is very weak. Among the 55 potential collaborative pairs, only 10 have produced at least one joint paper. No pair has published more than 8 papers (Rostov-Volgograd). Three universities (Krasnoyarsk, Kostroma and Orel) do not have a single co-authored work with any pillar from another city. Evidently, this type of scientific collaboration is still waiting to be developed in Russia.
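The figure of 55 potential pairs is simply the number of unordered pairs among 11 universities, which a short Python sketch can confirm. The helper `active_pairs` and the `joint_papers` mapping are hypothetical; only the Rostov-Volgograd value is taken from the text, and the remaining entries of Table 3 are deliberately not reproduced.

```python
from itertools import combinations
from typing import Dict, FrozenSet, List

PILLARS = [
    "Volgograd", "Samara", "Tyumen", "Voronezh", "Kirov", "Rostov",
    "Omsk", "Krasnoyarsk", "Ufa", "Orel", "Kostroma",
]

# All unordered pairs of pillar universities: C(11, 2).
all_pairs: List[FrozenSet[str]] = [frozenset(p) for p in combinations(PILLARS, 2)]


def active_pairs(joint: Dict[FrozenSet[str], int]) -> List[FrozenSet[str]]:
    """Pairs that have produced at least one joint paper."""
    return [pair for pair, n in joint.items() if n > 0]


# Only the single value quoted in the text; the rest of Table 3 is omitted.
joint_papers = {frozenset({"Rostov", "Volgograd"}): 8}

print(len(all_pairs))                  # number of potential pairs
print(len(active_pairs(joint_papers)))
```

With the full co-authorship matrix in place of the one-entry mapping, the same helper would give the count of collaborating pairs and, by complement, the universities with no inter-pillar partners.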

Table 4 contains the share of papers created with foreign coauthors. Again, the majority of pillar universities have boosted their international collaboration (Volgograd and Voronezh are exceptions). While in 2013 the pillars of Ufa, Kirov, and Kostroma did not have a single paper in international partnership, five years later all 11 universities have experience of research together with foreign colleagues. Interestingly, Rostov is the only pillar which has more papers in international than in domestic collaboration in 2017. In five years it reduced the share of its national co-authorship and raised the international one. This means that the Rostov pillar university has refocused its collaborative efforts from domestic to international partnerships.

The total percentage of internationally coauthored papers by pillar institutions has increased from 14 to 23%. It is still lower than the all-Russian share of 39% for 2017. The leading partner countries of pillar universities are the same as for Russia as a whole: the USA and Germany.

Though Surovitskaya (2017), based on the monitoring of the Ministry of Education and Science, states that international activity tends to decrease in 6 out of 11 pillar universities, our analysis clearly shows that bibliometric indicators of partnership with foreign colleagues grow for nine institutions of the first pillar stage. The crucial role of such international collaboration for Russian institutions was demonstrated by Pislyakov and Shukshina (2014), who studied the most highly cited papers. As internationalization was one of the objectives of the ‘pillar project’ and its indicators were included in reporting, this further supports our research hypothesis. Funding of research in the regional centers, accompanied by a proper evaluation framework, may lead to the rise of the ‘second best’.

4 Conclusion

Russia has started to directly support not only ‘the best’ higher education institutions, but also those which follow the leaders and have the potential to become the best. In this paper we call them ‘the second best’ universities. As we have seen, the standard “world rankings” methods work poorly for the selection and assessment of this type of organization. This is partially confirmed by the producers of world university rankings themselves: they attribute strictly defined positions to the leaders and join the others into groups 201–250, 251–300, etc. We use not the “excellence approaches” but full-range bibliometrics, which includes the tails of distributions, not only the best output and outstanding publications (top-cited ones, for example).

For the 11 pillar universities of the first stage of the project, the publication output has increased more than 1.6 times compared to 2013, and these papers are being published in more visible journals (a 2.4-fold increase in the share of publications in Q1 titles). The diversity of publications has also increased: the pillars have become more multidisciplinary. Moreover, these institutions have become more and more involved in scientific networks at both the domestic and international levels. International collaboration is strengthening: in 2017 nearly every fourth paper by a pillar university is written with foreign coauthor(s).

What is even more important, no “sleeping universities” remain. In 2017, each of the 11 first stage universities published at least two papers in Q1 journals and at least two papers in international collaboration.

Since the amounts of additional money allocated to pillar universities were relatively small, we may suggest that the mere introduction of research assessment and attention to R&D performance may wake up even the most passive regional higher education institutions. This sensitivity to the evaluation framework was also recently reported, for example, for universities in Finland (Mathies et al. 2020) and Denmark (Rowlands and Wright 2021).

Some policy recommendations may be made which will be useful for the Priority-2030 program and the future development of the national education system. For example, collaboration between regional universities (and, consequently, between regions) needs to be encouraged; so far this activity is developed neither at the most prominent international level nor even in national Russian journals. As an obvious suggestion, regular joint conferences (scientific, not purely administrative meetings) for all leading regional universities could reinforce their cooperation.

The fulfilment of the Pillars program reduced to some extent the gap between ‘pillars’ and grand federal universities. This shows that our research hypothesis on the justification of ‘second best’ support holds true. The start of competition between institutions of these two leagues should benefit both sides. The Program in its present form is over, but these conclusions should be taken into account in planning any future development of the national higher education system, both in Russia and in other countries. And, hopefully, in the future some of the most successful “second best” Russian universities could enter the highest league of education, and those who are second will be first (cf. Mt. 19:30) (Footnote 4).

Notes

World university rankings also recognize this problem. As a rule, they give exact ranks to several dozen institutions, and then group them as non-discriminated sets (201–250, 251–300 etc.). The same “non-all-discriminative” approach is used for complex journals rankings (Subochev et al. 2018).

Sometimes also called in English “Flagship”, which is a reference to the US system of Flagship Universities (National Science Board 2012, p. 26).

Some previous studies reported that size of a unit, whether the department (Golden and Carstensen 1992) or research group (Seglen and Aksnes 2000) is poorly related, if at all, to per capita article production. In the case of Italian universities, the same conclusion for the majority of disciplines is drawn by Abramo et al. (2012), with their specific definition of ‘productivity’ (see also bibliography there). But all these results should rather be contrary to ours—in that case total production should be strongly correlated to the number of authors in a unit.

After the paper had been finished, the news came that Volgograd State Technical University had become the first of the Pillars-2016 universities to enter the Top-1000 of the THE World University Rankings.

References

Abramo, G., D’Angelo, C.A.: Ranking research institutions by the number of highly-cited articles per scientist. J. Informetrics. 9(4), 915–923 (2015). https://doi.org/10.1016/j.joi.2015.09.001

Abramo, G., Cicero, T., D’Angelo, C.A.: Revisiting size effects in higher education research productivity. High. Educ. 63(6), 701–717 (2012). https://doi.org/10.1007/s10734-011-9471-6

Akoev, M., Moskaleva, O., Pislyakov, V.: Confidence and RISC: How Russian papers indexed in the national citation database Russian Index of Science Citation (RISC) characterize universities and research institutes. In: Costas, R., Franssen, T., Yegros-Yegros, A. (eds.) STI 2018 Conference Proceedings. Proceedings of the 23rd International Conference on Science and Technology Indicators, pp. 1328–1338. Universiteit Leiden—CWTS, Leiden (2018)

Antonova, N.L., Sushchenko, A.D.: University academic reputation as a leadership factor in the global educational market. Vysshee obrazovanie v Rossii = Higher Educ. Russia. 29(6), 144–152 (2020). https://doi.org/10.31992/0869-3617-2020-6-144-152

Arzhanova, I.V., Vorov, A.B., Derman, D.O., Dyachkova, E.A., Klyagin, A.V.: Results of pillar universities development program implementation for 2016. Univ. Management: Pract. Anal. 21(4), 11–21 (2017)

Bekhradnia, B.: International University Rankings: for good or ill? Higher Education Policy Institute, Oxford (2016)

Block, M., Khvatova, T.: University transformation: Explaining policy-making and trends in higher education in Russia. J. Manage. Dev. 36(6), 761–779 (2017). https://doi.org/10.1108/JMD-01-2016-0020

Bordons, M., Barrigón, S.: Bibliometric analysis of publications of Spanish pharmacologists in the SCI (1984–89). Part II. Contribution to subfields other than “pharmacology & pharmacy” (ISI). Scientometrics. 25(3), 425–446 (1992). https://doi.org/10.1007/BF02016930

Borsi, M.T., Mendoza, O.M.V., Comim, F.: Measuring the provincial supply of higher education institutions in China. China Econ. Rev. 71, 101724 (2022). https://doi.org/10.1016/j.chieco.2021.101724

Callaway, E.: Publishing elite turns against impact factor. Nature. 535(7611), 210–211 (2016). https://doi.org/10.1038/nature.2016.20224

Chinchilla-Rodríguez, Z., Arencibia-Jorge, R., Moya-Anegón, F.D., Corera Álvarez, E.: Some patterns of Cuban scientific publication in Scopus: The current situation and challenges. Scientometrics. 103(3), 779–794 (2015). https://doi.org/10.1007/s11192-015-1568-8

Choi, S., Yang, J.S., Park, H.W.: Quantifying the Triple Helix relationship in scientific research: Statistical analyses on the dividing pattern between developed and developing countries. Qual. Quantity. 49(4), 1381–1396 (2015). https://doi.org/10.1007/s11135-014-0052-5

Dadasheva, V., Efimov, V., Lapteva, A.: The Future of Higher School in Russia: Missions and Functions of Universities. In: Chova, L.G., Martínez, A.L., Torres, I.C. (eds.) Proceedings of INTED2016, Tenth International Technology, Education and Development Conference. 7–9 March 2016, pp. 286–296. IATED Academy, Valencia (2016). https://doi.org/10.21125/inted.2016.1072

Donovan, C., Gulbrandsen, M.: Introduction: Measuring the impact of arts and humanities research in Europe. Res. Evaluation. 27(4), 285–286 (2018). https://doi.org/10.1093/reseval/rvy019

Gauffriau, M., Larsen, P.O., Maye, I., Roulin-Perriard, A., Von Ins, M.: Publication, cooperation and productivity measures in scientific research. Scientometrics. 73(2), 175–214 (2007). https://doi.org/10.1007/s11192-007-1800-2

Glänzel, W.: National characteristics in international scientific co-authorship relations. Scientometrics. 51(1), 69–115 (2001). https://doi.org/10.1023/A:1010512628145

Golden, J., Carstensen, F.V.: Academic research productivity, department size and organization: Further results, comment. Econ. Educ. Rev. 11(2), 153–160 (1992). https://doi.org/10.1016/0272-7757(92)90005-N

Grančay, M., Vveinhardt, J., Šumilo, Ē.: Publish or perish: How Central and Eastern European economists have dealt with the ever-increasing academic publishing requirements 2000–2015. Scientometrics. 111(3), 1813–1837 (2017). https://doi.org/10.1007/s11192-017-2332-z

Guskov, A.E., Kosyakov, D.V., Selivanova, I.V.: Boosting research productivity in top Russian universities: The circumstances of breakthrough. Scientometrics. 117(2), 1053–1080 (2018). https://doi.org/10.1007/s11192-018-2890-8

Hammarfelt, B., Haddow, G.: Conflicting measures and values: How humanities scholars in Australia and Sweden use and react to bibliometric indicators. J. Association Inform. Sci. Technol. 69(7), 924–935 (2018). https://doi.org/10.1002/asi.24043

Ho, M.-T., Le, N.-T.-B., Ho, M.-T., Vuong, Q.-H.: A bibliometric review on development economics research in Vietnam from 2008 to 2020. Qual. Quantity. 56, 2939–2969 (2022). https://doi.org/10.1007/s11135-021-01258-9

Ivanov, V.V., Markusova, V.A., Mindeli, L.E.: Government investments and the publishing activity of higher educational institutions: Bibliometric analysis. Her. Russ. Acad. Sci. 86(4), 314–321 (2016). https://doi.org/10.1134/S1019331616040031

Lancho-Barrantes, B.S., Ceballos-Cancino, H.G., Cantu-Ortiz, F.J.: Comparing the efficiency of countries to assimilate and apply research investment. Qual. Quantity. 55(4), 1347–1369 (2021). https://doi.org/10.1007/s11135-020-01063-w

Leydesdorff, L.: The triple helix: An evolutionary model of innovations. Res. Policy. 29(2), 243–255 (2000). https://doi.org/10.1016/S0048-7333(99)00063-3

Leydesdorff, L., Perevodchikov, E., Uvarov, A.: Measuring triple-helix synergy in the Russian innovation systems at regional, provincial, and national levels. J. Association Inform. Sci. Technol. 66(6), 1229–1238 (2015). https://doi.org/10.1002/asi.23258

Lisitskaya, T., Taranov, P., Ugnich, E., Pislyakov, V.: Pillar Universities in Russia: The Rise of “the Second Wave”. In: Costas, R., Franssen, T., Yegros-Yegros, A. (eds.) STI 2018 Conference Proceedings. Proceedings of the 23rd International Conference on Science and Technology Indicators, pp. 1–10. Universiteit Leiden—CWTS, Leiden (2018)

Liu, N.C., Cheng, Y.: The academic ranking of World Universities. High. Educ. Europe. 30(2), 127–136 (2005). https://doi.org/10.1080/03797720500260116

Markusova, V.A., Minin, V.A., Libkind, A.N., Arapov, M.V., Jansz, C.N.M., Zitt, M., Bassecoulard-Zitt, E.: Impact of socio-economic factors on higher education in Russia. Res. Evaluation. 14(1), 35–42 (2005)

Markusova, V.A., Minin, V.A., Libkind, A.N., Jansz, C.N.M., Zitt, M., Bassecoulard-Zitt, E.: Research in non-metropolitan universities as a new stage of science development in Russia. Scientometrics. 60(3), 365–383 (2004). https://doi.org/10.1023/B:SCIE.0000034380.12874.cc

Mathies, C., Kivistö, J., Birnbaum, M.: Following the money? Performance-based funding and the changing publication patterns of Finnish academics. High. Educ. 79, 21–37 (2020). https://doi.org/10.1007/s10734-019-00394-4

Ministry of Science and Higher Education of the Russian Federation: Priority 2030. (2022). https://priority2030.ru/en. Accessed 5 December 2022

Moed, H.F., Markusova, V., Akoev, M.: Trends in Russian research output indexed in Scopus and Web of Science. Scientometrics. 116(2), 1153–1180 (2018). https://doi.org/10.1007/s11192-018-2769-8

Moskaleva, O., Pislyakov, V., Sterligov, I., Akoev, M., Shabanova, S.: Russian Index of Science Citation: Overview and review. Scientometrics. 116(1), 449–462 (2018). https://doi.org/10.1007/s11192-018-2758-y

National Science Board: Diminishing Funding and Rising Expectations: Trends and Challenges for Public Research Universities, A Companion to Science and Engineering Indicators 2012. National Science Foundation, Arlington, VA (2012). https://www.nsf.gov/nsb/publications/2012/nsb1245.pdf. Accessed 18 May 2022

Nature: All countries, great and small. Nature. 535, S56–S61 (2016). https://doi.org/10.1038/535S56a

Olmeda-Gómez, C., Moya-Anegón, F.D.: Publishing Trends in Library and Information Sciences across European Countries and Institutions. J. Acad. Librariansh. 42(1), 27–37 (2016). https://doi.org/10.1016/j.acalib.2015.10.005

Olcay, G.A., Bulu, M.: Is measuring the knowledge creation of universities possible?: A review of university rankings. Technol. Forecast. Soc. Chang. 123, 153–160 (2017). https://doi.org/10.1016/j.techfore.2016.03.029

Pislyakov, V., Moskaleva, O., Akoev, M.: Cui Prodest? Reciprocity of collaboration measured by Russian Index of Science Citation. In: Proceedings of the 17th International Conference on Scientometrics and Informetrics ISSI2019. Vol. 1, pp. 185–195. Edizioni Efesto, Italy (2019)

Pislyakov, V., Shukshina, E.: Measuring excellence in Russia: Highly cited papers, leading institutions, patterns of national and international collaboration. J. Association Inform. Sci. Technol. 65(11), 2321–2330 (2014). https://doi.org/10.1002/asi.23093

Rodionov, D., Yaluner, E., Kushneva, O.: Drag race 5-100-2020 national program. Eur. J. Sci. Theol. 11(4), 199–212 (2015)

Rowlands, J., Wright, S.: Hunting for points: The effects of research assessment on research practice. Stud. High. Educ. 46(9), 1801–1815 (2021). https://doi.org/10.1080/03075079.2019.1706077

Schiermeier, Q.: Russia to boost university science. Nature. 464(7293), 1257 (2010). https://doi.org/10.1038/4641257a

Seglen, P.O.: Why the impact factor of journals should not be used for evaluating research. Br. Med. J. 314(7079), 498–502 (1997). https://doi.org/10.1136/bmj.314.7079.497

Seglen, P.O., Aksnes, D.W.: Scientific productivity and group size: A bibliometric analysis of Norwegian microbiological research. Scientometrics. 49(1), 125–143 (2000). https://doi.org/10.1023/A:1005665309719

Skvortsov, N., Moskaleva, O., Dmitrieva, J.: World-class Universities. Experience and Practices of Russian Universities. In: Wang, Q., Cheng, Y., Cai Liu, N. (eds.) Building World-Class Universities: Different Approaches to a Shared Goal, pp. 53–69. Sense Publishers, Rotterdam (2013). https://doi.org/10.1007/978-94-6209-034-7

Subochev, A., Aleskerov, F., Pislyakov, V.: Ranking journals using social choice theory methods: A novel approach in bibliometrics. J. Informetrics. 12(2), 416–429 (2018). https://doi.org/10.1016/j.joi.2018.03.001

Surovitskaya, G.: Comparative competitiveness of Russian flagship universities. Univ. Management: Pract. Anal. 21(4), 63–75 (2017). https://doi.org/10.15826/umpa.2017.04.050

Tang, Y.: Government spending on local higher education institutions (LHEIs) in China: Analysing the determinants of general appropriations and their contributions. Stud. High. Educ. 47(2), 423–436 (2022). https://doi.org/10.1080/03075079.2020.1750586

Thelwall, M., Delgado, M.M.: Arts and humanities research evaluation: No metrics please, just data. J. Doc. 71(4), 817–833 (2015). https://doi.org/10.1108/JD-02-2015-0028

Tijssen, R.J.W., Visser, M.S., van Leeuwen, T.N.: Benchmarking international scientific excellence: Are highly cited research papers an appropriate frame of reference? Scientometrics. 54(3), 381–397 (2002). https://doi.org/10.1023/A:1016082432660

Turko, T., Bakhturin, G., Bagan, V., Poloskov, S., Gudym, D.: Influence of the program “5–top 100” on the publication activity of Russian universities. Scientometrics. 109(2), 769–782 (2016). https://doi.org/10.1007/s11192-016-2060-9

Yuret, T.: Is it easier to publish in journals that have low impact factors? Appl. Econ. Lett. 23(11), 801–803 (2016). https://doi.org/10.1080/13504851.2015.1109034

Zitt, M., Bassecoulard, E.: Internationalisation in science in the prism of bibliometric indicators. In: Moed, H.F., Glänzel, W., Schmoch, U. (eds.) Handbook of Quantitative Science and Technology Research, pp. 407–436. Springer, Dordrecht (2005). https://doi.org/10.1007/1-4020-2755-9_19

Acknowledgements

The present study is a substantially extended version of a paper presented at the 23rd International Conference on Science and Technology Indicators, Leiden (The Netherlands), 12–14 September 2018 (Lisitskaya et al. 2018). We thank two anonymous reviewers for their valuable comments.

Funding

The authors declare that no funds, grants, or other support were received during the preparation of this manuscript.

Author information

Contributions

Tatiana Lisitskaya: conceptualization, methodology, data collection and analysis, writing — reviewing and editing.

Pavel Taranov: conceptualization, methodology, data collection and analysis, writing — reviewing and editing.

Ekaterina Ugnich: conceptualization, methodology, data collection and analysis, writing — reviewing and editing.

Vladimir Pislyakov: conceptualization, methodology, data analysis, writing — original draft, reviewing and editing.

All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors have no relevant financial or non-financial interests to disclose.

Appendix. Institutions merged into pillar universities of the first stage

| City | Pillar university | Merged universities |
|---|---|---|
| Kirov | Vyatka State University | Vyatka State University; Vyatka State University of Humanities |
| Kostroma | Kostroma State University | Kostroma State Technological University; Kostroma State University |
| Krasnoyarsk | Reshetnev Siberian State University of Science and Technology | Reshetnev Siberian State Aerospace University; Siberian State Technological University |
| Omsk | Omsk State Technical University | Omsk State Technical University; Omsk State University of Design and Technology |
| Orel | Orel State University | Orel State University; Prioksky State University |
| Rostov | Don State Technical University | Don State Technical University; Rostov State University of Civil Engineering |
| Samara | Samara State Technical University | Samara State Technical University; Samara State University of Architecture and Civil Engineering |
| Tyumen | Tyumen Industrial University | Tyumen State Oil and Gas University; Tyumen State University of Architecture, Building and Civil Engineering |
| Ufa | Ufa State Petroleum Technological University | Ufa State Petroleum Technological University; Ufa State University of Economics and Service |
| Volgograd | Volgograd State Technical University | Volgograd State Technical University; Volgograd State University of Architecture and Civil Engineering |
| Voronezh | Voronezh State Technical University | Voronezh State Technical University; Voronezh State University of Architecture and Civil Engineering |

About this article

Cite this article

Lisitskaya, T., Taranov, P., Ugnich, E. et al. Pillar Universities in Russia: Bibliometrics of ‘the second best’. Qual Quant 58, 365–383 (2024). https://doi.org/10.1007/s11135-023-01645-4

DOI: https://doi.org/10.1007/s11135-023-01645-4