Abstract

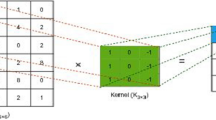

Moving object detection (MOD) methods must handle challenging video conditions, including bootstrapping, illumination changes, bad weather, pan-tilt-zoom (PTZ) cameras, intermittent object motion, color camouflage, camera jitter, low frame rates, noise, shadows, thermal video, and night video. Some of the most promising MOD methods are based on convolutional neural networks (CNNs), which are among the best-ranked algorithms on the CDnet14 dataset. This paper therefore presents a novel CNN for detecting moving objects, called Two-Frame CNN (2FraCNN). Unlike the best-ranked algorithms on CDnet14, 2FraCNN is not a transfer-learning model, and it uses temporal information to estimate the motion of moving objects. Its architecture is inspired by how optical flow helps to estimate motion, and its core is the FlowNet architecture. 2FraCNN processes temporal information by concatenating two consecutive frames and using an encoder-decoder architecture. It also includes a novel training scheme to deal with the imbalance between the background and foreground pixel classes. 2FraCNN was evaluated under three schemes: the CDnet14 benchmark, for a state-of-the-art comparison; human performance metric intervals, for a realistic evaluation; and the performance instrument PVADN, for practical purposes, which considers the quantitative criteria of performance, speed, auto-adaptability, documentation, and novelty. The findings show that 2FraCNN performs comparably to the top ten algorithms on CDnet14 and is among the best twelve in the PVADN evaluation. 2FraCNN also demonstrated that it can handle many video challenge categories with human-like performance, such as dynamic backgrounds, jittering, shadows, bad weather, and thermal cameras, among others. Based on these findings, it can be concluded that 2FraCNN is a robust algorithm that handles diverse video conditions with performance competitive with state-of-the-art algorithms.
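The two ideas named in the abstract, a two-frame input and a loss that accounts for background/foreground imbalance, can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function names `stack_frame_pair` and `class_weighted_bce` are hypothetical, and the inverse-frequency weighting is a generic stand-in, since the abstract does not describe the paper's actual training scheme.

```python
import numpy as np


def stack_frame_pair(prev_frame, curr_frame):
    """Concatenate two consecutive H x W x C frames along the channel axis.

    Two RGB frames give an H x W x 6 array, the kind of input an
    encoder-decoder network could consume (assumed layout: channels last).
    """
    if prev_frame.shape != curr_frame.shape:
        raise ValueError("frames must have the same shape")
    return np.concatenate([prev_frame, curr_frame], axis=-1)


def class_weighted_bce(prob_fg, mask, eps=1e-7):
    """Binary cross-entropy with inverse-frequency class weights.

    Generic illustration of imbalance handling, NOT the paper's scheme:
    the rarer class (usually foreground) receives the larger weight.
    """
    prob_fg = np.clip(prob_fg, eps, 1.0 - eps)
    # Fraction of foreground pixels, clamped so neither weight becomes zero.
    fg_frac = float(np.clip(mask.mean(), eps, 1.0 - eps))
    w_fg = 1.0 - fg_frac  # large when foreground is rare
    w_bg = fg_frac        # small when background dominates
    loss = -(w_fg * mask * np.log(prob_fg)
             + w_bg * (1.0 - mask) * np.log(1.0 - prob_fg))
    return float(loss.mean())


# Usage with synthetic data (hypothetical 4 x 6 RGB frames).
rng = np.random.default_rng(0)
pair = stack_frame_pair(rng.random((4, 6, 3)), rng.random((4, 6, 3)))
print(pair.shape)  # (4, 6, 6)
```

With this weighting, a prediction that labels every pixel as background is penalized heavily for the few foreground pixels it misses, which is the failure mode that class imbalance otherwise encourages.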

Acknowledgements

The authors wish to thank Tecnologico Nacional de Mexico/I.T. Chihuahua for the support provided to carry out this work under grants 5162.19-P and 7598.20-P.

Ethics declarations

Conflict of interest

The authors declare no conflict of interest.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Chacon-Murguia, M.I., Guzman-Pando, A. Moving Object Detection in Video Sequences Based on a Two-Frame Temporal Information CNN. Neural Process Lett 55, 5425–5449 (2023). https://doi.org/10.1007/s11063-022-11092-1

DOI: https://doi.org/10.1007/s11063-022-11092-1