Abstract

In this paper, we consider a proximal linearized alternating direction method of multipliers, or PL-ADMM, for solving linearly constrained nonconvex and possibly nonsmooth optimization problems. The algorithm is generalized by using variable metric proximal terms in the primal updates and an over-relaxation step in the multiplier update. Extended results based on the augmented Lagrangian including subgradient band, limiting continuity, descent and monotonicity properties are established. We prove that the PL-ADMM sequence is bounded. Under the powerful Kurdyka-Łojasiewicz inequality we show that the PL-ADMM sequence has a finite length thus converges, and we drive its convergence rates.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

The alternating direction method of multiplier (ADMM), proposed by Gabay and Mercier [30] and Glowinski and Marroco [31], has been shown to be an effective approach for solving linearly constrained separable optimization problems. It is closely related to the Douglas-Rachford [25] and Peachman-Rachford [58] operator splitting methods that date back to the 1950s. Due to of its success in solving structured convex optimization, ADMM has been widely used in various applications such as machine learning [14, 24, 55], compressive sensing [19, 68, 69], image processing [65, 66, 72,73,74], and reconstruction [18, 36, 71], sparse and low-rank optimization [49, 56].

Theoretical analysis of ADMM and its variants including linearized ADMM and proximal ADMM has been established extensively in the context of convex optimization. For example, Boley [8] studied the local linear convergence for solving quadratic and linear programs. Deng and Yin [23] studied the convergence rate of a general ADMM method in which a proximal term was added to each subproblem. Eckstein and Bertsekas [26] proved the linear convergence for solving linear programs, which depends on a bound on the largest iterate in the course of the ADMM algorithm. Hager, Yashtini, and Zhang [37] established the ergodic convergence rate of a proximal linearized ADMM method [18], in which the proximal parameter updates through a backtracking line search strategy.

Lions and Mercier [53] showed that the Douglas–Rachford operator splitting method converges linearly under the assumption that some involved monotone operators are both coercive and Lipschitz. When both functions in the objective are convex smooth and have Lipschitz continuous gradients, the work [32], establishes the O(1/k) and \(O(1/k^2)\) convergence rates based on the iteration number k for a Jacobi and its accelerated variant of ADMM respectively. Similar results also established in [33] for a Gauss-Seidel variant of ADMM and its accelerated variant, which requires only one of the two functions to be smooth with Lipschitz continuous gradients. Based on the variational inequality, He and Yuan established the O(1/k) convergence rate of Douglas-Rachford variant of ADMM in the ergodic [43] and non-ergodic sense [44]. Goldstein and Donoghue [34] proved the O(1/k) rate for the ADMM and \(O(1/k^2)\) rate for its accelerated variant based on the dual objective function under strong convexity assumptions. Davis and Yin [21] showed that the linear and sublinear rates of ADMM can be obtained as an extension of Douglas-Rachford splitting method in the sense of fixed-point residual and objective error. The R-linear convergence rate of ADMM under certain error bound condition proved in [45]. In [75], the authors consider a proximal ADMM with Fortin and Glowinski’s larger step size, and by using variational analysis they prove that the local linear convergence can be guaranteed without the strong convexity of objective functions together with the full rank assumption of the coefficient matrices, or the full polyhedricity assumption of their subdifferential.

The convergence of multi-block ADMM for minimizing the sum of more than two functions requires strong convexity assumptions on some or all functions in the objective [15, 17, 23, 38, 51, 52]. Without strong convexity assumptions, Chen et al. [16] showed that the multi-block ADMM is not necessarily convergent unless there exists at least two orthogonal coefficient matrices. Deng et al. [22] showed that the multi-block Jacobi ADMM converges with the o(1/k) rate when all coefficient matrices are mutually near-orthogonal and have full column rank or proximal terms are added to the subproblems. In [40, 41] the authors combined the ADMM with either forward or backward substitution procedure and they proved the convergence results from contraction perspectives. He, Hou, and Yuan [42] showed that the local linear convergence rate is provable if certain standard error bound condition is assumed.

He et al. [39] combined the Jacobi ADMM with a relaxation step and proved the convergence and O(1/k) rate in both ergodic and non-ergodic senses. In [20], the authors propose to update the Lagrange multiplier sequentially once each decomposed subproblem over the primal variables is solved. Their scheme updates both the primal and dual variables in Gauss-Seidel fashion. Recently, a generalized symmetric ADMM was proposed in [7] which updates the Lagrange multiplier twice with suitable stepsizes to solve the multi-block separable convex programming; they proved a worst-case O(1/k) ergodic convergence rate. In [6], the latter work [7] had been extended to stochastic ADMM-type version, and the so-called over-relaxation stepsize \((0, (1 + \sqrt{5})/2]\) was used in an accelerated stochastic ADMM [5].

The ADMM algorithm generally fails to solve nonconvex possibly nonsmooth optimization problems. However, its great performance on some practical applications such as phase retrieval [64], distributed clustering [28], sparse zero variance discriminant analysis [1], matrix separation [60], imaging [72,73,74], sparse feedback control [50] has encouraged researchers to study underlying conditions for which nonconvex nonsmooth ADMM converges. Wang and Yin [63] established the convergence of nonconvex nonsmooth multi-block ADMM for both separable and non-separable objective functions. For the separable objective function they assumed that majority of functions in the objective are prox-regular. When the functions are either smooth and nonconvex or convex and nonsmooth, Hong et al. [46] proved the convergence of the ADMM, provided that the penalty parameter in the augmented Lagrangian is chosen to be sufficiently large.

Wang et al. [62] analyzed the so-called Bregman ADMM under the assumption that the objective function is subanalytic, and included the standard ADMM as a special case. In [54] the authors studied the convergence of a full linearized ADMM, given that one of the coefficient matrices is full column rank and the penalty parameter in the augmented Lagrangian is sufficiently large. The work [47] considered a variant of multi-block proximal ADMM, where the proximal ADMM updates can be implemented for all the block variables except for the last block, for which either a gradient step or a majorization–minimization step is implemented. The authors analyzed its iteration complexity. In [35] Guo et al. considered a two-block ADMM to minimize a sum of two nonconvex functions with linear constraints and they proved the convergence by assuming that the generated sequence is bounded. Under some assumptions on the coefficient matrices, Yang, Pong, and Chen [67] studied a three-block ADMM to solve a special class of nonconvex and nonsmooth problems with applications to background/foreground extraction. Li and Pong [48] proved the global convergence of two-block ADMM under assumption that one of the functions in the objective is twice differentiable and has bounded Hessian. Under Kurdyka-Łojasiewicz property, Bot and Nguyen [13] and Yashtini [70] established the convergence and convergence rates of proximal variants of ADMM. The iteration complexity of two classes of fully and partially linearized multi-block ADMM with the choice of some relaxation parameter in the multiplier update established in [57]. In [61] the authors showed that ADMM is closely related to Douglas–Rachford splitting and Peaceman–Rachford splitting, and established a unifying global convergence result under the only assumption of Lipschitz differentiability of one function.

In this paper, we study the global convergence analysis of a variant of ADMM algorithm to solve the linearly constrained nonconvex and nonsmooth minimization problem

where \(x\in {\mathbb {R}}^n\) and \(y\in {\mathbb {R}}^m\) are unknown variables, \(A\in {\mathbb {R}}^{n\times p}\), \(B\in {\mathbb {R}}^{m\times p}\), \(c\in {\mathbb {R}}^p\). The function \(f:{\mathbb {R}}^n\rightarrow \bar{{\mathbb {R}}}:={\mathbb {R}} \cup \{+\infty \}\) is proper and lower-semicontinuous while \(g:{\mathbb {R}}^n\rightarrow \bar{{\mathbb {R}}}\) and \(h: {\mathbb {R}}^m\rightarrow \bar{{\mathbb {R}}}\) are proper smooth functions. We do not assume any convexity assumption on f, g, and h. The augmented Lagrangian function \({\mathcal {L}}^{\alpha } (x,y,z)\) associated with the problem (1) is defined by

where \(\alpha >0\) and \(z\in {\mathbb {R}}^p\) is the Lagrange multiplier associated with the linear constraint \(Ax+By+c=0\). Let \(\{Q_1^k\}_{k\ge 0}\subseteq {\mathbb {R}}^{n\times n}\) and \(\{Q_2^k\}_{k\ge 0}\subseteq {\mathbb {R}}^{m\times m}\) be two sequences of symmetric and positive semidefinite matrices. Given the initial vector \((x^0,y^0,z^0)\) and for \(k=1,2, \dots \) until some stopping criterion satisfied the variable metric proximal ADMM generates the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) recursively as follows

where \(\Vert v\Vert ^2_{Q}=\langle v, Q v\rangle \) for any \(v\in {\mathbb {R}}^d\) and \(Q\in {\mathbb {R}}^{d\times d}\), \(\langle \cdot , \cdot \rangle \) denotes the Euclidean inner product, and \(\Vert \cdot \Vert =\sqrt{\langle \cdot ,\cdot \rangle }\) denotes the \(\ell _2\) norm. The algorithm (3) can be equivalently written as follows

For efficiency, we take advantage of the smooth structure of \(g(\cdot )\), \(\frac{\alpha }{2}\Vert A \cdot +By^k+c\Vert ^2\), and \(h(\cdot )\), and we replace them by their proper linearization to obtain the variable metric Proximal Linearized ADMM (PL-ADMM):

where \(\beta \in (0,2)\) is an over-relaxation parameter. In most applications, \(B^\mathsf{T}B\) is a diagonal, nearly diagonal, or nearly orthogonal matrix thus the y-subproblem with proper choice of \(Q_2^k\) can be solved efficiently, thus the linearization \(\frac{\alpha }{2}\Vert By+Ax^{k+1}+c\Vert ^2\) may be unnecessary. If \(B^\mathsf{T}B \) is nearly a diagonal matrix (or nearly an orthogonal matrix), one can replace \(B^\mathsf{T}B\) by a certain symmetric diagonal (orthogonal) matrix \(D\approx B^\mathsf{T}B\). This replacement gives rise to \(\frac{\alpha }{2} y^* B^\mathsf{T}B y=\frac{\alpha }{2} y^* D y^*\), and then one can choose \(Q_2^k= \alpha (D-B^\mathsf{T}B)\). In general wise choices of proximal matrices \(\{Q_i^k\}_{k\ge 0}\) for \(i=1,2\) can lead us to much easier computations for \(x^{k+1}\) and \(y^{k+1}\), consequently might yield a more efficient scheme. For instance, by setting \(Q_1^k=\frac{1}{t_k} I_n\) where \(\{t_k\}_{k\ge 0}\) is a positive sequence and \(I_n\) is an \(n\times n\) identity matrix, the x subproblem in (4) becomes the following prox-linear problem

where \(p^k:=\nabla g(x^k)+\alpha A^\mathsf{T}(Ax^k+By^k+c+\alpha ^{-1}z^k)\). Prox-linear subproblems can be easier to compute specially when f is a separable function.

The PL-ADMM (4) is related but different from [13, 54]. Work [54] considers the fixed proximal terms \(\frac{L_x}{2}\Vert x-x^k\Vert ^2\) and \(\frac{L_y}{2}\Vert y-y^k\Vert ^2\), where \(L_x>0\) and \(L_y>0\) are positive constants, and \(\beta =1\). Algorithm 2 in [13] does not exploit lineariziation of \(\frac{\alpha }{2} \Vert A\cdot +By^k+c\Vert ^2\) in the x subproblem, and solves (1) with \(g(x)=0\), \(A=-I_{n\times n}\) and \(c=0\).

The main contributions of this paper is to analyze the theoretical properties of the PL-ADMM (4). In Theorem 1 we prove that the sequence generated by the PL-ADMM is bounded, and in Theorem 2 we show that in fact it is Cauchy, thus converges. In Theorem 3 we establish the rate of convergence for the error of regularized augmented Lagrangian, which by Lemma 11 this provides the rate of convergence for the error of objective function. Finally in Theorem 4 we analyze the convergence rate for the sequential error.

We present now the structure of the paper: in Sect. 2, we recall some definitions, well-known facts, and set the notation. In Sect. 3, we drive some fundamental properties based on the augmented Lagrangian for the sequence generated by the PL-ADMM. Sections 4 and 5 contain the main theoretical results of the paper. More specifically, in Sect. 4 we introduce the regularized augmented Lagrangian and some sufficient decrease condition which enables us to prove the boundedness of the PL-ADMM sequence. In Sect. 5 we prove general convergence and the convergence rates. Section 6 concludes the paper.

2 Preliminaries

Throughout this paper, we denote \({\mathbb {R}}\) as the real number set while \({\mathbb {Z}}\) as the set of integers. The set \(\bar{{\mathbb {R}}}:={\mathbb {R}}\cup \{+\infty \}\) is the extended real number, \({\mathbb {R}}_+\) is the positive real number set, and \({\mathbb {Z}}_+\) is the set of positive integers. The domain of \(\Phi :{\mathbb {R}}^d\rightarrow \bar{{\mathbb {R}}}={\mathbb {R}}\cup \{+\infty \}\), denoted \(\mathrm{dom}\; \Phi \), is defined by \( \mathrm{dom}\; \Phi :=\{x:\; \Phi (x)<+\infty \}. \) We write \(x^k\) is \(\Phi \)-converging to x, and we write \(x^k\xrightarrow {\Phi } x\), iff \(x^k\rightarrow x\) and \(\Phi (x^k)\rightarrow \Phi (x)\). Given the matrix X, \(\mathrm{Im}(X)\) denotes its image. We denote by \(I_n\) the \(n\times n\) identity matrix for \(n\in {\mathbb {Z}}_+\). The minimum, maximum, and the smallest positive eigenvalues of the matrix \(X\in {\mathbb {R}}^{n\times n}\) are denoted by \(\lambda _{\min }^X\), \(\lambda _{\max }^X\), \(\lambda _+^X\), respectively. The Euclidean scalar product of \({\mathbb {R}}^n\) and its corresponding norms are, respectively, denoted by \(\langle \cdot , \cdot \rangle \) and \(\Vert \cdot \Vert =\sqrt{\langle \cdot ,\cdot \rangle }\). If \(n_1,\dots , n_p\in {\mathbb {Z}}_+\) and \(p\in {\mathbb {Z}}_+\), then for any \(v:=(v_1,\dots ,v_p)\in {\mathbb {R}}^{n_1}\times {\mathbb {R}}^{n_2}\times \dots \times {\mathbb {R}}^{n_p}\) and \(v':=(v'_1,\dots ,v'_p)\in {\mathbb {R}}^{n_1}\times {\mathbb {R}}^{n_2}\times \dots \times {\mathbb {R}}^{n_p}\) the Cartesian product and its norm are defined by

For the sequence \(\{u^k\}_{k\ge 1}\), \(\Delta u^{k}:=u^k-u^{k-1}\), for all \(k\ge 1\).

2.1 Subdifferential and critical points

Let \(\Phi :{\mathbb {R}}^d\rightarrow \bar{{\mathbb {R}}}\). The Fréchet subdifferential of \(\Phi \) at \(x\in \mathrm{dom} \Phi \) is the set \(\partial _F\phi (x)\) of those elements \(s\in {\mathbb {R}}^d\) such that

For any \(x\notin \mathrm{dom} \Phi \), we set \(\partial _F\Phi (x):= \emptyset \). The (limiting) subdifferential of \(\Phi \) at \(x\in \mathrm{dom}\;\Phi \) is the set \(\partial \Phi (x)\) of elements \(s\in {\mathbb {R}}^d\) for which there exists sequences \(\{x^k\}_{k\in {\mathbb {N}}}\) and \(\{s^k\}_{k\in {\mathbb {N}}}\) in \({\mathbb {R}}^d\) such that \(x^k\xrightarrow {\Phi } x\) and \(s^k\rightarrow s\), and \(s^k\in \partial _F\Phi (x^k)\). As before, \(\partial \Phi (x):=\emptyset \) for \(x\notin \mathrm{dom} \Phi \), and its domain is \(\mathrm{dom} \partial \Phi :=\{x\in {\mathbb {R}}^d: \partial \Phi (x)\ne \emptyset \}.\) Note that \(\partial _F\Phi (x) \subset \partial \Phi (x)\), where the first set is convex and closed while the second one is closed ([59], Theorem 8.6). When \(\Phi \) is convex the two sets coincide and \( \partial _F\Phi (x)=\partial \Phi (x) =\{s\in {\mathbb {R}}^d: \Phi (y)\ge \Phi (x) +\langle s, y-x\rangle \; \forall y\in {\mathbb {R}}^d\}. \) Let \(F:{\mathbb {R}}^n\times {\mathbb {R}}^m\rightarrow (-\infty ,+\infty ]\) be a lower semicontinuous function, the subdifferential of F(x, y) at \(({{\hat{x}}},{{\hat{y}}})\) is \( \partial F({{\hat{x}}}, {{\hat{y}}})=\big (\partial _x F({{\hat{x}}},{{\hat{y}}}), \partial _y F({{\hat{x}}},{{\hat{y}}})\big ), \) where \(\partial _x F\) and \(\partial _y F\) are respectively the differential of the function \(F(\cdot ,y)\) when \(y\in {\mathbb {R}}^m\) is fixed, and \(F(x,\cdot )\) when x is fixed. The lazy slope of \(\Phi \) at x is \(\Vert \partial \Phi (x)\Vert _-:=\inf _{s\in \partial \Phi (x)} \Vert s\Vert \) if \(x\in \mathrm{dom} \partial \Phi \), and otherwise \(+\infty \). We say that \(x\in {\mathbb {R}}^d\) is a critical point iff \(0\in \partial \Phi (x)\). We denote the set of critical points of \(\Phi \) by \(\mathrm{crit}\; \Phi \). It follows from these definitions that if \(x^k\xrightarrow {\Phi } x\) with \(\lim \inf _{k\rightarrow \infty }\Vert \partial \Phi (x)\Vert _-=0\), then x is a critical point.

If \(\Phi :{\mathbb {R}}^d\rightarrow {\mathbb {R}}\) is Fréchet differentiable and its gradient is Lipschitz continuous with constant \(L_{\Phi }>0\). Then for every \(u,v\in {\mathbb {R}}^d\) and every \(\xi \in [u,v]=\{(1-t)u+tv:\;t\in [0,1]\}\) it holds

Moreover, if \(\Phi \) is bounded from below, by setting \(\xi =u\) and \(v=u-\delta \nabla \Phi (u)\) in (6), it is easy to show that the term \(\Phi (v)-(\delta -\frac{L_{\Phi } \delta ^2}{2})\Vert \nabla \Phi (v)\Vert ^2\) is bounded from below for every \(\delta >0\).

2.2 The Kurdyka-Łojasiewicz properties

Let \(\eta \in (0,+\infty ]\), and let \(\psi :[0,\eta ]\rightarrow [0,+\infty )\) be a continuous concave function such that \(\psi (0)=0\) and \(\psi \) is continuously differentiable on \((0,\eta )\), with \(\psi '(t)>0\) for all \(t\in (0,\eta )\).

A proper lower semi-continuous function \(\Phi :{\mathbb {R}}^d\rightarrow \bar{{\mathbb {R}}}\) has the Kurdyka-Łojasiewicz (KŁ) property at \(x^*\in \mathrm{dom} \;\partial \Phi \) with desingularising function \(\psi \), iff there exists \(\epsilon >0\) such that the Kurdyka-Łojasiewicz inequality

holds for all x in the strict local upper level set

A proper lower semi-continuous function having the Kurdyka-Łojasiewicz property at each point of \(\mathrm{dom} \partial \Phi \) is a KŁ function. When \(\Phi \) is of class \(C^1\), (7) becomes \(\Vert \nabla (\psi \circ \Phi )(x)\Vert \ge 1\). This means that the more \(\Phi \) is flat around its critical points, the more \(\psi \) has to be steep around 0, and this justifies the term “desingularising”. If \(\Omega \subset {\mathbb {R}}^d\) and assume that \(\Phi \) takes constant value on \(\Omega \). The function \(\Phi \) satisfies a KŁ property on \(\Omega \) if there exists \(\epsilon >0\) such that the (7) holds for every \(x^*\in \Omega \) and every element x belongs to the intersection

We will see that the growth of \(\psi \) has a direct impact on the convergence rate of PL-ADMM. If \(\psi (s)={{\bar{c}}} s^{1-\theta }\) for some \({{\bar{c}}}>0\) and \(\theta \in [0,1)\), then the Kurdyka-Łojasiewicz inequality (7) becomes

for any \(x\in \Gamma _{\eta }(x^*, \epsilon )\) where \(c=(1-\theta ){{\bar{c}}}\). In this case, we say \(\Phi \) has the KŁ property at \(x^*\) with an exponent \(\theta \). This asserts that \((\Phi (x)-\Phi (x^*))^{\theta }/\Vert \partial \Phi (x)\Vert _- \) remains bounded around \(x^*\).

This definition encompasses broad classes of functions arising in practical optimization problems. For example, if f is a proper closed semi-algebraic function, then f is a KŁ function with exponent \(\theta \in [0,1)\) [4]. The function l(Ax), where l is strongly convex on any compact set and it is twice differentiable, and \(A\in {\mathbb {R}}^{m\times n}\), is a KŁ function. Convex piecewise linear-quadratic function, \(\Vert x\Vert _1\), \(\Vert x\Vert _0\), \(\gamma \sum _{i=1}^k |x_{[i]}|\) where \(|x_{[i]}|\) is the ith largest (in magnitude) entry in x, \(k\le n\) and \(\gamma \in (0,1]\), \(\delta _{\Delta }(x)\) where \(\Delta =\{x\in {\mathbb {R}}^n: e^\mathsf{T}x=1, x\ge 0\}\), and least square problems with SCAD [27] or MCP [76] regularized functions are all KŁ functions. The KŁ property characterizes the local geometry of a function around the set of critical points. If \(\Phi \) is differentiable then \(\partial \Phi (x)=\nabla \Phi (x)\), the inequality (8) becomes \( \Phi (x)-\Phi (x^*)\le c \Vert \nabla \Phi (x)\Vert ^{\frac{1}{\theta }}, \) which generalizes the Polyak-Łojasiewics condition \(\Phi (x)-\Phi (x^*)\le {\mathcal {O}}(\Vert \nabla \Phi (x)\Vert ^2)\) (\(\theta =\frac{1}{2}\)). We refer interested readers to [2,3,4, 9,10,11,12, 29] for more properties of KŁ functions and illustrating examples.

3 Augmented Lagrangian-based properties

In this section, we establish some important properties for the PL-ADMM algorithm (4) based on the augmented Lagrangian functional (2).

Assumption 1

We begin by making some assumptions.

A1. The function f is lower-semicontinuous and coercive;

A2. g is coercive and h is bounded from below;

A3. The matrix B is full rank, \(\mathrm{Im}({A})\subseteq \mathrm{Im}(B)\) and \(b\in \mathrm{Im}(B)\);

A4. g and h have respectively \(L_g\) and \(L_h\) Lipschitz continuous gradients;

A5. \(\alpha >0\), \(\beta \in (0,2)\), \({q_i}^-:=\inf _{k\ge 0} \Vert Q_i^k\Vert \ge 0\) and \(q_i:=\sup _{k\ge 0} \Vert Q_i^k\Vert <+\infty \), \(i=1,2\)

Lemma 1

(Subgradient bound) Suppose that the Assumption 1 holds. Let \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) be a sequence generated by the PL-ADMM algorithm (4). There exists a constant \(\rho >0\) and \(d^{k+1}:=(d^{k+1}_x,d^{k+1}_y,d^{k+1}_z)\in \partial {\mathcal {L}}^{\alpha }(x^{k+1},y^{k+1},z^{k+1})\) where

such that

where for any sequence \(\{u^k\}_{k\ge 0}\), \(\Delta u^{k+1}=u^{k+1}-u^{k}\), and

Proof

Let \(k\ge 0\) be fixed. By taking partial differential of \({\mathcal {L}}^{\alpha }\) with respect to x, and evaluating the result at the point \((x^{k+1},y^{k+1},z^{k+1})\) yields

By the optimality condition of x subproblem in (4) we have

Therefore, we obtain

Taking partial differential of \({\mathcal {L}}^{\alpha }\) with respect to y and evaluating the result at \((x^{k+1},y^{k+1},z^{k+1}) \) gives \( \nabla _y {\mathcal {L}}^{\alpha }(x^{k+1},y^{k+1},z^{k+1}) = \nabla h(y^{k+1})+\alpha B^\mathsf{T}\big (Ax^{k+1}+By^{k+1}+\alpha ^{-1}z^{k+1}+c\big ). \) The optimality criterion of y subproblem in (4) is also given by

Thus, we have

By the z subproblem in the algorithm (4) it is easy to see that

Hence, by (11), (12), and (13), we then have \( d^{k+1}:=(d^{k+1}_x,d^{k+1}_y,d^{k+1}_z)\in \partial {\mathcal {L}}^{\alpha }(x^{k+1},y^{k+1},z^{k+1}).\)

From (11), by using the triangle inequality we have

Since \(\nabla g\) is \(L_g\) Lipschitz continuous and \(q_1=\sup _{k\ge 0} \Vert Q_1^k\Vert <+\infty \) we then have

From (12), by the triangle inequality, \( \Vert d_y^{k+1}\Vert \le \Vert \nabla h(y^{k+1})-\nabla h(y^k)\Vert +\Vert B\Vert \Vert \Delta z^{k+1}\Vert + \Vert {Q_2^k}\Vert \Vert \Delta y^{k+1}\Vert . \) Since \(\nabla h\) is \(L_h\) Lipschitz continuous and \(q_2=\sup _{k\ge 0} \Vert Q_2^k\Vert <+\infty \) we get

We also have

By (14), (15), and (16) we obtain

where \(\rho \) defined in (10). \(\square \)

Lemma 2

(Limiting continuity) Suppose that the Assumption 1 holds. If \((x^*, y^*, z^*)\) is the limit point of a converging subsequence \(\{(x^{k_j},y^{k_j},z^{k_j})\}_{j\ge 0}\) generated by the PL-ADMM, then \( {\mathcal {L}}^{\alpha } (x^*, y^*, z^*)=\displaystyle {\lim _{j\rightarrow \infty }} {\mathcal {L}}^{\alpha } (x^{k_j},y^{k_j},z^{k_j}).\)

Proof

Let \(\{(x^{k_j},y^{k_j},z^{k_j})\}_{j\ge 0}\) be a subsequence of the sequence generated by the PL-ADMM algorithm such that \( \displaystyle {\lim _{j\rightarrow \infty }} (x^{k_j},y^{k_j},z^{k_j})=(x^*, y^*, z^*). \) The function f is lower semicontinuous hence we have

From the x-subproblem in (4), we have \({{\hat{f}}}^k(x^{k+1})\le {{\hat{f}}}^k(x)\) for any \(x\in {\mathbb {R}}^n\). Choose \(k=k_j\), \(\forall j\ge 0\), and let \(x=x^*\) to get

By the continuity of \(\nabla g\) and the fact that \(\Delta x^{k_j+1}\) approaches zero, taking the limit supremum from the both sides leads to

We have \(x^{k_j}\rightarrow x^*\) as \(j\rightarrow \infty \) thus the latter inequality reduces to \( \displaystyle {\limsup _{j\rightarrow \infty }} \;f(x^{k_j+1})\le f(x^*).\) Thus, in view of (17), we then have \(\displaystyle {\lim _{j\rightarrow \infty }} f(x^{k_j})=f(x^*).\)

Since the functions \(h(\cdot )\) and \(g(\cdot )\) are smooth we further have \(\lim _{j\rightarrow \infty } g(x^{k_j})= g(x^*)\) and \(\lim _{j\rightarrow \infty } h(y^{k_j})= h(y^*)\). Thus

That completes the proof. \(\square \)

Lemma 3

(Limit point is critical point) Suppose that the Assumption 1 holds. Any limit point \((x^*,y^*,z^*)\) of the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) generated by the PL-ADMM (4) is a stationary point. That is, \(0\in {\mathcal {L}}^{\alpha } (x^*,y^*,z^*)\), or equivalently

Proof

Let \(\{(x^{k_j},y^{k_j},z^{k_j})\}_{j\ge 0}\) be a subsequence of \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) such that \((x^*, y^*, z^*)=\lim _{j\rightarrow \infty } (x^{k_j},y^{k_j},z^{k_j}).\) This follows that \(\Vert \Delta x^{k_j}\Vert \rightarrow 0\) \(\Vert \Delta y^{k_j}\Vert \rightarrow 0\), and \(\Vert \Delta z^{k_j}\Vert \rightarrow 0\) as \(j\rightarrow \infty \). By Lemma 2, \( {\mathcal {L}}^{\alpha } (x^{k_j},y^{k_j},z^{k_j})\rightarrow {\mathcal {L}}^{\alpha }(x^*, y^*, z^*)\), \(j\rightarrow +\infty \). Let \(d^{k_j}\in \partial {\mathcal {L}}^{\alpha } (x^{k_j},y^{k_j},z^{k_j})\), by Lemma 1 we have \(|||d^{k_j}\Vert ||\le \rho (\Vert \Delta x^{k_j}\Vert +\Vert \Delta y^{k_j}\Vert +\Vert \Delta z^{k_j}\Vert )\), where \(\rho >0\) is an scalar. Since \(|||d^{k_j}\Vert ||\rightarrow 0\) as \(j\rightarrow \infty \), hence \(d^{k_j}\rightarrow 0\). By the closeness criterion of the limiting sub-differential we then have \(0\in \partial {\mathcal {L}}^{\alpha }(x^*, y^*, z^*)\), or equivalently, \((x^*, y^*, z^*)\in \mathrm{crit} ({\mathcal {L}}^{\alpha })\).

\(\square \)

Lemma 4

(descent of \({\mathcal {L}}^{\alpha }\) during x update) Suppose that the Assumption 1 holds. For the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) generated by the PL-ADMM (4) we have

where \(A^k:= { Q_1^k} + \alpha A^\mathsf{T}A - L_g {I_n}\).

Proof

Let \(k\ge 0\) be fixed. From the x iterate of (4) it is clear that \({{\hat{f}}}^k(x^{k+1})\le {{\hat{f}}}^k(x)\) for any \(x\in {\mathbb {R}}^n.\) Setting \(x=x^k\) gives

We next consider

By (19) we then have

Since g is \(L_g\) Lipschitz continues this yields

That completes the proof. \(\square \)

Lemma 5

(descent of \({\mathcal {L}}^{\alpha }\) during y update) Suppose that the Assumption 1 holds. For the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) generated by the PL-ADMM algorithm (4) we have

where \({B^k}= {Q_2^k} + \alpha B^\mathsf{T}B -L_h{I_m}\).

Proof

Let \(k\ge 1\) be fixed. From the optimality condition of y subproblem in (4) we have

Multiply this equation by \(\Delta y^{k+1}\) and rearrange to obtain

We next consider

where the last equation is obtained by (21). Since \(\nabla h\) is \(L_h\) Lipschitz continuous we then get

This completes the proof. \(\square \)

Lemma 6

Suppose that the Assumption 1 holds. For the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) generated by the PL-ADMM (4) we have

where \(A^k\) and \({B^k}\) are defined respectively in Lemmas 4 and 5.

Proof

Let \(k\ge 1\) be fixed. By the z update in (4) and Lemma 4 and 5 we have

Rearrange to obtain (22). \(\square \)

Lemma 7

(Monotonicity of \({\mathcal {L}}^{\alpha }\)) Suppose that the Assumption 1 holds. For the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) generated by the PL-ADMM (4) the following inequalities hold

where \(r >1\), \(\lambda _+^{B^\mathsf{T}B}\) is the smallest positive eigenvalue of \(B^\mathsf{T}B\), \(\rho (\beta )=1-|1-\beta |\), and

Proof

Part (a). Let \(k\ge 1\) be fixed. We define the vector

Then \( \Delta w^{k+1}= Q_2^{k-1}\Delta y^{k}-{Q_2^k}\Delta y^{k+1}+ \nabla h(y^{k-1})-\nabla h(y^k), \) and by the triangle inequality

By the fact that \(\nabla h\) is \(L_h\) Lipschitz continuous and \(q_2=\sup _{k\ge 0}\Vert {Q_2^k}\Vert <+\infty \) we obtain

and hence

Expressing the optimality condition of y subproblem using \(w^{k+1}\) gives

Combining this with the z iterate in (4) yields

This follows that \( B^\mathsf{T}\Delta z^{k+1}=\beta \Delta w^{k+1}+(1-\beta ) B^\mathsf{T}\Delta z^{k}. \) Since \(\beta \in (0,2)\), we can equivalently write this as follows

where \(\mathrm{sign} (\lambda )=1\) if \(\lambda \ge 0\) and \(\mathrm{sign} (\lambda )=-1\) if \(\lambda <0\). By the convexity of \(\Vert \cdot \Vert ^2\) we have

Divide the both sides of the latter inequality by \(\alpha \beta \lambda _{+}^{B^\mathsf{T}B} \rho (\beta )\) and use (27) to obtain (a).

Part (b). Multiply (a) by \(r>1\) and combine it with (22) to obtain the result. \(\square \)

4 Regularized augmented Lagrangian

The regularized augmented Lagrangian function \({\mathcal {R}}:{\mathbb {R}}^n\times {\mathbb {R}}^m\times {\mathbb {R}}^p\times {\mathbb {R}}^m\times {\mathbb {R}}^p \rightarrow (-\infty ,+\infty ] \nonumber \) is defined by

where \(r>1\), and \(\theta _0\) and \(\theta _2\) are as in (23).

For any \(k\ge 1\), we denote

By (23) we then have

where \(D^k:= B^k-r(\theta _0+\theta _1) {I_m}.\)

Assumption 2

(Sufficient decrease condition)

-

(i)

The parameters \(\alpha>0, \beta \in (0,2), r>1\), and the symmetric positive semidefinite matrices \(\{Q_1^k\}_{k\ge 0}\) and \(\{Q_2^k\}_{k\ge 0}\) are chosen such that there exists \(\sigma _1>0\) and \(\sigma _2>0\) that

$$\begin{aligned} Q_1^{k}+\alpha {A^\mathsf{T}A}-L_g I_n \succeq \sigma _1 I_n \quad \mathrm{and} \quad Q_2^k+\alpha B^\mathsf{T}B - (L_h+r\theta _0+r\theta _1)I_m\succeq \sigma _2 I_m. \end{aligned}$$We then let \( \sigma =\min \{\sigma _1,\sigma _2, \frac{r-1}{\alpha \beta }\},\) then for all \(k\ge 1\) we have

$$\begin{aligned} {\mathcal {R}}_{k+1} +\sigma \Big (\Vert \Delta x^{k+1}\Vert ^2+\Vert \Delta y^{k+1}\Vert ^2+\Vert \Delta z^{k+1}\Vert ^2\Big ) \le {\mathcal {R}}_k\le {\mathcal {R}}_1. \end{aligned}$$(32) -

(ii)

(Relaxed Version) The conditions in the assumption can be relaxed. Chosen the parameters \(\alpha>0, \beta \in (0,2), r>1\), suppose that the symmetric positive semidefinite matrices\(\{Q_1^k\}_{k\ge 0}\) and \(\{Q_2^k\}_{k\ge 0}\) are chosen such that after a finite number of iterations \(k_0\ge 0\) we have

$$\begin{aligned} \sigma _k=\inf _{k\ge k_0}\Big \{\Vert Q_1^k\Vert +\alpha \Vert A\Vert ^2-L_g, \Vert Q_2^k\Vert +\alpha \Vert B\Vert ^2 - (L_h+r\theta _0+r\theta _1), \frac{r-1}{\alpha \beta } \Big \}>\sigma >0. \end{aligned}$$With this, we would then have

$$\begin{aligned} {\mathcal {R}}_{k+1} +\sigma \Big (\Vert \Delta x^{k+1}\Vert ^2+\Vert \Delta y^{k+1}\Vert ^2+\Vert \Delta z^{k+1}\Vert ^2\Big ) \le {\mathcal {R}}_k\le {\mathcal {R}}_{k_0},\quad \forall k\ge k_0. \end{aligned}$$(33)

Remark 1

We make a few remarks regarding to Assumption 2.

-

(i)

By setting \(Q_1^k=0\) and \(Q_2^k=0\) for all k, the PL-ADMM reduces to the linearized ADMM. In this case, for any \(\beta \in (0,2)\) and \(r>1\), \(\alpha \) needs to be chosen such that

$$\begin{aligned} \alpha >\max \Big \{ L_g/\lambda _{\max }^{B^\mathsf{T}B}, \Big (1+\sqrt{1+8rd\beta /\rho (\beta )^2}\Big )\frac{L_h}{2\lambda _{\max }^{B^\mathsf{T}B}}\Big \} \end{aligned}$$where \(d=\lambda _{\max }^{B^\mathsf{T}B}/\lambda _+^{B^\mathsf{T}B}\).

-

(ii)

If the y subproblem in PL-ADMM contains the second order term \(\frac{\alpha }{2}y^*B^\mathsf{T}By\) and if \(B^\mathsf{T}B\) is nearly diagonal (or orthogonal), we can replace \(B^\mathsf{T}B\) by a certain symmetric diagonal (orthogonal) matrix D that approximates \(B^\mathsf{T}B\). By choosing \(Q_2^k=\alpha (D-B^\mathsf{T}B)\), the second order term \(\frac{\alpha }{2}y^*B^\mathsf{T}By\) is replaced by \(\frac{\alpha }{2}y^*Dy\). Note that by Assumption (A5) we have \(q_2^-=\alpha \Vert D-B^\mathsf{T}B\Vert \), and by (23) we have \(\theta _0\propto \frac{1}{\alpha }\) and \(\theta _1\propto \frac{1}{\alpha }\). Hence, for a fixed \(\beta \in (0,2)\) and \(r>1\), if \(\alpha \) chosen large enough, the relation \(q_2^-+\alpha \lambda _+^{B^\mathsf{T}B}-(L_h+r\theta _0+r\theta _1)>0\) and hence Assumption 2 (i) holds.

-

(iii)

(Application example) Consider the problem

$$\begin{aligned} \min _{x\in {\mathbb {R}}^n}\;\; r(x)+\mathcal \ell ({\mathcal {A}} x-b) \end{aligned}$$where \({\mathcal {A}}:{\mathbb {R}}^n\rightarrow {\mathbb {R}}^m\) and \(b\in {\mathbb {R}}^m\). The first term is the regularization term and the second term is the fitting term. Regularizers can be some sparsity-including functions, \(\ell _q\) quasi-norms for \(0<q\le 1\), indicator functions, or any lower-semicontinuous (not necessarily convex) functions satisfying KŁ conditions. The fitting term can be least squares, logistic functions, and other smooth functions. In order to solve this problem, we formulate it as

$$\begin{aligned} \min _{x,y\in {\mathbb {R}}^n}\;\; r(x)+\mathcal \ell ({\mathcal {A}} y-b)\quad s.t. \;\;\; x-y=0 \end{aligned}$$Comparing to (1), \(h(y)=\ell ({\mathcal {A}} y-b)\), \(g(x)=0\), \(A=I\), and \(B=-I\). Note that A1-A4 hold. We set \(Q_1^k=\eta I\) where \(\eta >0\). Since B is a multiple of identity, we set \(Q_2^k=0\). The PL-ADMM algorithm to solve this problem is given as follows

$$\begin{aligned} x^{k+1}= & {} \arg \min _{x}\;\; r(x)+ \frac{\eta }{2} \Vert \alpha \eta ^{-1}(x^k-y^k+\alpha ^{-1}z^k)+x-x^k\Vert ^2,\\ y^{k+1}= & {} x^{k+1}-\frac{1}{\alpha }\big ( {\mathcal {A}}^\mathsf{T}\nabla \ell (\mathcal Ay^k-b)+B^\mathsf{T}z^k \big ),\\ z^{k+1}= & {} z^k+\alpha \beta (x^{k+1}-y^{k+1}). \end{aligned}$$Note that \(B^\mathsf{T}B=I\), \(\lambda _+^{B^\mathsf{T}B}=1=\lambda _{\max }^{B^\mathsf{T}B}\). If \(\ell (.)=\Vert \;.\;\Vert ^2\), then \(L_h=\Vert {\mathcal {A}}\Vert ^2\). To guarantee Assumption 2 (i), we need to choose \( \alpha >\Vert {\mathcal {A}}\Vert ^2 \big (1+\sqrt{1+8\beta r/\rho (\beta )^2}\big ),\) for any \(r>1\) and \(\beta \in (0,2)\).

Theorem 1

(bounded sequence) We assume that Assumption 1, and 2 (ii) hold. Then sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) generated by the PL-ADMM algorithm (4) is bounded.

Proof

Let \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) be a sequence generated by the PL-ADMM algorithm. By (33) there exists a \(k_0\ge 1\) such that \({\mathcal {R}}_{k+1}\le {\mathcal {R}}_{k_0}\) for all \(k\ge k_0\). Hence

We will next find a lower bound for \(-\frac{1}{2\alpha }\Vert z^{k+1}\Vert ^2\). Given \(w^{k+1}\) as in (24), we rearrange (28) to obtain \( \beta B^\mathsf{T}z^{k+1}=\beta w^{k+1}+(1-\beta ) B^\mathsf{T}(z^k-z^{k+1}).\) Since \(\beta \in (0,2)\) we can rewrite this equation as follows

By the convexity of \(\Vert \cdot \Vert ^2\) we have

By (24) we have \(\Vert w^{k+1}\Vert ^2\le 2(q_2+L_h)^2\Vert \Delta y^{k+1}\Vert ^2+2\Vert \nabla h(y^{k+1})\Vert ^2\). Use this in (35) and then divide the both sides of the resulting inequality by \(-2\alpha \beta ^2\lambda _{+}^{B^\mathsf{T}B}\) to get

where

Using the latter inequality, (34) leads to

By the Assumption 1, \(\nabla h\) is \(L_h\) Lipschitz continuous, then for any \(k\ge k_0\ge 1\) and \(y\in {\mathbb {R}}^m\) it holds \(h(y)\le h(y^k)+\langle \nabla h(y^k),y-y^k\rangle +\frac{L_h}{2}\Vert y-y^k\Vert ^2.\) If \(\delta >0\) be an scalar, setting \(y=y^k-\delta \nabla h(y^k)\) yields \( h\Big (y^k-\delta \nabla h(y^k)\Big )\le h(y^k)- \Big (\delta -\frac{L_h\delta ^2}{2}\Big )\Vert \nabla h(y^k)\Vert ^2. \) Since h is bounded from below, then we have

We choose \(\delta >0\) such that \(\theta _3=\delta -\frac{L_h\delta ^2}{2}\). Then by (37), the right hand side of (36) is finite. It is easy to verify for any \(r>1\) and any \(\beta \in (0,2)\), we also have \(r\theta _2-\theta _5>0\) and \(r\theta _0+\sigma -\theta _4>0\). Hence

Since f and g are coercive, then the sequence \(\{x^k\}_{k\ge k_0}\) and consequently \(\{Ax^k\}_{k\ge k_0}\) is bounded. By the z iterate of (4) we have \( By^{k+1} = \frac{1}{\alpha \beta } \Delta z^{k+1} -Ax^{k+1}-c. \) By (38), \(\{\Delta z^k\}_{k\ge 0}\) is bounded and since \(B^\mathsf{T}B\) is invertible then \(\{y^k\}_{k\ge k_0}\) is bounded. Finally, since \(\{Ax^k\}_{k\ge k_0}\) and \(\{By^k\}_{k\ge k_0}\) are bounded and also \(\{Ax^{k}+By^{k}+\frac{1}{\alpha }z^{k}\}_{k\ge k_0}\) is bounded, thus \(\{z^k\}_{k\ge k_0}\) is bounded.

We showed that the sequences \(\{x^k\}_{k\ge k_0}\),\(\{y^k\}_{k\ge k_0}\), and \(\{z^k\}_{k\ge k_0}\) are bounded. Hence, there exists \(M_x>0\), \(M_y>0\), \(M_z>0\) positive scalars such that

We denote by \( {{\hat{M}}}_x=\displaystyle {\max _{0\le k\le k_0-1}} \Vert x^k\Vert ,\; {{\hat{M}}}_y=\displaystyle {\max _{0\le k\le k_0-1}} \Vert y^k\Vert ,\; {{\hat{M}}}_z=\displaystyle {\max _{0\le k\le k_0-1}} \Vert z^k\Vert . \) Thus we have \( \Vert x^k\Vert \le \max \{M_x,{{\hat{M}}}_x\}, \; \Vert y^k\Vert \le \max \{M_y,{{\hat{M}}}_y\}, \; \Vert z^k\Vert \le \max \{M_z,{{\hat{M}}}_z\}, \; \forall k\ge 1. \) This concludes the proof. \(\square \)

Remark 2

Theorem 1 was established for Assumption 2(ii). With Assumption 2(i), (39) holds for \(k\ge 1\).

Lemma 8

(Convergence of \({\mathcal {R}}_k\)) Suppose that the Assumption 1 and 2 (i) hold. If \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) is a sequence generated by the PL-ADMM algorithm (4), which assumed to be bounded. Then the sequence \(\{{\mathcal {R}}_{k}\}_{k\ge 1}\) defined in (30) is bounded from below and converges.

Proof

Let \(k\ge 0\) be fixed. By (30), we only need to show that

is bounded from below. Since \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) is a bounded sequence by Theorem 1, clearly \(\langle z^k, Ax^k+By^k+c\rangle \) and \(\Vert Ax^k+By^k+c\Vert ^2\) are bounded for \(k\ge 0\). By Assumption 1, h is bounded from below, and since f and g are coercive and \(\{x^k\}_{k\ge 0}\) is bounded, then \(\{f(x^k)\}_{k\ge 0}\) and \(\{g(x^k)\}_{k\ge 0}\) are bounded. Therefore \(\{{\mathcal {L}}^{\alpha }(x^k,y^k,z^k)\}_{k\ge 0}\) is bounded from below, and consequently \( -\infty <\inf \{{\mathcal {R}}_{k}:\; k\ge 1\}. \) By Assumption 2 (i), \(\{{\mathcal {R}}_k\}_{k\ge 1}\) is monotonically decreasing. This, together with the fact that \({\mathcal {R}}_k\) is bounded from below, we conclude that \(\{{\mathcal {R}}_k\}_{k\ge 1}\) is convergent.

\(\square \)

Lemma 9

Suppose that the Assumption 1 and 2 (ii) hold. If \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) is a sequence generated by the PL-ADMM algorithm (4), which assumed to be bounded, then

Proof

By summing up (33) from \(k=k_0\) to some \(K\ge k_0\) we have

We let K approach to infinity, and since \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) is bounded we have

This follows that \(\Vert \Delta x^{k+1}\Vert ^2+\Vert \Delta y^{k+1}\Vert ^2+\Vert \Delta z^{k+1}\Vert ^2\rightarrow 0,\) as \(k\rightarrow \infty \), and thus

This finishes the proof.

\(\square \)

Lemma 10

(Properties of limit point set) Let the Assumptions 1 and 2 hold. For a bounded sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) generated by the PL-ADMM algorithm (4) the followings are true

-

(i)

The limit point set of the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\), denoted \(\omega \Big ((x^k,y^k,z^k)\}_{k\ge 0}\Big )\), is nonempty, connected and compact.

-

(ii)

\(\displaystyle {\lim _{k\rightarrow \infty } \mathrm{dist} \Big [(x^k,y^k,z^k), \omega \Big ((x^k,y^k,z^k)\}_{k\ge 0}\Big )\Big ] =0}\).

-

(iii)

\(\omega \Big ((x^k,y^k,z^k)\}_{k\ge 0}\Big ) \subseteq \mathrm{crit}\; {\mathcal {L}}^{\alpha } \).

Proof

These results follow by Lemma 9. We omit the proof. \(\square \)

Lemma 11

Suppose that the Assumption 1 holds. If \((x^*,y^*,z^*)\) is a limit point of a converging subsequence \(\{(x^{k_j},y^{k_j},z^{k_j})\}_{j\ge 0}\) of the PL-ADMM algorithm, then

Proof

Let \(\{(x^{k_j},y^{k_j},z^{k_j})\}_{j\ge 0}\) be a PL-ADMM subsequence such that \((x^{k_j},y^{k_j},z^{k_j})\rightarrow (x^*,y^*,z^*)\) as \(j\rightarrow \infty \). Hence \(\Vert \Delta y^{k_j}\Vert \rightarrow 0\) and \(\Vert B^\mathsf{T}\Delta z^{k_j}\Vert \le \Vert B\Vert \Vert \Delta z^{k_j}\Vert \rightarrow 0\) as \(j\rightarrow \infty \) hence

By the z iterate of the PL-ADMM (4), as \(j\rightarrow \infty \) we have \(\Vert \Delta z^{k_j+1}\Vert \rightarrow 0\) and hence \(\Vert Ax^{k_j}+By^{k_j}+c\Vert \rightarrow 0\). Since \(\{z^{k_j}\}_{j\ge 0}\) is a bounded sequence we also have \(\langle z^{k_j}, Ax^{k_j}+By^{k_j}+c\rangle \rightarrow 0,\) as \(j\rightarrow \infty \). As g and h are smooth and f is lower semicontinuous, then

This concludes the proof. \(\square \)

5 Convergence and convergence rates

In this section, we establish the main theoretical results including convergence and convergence rates for the sequence generated by the PL-ADMM and the regularized augmented Lagrangian. We begin with some important lemmas.

Lemma 12

Suppose that the Assumption 1 holds. Let \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) be a sequence generated by the PL-ADMM algorithm (4). Define

where \((d_x^k,d_y^k,d_z^k)\in \partial {\mathcal {L}}^{\alpha }(x^k,y^k,z^k)\). Then \( s^{k}:=(s^{k}_x, s^{k}_y, s^{k}_z, s^{k}_{y'},s^{k}_{z'}, )\in \partial {\mathcal {R}}(x^{k},y^{k},z^{k},y^{k-1},z^{k-1}) \) for \(k\ge 1\), and it holds

where

\(r>1\), \(\rho \) is given in (10), \(\theta _0\) and \(\theta _2\) are as (23).

Proof

Let \(k\ge 1\) fixed, and \((d_x^{k}, d_y^{k}, d_z^{k})\in \partial {\mathcal {L}}^{\alpha }(x^k,y^k,z^k)\). By taking partial derivatives of \({\mathcal {R}}_k\) with respect to \(x,y,z,y',z'\) we obtain

By the triangle inequality we obtain

By Lemma 1, this follows that

This concludes the proof. \(\square \)

Lemma 13

Let the Assumption 1 and 2 hold. If \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) is a sequence generated by the PL-ADMM algorithm (4), then the following statements hold.

-

(i)

The set \(\omega \Big (\{(x^k,y^k,z^k,y^{k-1},z^{k-1})\}_{k\ge 1}\Big )\) is nonempty, connected, and compact.

-

(ii)

\(\Omega \subseteq \{(x, y, z, y, z) \in {\mathbb {R}}^n\times {\mathbb {R}}^m\times {\mathbb {R}}^p\times {\mathbb {R}}^m\times {\mathbb {R}}^p: \;(x, y, z)\in \mathrm{crit} ({\mathcal {L}}^{\alpha })\Big \}.\)

-

(iii)

\(\lim _{k\rightarrow \infty } \mathrm{dist} \Big [ (x^k, y^k, z^k, y^{k-1}, x^{k-1}), \Omega \Big ]=0\);

-

(iv)

The sequences \(\{{\mathcal {R}}_k\}_{k\ge 1}\), \(\{{\mathcal {L}}^{\alpha }(x^k,y^k,z^k)\}_{k\ge 0}\), and \(\{{\mathcal {F}}(x^k,y^k)\}_{k\ge 0}\) approach to the same limit and if \((x^*, y^*, z^*, y^*, z^*)\in \Omega \), then

$$\begin{aligned} {\mathcal {R}} (x^*, y^*, z^*, y^*, z^*)= {\mathcal {L}}^{\alpha }(x^*, y^*, z^*) = {\mathcal {F}}(x^*,y^*). \end{aligned}$$

Proof

These results follow immediately from Lemma 3, Theorem 8, and Lemma 12. \(\square \)

Theorem 2

(Convergence) Suppose that Assumptions 1 and 2 (ii) hold and \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) is a sequence generated by the PL-ADMM (4), which is assumed to be bounded. If the regularized augmented Lagrangian \({\mathcal {R}}\) defined in (29) satisfies the KŁ property on the limit point set \( \Omega :=\omega \big (\{(x^k,y^k,z^k,y^{k-1},z^{k-1})\}_{k\ge 1}\big );\) that is, for every \(v^*:=(x^*, y^*, z^*, y^*, z^*) \in \Omega \) there exists \(\epsilon >0\) and desingularizing function \(\psi :[0,\eta ]\rightarrow [0,+\infty )\), for some \(\eta \in [0,+\infty )\) (see Sect. 2.2) such that for all \(v=(x,y,z,y',z')\in {\mathbb {R}}^n\times {\mathbb {R}}^m\times {\mathbb {R}}^p\times {\mathbb {R}}^m\times {\mathbb {R}}^p\) belongs to the following set

the inequality \( \psi '\Big ({\mathcal {R}}(v)-{\mathcal {R}}(v^*)\Big ) \Vert \partial {\mathcal {R}}(v)\Vert _-\ge 1 \) holds. Then \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) satisfies the finite length property

and consequently converges to a stationary point of (1).

Proof

By Lemma 8, the monotonically decreasing sequence \(\{{\mathcal {R}}_k\}_{k\ge k_0}\) for some \(k_0\ge 1 \) converges, thus let \(\lim _{k\rightarrow \infty }{\mathcal {R}}_k:={\mathcal {R}}_{\infty }\). This immediately follows that the sequence \(\{{\mathcal {E}}_k\}_{k\ge k_0}\) defined by

is non-negative, monotonically decreasing, and converges to 0. Let us consider two scenarios:

Scenario 1. There is \(k_1\ge k_0\) such that \({\mathcal {E}}_{k_1}=0\). This gives rise to \({\mathcal {E}}_k=0\) for all \(k\ge k_1\). By (5), (33), and the fact that the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) is bounded, we then have

Scenario 2. The sequence \(\{{\mathcal {E}}_k\}_{k\ge k_0}\) is a strictly positive sequence. Since the sequence \(\{{\mathcal {R}}_{k}\}_{k\ge k_0}\) is monotonically decreasing to \({\mathcal {R}}_{\infty }\), then there exists \( k_1\ge k_0\ge 1\) such that

Note that by Lemma 13, \(\Omega \) is nonempty, compact, and connected. By the assumption, \({\mathcal {R}}_k\) takes on a constant value \({\mathcal {R}}_{\infty }\) on \(\Omega \). By Lemma 13, since \(\lim _{k\rightarrow \infty } \mathrm{dist} \Big [ (x^k,y^k,z^k,y^{k-1},x^{k-1}), \Omega \Big ]=0\), thus there exists \(k_2\ge 1\) such that

Choose \({{\tilde{k}}} =\max \{ k_1,k_2,3 \}\). Then for \(k\ge {{\tilde{k}}}\), we have \((x^k,y^k,z^k,y^{k-1},x^{k-1})\in {\mathcal {S}}\) where \({\mathcal {S}}\) defined in (42). This follows that

Multiply both sides of this inequality by \(|||\Delta u^{k+1}|||^2=\Vert \Delta x^{k+1}\Vert ^2+\Vert \Delta y^{k+1}\Vert ^2+\Vert \Delta z^{k+1}\Vert ^2\), and use (33) and concavity of \(\psi \) (recall: \(\psi ({\mathcal {E}}_{k}) -\psi ({\mathcal {E}}_{k+1})\ge \psi '({\mathcal {E}}_{k}) ({\mathcal {E}}_{k} - {\mathcal {E}}_{k+1})\)) to obtain

The last inequality is obtained by the use of arithmetic mean-geometric mean inequality holds for any \(\gamma >0\).

By Lemma 12 and (5) we then obtain

It is easy to validate the following identity for any sequence \(\{w^k\}_{k\ge 0}\) and two integer numbers \(i,I\in {\mathbb {Z}}_+\) with \(I\ge i\),

We sum the inequality (45) from \(k=i\ge {{\tilde{k}}} \) to \(k=I\ge i\), we choose \(\gamma \) large enough such that \(1>\frac{\sqrt{3}{{\tilde{\rho }}}}{2\gamma }\), define \(\delta _0:=1-\frac{\sqrt{3}{{\tilde{\rho }}}}{2\gamma }\) and rearrange to obtain

Since \({\mathcal {E}}_k\) is a monotonically decreasing positive sequence \(\psi ({\mathcal {E}}_k)\ge \psi ({\mathcal {E}}_{k+1})>0\) and hence

The right hand side of the latter inequality is finite, therefore the left hand side sum is bounded for any \(I\ge i\). We let I to approach infinity to obtain

Since \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) is a bounded sequence, then for any \(i\in {\mathbb {Z}}_+\) we clearly have

Thus, by combining (46) and (47) we conclude that \(\displaystyle {\sum _{k\ge 0} \Vert \Delta x^{k+1}\Vert +\Vert \Delta y^{k+1}\Vert +\Vert \Delta z^{k+1}\Vert }\) is finite.

Now for any \(p,q,K\in {\mathbb {Z}}_+\) where \(q\ge p>0\), by (5) and Cauchy–Schwarz inequality we have

This implies that \(\{u^k\}_{k\ge 0}=\{(x^k,y^k,z^k)\}_{k\ge 0}\) is a Cauchy sequence and converges. Moreover, by Lemma 3, it converges to a stationary point. \(\square \)

Remark 3

Theorem 2 gives rise to the fact that the limit point set \(\omega (\{(x^k,y^k,z^k)\}_{k\ge 0})\) is a singleton. Let’s denote by \((x^{\infty }, y^{\infty }, z^{\infty })\) the unique limit point of the sequence \({(x^k,y^k,z^k)}_{k\ge 0}\).

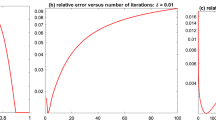

Theorem 3

(Convergence rate of \({\mathcal {R}}_k\)) Suppose that Assumption 1 and 2 (ii) hold. Let \((x^{\infty }, y^{\infty }, z^{\infty })\) be the unique limit point of the sequence \({(x^k,y^k,z^k)}_{k\ge 0}\) and denote \(v^{\infty }:=(x^{\infty }, y^{\infty }, z^{\infty },y^{\infty }, z^{\infty })\), \({\mathcal {E}}_k:={\mathcal {R}}_k-{\mathcal {R}}_{\infty }\) where \({\mathcal {R}}_{\infty }:={\mathcal {R}}(v^{\infty })=\lim _{k\rightarrow \infty } {\mathcal {R}}_k\). If the regularized augmented Lagrangian \({\mathcal {R}}\) defined in (29) satisfies the KŁ property at \(v^{\infty }\) (see Sect. 2.2); that is, there exists an exponent \(\theta \in [0,1)\), \(C_L>0\), and \(\epsilon >0\) such that for all \(v:=(x,y,z,y',z')\) where \(\mathrm{dist}(v,v^{\infty })<\epsilon \) the following inequality holds

Then there exists \(K\ge 1\) such that

where \({{\bar{\alpha }}}>0\). Moreover,

-

(a)

if \(\theta =0\), then \({\mathcal {E}}_k\) converges to zero in a finite number of iterations.

-

(b)

if \(\theta \in (0, \frac{1}{2}]\), then for all \(k\ge K\) it holds

$$\begin{aligned} {\mathcal {E}}_k\le \displaystyle {\frac{ \max \{{\mathcal {E}}_i:1\le i\le K\}}{(1+{{\bar{\alpha }}} {\mathcal {E}}_K^{2\theta -1})^{k-K+1} }}, \end{aligned}$$(50) -

(c)

if \(\theta \in (\frac{1}{2},1)\) then there is a \(\mu >0\) such that for all \(k\ge K\) it holds

$$\begin{aligned} {\mathcal {E}}_{k}\le \Big (\frac{1}{\mu (k-K)+ {\mathcal {E}}_K^{1-2\theta }} \Big )^{\frac{1}{2\theta -1}}, \quad \forall k\ge K. \end{aligned}$$

Proof

By (40), and the use of (5), for any \(k\ge 1\) we have

By Assumption 2(ii), there exists a \(k_0\ge 1\) such that for any \(k\ge k_0\) we have

Combining (51) and (52) leads to

By the assumption \({\mathcal {R}}\) satisfies the Łojasiewicz property at \(v^{\infty }\) where \(v^{\infty }=\lim _{k\rightarrow \infty } v^k\) together with the fact that \(\{{\mathcal {R}}_k\}_{k\ge k_0}\) is monotonically decreasing where \({\mathcal {R}}_{\infty }=\lim _{k\rightarrow \infty } {\mathcal {R}}_k\), then there exist an \(K\ge k_0\), \(\epsilon >0\), \(\theta \in [0,1)\), and \(C_L>0\) such that for all \(k\ge K\) \(\mathrm{dist}(v^k,v^{\infty })<\epsilon \) and

for some \(s^k\in \partial {\mathcal {R}}_k\).Thus raising both sides to the power two gives

This, together with (53) yields (49) with \({{\bar{\alpha }}}=\sigma /{3C_L^2{{\tilde{\rho }}}^2}>0\).

(a) Let \(\theta =0\). If \({\mathcal {E}}_k>0\) for all \(k\ge K\) we would have \(0<{{\bar{\alpha }}} \le {\mathcal {E}}_{k-1}-{\mathcal {E}}_k\). As k approaches infinity, the right hand side approaches zero, which leads to a contradiction. Hence \({\mathcal {E}}_k\) must be equal to zero for some \(k\ge K\). Since \({\mathcal {E}}_k\) is a monotonically decreasing sequence to zero, thus there is a \({{\tilde{k}}}\le K\) such that \({\mathcal {E}}_k=0\) for all \(k\ge {{\tilde{k}}}\).

(b) If \(\theta \in (0,\frac{1}{2}]\), then \(2\theta -1<0\). Let \(k\ge K\) be fixed. The sequence \(\{{\mathcal {E}}_k\}_{k\ge K}\) is monotonically decreasing thus

We rearrange this to obtain

Hence

(c) Let \(\theta \in (\frac{1}{2},1)\). Rearrange (49) to obtain

We let \(h:{\mathbb {R}}_+\rightarrow {\mathbb {R}}\) defined by \(h(s)=s^{-2\theta }\) for \(s\in {\mathbb {R}}_+\). Clearly, h is monotonically decreasing (\(h'(s)=-2\theta s^{-(1+2\theta )}<0\)). Since \({\mathcal {E}}_k\le {\mathcal {E}}_{k-1}\) for all \(k\ge K\) then \(h({\mathcal {E}}_{k-1}) \le h({\mathcal {E}}_{k})\) for all \(k\ge K\). We consider two cases. First, let \(r_0\in (1,+\infty )\) such that

Hence, by (54) we obtain

Note that \(1-2\theta <0\), so rearrange to get \( 0< \frac{{{\bar{\alpha }}}(2\theta -1)}{r_0} \le {\mathcal {E}}_{k}^{1-2\theta }-{\mathcal {E}}_{k-1} ^{1-2\theta }. \) Setting \({{\hat{\mu }}}=\frac{{{\bar{\alpha }}}(2\theta -1)}{r_0}>0\) and \(\nu :={1-2\theta }<0 \) one then can obtain

Next, we consider the case where \(h({\mathcal {E}}_{k}) \ge r_0 h({\mathcal {E}}_{k-1})\),. This yields \({\mathcal {E}}_{k}^{-2\theta } \ge r_0 {\mathcal {E}}_{k-1}^{-2\theta }\). Rearranging this gives \(r_0^{-1}{\mathcal {E}}_{k-1}^{2\theta } \ge {\mathcal {E}}_{k}^{2\theta }\), which by raising both sides to the power \(1/2\theta \) and setting \(q:=r_0^{-\frac{1}{2\theta }}\in (0,1)\) leads to \( q{\mathcal {E}}_{k-1}\ge {\mathcal {E}}_{k}. \) Since \(\nu =1-2\theta <0\), \(q^{\nu } {\mathcal {E}}_{k-1}^{\nu }\le {\mathcal {E}}_{k}^{\nu }\), which follows that

By the fact that \(q^{\nu }-1>0\) and \({\mathcal {E}}_p\rightarrow 0^+\) as \(p\rightarrow \infty \), there exists \({{\bar{\mu }}}\) such that \((q^{\nu }-1) {\mathcal {E}}_{k-1}^{\nu }> {{\bar{\mu }}}\) for all \(k> K\). Therefore we obtain

Choose \(\mu =\min \{{{\hat{\mu }}},{{\bar{\mu }}}\}>0\), one can combine (55) and (56) to obtain

Summing this inequality from \(K+1\) to some \(k\ge K+1\) gives \( \mu (k-K)+ {\mathcal {E}}_K^{\nu } \le {\mathcal {E}}_{k}^{\nu } . \) Hence \( \displaystyle { {\mathcal {E}}_{k}\le ( \mu (k-K)+ {\mathcal {E}}_K^{\nu } )^{1/\nu } =( \mu (k-K)+ {\mathcal {E}}_K^{1-2\theta } )^{1/ (1-2\theta )}.}\) This concludes the proof. \(\square \)

Theorem 4

(Convergence rate of the PL-ADMM sequence) Suppose that the Assumptions 1 and 2(ii) hold, \(u^{\infty }:=(x^{\infty }, y^{\infty }, z^{\infty })\) is the unique limit point of the sequence \(\{(x^k,y^k,z^k)\}_{k\ge 0}\) generated by the PL-ADMM algorithm. If the regularized augmented Lagrangian \({\mathcal {R}}\) (29) satisfies the KŁ property at \(v^{\infty }:=(x^{\infty }, y^{\infty }, z^{\infty },y^{\infty }, z^{\infty })\), then the following assertions hold:

-

(a)

There exists a \(K\ge 1\) such that for all \(k\ge K\) we have

$$\begin{aligned} |||u^k-u^{\infty }|||\le c \max \{ \psi ({\mathcal {E}}_{k}), \sqrt{{\mathcal {E}}_{k-1}} \}, \end{aligned}$$(57)where \(c>0\) constant, \({\mathcal {E}}_k:={\mathcal {R}}_k-{\mathcal {R}}_{\infty }\), \({\mathcal {R}}_{\infty }:=\displaystyle {\lim _{k\rightarrow \infty } {\mathcal {R}}_k}\), and \(\psi :[0,\eta )\rightarrow [0,+\infty )\) where \(\eta >0\) denotes a desingularizing function (see Sect. 2.2).

-

(b)

If \(\psi :[0,\eta )\rightarrow [0,+\infty )\) is defined by \(\psi (s)=s^{1-\theta }\) where \(\eta >0\) and \(\theta \in [0,1)\) then

-

(i)

If \(\theta =0\), then \(u^k\) converges to \(u^{\infty }\) in a finite number of iterations.

-

(ii)

If \(\theta \in (0, \frac{1}{2}]\), then for all \(k\ge K\) it holds

$$\begin{aligned} |||u^k-u^{\infty }||| \le \frac{ \max \{\sqrt{{\mathcal {E}}_i}:1\le i\le K\} }{\sqrt{(1+{{\bar{\alpha }}} {\mathcal {E}}_K^{2\theta -1})^{k-K+1}}},\quad \quad {{\bar{\alpha }}}>0. \end{aligned}$$ -

(iii)

If \(\theta \in (\frac{1}{2},1)\) then

$$\begin{aligned} |||u^k-u^{\infty }||| \le \Big (\frac{1}{\mu (k-K+1)+{{\mathcal {E}}_K}^{1-2\theta }} \Big )^{\frac{1-\theta }{2\theta -1}},\quad \forall k\ge K. \end{aligned}$$

-

(i)

Proof

Part (a). By Assumption 2(ii), let \(k_0\ge 1\) such that the sequence \(\{{\mathcal {E}}_k\}_{k\ge k_0}\) is monotonically decreasing. By (33) and (5) then for all \(k\ge k_0+1\) we have

Since \({\mathcal {R}}_k\) and \(v^k\) converge to \({\mathcal {R}}_{\infty }\) and \(v^{\infty }\) respectively and \({\mathcal {R}}\) satisfies the KŁ property at \(v^{\infty }\), then there exists \(\epsilon >0\), \(\eta >0\), \(\psi :[0,\eta )\rightarrow [0,+\infty )\), and \(K\ge k_0+1\) such that for all \(k\ge K\), we have \(\mathrm{dist}(v^k, v^{\infty }) <\epsilon \) and \({\mathcal {R}}_{\infty }<{\mathcal {R}}_k< {\mathcal {R}}_{\infty }+\eta , \) and the following KŁ property holds

By the concavity of \(\psi \) and (33) we have \( |||\Delta u^{k+1}|||^2 \le \frac{1}{\sigma } \big (\psi ({\mathcal {E}}_{k}) -\psi ({\mathcal {E}}_{k+1})\big ) \cdot \Vert \partial {\mathcal {R}}_k\Vert _-. \) By the arithmetic mean-geometric mean inequality for any \(\gamma >0\) we have

Lemma 12 leads us to

Let \(\gamma >0\) large enough such that \(1>\sqrt{3}{{\tilde{\rho }}}/2\gamma \). Denote \(\delta _0:=1-\frac{\sqrt{3}{{\tilde{\rho }}}}{2\gamma }\), then sum up the latter inequality over \(k\ge K\) to get

Hence by the triangle inequality for any \(k\ge K\) it holds

Use (58) to get

where \( c=\max \Big \{ \frac{\sqrt{3}\gamma }{2\sigma \delta _0}, \frac{3{{\tilde{\rho }}}}{2\gamma \delta _0\sqrt{\sigma }}\Big \}. \)

Part (b). Let \(\theta \in [0,1)\) and \(\psi (s)=s^{1-\theta }\). By part (a) we have

Exploit \(\psi '(s)=(1-\theta )s^{-\theta }\) in (59) to obtain \( {{\mathcal {E}}_k}^{\theta }\le (1-\theta )\Vert \partial {\mathcal {R}}_k\Vert _-,\;\forall k\ge K. \) This implies that \({\mathcal {R}}_k\) satisfies the Łojasiewics (48) at \(v^{\infty }\) for all \(k\ge K\) with \(C_L=1-\theta \).

(i) If \(\theta =0\), by Theorem 3(a) \({\mathcal {E}}_k\) goes to zero in a finite numbers of iterations. Hence by (61) \(u^k\) must converge to \(u^{\infty }\) in a finite number of iterations. (ii) If \(\theta \in (0,\frac{1}{2}]\), then \(\max \{{\mathcal {E}}_{k}^{1-\theta }, \sqrt{{\mathcal {E}}_{k-1}} \} =\sqrt{{\mathcal {E}}_{k-1}}\). By Theorem 3(b) we have

where \({{\bar{\alpha }}} =\sigma /3{{\tilde{\rho }}}^2\). (iii) If \(\theta \in (1/2,1)\), then \(\max \{{\mathcal {E}}_{k-1}^{1-\theta }, \sqrt{{\mathcal {E}}_{k-1}} \} ={\mathcal {E}}_{k-1}^{1-\theta }\). By Theorem 3(c) we have

This completes the proof. \(\square \)

6 Concluding remarks

In this paper, we considered the variable metric proximal linearized ADMM (PL-ADMM) method described in (4) and established its theoretical analysis. This algorithm solves a broad class of linearly constrained nonconvex and nonsmooth optimization problems of the form (1). We proved the algorithm’s convergence by showing that the sequence generated by the PL-ADMM has a finite length, thus it is Cauchy. Under the powerful Kurdyka-Łojasiewicz (KŁ) property, we established the convergence rates for the regularized augmented Lagrangian function value as well as and the PL-ADMM sequence. We showed that various values of KŁ-exponent of the desingularising function associated with the objective function may raise the PL-ADMM with different convergence rates. For instance, we proved three rates for the desingularising function \(\psi (s)=s^{1-\theta }\), with \(\theta \in [0,1)\). If \(\theta =0\), the sequence generated by PL-ADMM converges in a finite numbers of iterations. If \(\theta \in (0,1/2]\), then the sequential rate of convergence is \(cQ^{k}\) where \(c>0\), \(Q\in (0,1)\), and \(k\in {\mathbb {N}}\) is the iteration number. If \(\theta \in (1/2,1)\), then the rate \({\mathcal {O}}(1/k^{r})\) where \(r=(1-\theta )/(2\theta -1)\) is achieved.

References

Ames, B., Hong, M.: Alternating directions method of multipliers for \(\ell _1\)-penalized zero variance discriminant analysis and principal component analysis. Comput. Optim. Appl. 64, 725–754 (2016)

Attouch, H., Bolte, J.: On the convergence of the proximal algorithm for nonsmooth functions involving analytic features. Math. Program. 116, 5–16 (2009)

Attouch, H., Bolte, J., Redont, P., Soubeyran, A.: Proximal alternating minimization and projection methods for nonconvex problems: an approach based on the Kurdyka-Łojasiewicz inequality. Math. Oper. Res. 35, 438–457 (2010)

Attouch, H., Bolte, J., Svaiter, B.: Convergence of descent methods for semi-algebraic and tame problems: proximal algorithms, forward-backward splitting, and regularized gauss-seidel methods. Math. Program. Ser. A 137, 91–129 (2013)

Bai, J., Hager, W. W., Zhang, H.: An inexact accelerated stochastic ADMM for separable convex optimization, arXiv preprint arXiv:2010.12765, (2020)

Bai, J., Han, D., Sun, H., Zhang, H.: Convergence on a symmetric accelerated stochastic admm with larger stepsizes, arXiv preprint arXiv:2103.16154, (2021)

Bai, J., Li, J., Xu, F., Zhang, H.: Generalized symmetric ADMM for separable convex optimization. Comput. Optim. Appl. 70, 129–170 (2018)

Boley, D.: Local linear convergence of the alternating direction method of multipliers on quadratic or linear programs. SIAM J. Optim 23, 2183–2207 (2013)

Bolte, J., Daniilidis, A., Lewis, A.: The Łojasiewicz inequality for nonsmooth subanalytic functions with applications to subgradient dynamical systems. SIAM J. Optim 17, 1205–1223 (2006)

Bolte, J., Daniilidis, A., Ley, M., Mazet, L.: Characterizations of Łojasiewicz inequalities: Subgradientflows, talweg, convexity. Trans. Am. Math. Soc. 362, 3319–3363 (2010)

Bolte, J., Sabach, S., Teboulle, M.: Proximal alternating linearized minimization for nonconvex and nonsmooth problems. Math. Program. 146, 459–494 (2014)

Bolte, J., Daniilidis, A., Shiota, M.: Clarke subgradients of stratifiable functions. SIAM J. Optim. 18, 556–572 (2007)

Boţ, R., Nguyen, D.: The proximal alternating direction method of multipliers in the nonconvex setting: Convergence analysis and rates. Math. Oper. Res. 45, 682–712 (2020)

Boyd, S., Parikh, N., Chu, E., Peleato, B., Eckstein, J.: Distributed optimization and statistical learning via the alternating direction method of multipliers. Found. Trends Mach. Learn. 3, 1–122 (2010)

Cai, X., Han, D., Yuan, X.: The direct extension of ADMM for three-block separable convex minimization models is convergent when one function is strongly convex, http://www.optimization-online.org, 2013 (2015)

Chen, C., He, B., Yuan, X., Ye, Y.: The direct extension of ADMM for multi-block convex minimization problems is not necessarily convergent. Math. Program. 55, 57–79 (2016)

Chen, C., Shen, Y., You, Y.: On the convergence analysis of the alternating direction method of multipliers with three blocks. Abstr. Appl. Anal. 2015, 1–7 (2013)

Chen, Y., Hager, W.W., Yashtini, M., Ye, X., Zhang, H.: Bregman operator splitting with variable stepsize for Total Variation image reconstruction. Comput. Optim. Appl. 54, 317–342 (2013)

Cirik, A.C., Balasubramanya, N.M., Lampe, L.: Multi-user detection using ADMM-based compressive sensing for uplink grant-free noma. IEEE Wirel. Commun. Lett. 7, 46–49 (2017)

Dai, Y., Han, D., Yuan, X., Zhang, W.: A sequential updating scheme of Lagrange multiplier for separable convex programming. Math. Comput. 86, 315–343 (2017)

Davis, D., Yin, W.: A three-operator splitting scheme and its optimization applications. Set-Valued Var. Anal. 25, 829–858 (2017)

Deng, W., Lai, M., Peng, Z., Yin, W.: Parallel multi-block ADMM with \(o(1/k)\) convergence. J. Sci. Comput. 71, 712–736 (2017)

Deng, W., Yin, W.: On the global and linear convergence of the generalized alternating direction method of multipliers. J. Sci. Comput. 66, 889–916 (2015)

Dhar, S., Yi, C., Ramakrishnan, N., Shah, M.: ADMM based scalable machine learning on spark, In 2015 IEEE International Conference on Big Data (Big Data), IEEE, (2015), pp. 1174–1182

Douglas, J., Rachford, H.: On the numerical solution of the heat conduction problem in 2 and 3 space variables. Trans. Am. Math. Soc. 82, 421–439 (1956)

Eckstein, J., Bertsekas, D.: On the Douglas-Rachford splitting method and the proximal point algorithm for maximal monotone operators. Math. Program. 55, 293–318 (1992)

Fan, J.: Comments on wavelets in statistics: a review by a antoniadis. J. Ital. Stat. Soc. 6, 131–138 (1997)

Forero, P.A., Cano, A., Giannakis, G.B.: Distributed clustering using wireless sensor networks. IEEE J. Sel. Topics Signal Process. 5, 707–724 (2011)

Frankel, P., Garrigos, G., Peypouquet, J.: Splitting methods with variable metric for Kurdyka-Łojasiewicz functions and general convergence rates. J. Optim. Theory Appl. 165, 874–900 (2015)

Gabay, D., Mercier, B.: A dual algorithm for the solution of nonlinear variational problems via finite element approximations. Comput. Math. Appl. 2, 17–40 (1976)

Glowinski, R., Marroco, A.: Sur l’approximation, par éléments finis d’ordre un, et la résolution, par pénalisation-dualité d’une classe de problèmes de dirichlet non linéaires. ESAIM Math Modell Numer Anal Modélisation Mathématique et Analyse Numérique 9, 41–76 (1975)

Goldfarb, D., Ma, S.: Fast multiple-splitting algorithms for convex optimization. SIAM J. Optim. 22, 533–556 (2012)

Goldfarb, D., Ma, S., Scheinberg, K.: Fast alternating linearization methods for minimizing the sum of the two convex functions. Math. Program. 141, 349–382 (2013)

Goldstein, T., O’Donoghue, B., Setzer, S., Baraniuk, R.: Fast alternating direction optimization methods. SIAM. J. Imag. Sci. 7, 1588–1623 (2014)

Guo, K., Han, D., Wu, T.: Convergence of alternating direction method for minimizing sum of two nonconvex functions with linear constraints. Int. J. Comput. Math 94, 1653–1669 (2017)

Hager, W.W., Ngo, C., Yashtini, M., Zhang, H.: Alternating direction approximate Newton (ADAN) algorithm for ill-conditioned inverse problems with application to parallel MRI. J. Oper. Res. Soc. China 3, 139–162 (2015)

Hager, W.W., Yashtini, M., Zhang, H.: An \({O}(1/k)\) convergence rate for the variable stepsize Bregman operator splitting algorithm. SIAM J. Numer. Anal. 53, 1535–1556 (2016)

Han, D., Yuan, X.: A note on the alternating direction method of multipliers. J. Optim. Theory Appl. 155, 227–238 (2012)

He, B., Hou, L., Yuan, X.: On full Jacobian decomposition of the augmented lagrangian method for separable convex programming. SIAM J. Optim. 25, 2274–2312 (2015)

He, B., Tao, M., Xu, M., Yuan, X.: Alternating directions based contraction method for generally separable linearly constrained convex programming problem, http://www.optimization-online.org, (2010)

He, B., Tao, M., Yuan, X.: Alternating direction method with Gaussian back substitution for separable convex programming. SIAM J. Optim. 22, 313–340 (2012)

He, B., Tao, M., Yuan, X.: Convergence rate and iteration complexity on the alternating direction method of multipliers with a substitution procedure for separable convex programming. Math. Oper. Res. 42, 662–691 (2017)

He, B., Yuan, X.: On the O(1/n) convergence rate of the Douglas-Rachford alternating direction method. SIAM. J. Numer. Anal. 2, 700–709 (2012)

He, B., Yuan, X.: On non-ergodic convergence rate of the Douglas-Rachford alternating direction method of multipliers. Numerische Mathematik 130, 567–577 (2014)

Hong, M., Luo, Z.: On the linear convergence of the alternating direction method of multipliers. Math. Program. 162, 165–199 (2017)

Hong, M., Luo, Z., Razaviyayn, M.: Convergence analysis of alternating direction method of multipliers for a family of nonconvex problems. SIAM J. Optim. 26, 337–364 (2016)

Jiang, B., Lin, T., Ma, S., Zhang, S.: Structured nonconvex and nonsmooth optimization: algorithms and iteration complexity analysis. Comput. Optim. Appl. 72, 115–157 (2019)

Li, G., Pong, T.K.: Global convergence of splitting methods for nonconvex composite optimization. SIAM J. Optim. 25, 2434–2460 (2015)

Liégeois, R., Mishra, B., Zorzi, M., Sepulchre, R.: Sparse plus low-rank autoregressive identification in neuroimaging time series, In 2015 54th IEEE Conference on Decision and Control (CDC), IEEE, (2015), pp. 3965–3970

Lin, F., Fardad, M., Jovanovic, M.R.: Design of optimal sparse feedback gains via the alternating direction method of multipliers. IEEE Trans. Automat. Control 58, 2426–2431 (2013)

Lin, T., Ma, S., Zhang, S.: On the sublinear convergence rate of multi-block ADMM. J. Oper. Res. Soc. China 3, 251–274 (2015)

Lin, T., Ma, S., Zhang, S.: Global convergence of unmodified 3-block ADMM for a class of convex minimization problems. J. Sci. Comput. 76, 69–88 (2018)

Lions, P.L., Mercier, B.: Splitting algorithms for the sum of two nonlinear operators. SIAM J. Numer. Anal. 16, 964–979 (1979)

Liu, Q., Shen, X., Gu, Y.: Linearized ADMM for nonconvex nonsmooth optimization with convergence analysis. IEEE Access 7, 76131–76144 (2019)

Liu, Y., Shang, F., Liu, H., Kong, L., Licheng, J., Lin, Z.: Accelerated variance reduction stochastic ADMM for large-scale machine learning, IEEE Trans. Pattern Anal. Mach. Intell., (2020)

Lu, C.: A library of ADMM for sparse and low-rank optimization. Methodology 68, 49–67 (2006)

Melo, J.G., Monteiro, R.D.: Iteration complexity of a linearized proximal multiblock ADMM class for linearly constrained nonconvex optimization problems, http://www.optimization-online.org, (2017)

Peaceman, D., Rachford, H.: The numerical solution of parabolic elliptic differential equations. SIAM J. Appl. Math. 3, 28–41 (1955)

Rockafellar, R.T., Wets, R.: Variational analysis, vol. 317. Grundlehren der Mathematischen Wissenschaften, Springer, Berlin (1998)

Shen, Y., Wen, Z., Zhang, Y.: Augmented Lagrangian alternating direction method for matrix separation based on low-rank factorization. Optim. Methods Soft. 29, 239–263 (2014)

Themelis, A., Patrinos, P.: Douglas-Rachford splitting and ADMM for nonconvex optimization: tight convergence results. SIAM J. Optim. 30, 149–181 (2020)

Wang, F., Can, W., Xu, Z.: Convergence of multi-block Bregman ADMM for nonconvex composite problems. Sci. China Inf. Sci. 61, 12210:11-122101:12 (2018)

Wang, Y., Yin, W., Zeng, J.: Global convergence of ADMM in nonconvex nonsmooth optimization. J. Sci. Comput. 78, 29–63 (2019)

Wen, Z., Yang, C., Liu, X., Marchesini, S.: Alternating direction methods for classical and ptychographic phase retrieval. Invers. Probl. 28, 1–18 (2012)

Wu, C., Tai, X.-C.: Augmented Lagrangian method, dual methods, and split Bregman iteration for ROF, vectorial TV, and high order models. SIAM J. Imag. Sci. 3, 300–339 (2010)

Yang, J., Zhang, Y., Yin, W.: A fast alternating direction method for TVL1-L2 signal reconstruction from partial Fourier data. IEEE J. Sel. Topics Signal Process. 4, 288–297 (2010)

Yang, L., Pong, T., Chen, X.: Alternating direction method of multipliers for a class of nonconvex and nonsmooth problems with applications to background/foreground extraction. SIAM J. Imag. Sci. 10, 74–110 (2017)

Yang, Y., Sun, J., Li, H., Xu, Z.: ADMM-Net: A deep learning approach for compressive sensing MRI, arXiv preprint arXiv:1705.06869, (2017)

Yang, Y., Sun, J., Li, H., Xu, Z.: ADMM-CSNet: a deep learning approach for image compressive sensing. IEEE Trans. Pattern Anal. Mach. Intell. 42, 521–538 (2018)

Yashtini, M.: Multi-block nonconvex nonsmooth proximal ADMM: convergence and rates under Kurdyka-Łojasiewicz property. J. Optim. Theory Appl. 190, 966–998 (2021)

Yashtini, M., Hager, W. W., Chen, Y., Ye, X.: Partially parallel MR image reconstruction using sensitivity encoding, In 2012 IEEE International Conference on Image Processing, Orlando, (2012), IEEE, pp. 2077–2080

Yashtini, M., Kang, S.H.: Alternating direction method of multipliers for Euler’s elastica-based denoising, SSVM 2015. LNCS 9087, 690–701 (2015)

Yashtini, M., Kang, S.H.: A fast relaxed normal two split method and an effective weighted TV approach for Euler’s Elastica image inpainting. SIAM J. Imag. Sci. 9, 1552–1581 (2016)

Yashtini, M., Kang, S.H., Zhu, W.: Efficient alternating minimization methods for variational edge-weighted colorization models. Adv. Comput. Math. 45, 1735–1767 (2019)

Yuan, X., Zeng, S., Zhang, J.: Discerning the linear convergence of ADMM for structured convex optimization through the lens of variational analysis. J. Mach. Learn. Res. 21, 1–75 (2020)

Zhang, C.: Nearly unbiased variable selection under minimax concave penalty. Ann. Stat. 38, 894–942 (2010)

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

About this article

Cite this article

Yashtini, M. Convergence and rate analysis of a proximal linearized ADMM for nonconvex nonsmooth optimization. J Glob Optim 84, 913–939 (2022). https://doi.org/10.1007/s10898-022-01174-8

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10898-022-01174-8