Abstract

Complex software development projects rely on the contribution of teams of developers, who are required to collaborate and coordinate their efforts. The productivity of such development teams, i.e., how their size is related to the produced output, is an important consideration for project and schedule management as well as for cost estimation. The majority of studies in empirical software engineering suggest that - due to coordination overhead - teams of collaborating developers become less productive as they grow in size. This phenomenon is commonly paraphrased as Brooks’ law of software project management, which states that “adding manpower to a software project makes it later”. Outside software engineering, the non-additive scaling of productivity in teams is often referred to as the Ringelmann effect, which is studied extensively in social psychology and organizational theory. Conversely, a recent study suggested that in Open Source Software (OSS) projects, the productivity of developers increases as the team grows in size. Attributing it to collective synergetic effects, this surprising finding was linked to the Aristotelian quote that “the whole is more than the sum of its parts”. Using a data set of 58 OSS projects with more than 580,000 commits contributed by more than 30,000 developers, in this article we provide a large-scale analysis of the relation between size and productivity of software development teams. Our findings confirm the negative relation between team size and productivity previously suggested by empirical software engineering research, thus providing quantitative evidence for the presence of a strong Ringelmann effect. Using fine-grained data on the association between developers and source code files, we investigate possible explanations for the observed relations between team size and productivity. In particular, we take a network perspective on developer-code associations in software development teams and show that the magnitude of the decrease in productivity is likely to be related to the growth dynamics of co-editing networks which can be interpreted as a first-order approximation of coordination requirements.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Most of today’s software projects are so complex that they cannot be developed by a single developer. Instead, potentially large teams of software developers need to collaborate. This necessity of collaboration in large teams raises a simple, yet important question: How productive is a team of developers compared to a single developer? Or, in other words: How much time do n developers need to finish a software project compared to the time taken by a single developer? This question is of significant importance not only for project management but also for the development of reasonable cost estimation models for software engineering processes. One may naively assume that the productivity of individual team members is additive, i.e., that, compared to the time taken by a single developer, a team of n developers will speed up the development time by a factor of n. However, this naive perspective misses out two important factors that can give rise to a non-additive scaling of productivity.

First, the collaboration of developers in a team can give rise to synergy effects, which result in the team being more productive than one would expect from simply adding up the individual productivities of its members. Under this assumption, the average output per team member can be increased by simply adding developers to the team, a fact that has recently been related to Aristotle’s quote that “the whole is more than the sum of its parts” (Sornette et al. 2014). A second, contrary factor that influences the productivity of software development teams is the communication and coordination overhead which is becoming more pronounced as teams grow larger. This increasing overhead can impact productivity and it has thus been discussed extensively in the software engineering and project management literature. In particular, it has been argued that coordination issues can lead to situations where the average output per team member decreases as the size of the team is increased. Studies showing that growing team sizes negatively affect productivity can be traced back to early studies of Maximilian Ringelmann, and the effect has accordingly be named the “Ringelmann effect” (Ringelmann 1913). In the context of software engineering, it can be related to Brooks’ law of software project management, which states that “adding manpower to a late software project makes it later”, and Brooks rejects the idea of additive, or even super-additive productivity by adding that “nine women can’t make a baby in one month” (Brooks 1975).

Apart from very few exceptions, there is a broad consensus in the software engineering literature that the size of software development teams, both in traditional closed source and open source environments negatively affects the average productivity. However, quantitative evidence for this fact that would go beyond small-scale case studies or surveys is relatively sparse. At the same time, there is significant confusion over the question which quantitative indicators can reasonably be used to measure the productivity of software development teams or individual developers.

Using a large-scale data set covering the full history of 58 Open Source Software (OSS) projects hosted on the social coding platform GitHub, in this paper we quantitatively address the question of how the size of a software development team is related to productivity. Based on a time-slice analysis of more than 580,000 commit events over a period of more than 14 years, we analyse the output of projects in terms of code and study how their time-varying productivity relates to the number of active software developers. The contributions of our empirical analysis are as follows:

-

1.

Using the distribution of inter-commit times, we first identify reasonable time windows for the definition of team size and the analysis of commit activities in OSS projects.

-

2.

Based on a microscopic, textual analysis of commit contents, we measure the contributions of individual commits and highlight their huge variance. By this, we quantitatively prove that the mere number of commits should not be used as a reasonable measure of productivity, and that an analysis of actual commit content is needed.

-

3.

We define a more reasonable measure for the contribution of developers which is based on the so-called Levenshtein edit distance (Levenshtein 1966) between consecutive versions of source code files.

-

4.

Using this fine-grained measure of code contributions, we quantitatively show that in all of the studied OSS projects the average productivity of developers decreases as the team size increases, thus providing quantitative evidence for the Ringelmann effect.

-

5.

Addressing possible mechanisms behind the Ringelmann effect, we finally take a network perspective on evolving coordination structures that is based on a fine-grained analysis of diffs between commits occurring within certain time windows. In particular, using the association between source code regions and developers, we construct time-evolving co-editing networks. These networks can be seen as language-independent first-order approximations for coordination structures that can be constructed solely based on widely available repository data.

-

6.

Finally, we analyse the growth dynamics of co-editing networks constructed from repository data. For all projects in our data set, we observe a super-linear growth of co-editing networks, which can be seen as one potential mechanism behind the observed Ringelmann effect.

Using a large and open data set, our study validates the common assumption in software engineering that the size of a team negatively affects the average productivity of its members. This highlights the fact that, possibly due to the duplication of efforts, increasing coordination overhead, as well as decreasing accountability, software development projects represent diseconomies of scale.

We argue that both our results as well as our methodology are useful to refine and calibrate existing software development cost models based on empirical data from software development repositories. Our investigation of commit numbers and the size distribution of commits further calls for a cautious use of commit-based productivity measures, since their naive application can easily yield erroneous results.

The remainder of this paper is structured as follows. In the following Section 2 we carefully review the extensive body of literature on team productivity which has been published in software engineering, but also in fields such as sociology, social psychology and organizational theory. We then continue by providing a detailed description of our data set, our time-slice analysis and our measure for productivity in Section 3. Empirical results on the scaling of productivity are presented and discussed in Section 4. Investigating potential mechanisms behind the observed scaling relations, in Section 5 we take a network perspective on the association between developers and edited source code regions in OSS projects. Having discussed potential threats to validity and future work in Section 6, we conclude our paper in Section 7.

2 Background and Related Work

We start our investigation by a review of the existing body of literature on productivity factors in software development projects. Rather than limiting our focus on the field of empirical software engineering, we additionally review works studying how the size of teams, groups or organizations affects their performance which have been completed in fields such as sociology, social psychology, management science or organizational theory.

In what has since been named one of the foundational experiments of social psychology, the French agricultural engineer Maximilian Ringelmann (Kravitz and Martin 1986; Ringelmann 1913) quantitatively studied the efficiency of a group of human workers jointly pulling on a rope. He showed that the individual performance decreased with increasing group size, an effect later termed as “Ringelmann effect” in social psychology (Ingham et al. 1974). Ringelmann attributed the effect mainly to the increasingly challenging coordination in larger groups. He additionally highlighted the potential influence of social factors that may affect the motivation of individual group members (Steiner 1972). These social factors have since been investigated in detail in a number of studies from psychology, organizational theory and management science. Here, the tendency of group members to spend less effort in larger groups has been named “free-riding”, the “sucker effect” or “social loafing” (Shepperd 1993). It is generally attributed to situations characterized by a lack of individual accountability and shared responsibility (Latane et al. 1979; Williams et al. 1981; Williams and Karau 1991; Wagner 1995) and it has been shown to be a robust phenomenon in various contexts and - with varying strength - across cultures (Yetton and Bottger 1983; Jackson and Harkins 1985; Earley 1989; Karau and Williams 1993, 1995; Chidambaram and Tung 2005; Shiue et al. 2010.)

Notably, both the coordination challenges and the motivational factors which have been investigated as potential mechanisms behind the Ringelmann effect, play an important role in software development. Especially in large and fluid teams with no fixed assignment of responsibilities, based on findings from social psychology, the lack of accountability could potentially negatively affect the amount of contributions. Furthermore, complex collaborative tasks such as software development entail a need for coordination which is likely to become more challenging as the size of the team increases.

If and how these factors affect the productivity of software development teams has been studied extensively since the early days of empirical software engineering research. According to Brooks’ law of software project management (Brooks 1975), the size of a development team negatively affects its productivity. Brooks’ rationale behind this “law” is based on two main factors: (i) the significant “ramp-up” time needed by new developers before they become productive, and (ii) the super-linear coordination cost which is due to the quadratic scaling of the number of possible communication channels.

These intuitive arguments have been substantiated by a a number of empirical studies showing that the size of the development team negatively affects software development productivity. Based on a survey with 77 software developers from eleven software companies, Paiva et al. (2010) argue that developers assess the size of a development team as one of those factors that have the strongest negative impact on productivity. Blackburn et al. (1996) empirically studied software development projects in Japan, the United States and Western Europe across a time period of four years. The authors found a negative correlation between the productivity of software development teams and their size, referring to the phenomenon as the “productivity paradox”. Using data on 99 software projects of the European Space Agency with a total of more than 5 Million lines of code, Maxwell et al. (1996) studied a number of factors influencing productivity. The authors found that productivity significantly decreases with increasing team size and argue that this “is probably due to the coordination and communication problems that occur as more people work on a project.” (Maxwell et al. 1996).

A first step to investigate the coordination cost associated with larger development teams was taken by works studying how work is distributed among team members. Using the Open Source projects Apache httpd and Mozilla Firefox as case studies, Mockus et al. (2000) and (2002) found that a small core of developers is responsible for the vast majority of code changes. They further argue that in OSS projects “communication and coordination overhead issues […] typically limit the size of effective teams to 10-15 people” (Mockus et al. 2000). The finding that the majority of code is developed by a small core group of developers has been validated in a number of empirical studies studying the distribution of code distributions (Lerner and Tirole 2002; German 2006).

A number of further works have studied the question of how coordination costs affect software development productivity. Analyzing the congruence between coordination patterns and coordination needs in software development teams, the fact that a lack of coordination negatively affects software development productivity was shown in (Cataldo et al. 2008; Cataldo and Herbsleb 2013). The scaling of coordination costs and productivity in OSS projects was studied by Adams et al. (2009), finding a non-linear scaling that is in line with earlier studies suggesting an optimum team size. In particular, the authors identify a first regime with comparable coordination effort for development teams consisting of less than ten developers. A second regime in which the growing number of developers does not lead to a proportionate increase of coordination efforts is likely due to the emergence of modular team structures which mitigate coordination efforts. A third regime is identified beyond a critical team size, in which coordination efforts quickly increase in a super-linear fashion. The negative impact of coordination requirement observed in empirical studies has finally been integrated in a number of cost estimation models, such as the Constructive Cost Model (COCOMO) or its successor COCOMO II (Boehm 1984; Boehm et al 2000), which are commonly used in project management.

Apart from social psychology and empirical software engineering, management science and organizational theory are two further fields of study which have extensively addressed the question of how the productivity in a project is related to its size. Here, the question how the productivity, or cost, of software projects is related to their size is commonly rephrased in terms of whether software projects are economies or diseconomies of scale (Stigler 1958). This question has been addressed from different perspectives.

Measuring project size in terms of the size of the code base and productivity in terms of person hours, Premraj et al. (2005) studied more than 600 software projects from Finnish companies, finding no significant evidence for a non-linear relation between size of the code base and productivity. A series of other works has argued that there exist both economies and diseconomies of scale in the context of software development (Banker and Kemerer 1989; Banker et al. 1994; Banker and Slaughter 1997; Banker and Kauffman 2004). Notably, evidence for economies of scale has predominantly been found in software maintenance projects, which has been related to the possibility of batching modification requests (Banker and Slaughter 1997). In a large-scale study comprising more than 4,000 industrial software development projects, Comstock et al. (2011) studied the relation between team size and the effort required to complete a project. Consistent with Brooks’ law, they find that the time taken to develop a software does not decrease proportionally with team size, concluding that “doubling the team size does not halve the time required” (Comstock et al. 2011). In line with earlier works showing a non-linear and non-monotonous relationship between team size and coordination costs (Adams et al. 2009), they further argue that there exists an optimum team size that depends on project characteristics like project size as well as other productivity parameters.

Due to the availability of fine-grained data containing project variables like cost, effort, personnel and project performance, as well as the need for cost estimation models, the majority of studies on software productivity, including the large-scale study performed by Comstock et al. (2011), have studied industrial, and thus mainly closed-source software projects. Fewer studies in management science have specifically addressed Open Source Software communities, addressing the open question whether OSS projects are economies or diseconomies of scale (von Krogh et al. 2003). Taking an economic perspective and defining productivity as the real value contributed per employee, Harison and Koski (2008) compared the productivity of Open Source Software firms with those of companies developing proprietary solutions. While they did not study productivity in terms of code production, the authors find employees in OSS-based companies to be less productive from an economic point of view. Disagreeing with studies showing diminishing returns to scale prevalent in the software engineering literature, Sornette et al. (2014) argued that the productivity of OSS communities increases with team size in a super-linear fashion. Interpreting “commits” as “commitment”, the authors define the productivity of a project as the total number of commits made within five day time windows and find that a large number of contributors facilitates bursts of commit activity.

Summarising the large body of interdisciplinary works outlined above, we conclude that there is broad consensus in the software engineering community that increasing the size of software development teams - at least beyond certain, comparably small numbers - negatively affects productivity. At the same time, there is an ongoing debate of whether Open Source Software projects are economies or diseconomies of scale, results critically depending on (i) the type of software projects considered (i.e., software development vs. maintenance projects), and (ii) the operationalization of project size and productivity. Regarding the second aspect, it is important to note that recent results on a super-linear scaling of productivity in OSS communities have been obtained using a productivity measure that is based on the total number of commits, thus neglecting the actual commit contribution in terms of code.

Contributing to this debate, in our work we first quantitatively show that this approach of measuring productivity introduces a systematic error which results in an overestimation of productivity that is due to (i) the skewed commit size distribution, and (ii) basic software engineering and collaboration practices such as “commit early commit often” which are likely to result in commit sizes becoming smaller as teams grow in size. Defining a measure of productivity that encompasses both commits and - based on a fine-grained textual analysis - the actual contents of these commits, we show that Open Source Software Communities represent diseconomies of scale, in which the average productivity of developers decreases as the team size grows. With this work, we contribute to the ongoing discussion on the scaling of productivity in software development projects, showing that earlier results on the presence of the Ringelmann effect in industrial software projects also extend to Open Source Communities. Our study further highlights the importance for complex systems and data science studies to take into account basic principles of collaborative software engineering, thus contributing to a knowledge exchange between the disciplines of complex systems, management science, and empirical software engineering.

3 Methodology

We study the research questions outlined above using a large-scale data set collected from the collaborative software development platform GitHub. It offers a free, web-based hosting service for Open Source Software repositories centered around the distributed version control system Git. With more than 5 Million developers collaborating on more than 10 Million repositories, GitHub has recently become the most popular software project hosting platform in the world (Gousios et al. 2014). This popularity is partly due to the fact that, in addition to a mere version control of source code, GitHub offers a number of social features such as issue tracking, a wiki system and team management which support collaboration and coordination. A feature that makes GitHub particularly popular in the Open Source community is the ability to easily fork the repositories of others. In a nutshell, this allows users to copy the source code of existing projects into their own repository, instantly enabling them to modify and advance the code base without the need to coordinate the changes with the original developers. Changes that may be useful for others can then be propagated back to the original repository via a so-called pull request, i.e., a request sent to the maintainers of the root repository to pull the changes from the forked repository and merge them into the main code base.

3.1 Data Set

For our study, we collected the full commit history of 58 OSS projects from the publicly available GitHub API.Footnote 1 The initial choice of projects was based on the following criteria: (i) the project should be among the 100 most frequently forked projects, (ii) it should still be active, showing commit activity in the week when the data were collected, (iii) there should be at least 50 different active developers across the whole project history, and (iv) there should be at least one year of activity. The data on these 58 projects amount to a total of 581,353 commits contributed by 30,845 different users, with an average of 10,023 commits and 531 unique developers per project. A detailed summary of our choice of projects, the programming language used, the time period covered, as well as the number of commits and developers is shown in Table 3 in the supplementary information (SI).

In the Git version control system the development history of a project consists of a tree of commits, with the first commit representing the root and each subsequent commit storing a parent pointer to the commit immediately preceding it. In this way, starting from the most recent commit of a project, the whole development history can be traced back by following the parent pointers. The GitHub API returns information about each commit and the corresponding Git tree. More specifically, for each commit our data set includes:

-

an SHA hash uniquely identifying the commit

-

the name and email of the developer who authored the commit

-

the time stamp of the commit (with a resolution of seconds)

-

the list of files changed by this commit

-

the diffs of all files changed by the commit, which allows to reconstruct the precise changes to the source code at the level of individual characters

-

the parent pointer (SHA hash) of the preceding commit

When project maintainers merge pull requests (i.e., code authored by others) from forked repositories, a special merge commit can be created in the commit tree. We exclude such commits from the analysis as they would wrongly attribute the diffs to the maintainer who executed the merge and not to the developer who authored the pull request. For technical details, we refer the reader to Section ?? in the Appendix.

We make both the raw and the preprocessed data used in our study freely available (Scholtes et al. 2015). In the following we provide a detailed description of how we use this data set to analyse the relation between the size of a software development team and its productivity.

3.2 Measuring Team Size

Understanding the relation between team size and productivity first requires a reasonable definition for the size of the development team of an OSS project. Being informal and fluid social organisations, the simple question who belongs to the development team of an Open Source project at a given point in time is not trivial to answer since there is - in general - no “formalized” notion of who is a member of the project. This loose notion of team membership is particularly pronounced in GitHub, since the integration of pull requests allows users to contribute code without the need for a prior procedure of obtaining commit rights in the repository.

Using time-stamped commit data, a first naive approach to study team size could be based on the analysis of activity spans of developers, i.e., taking the first and last commit of each developer to the project and considering them as team members in between the time stamps of those two commits. However, this simple approach generates several problems: First, developers may leave the project, be inactive for an extended period of time and then rejoin the project later. Secondly, our analysis is necessarily based on a finite observation period. Close to the end of this observation period, the naive approach outlined above will wrongly consider that developers left the project after their last commit, even though in reality many of them are likely to commit again in the future. Finally, Open Source Software projects feature a large number of one-time contributors who - using this simple approach - will not be considered as project members even though they both contribute to the development and impose coordination costs. Figure 14 in the SI shows that this is indeed the case for our data set, where more than 40 % of all commits in the majority of the projects have been contributed by one-time contributors.

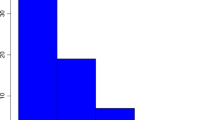

We avoid these problems by taking into account the intermittent nature of developer activities. In particular, for each individual developer we analyse his/her whole commit history and calculate the maximum time of inactivity between any two consecutive commits.Footnote 2 Doing this for all developers yields a maximum inactivity time distribution, which allows us to estimate the probability with which a developer who has been inactive for a certain time will commit again in the future. The left panel of Fig. 1 Footnote 3 shows the histogram of maximum inactivity times of all developers, aggregated across all projects. The right panel shows the complementary cumulative distribution function (ccdf) of the distribution. Here, we observe that 90 % of all consecutive commits occur within time periods of less than 295 days. In other words, the chance of a developer committing again after having been inactive for more than 295 days is less than 10 %.

Based on this finding, we utilize a a sliding window with a size of 295 days to define the size of the development team at any given time t.Footnote 4 Precisely, at time t we define the team T t of a project to consist of all developers who have committed at least once within the time window [t−δ team,t] of window size δ team.

3.3 Measuring Software Development Productivity

Apart from a reasonable definition of the development team of a project, a second major building block of our study is the definition of software development productivity. In general, the productivity of an individual or an organization is defined as the output produced per time unit. In our study we exclusively focus on the output in terms of source code artifacts produced by software developers. A number of earlier studies have used similar approaches to study the productivity of software development teams based on data from software repositories: The simplest possible approach to measure productivity is to calculate the total number of commits in a given time period (Adams et al. 2009; Sornette et al. 2014). However, this approach introduces multiple problems: First and foremost, a number of prior studies have shown that the size of commits follows a highly skewed distribution (Robles et al. 2004; Hindle et al. 2008; Alali et al. 2008; Arafat and Riehle 2009; Hofmann and Riehle 2009), arguing that it is typically a very small number of large commits which are fundamentally important in the evolution of software, while the majority of commits are minor contributions. As such, the size of a commit has to be taken into account when measuring the productivity of developers in software projects.

Even more important for the purpose of our study, using the total number of commits introduces a systematic error when studying the relation between the number of developers in a development team and the total number of commits: First, the total number of commits contributed by n developers active in a given time period cannot - by definition - be less than n, which is why the total number of commits must scale at least linearly with team size. Secondly, due to basic principles guiding the use of revision control systems, developers in larger development teams tend to avoid generating conflicts by splitting their contributions in increasingly atomic changes, a strategy commonly paraphrased as “commit early, commit often”.

From the arguments above, we conclude that the mere number of commits of a developer is not a good proxy for the developer’s contribution, unless the size of those commits is taken into account. In our study we thus leverage the fact that our data set contains not only the occurrence of commits, but also the associated diff records which allow us to analyse the contents of commits.

Several methods can be used to turn this information into a quantitative measure of productivity. A simple approach is to use diff records in order to calculate the number of lines changed in a file, then calculating the commit size as the total number of lines changed in all affected files (Gousios et al. 2008). This methods is a significant improvement over using the mere number of commits. However, it still suffers from the fact that not all line changes are equal. In particular, following this approach, commenting out 100 lines of source code is seen as the same contribution as writing those 100 lines in the first place. For this reason, other standard measures of software development productivity take into account the semantics by extracting so-called function points (Albrecht 1979), i.e., larger units of source code that are directly related to some functionality of the software. Since the source code needs to parsed in order to extract these function points, this approach necessarily depends on the programming language and does not allow for an easy comparison between projects developed in different languages.

Our data set contains projects written in a mix of different programming languages (see Table 3 for details), which requires us to quantify productivity in a language-independent way. Rather than counting commits or the changed lines of code, we take a fine-grained approach which captures the changes of the source code at the level of individual characters. Precisely, for each commit we extract the diff records of all affected files, which allows us to unambiguously reconstruct the source code before and after the commit. We then calculate the Levenshtein edit distance (Levenshtein 1966) between these versions of the source code. The Levenshtein distance captures the number of single-character deletions, insertions and substitutions required to change the version before the commit into the version after the commit. For each of the more than 580,000 commits in our data set, we then sum the Levenshtein edit distances of the files affected by a commit, defining this sum as the commit contribution.

Apart from taking into account the size, or contribution, of commits, another important aspect in the analysis of time-stamped commit data is that productivity evolves in time. As such, we need to be able to define the productivity of a project within a particular time window. In order to define the productivity P t at time t, we consider all commits occurring within a window [t−δ prod,t] of window size δ prod. On the one hand, the time window should be long enough to contain a sufficiently large number of commits. On the other hand, the time window should be short enough such that it allows us to get an idea about the instantaneous productivity at, or around, time t. Balancing these two requirements, we can again address the optimum choice of the time window by studying the activity patterns of developers.

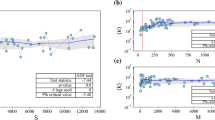

Here, for each project we analyze the time line of all commits (i.e., from any developer) and we calculate the time difference between any two consecutive commits. Doing this for all commits in all projects, we obtain the inter-commit time distribution. This helps us to understand how likely it is that multiple commits fall within a time window of a particular size. The histogram of inter-commit times is shown in the left panel of Fig. 2. As evident from the figure, the time difference between two consecutive commits varies over several orders of magnitude, with pronounced peaks around time differences of 30 minutes (i.e., ≈ 0.02 days) as well as around time differences of one day. The right panel of Fig. 2 shows the complementary cumulative distribution function (ccdf) of the inter-commit time distribution. From this distribution, we can deduce that in 90 % of the cases the time difference between consecutive commits of a project is less than 6 days.

Furthermore, we expect a strong weekly periodicity in commit activity due to the effect of workdays and weekends. To ensure that this effect is equally pronounced in all time windows, we choose the window size as a multiple of 7 days, i.e., δ prod=7.

With the so defined productivity time window, in Fig. 3 we display the relation between development productivity as measured simply by the number of commits and by the Levenshtein edit distance. We segment the commit history of each project in consecutive, non-overlapping productivity windows of 7 days. For each window, we then record the total number of commits and the mean commit contribution, computed as the sum of the commit contributions of all commits divided by the number of commits. We use the mean instead of the total commit contribution in order to eliminate the systematic linear scaling arising from the fact that n commits necessarily have a total contribution of at least n characters.

Figure 3 shows that the mean commit contribution varies across several orders of magnitude for any number of commits observed in our data set. In the small commit ranges we observe variations of up to 6 orders of magnitude,Footnote 5 and even though the fluctuations decrease, the high commit range still exhibits variations of close to 3 orders of magnitude. This indicates that the Levenshtein edit distance cannot be replaced as a productivity measure by the total number of commits, without losing a significant amount of information on the contributions of those commits.Footnote 6

3.4 Temporal Analysis of Productivity and Team Size

The procedure described above allows us to quantitatively assess the total production of source code, as well as the team size, i.e., the number of developers involved in the source code production at any given point in time. For each project, we use this methodology for a temporal analysis of the full history of time-stamped commit actions as follows:

Using the productivity time window of 7 days, we first segment the history of the project into consecutive, non-overlapping time slices of one week. For each productivity window reaching up to time t we calculate the total source code production based on the cumulative Levenshtein edit distance of all commit actions occurring within the interval [t−7d,t]. We further compute the associated size of the developer team as the number of different developers who have committed at least once within the time interval [t−295d,t]. We illustrate this procedure in Fig. 4.

Illustration of our methodology for the temporal analysis of productivity and team size: The total productivity is calculated as the sum of the Levenshtein edit distances of the five commits by two developers (red and green) in the productivity window. The team size of four is calculated as the number of different developers (red, green, blue and orange) who committed at least once within the team size window

Using this method, for each week in the history of all 58 projects in our data set, we obtain a pair of values capturing the team size and the production of commit contributions. This allows us to quantitatively analyse the relation between team size and productivity in the following section.

4 Quantitative Analysis of Team Size and Productivity

In the following, we present the results of our quantitative analysis of developer productivity in OSS projects. Notably, we first study productivity by exclusively using the productivity time window of 7 days introduced above. For each project in our data set, we split the entire commit history into subsequent productivity time windows. For each time window we aggregate all commits by all developers active in that particular time window. For each productivity window, we additionally calculate the number of active developers, i.e., the number of those developers committing at least once within a productivity time window. Compared to the estimation of the team size introduced above, this initial analysis is thus based on a rather restrictive definition for the size of the development team. We include it here to rule out the possibility that our results sensitively depend on the choice of the team size window.

The left panel of Fig. 5 shows the relation between the mean number of commits per active developer and the number of developers active in the productivity time window, aggregated over all projects. For small numbers of active developers, the mean number of commits per developer exhibits a large variance over more than two orders of magnitude. As the number of active developers increases, one observes a decrease in the mean number of commits per developer. In particular, for projects with more than 50 developers committing in a given week, we observe a mean number of about two commits per week, while the mean number of commits for projects with a single active developer is about ten times as large. Even if one were to consider the mean number of commits as a proxy for developer productivity, this finding is inconsistent with an economy of scale in collaborative software development, recently found by a similar analysis on a different data set (Sornette et al. 2014).

Mean number of commits (left) and commit contribution (right) per active developer depending on the active developers, i.e., the number of developers committing at least once within a given productivity time window. An alpha channel has been added to the plot for easier visualization of point densities

In Section 3 we have argued that different commits can have vastly different commit contributions, when measuring the contribution of a commit in terms of the actual code committed. In our analysis, we account for these differences by computing, for each commit, the commit contribution in terms of the Levenshtein edit distance between the versions of the source code before and after the commit. The total contribution of all developers active in a given productivity time window can then be computed as the cumulative Levenshtein edit distance of all commits occurring in that time window. The right panel of Fig. 5 shows the relation between the mean commit contribution per active developer and the number of active developers, i.e., the developers committing within the same productivity time window. We again aggregate over all 58 projects. Similar to the left panel of Fig. 5, a large variance can be observed for small numbers of active developers. Furthermore, we do not find evidence for a super-linear increase in total productivity, which would translate to an increase in the mean developer contribution. Similar to the left panel of Fig. 5, we rather observe that for projects with more than 50 active developers, the mean developer contribution is about 103 characters while it is - on average - at least one order of magnitude larger for projects with a small number of active developers.

In Section 3 we argued that the number of developers committing within a time window of 7 days does not provide us with a reasonable estimate for the size of the development team. This is due to the inactivity time distribution shown in Fig. 1, in which we observe a pronounced peak only around approximately 110 days. In particular, this shows that developers who have not been active in a given week should still be considered members of the development team, as they are still likely to commit again in the future. To mitigate this problem, we repeated our analysis using the window sizes illustrated in Fig. 4. For a given time t we again computed the mean number of commits, as well as the mean developer contribution with a productivity time window, i.e., within the time frame [t−7d,t]. For the estimation of the team size we then count all developers who committed within the team size time window, i.e., in the time range [t−295d,t]. In other words, we do not consider developers to be members of the development team if they have not committed for more than 295 days.

The results of this analysis are shown in Fig. 6. The left panel shows the relation between the mean number of commits per active developer and the team size. Similar to Fig. 5, we observe a decreasing mean number of commits as the teams size increases, which can be interpreted as evidence for the Ringelmann effect. Finally, the right panel of Fig. 6 shows the relation between the size of the development team and the mean commit contribution per active developer, again aggregated across all projects. Comparing small team sizes ( ≈ 1−10 developers) to large teams (>300 developers), one observes a significant decrease in the mean developer contribution, on average dropping by more than two orders of magnitude. That the decrease in the mean commit contribution is even more pronounced than for the mean number of commits per developers indicates (i) that developers in large development teams tend to commit less often, and (ii) that these commits tend to be smaller in terms of the Levenshtein edit distance. Again, this finding can be interpreted as evidence for the Ringelmann effect or - in economic terms - for the fact that collaborative software engineering projects represent diseconomies of scale. It furthermore quantitatively substantiates common software engineering wisdom, as paraphrased for instance by Brooks’ Law of software project management.

Mean number of commits (left) and commit contribution (right) per active developer depending on the team size, i.e., the number of developers committing within a given team size window. Green lines indicate the fitted slopes α 0 and α 1 (see Section 4.1). An alpha channel has been added to the plot for easier visualization of point constellations

4.1 Scaling Factors of Productivity

So far, our arguments about the scaling of productivity with the size of the development team have been mostly visual. In the following, we substantiate these arguments by means of a regression analysis. For 〈n〉 being the mean number of commits per active developer, 〈c〉 being the mean commit contribution per active developer, and s being the team size as defined above, we can perform a regression analysis using the following two log-transformed linear models:Footnote 7

Table 1 shows the estimated coefficients of these models, inferred by means of a robust linear regression. The observed negative values for the scaling factors α 0=−0.24 and α 1=−0.36 quantitatively confirm the negative relation between team size and mean production of active developers previously observed visually in Fig. 6.

The low r 2 values reflect the high variability in the data, resulting from the inherent sensitivity of the Levenshtein edit distance. In essence, our regression models allow us to infer a significant negative trend, but prevent us from making predictions about the mean production, given the team sizeFootnote 8 (c.f. further arguments in Section ?? in the ??).

Importantly, in all of our results so far, we computed the mean production (either in terms of the number of commits or commit contribution) per active developer, i.e., we divided the total production in a given productivity window by the number of developers who were committing at least once within this time window. When studying the relation between team size and mean production, one can alternatively compute a mean production per team member by dividing the total production by the team size, rather than the number of active developers. This is justified when interpreting the team size (i.e., all developers that have committed in the past team size window) as the amount of resources available to a project, and when considering productivity as the ratio between the generated outputs and these resources.

It furthermore emphasizes the ability of a project to continuously engage members of the development team, while a large number of inactive team members results in a decrease in observed productivity. In Fig. 7 we thus provide results for the mean production per team member. In both panels, we observe a significant decrease in terms of the mean number of commits (left) and the mean commit contribution (right) per team member, as the size of the team grows.

Mean number of commits (left) and commit contribution (right) per team member, depending on the team size, i.e., the number of developers committing within a given team size window. Green lines indicate the fitted slopes α 2 and α 3. An alpha channel has been added to the plot for easier visualization of point constellations

Using the data shown in Fig. 7, we can again perform a regression analysis. Like above, we use the following two log-transformed linear models

where 〈n ′〉 and 〈c ′〉 denote the mean number of commits and the mean contribution per team member respectively. Table 2 shows the results of estimating the coefficients of this model by means of a robust linear regression.

Again we find negative values for the scaling factors α 2 and α 3 which indicate a negative relation between team size and mean productivity. Importantly, with this we confirm a negative scaling of productivity both for the active developers, which we computed using a window size of 7 days, as well as for the team size, which we computed for a window size of 295 days based on the maximum inactivity time statistics of our data set. This shows that the presence of a negative scaling does not sensitively depend on the window size used to calculate the number of contributors to a project.

Studying the total rather than the mean output produced, we can alternatively represent the negative relation between team size and mean productivity in terms of two simple Cobb-Douglas production functions that take the following form

Here N and C denote the total production, measured in terms of the total number of commits (N) and the total commit contributions (C) by all members of the development team. A log-transformation of these production functions yields the linear models

Since the mean quantities 〈n ′〉 and 〈c ′〉 are given as \(\frac {N}{s}\) and \(\frac {C}{s}\), we can express the total production quantities N and C as s⋅〈n ′〉 and s⋅〈c ′〉 respectively. Dividing both sides of (3) by the team size s yields the following relation

between the scaling factors of the models. As such, negative scaling factors α 2 and α 3 in the models for the mean production 〈n ′〉 and 〈c ′〉correspond to exponents α 4, α 5 smaller than 1 in the Cobb-Douglas production functions for the total production N and C. In particular, such exponents smaller than 1 indicate a regime of decreasing returns to scale. In our case, we find values of α 4=0.25 and α 5=0.14, which provides further evidence to the fact that Open Source Software development processes are indeed examples of diseconomies of scale. Importantly, we emphasize that this finding is not an artifact of aggregating the data of all projects. Section ?? in the Appendix shows that it holds also on the level of individual projects.

From this we conclude that Open Source Software communities are indeed no magical exceptions from the basic economics of collaborative software engineering. Not surprisingly, we find that the productivity of large teams of irregularly contributing volunteers experiences a significant decrease as projects grow in size. As such, at least for productivity in OSS projects, it is safe to refute the Aristotelian interpretation that “the whole is more than the sum”, finding - on the contrary - strong support for the Ringelmann effect.

5 A Network Perspective on Coordination in Software Development

Considering our confirmation of the Ringelmann effect in OSS communities outlined above, we will now go one step further and investigate possible explanations for the observed relations between team size and productivity. In Section 3 we have argued that research has generally highlighted two different aspects that contribute to the Ringelmann effect: The first are motivational factors such as “free-riding” or “social loafing” which tend to become stronger as teams grow in size. The second are coordination challenges that naturally emerge as teams become larger. In line with a substantial body of work in empirical software engineering, our study exclusively focuses on coordination challenges emerging in development teams. While motivational factors are likely to play an important role as well, we argue that our available data do not allow us to quantitatively assess these factors.

In recent years, a number of sophisticated approaches have been developed which allow to operationalize coordination requirements in software development teams. Studying data from a software development repository, Cataldo et al. (2006) generated a so-called Task Assignment Matrix which captures the association between developers and the files they have modified. Using an additional data set of modification requests which can be used to identify interdependencies between files, the authors were then able to generate a Coordination Requirement Matrix. This matrix captures the need for coordination between developers who are either i) modifying the same files, or ii) editing different files that depend on each other due to a joint occurrence in the same modification request. Building on the general idea of operationalizing coordination requirements, researchers in empirical software engineering have generally highlighted the importance of fine-grained data on collaboration events in software development. Using rich data from the team collaboration platform Jazz, Wolf et al. (2009) generated and analyzed developer communication networks in order to identify coordination needs and improve knowledge management. Recent works, have even gone beyond analyzing the mere presence of coordination needs, using time-stamped and fine-grained data of developer actions from support tools like Mylin to not only detect (Blincoe et al. 2012), but also categorize coordination requirements into critical and non-critical ones (Blincoe et al. 2013; Blincoe 2014).

We acknowledge the importance of such accurate methods to infer meaningful coordination requirements based on fine-grained data that capture developer communication and developer actions beyond mere source code changes. However, in this paper we decided to follow a simpler approach for multiple reasons: First, we do not have access to fine-grained data on developer actions captured by collaboration tools such as Mylin or Jazz. Secondly, the fact that our study is comprised of 58 rather heterogeneous projects written in a total of 11 different programming languages, does not easily allow us to automatically extract comparable and meaningful dependency structures at at the level of programming language abstractions. And finally, rather than focusing on the microscopic inference of coordination requirements between a particular pair of developers, the goal of our study is a statistical categorization of team coordination structures at the macroscopic level.

In this paper we thus take a rather simplistic approach which is in essence a variation of the method proposed by Cataldo et al. (2006). In particular, using the file commit history of each project in our data set, we construct a Task Assignment matrix, that captures the association between developers and the source code regions they have edited. We particularly consider the time-stamps of commit actions, as well as the detailed code changes within all of the committed files in order to build directed and weighted networks which capture the time-ordered co-editing of source code regions by different developers. We call the resulting network topologies time-ordered co-editing networks and throughout this article we will use them as a first-order approximation for the emerging coordination overhead.

5.1 Constructing Time-Ordered Co-editing Networks

We first provide a detailed description of our methodology of constructing time-ordered co-editing networks, which is further illustrated in Fig. 8. Let us denote each committed change of a single source code line l in file f at time t by developer A by the 4-tuple {A,f,l,t}. We infer a time-ordered co-editing relation A→B between developer A and B on file f, whenever developer A commits a change to a source code region of a file f, which was last edited by developer B. More formally, we infer a directed link (A,B) in a co-editing network whenever there are committed line changes {B,f,l 1,t 1} and {A,f,l 2,t 2} such that t 1<t 2 and l 1 = l 2. We further require that no other developer X has edited this particular line in the meantime, i.e., we require \(\nexists (X,f,l_{1}=l_{2},t^{\prime })\) for some developer X≠B and some time stamp t ′∈[t 1,t 2]. In order to be able to differentiate between trivial and more complex changes in the code, we further weight directed links by the size of the overlap of the co-edited code regions. Using the notation of committed lines from above, we do so by aggregating multiple links (A,B), each of which indicates a single co-edited line, to a weighted link (A,B,w), where the weight w captures the number of co-edited lines within a given productivity time window.

The method outlined above is enabled by a fine-grained analysis of the contents of all commits to all files in the full history of a project. In particular, we calculate diffs between two committed versions of a file whenever commits to this file occurred from different developers within a given productivity time window. For the technical details of our approach, we refer the reader to Section ?? in the Appendix. In the following we illustrate our method to construct time-ordered co-editing networks using the time-stamped commit activities shown in Fig. 8. In this example, three developers A, B and C are committing their changes to a total of three files, illustrated by differently colored document symbols in the time line of commit activities shown in the bottom panel. For simplicity here each of the files contains exactly three lines, and the lines edited in each of the commits are highlighted in red. In the left part of Fig. 8 (showing commit activities during time window 1) the sequence of code changes of developers is such that three time-ordered co-editing links emerge: developer B is connected to A since B edited two lines in the orange file which were previously edited by A. The fact that the overlap in the edits comprises two lines is reflected by an aggregate link weight of two in the co-editing network. Furthermore, developer A is connected to B, since A has edited line two in the orange file, which was last edited by developer B. Finally, developer B is connected to C by a directed link with a weight of two, since B has committed changes to lines one and three in the blue file, both of which were last changed by developer C. Importantly, unrelated code changes occurring in between the changes of two developers to the same lines will not result in a link in the co-editing networks. This can be seen in time window 2 shown in the right part of Fig. 8. Here, we infer a directed co-editing link with weight two from node C to node A, which is based on the changes to lines one and two in the green file. Notably, the intermediate change of line three in the green file by developer A does not result in a co-editing relation, even though it occurs in between the changes by B and C.

Compared to much more sophisticated methods to infer coordination requirements which have been outlined above, it is clear that our method merely generates an approximation for real coordination structures. Nevertheless we argue that the questions (i) which source code regions were changed by which developers, and (ii) in which order these changes occurred, capture interesting aspects of the team organization that influence potential coordination overhead emerging in a team. Indeed, much of the project management and architectural design efforts in software engineering aim at maintaining a modular design, which allows subcomponents of a system to be developed by specialized subgroups of developers, rather than arbitrary team members. The resulting partitioning of development tasks is meant to keep coordination requirements, and thus overhead, at a minimum level and to ensure that coordination requirements correspond to actual social structures (Cataldo et al. 2008). The association between edits to source code regions and developers captured by our time-ordered co-editing networks can be seen as a first-order approximation for the partitioning of development tasks.

To illustrate this, in the right panel of Fig. 8 we randomly shuffled the association between source code and developers in the commit history. As a consequence, each of the three files is now edited by each of the three developers, with no particular assignment of developers to certain subsets of files. The time-ordered co-editing network resulting from the commits in time window 2 thus becomes more densely connected. We interpret such a densification of co-editing networks as indicator for task assignment procedures that are likely to result in increasing coordination overhead. In particular, this densification implies a super-linear growth in the number of links as the number of nodes increases. In line with arguments about the super-linear increase in the number of possible communication channels which was quoted as one possible reason for Brooks’ law (Brooks 1975), it can be seen as one possible explanation for decreasing productivity with increasing team size.

The fact that we study directed links whose directionality is defined based on the order of committed changes, further allows us to differentiate between nodes that point to many other nodes and nodes that are pointed to by many other nodes. Intuitively, by this we capture whether i) a developer A has to build on changes by many other developers (corresponding to a large out-degree of A), or ii) whether many other developers have to build on the changes committed by developer A (corresponding to a large in-degree of A). In addition, we consider weighted links between pairs of developers which can either result from multiple co-edited lines on the same file or from co-edits on different files (see examples in Fig. 8). Considering weights in the resulting network topologies thus allows us to differentiate between weaker or stronger coordination links, based on the actual source code changes committed.

In summary, the approach outlined above allows us to capture both the topology and strengths of co-editing links between developers. A macroscopic characterization of network density allows us to quantitatively assess a proxy for potential coordination overhead introduced by the association between developers and edited source code regions. Building on Brooks’ law, we hypothesize that co-editing network becomes increasingly dense as the number of active developers grows. In other words, we hypothesize that the mean number of co-editing relations introduced per team member grows as the number of developers increases.

5.2 Co-editing Networks and Productivity

We first test this hypothesis by means of a case study, focusing on two specific large-scale projects covered by our data set. For the selection of these projects, we computed the coefficients α 3 of a log-transformed linear model for the relation between the team size and the mean commit contribution per team member (see Section 4.1 and (2) for details of the model). Different from Section 4.1, here we perform this regression analysis per project, while limiting ourselves to those 15 projects in our data set which have at least 10,000 commits (Table 3). In Table ?? in the Appendix we report on the analysis of the fits. In order to be able to compare the scaling exponents of these projects to each other, we further limit our analysis to those 11 of the 15 large-scale projects, for which the log-transformed linear model from (2) provides the most reasonable fit (see Section ?? in the Appendix for details).

From these 11 projects, we selected (i) zf2 being the project with the smallest exponent α 3 ≈ −1.9, and (ii) specs being the project with the largest exponent α 3 ≈ −0.12.Footnote 9 Figure 9 shows the relation between the team size and the mean commit contribution per team member for these two projects individually.

Using the methodology described above, we next create time-evolving co-editing networks for the two projects specs and zf2 as outlined above. In Fig. 10 three representative snapshots of the resulting networks are shown for each project. For these networks, we are now interested in the question of how the co-editing links per developer scale with the size of the network. Since we are using weighted networks, there are different ways of computing the co-editing links per developer. First, for each developer A, we can simply sum the weights of all outgoing links (A,X). This weighted out-degree captures the total number of co-edited lines, which can be seen as a proxy for coordination costs incurred by developer A. Secondly, we can discard weights which capture the number of co-edited lines between a particular pair of developers, instead solely focusing on the topological dimension. For this, we compute the (unweighted) out-degree of each developer A, capturing with how many different other developers a developer A has co-edited source code regions. In Fig. 11 we show the relation between the size of the co-editing networks, computed in terms of the number of nodes, and the mean coordination costs per developer, proxied by the different degree-based measures outlined above.

For the zf2 project, which exhibits the smallest coefficient α 3 in our data set, we observe a positive correlation between the size of the network and the mean (weighted and unweighted) out-degree. We quantify this positive correlation using a linear regression analysis. Precisely, we calculate the coefficient of a linear model for the growth of the mean degree. Since the mean degree in a network is bounded above by the the number of nodes, this coefficient is necessarily smaller than one. At the same time, a coefficient of zero would indicate a constant mean degree, and thus a linear growth of the number of links in the network. For the growth of the mean (unweighted) out-degree in the zf2 project, we obtain a coefficient of 0.031 with an associated r 2 value of 0.23. For the mean weighted out-degree, we use a linear regression model on log-transformed values for the weighted out-degree. We then calculate the scaling coefficient for the exponent of a log-linear model. Again, a zero coefficient would indicate a linear growth of the number of links, while any positive coefficient indicates a super-linear growth. For the zfs project, we obtain a coefficient of 0.04 with an associated r 2=0.13. These positive values of the coefficients are evidence for a super-linear growth of co-editing links as the number of developers increases, which can be interpreted as a growth in the mean coordination cost per developer. The resulting tendency of networks in the zf2 project to become increasingly dense can be observed in the bottom panel of Fig. 10.

For the specs project, which exhibits the largest coefficient α 3 in our data set, we observe a significantly less pronounced growth of the (weighted and unweighted) degree as the network size grows. A regression analysis for the mean (unweighted) out-degree yields a coefficient 0.008 with an associated r 2 value of r 2=0.27, while for the mean weighted out-degree we obtain a coefficient 0.01 and r 2=0.049. From this, we conclude that an increase in the number of developers in the specs project results in a much less pronounced growth of co-editing links, compared to the zf2 project. The resulting, rather sparsely connected networks in the specs project can be observed in the top panel of Fig. 10. This finding provides a possible explanation for the scaling exponent of α 3 ≈ −0.12 which, compared to all other projects, corresponds to rather slowly decreasing returns to scale. Conversely, the fast, super-linear increase of co-editing links in the zf2 project coincides with a particularly small scaling exponent of α 3 ≈ −1.9 which corresponds to rapidly decreasing returns to scale. As such, the two case studies are consistent with our hypothesis that the decreasing returns to scale in OSS communities are related to an increasing densification of co-editing networks.

A closer look at the two projects in our case study allows us to further substantiate our quantitative findings with project-dependent, intuitive explanations. The specs project maintains a public repository for meta-information on Apple Cocoa modules, that can be managed via the library dependency management tool CocoaPods. In particular, the repository consists of more than 7000 different libraries, each containing a set of JSON files which are authored and maintained by different, small teams of developers. As such, specs can be seen as a rather extremal example for a maximally modular project, in the sense that it consists of thousands of independent modules each being developed by different developers with no or at least minimal coordination needs.

The zf2 project on the other hand, develops a component-oriented web framework named Zend. Compared to the fully independent modules in the specs project, the code base of zf2 is likely to be much more integrated, thus not allowing for a non-overlapping mapping between developers and source code regions. As such, the commit actions of a large number of developers are likely to produce a much more densely connected co-editing network. A particular additional feature of the zf2 project is that it imposes rather rigorous standards with respect to programming style, documentation and testing which are summarized in a detailed contributor guide.Footnote 10 These standards are targeted at improving both maintainability and software quality and require, for instance, that any piece of contributed code must be covered by unit tests. This focus on rigorous coding standards could be one possible explanation for an increased coordination overhead and thus a significantly smaller scaling exponent α 3, while at the same time possibly translating to increased software quality and maintainability.

In addition to this case study focusing on the two projects with the smallest and largest decrease in productivity, we additionally perform an analysis for all 48 projects, for which the log-log model is the best and significant fit for the scaling of productivity. The results of this analysis are shown in Fig. 12. Here, the scaling exponent α 3 for the scaling of productivity is shown on the x-axis, while the coefficient for the slope of the mean out-degree and the mean weighted out-degree are shown on the y-axis of the left and the right panel respectively. Dark blue symbols refer to projects with at least 10,000 commits, filled symbols indicate projects for which the fitted scaling coefficient for the mean (weighted) out-degree is significant at p=0.05. The results highlight that all projects with strongly negative and significant slopes α 3 for the scaling of productivity also exhibit pronouncedly positive scaling exponents for the growth of the mean (weighted) out-degree. Based on these empirical results, we can thus not reject our hypothesis that the decrease in productivity for larger team sizes is related to a super-linear growth of coordination links. While the spread in Fig. 12 does not indicate the presence of a strong linear correlation, one nevertheless observes a general tendency of projects with larger (i.e., closer to zero) scaling exponents α 3 to exhibit smaller coefficients for the growth of the mean out-degree. We can quantitatively assess the significance of such a relation by computing the normalized mutual information (NMI) between the slopes of the mean (weighted) out-degree and the exponents α 3. This quantity measures the extent to which knowing the value of one variable allows the inference of the other. The NMI has a minimum of 0 when the two variables are independent, and a maximum of 1 when one variable completely determines the other. Referring to the left side of Fig. 12, the NMI between α 3 and the slope of the mean out-degree is 0.33. Similarly on the right side, the NMI between α 3 and the slope of the mean weighted out-degree is 0.24.

Scaling of the mean team-member contribution with team size (α 3) vs. the scaling coefficients of the mean out-degree and mean weighted out-degree with the size of the coordination networks. To make projects comparable, we selected 48 of the 49 projects for which the log-log model is the best and significant fit for the scaling of productivity. We removed one project, oh-my-zsh, for which we could not estimate a reliable slope of the mean-degree. The coordination network for oh-my-zsh was so sparse that our robust regression did not converge. Filled symbols indicate that the fitted scaling coefficients for the mean (weighted) out-degree in the network is significant at p=0.05. Dark blue symbols represent those projects which have at least 10,000 commits

We further estimate the statistical significance of the obtained NMI values by means of a bootstrapping approach. We shuffle α 3 and the mean (weighted) out-degree 104 times and compute the resulting NMI for each shuffled sample. Calculating the percentage of the shuffled NMI values that are larger than the empirical ones allows us to derive a p-value for the null hypothesis that the empirical NMI values of 0.33 and 0.24 can be obtained by chance alone. The calculated p-values are 0 and 0.009 for the left and right side of Fig. 12 respectively, which allows us to reject the null hypothesis above. We therefore conclude that the positive NMI values are significant, and thus substantiate the negative relation between the scaling exponents α 3 and the growth coefficients of the mean (weighted) out-degree.

6 Threads to Validity and Future Work

Prior to concluding our article, in the following we summarize a number of threats to validity and open issues that highlight potential directions for future research.

First, and foremost, our study is based on a large-scale data set from the social coding platform gitHub which comes, like any data set, with its own fallacies and potential biases. The specific risks and opportunities awaiting researchers in the analysis of data from gitHub have recently been summarized by Kalliamvakou et al. (2014). A number of risks are associated with the facts that i) gitHub repositories are not necessarily equivalent to projects, ii) the majority of repositories show low levels of commit activity, iii) the majority of repositories are personal repositories not used for collaboration, iv) many repositories are not related to software development, and v) many gitHub repositories are actually not the main development repository of projects. We avoided all of these risks by a careful selection of projects which ensures that all analyzed repositories i) are the main development repositories of OSS projects, ii) showed activity for at least one year, iii) showed recent activity in the week of data selection, and iv) had commits by at least 50 different developers. A second set of risks involves the use of so-called pull requests, and the way in which those are recorded in the commit history. As mentioned in Section 3.1, by our special treatment of merged pull requests we have carefully accounted for the potential resulting biases. In particular for merged pull requests we only account for the contribution of the original commit underlying the pull request. We further correctly attribute this contribution to the actual author of the commit, and not to the maintainer who merged the pull request (see details in Section ??) .

One shortcoming of our empirical analysis is that we have excluded motivational factors which have been shown to be important driving factors of the Ringelmann effect. We expect that such factors play an important role as a further mechanism behind the decreasing returns to scale observed in our study, and we thus highlight a large potential for future research in this area. A particularly interesting question that results from our choice of data from gitHub, is whether the interaction mechanisms of this platform may mitigate motivational factors contributing to the Ringelmann effect. In particular, a recent study has highlighted the importance of publicly available developer activity information on gitHub (Dabbish et al. 2012), which is supposed to have a significant effect on the motivation of developers. Building on our quantitative approach, future work may search for quantitative evidence for this effect, for instance by means of a large-scale comparison between the scaling of team productivity on gitHub and other platforms such as, e.g., SourceForge. In order to facilitate such future work, we have made both the raw and the processed version of our data set freely available to be reused for researchers (Scholtes et al. 2015).

One concern about the validity of our results on the scaling exponents of productivity may arise due to our estimation of the size of development teams. To define a reasonable size of the time-window for our analysis, we used the statistics of developer activity, i.e., the maximum time of inactivity between any two commits of a developer. Notably, one-time contributors, i.e., developers who committed only once, were not included in the statistics of inactivity in order to avoid a bias towards large inactivity times. However, the question whether the large fraction of one-time contributors for some projects may result in a bias needs to be addressed. On the one hand, a large number of one-time contributors with minor commit contributions could result in a spuriously large team size that results in an underestimation of productivity scaling exponents. On the other hand, even though the fraction of single-commit developers is large for some projects, by definition these developers committed only once, thus limiting the magnitude of the effect. While there is no obvious answer to the question of how to account for single-commit contributions and whether to count one-time contributors as members of a development team, we carefully avoided potentially wrong conclusions in two ways: First, in Section 4, we confirmed a sub-linear scaling of productivity both for the size of the team and the number of active developers. The latter number is defined using a time window of 7 days, which significantly mitigates potential biases that may affect an analysis based on the much larger team size window. Secondly, to avoid wrong conclusions based on our results on the effect of the team size, we reanalyzed all of our data focusing on a stricter notion of core developers which excludes all one-time contributors. We report these results in Section ?? both at the aggregate and the project level. The scaling exponents shown in Tables 5 and 6 confirm that one-time contributors do not significantly influence our results and that they do not qualitatively change any of our conclusions.