Abstract

Objective

This study explored the use of electroencephalogram (EEG) and eye gaze features, experience-related features, and machine learning to evaluate performance and learning rates in fundamentals of laparoscopic surgery (FLS) and robotic-assisted surgery (RAS).

Methods

EEG and eye-tracking data were collected from 25 participants performing three FLS tasks and 22 participants performing two RAS tasks. Generalized linear mixed models, using L1-penalized estimation, were developed to objectify performance evaluation using EEG and eye gaze features, and linear models were developed to objectify learning rate evaluation using these features and performance scores at the first attempt. Experience metrics were added to evaluate their role in learning robotic surgery. The differences in performance across experience levels were tested using analysis of variance.

Results

EEG and eye gaze features and experience-related features were important for evaluating performance in FLS and RAS tasks with reasonable results. Residents outperformed faculty in FLS peg transfer (p value = 0.04), while faculty and residents both excelled over pre-medical students in the FLS pattern cut (p value = 0.01 and p value < 0.001, respectively). Fellows outperformed pre-medical students in FLS suturing (p value = 0.01). In RAS tasks, both faculty and fellows surpassed pre-medical students (p values for the RAS pattern cut were 0.001 for faculty and 0.003 for fellows, while for RAS tissue dissection, the p value was less than 0.001 for both groups), with residents also showing superior skills in tissue dissection (p value = 0.03).

Conclusion

Findings could be used to develop training interventions for improving surgical skills and have implications for understanding motor learning and designing interventions to enhance learning outcomes.


Surgery has evolved with minimally invasive techniques like laparoscopic surgery gaining popularity due to several advantages over traditional open surgery, including smaller incisions, reduced postoperative pain, faster recovery, and improved cosmetic outcomes [1, 2]. However, laparoscopic surgery requires specialized skills and techniques that differ from those used in open surgery [3].

The FLS program trains and assesses necessary skills for safe and effective laparoscopic surgery through simulated tasks [3]. The program evaluates important elements, such as expertise, decision-making abilities, and manual capabilities, to determine laparoscopic surgical competence [4].

RAS, on the other hand, uses robotic technology to assist surgeons in performing intricate surgical procedures. RAS is now commonly used in various surgical specialties, such as urology, gynecology, and general surgery. Mastering FLS skills is crucial for surgical training and is an essential prerequisite for performing RAS.

Participation in the FLS program improves surgical trainees’ technical skills [5]. Additionally, completion of the FLS program is often a requirement for board certification in surgical specialties [5]. Hence, FLS tasks are critical components of laparoscopic and RAS training.

Evaluating performance in FLS tasks can be challenging because (1) the FLS score formula heavily weights time and precision [6], (2) subjective assessment varies with the criteria used by different observers, and (3) individual differences in cognitive and physical abilities, along with external factors like fatigue and stress, make accurate assessment difficult [7].

Recent technological advancements have integrated physiological and cognitive measures to enhance surgical task performance evaluation. EEG and eye tracking are two methods that have gained attention recently. EEG measures electrical activity in the brain and can assess cognitive processing. Studies indicate that EEG features, such as event-related potentials, decrease at the parietal electrode with skill acquisition in laparoscopic surgery [8]. Eye tracking features, such as gaze patterns and fixations, can reveal visual attention and decision-making insights [9]. Expert laparoscopic surgeons exhibit shorter fixations and longer saccades compared to novices, indicating more efficient visual search and decision-making [10]. Eye tracking has been suggested as a potential surgical skill evaluation tool [10, 11].

This study developed models for evaluating performance and learning rate in FLS and RAS tasks using machine learning, EEG, and eye gaze features.

Methods

This study was approved by the Institutional Review Board (IRB: I-241913) of Roswell Park Comprehensive Cancer Center. The IRB granted permission to waive the need for written consent. Participants were given written information about the study and provided verbal consent.

Data recording

A 124-channel EEG headset (AntNeuro®) was used to record EEG data at 500 Hz (Fig. 1). Additionally, Tobii eyeglasses (Tobii®) were used to simultaneously record eye-tracking data at 50 Hz (Fig. 1). Videos were also recorded during the task completion.

Participants

Eleven medical or premedical students, two residents, six fellows, and six surgeons participated in this study. The participants’ ages ranged from 22 to 67, with an average age of 36 ± 12. There were 17 male and 8 female participants, of whom 24 were right-handed and one was left-handed. Additionally, 17 participants were right eye dominant, while 8 were left eye dominant. Three participants did not perform RAS tasks. The number of hours of RAS experience, the total count of laparoscopic surgeries performed as the primary surgeon (cases), the length of clinical practice (years), and the duration of formal laparoscopic surgery training (years) for participants are presented in Table 1.

Tasks

The study comprised three FLS program tasks (peg transfer, pattern cut, and intracorporeal suturing) and two RAS tasks (pattern cut and tissue dissection). Participants performed each task five times, while expert surgeons only completed them twice. FLS tasks were done with the FLS laparoscopic training box (Pyxus®), and RAS tasks were performed using the da Vinci surgical robot (Fig. 1).

FLS peg transfer involves participants transferring six objects mid-air from their non-dominant hand to their dominant hand and placing them on a peg on the opposite side of the pegboard. They then reverse the process, transferring the objects back to their original side. Dropping objects outside the field of view incurs a penalty. It evaluates a surgeon’s fine motor skills, hand–eye coordination, and depth perception [12].

FLS pattern cut involves holding a Maryland dissector in one hand and providing traction to a gauze piece while cutting it with endoscopic scissors held in the other hand. Participants cut along a pre-marked circle until the gauze is completely removed from the 4 × 4 gauze piece. Any cuts deviating from the marked circle are penalized. This task assesses skills needed for laparoscopic surgery, including hand–eye coordination, dexterity, and depth perception [12].

FLS intracorporeal suturing involves placing a short suture through two marks in a Penrose drain and tying two throws of a knot to close a slit. Penalties are assessed for deviations from the marks, improper closure of the slit, or a knot that slips or comes apart when tension is applied. This task evaluates surgical skills, including hand–eye coordination, dexterity, knot-tying in tight spaces, tissue handling, tension management, and suturing techniques [13].

RAS pattern cut and tissue dissection involve cutting along a pre-marked circle on paper and woodchuck skin, respectively, until the circle area is completely removed.

Actual performance scores

FLS peg transfer performance was evaluated by measuring completion time and counting tool collisions and drops from videos, and by using the Global Operative Assessment of Laparoscopic Skills (GOALS) tool to rate five domains (depth perception, bimanual dexterity, efficiency, tissue handling, and autonomy) on a 1–5 Likert scale, with a total range of 5–25 (“Appendix 1”) [3]. In FLS pattern cut, completion time and tool collisions were obtained from videos, and the error area was calculated from the final product using Fiji software [14]; these measures were used to assess overall technical proficiency with the GOALS tool. In FLS suturing, videos were used to obtain completion time and the number of drops and collisions, and performance was evaluated using the Objective Structured Assessment of Technical Skills (OSATS) tool, which assesses eight domains (respect for tissues, time and motion, instrument handling, suture handling, flow of suturing, knowledge of the steps, overall appearance, and overall performance) on a 1–5 Likert scale, with a total score range of 8–40 (“Appendix 1”) [15].

For RAS pattern cut and tissue dissection, completion time and tool collisions were obtained from videos, and error area was calculated using Fiji software [14]. Performance was assessed using the Global Evaluative Assessment of Robotic Skills (GEARS) tool [16], which measures depth perception, bimanual dexterity, efficiency, force sensitivity, autonomy, and robotic control on a 1–5 Likert scale (“Appendix 1”).

Actual learning rate

The learning rate was calculated by fitting a linear regression to the participant’s performance scores across attempts and taking the slope of the resulting line.
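As an illustration of this definition, the following minimal sketch computes the least-squares slope of performance score against attempt number. The function name, the assumption that attempts are numbered 1 to n, and the example scores are hypothetical and are not taken from the study data.

```python
def learning_rate(scores):
    """Return the slope of the least-squares line through (attempt, score) pairs."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two attempts to fit a line")
    attempts = range(1, n + 1)  # assumption: attempts are numbered 1, 2, ..., n
    mean_x = sum(attempts) / n
    mean_y = sum(scores) / n
    # Slope = covariance(attempt, score) / variance(attempt)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(attempts, scores))
    sxx = sum((x - mean_x) ** 2 for x in attempts)
    return sxy / sxx


# Hypothetical scores over five attempts (illustrative only)
example_scores = [12, 14, 15, 18, 19]
slope = learning_rate(example_scores)
print(round(slope, 2))  # 1.8 score points gained per attempt
```

A positive slope indicates improvement across attempts; with only two attempts, as for the expert surgeons, the slope reduces to the difference between the two scores.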

Eye gaze features

Eye gaze data collected in this study were preprocessed using Tobii Pro Lab©. Preprocessing involved applying a moving average filter with a window size of 3 points to reduce noise, and a velocity-threshold identification fixation filter with a threshold of 30° per second to identify fixation and saccadic points. Twelve eye gaze features were extracted, including pupil diameter, entropy, fixation time points, saccade time points, gaze direction change, and pupil trajectory length for both eyes [17, 18].
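The two preprocessing steps can be sketched as follows. This is a simplified illustration and not the Tobii Pro Lab implementation: it assumes gaze direction is already expressed as horizontal and vertical angles in degrees, uses a centered 3-point window, and estimates velocity from consecutive samples at the 50 Hz recording rate. All function and variable names are hypothetical.

```python
import math


def moving_average(values, window=3):
    """Centered moving average; edge samples use whatever neighbors exist."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed


def classify_ivt(x_deg, y_deg, rate_hz=50.0, threshold_deg_s=30.0):
    """Label each inter-sample interval as 'fixation' or 'saccade' by angular velocity."""
    xs, ys = moving_average(x_deg), moving_average(y_deg)
    labels = []
    for i in range(1, len(xs)):
        step_deg = math.hypot(xs[i] - xs[i - 1], ys[i] - ys[i - 1])
        velocity = step_deg * rate_hz  # degrees per second
        labels.append("saccade" if velocity > threshold_deg_s else "fixation")
    return labels


# Hypothetical gaze trace: steady, a 12-degree horizontal jump, steady again
x = [0, 0, 0, 0, 6, 12, 12, 12, 12]
y = [0] * len(x)
labels = classify_ivt(x, y)
print(labels)
```

At 50 Hz, the 30° per second threshold corresponds to a displacement of 0.6° between consecutive samples, so the smoothed jump in this trace is labeled as saccadic and the steady segments as fixation.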

EEG features

Signal processing techniques were applied to the EEG signals to remove artifacts (Supplement 1 [19–35]). After decontamination, the EEG signals were analyzed to extract features related to changes in brain activity during learning, such as strength, search information, temporal network flexibility, integration, and recruitment (Supplement 1). The average of each feature was calculated at four cortices of the brain (frontal, parietal, occipital, and temporal), resulting in 20 EEG features.

Role of extracted EEG features in learning

When a person learns new skills, the brain stores information in particular areas [36]. Practice and training modify the brain’s functional network [36], and these modifications can be measured by examining features such as strength, search information, temporal network flexibility, integration, and recruitment. Search information measures the efficiency of information transfer between different areas of the brain [24, 37]. Strength measures the quality of communication between different regions of the brain. Temporal network flexibility measures the degree to which the brain changes over time to adapt to different demands [33]. Flexibility has been proposed as a functional brain network feature that changes with learning [38], and as a predictor of the mental workload of surgeons during surgical procedures [39]. Integration describes how different regions of the brain function in harmony over time [34]. Recruitment is the activation of a specific region of the brain that forms interconnected networks while performing cognitive or behavioral tasks. This feature provides insights into the neural mechanisms underlying different cognitive functions and can help explain how the brain processes information and generates behavior [40]. Integration and recruitment features are known to be sensitive to changes in skill level and learning [34].

Performance and learning rate evaluation models using EEG and eye gaze features, and experience

Experience-related features were added to EEG and eye gaze features to explore the influence of RAS and FLS experience on performance and learning rate. These variables included the number of hours of RAS experience, the total count of laparoscopic surgeries performed as the primary surgeon (cases), the length of clinical practice (years), and the duration of formal laparoscopic surgery training (years).

Machine learning models were developed for performance evaluation using the extracted EEG and eye gaze features and the experience-related features. The EEG and eye gaze features extracted at the first attempt were used to develop learning rate evaluation models. In addition, the performance score at the first attempt of each task was treated as a baseline and included as a possible predictor of learning rate.

Machine learning models for performance and learning rate evaluation

Generalized linear mixed models (GLMMs) with L1-penalized estimation, also known as GLMM-LASSO models, were developed to select the most important features and evaluate performance. The algorithm was applied to the features with participant identifier (ID) as a random effect. The penalty parameter (lambda) was tuned by grid search and cross-validation, and the value giving the minimum Bayesian Information Criterion (BIC) was selected (Supplement 2).
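For orientation, an L1-penalized mixed model of this kind is commonly written in the following generic form. The notation is an assumption introduced here for illustration and may differ from the exact formulation in Supplement 2: β denotes the fixed-effect coefficients of the candidate features, b the participant-level random effects, λ the penalty value on the search grid, n the number of observations, and df_λ the model degrees of freedom at that penalty (often taken as the number of nonzero coefficients).

```latex
% Penalized log-likelihood maximized for each candidate penalty (assumed notation)
\ell_{\mathrm{pen}}(\beta, b) = \ell(\beta, b) - \lambda \sum_{j=1}^{p} \lvert \beta_j \rvert

% Criterion compared across the grid; the penalty with the smallest value is kept
\mathrm{BIC}(\lambda) = -2\,\ell(\hat{\beta}_{\lambda}, \hat{b}_{\lambda}) + \log(n)\,\mathrm{df}_{\lambda}
```

Larger values of λ shrink more coefficients exactly to zero, which is what allows the penalty to act as a feature selector.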

Learning rate models were developed using linear regression on EEG and eye gaze features and performance scores at the first attempt, together with experience-related features. Forward feature selection and leave-one-out cross-validation were used to select features for the linear regression models.
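A minimal sketch of forward selection scored by leave-one-out cross-validation is given below. The selection criterion (mean absolute error), the stopping rule, the feature names, and the data are assumptions made for illustration; they are not the study's settings or data. Ordinary least squares is solved through the normal equations, which is adequate only for small, well-conditioned examples.

```python
def solve_linear(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting (a must be nonsingular)."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_ols(rows, y):
    """Least-squares coefficients (intercept first) via the normal equations."""
    design = [[1.0] + list(r) for r in rows]
    k = len(design[0])
    xtx = [[sum(d[i] * d[j] for d in design) for j in range(k)] for i in range(k)]
    xty = [sum(d[i] * t for d, t in zip(design, y)) for i in range(k)]
    return solve_linear(xtx, xty)


def loocv_mae(rows, y):
    """Mean absolute error when each sample is predicted by a model fit on the others."""
    errors = []
    for i in range(len(y)):
        coef = fit_ols(rows[:i] + rows[i + 1:], y[:i] + y[i + 1:])
        pred = coef[0] + sum(c * v for c, v in zip(coef[1:], rows[i]))
        errors.append(abs(y[i] - pred))
    return sum(errors) / len(errors)


def forward_select(features, y, max_features=3, min_gain=1e-9):
    """Greedily add the feature that most lowers LOOCV error; stop when no feature helps."""
    selected, best = [], float("inf")
    while len(selected) < max_features:
        candidates = {}
        for name in features:
            if name not in selected:
                cols = selected + [name]
                rows = [[features[c][i] for c in cols] for i in range(len(y))]
                candidates[name] = loocv_mae(rows, y)
        if not candidates:
            break
        name = min(candidates, key=candidates.get)
        if candidates[name] >= best - min_gain:
            break
        selected.append(name)
        best = candidates[name]
    return selected, best


# Hypothetical data: learning rate depends only on the first-attempt score
features = {
    "first_attempt_score": [10, 12, 15, 9, 18, 14, 11, 16],
    "pupil_diameter": [3.1, 3.4, 2.9, 3.8, 3.3, 3.6, 3.0, 3.5],
}
learning_rates = [2.0, 1.8, 1.5, 2.1, 1.2, 1.6, 1.9, 1.4]
selected, best = forward_select(features, learning_rates)
print(selected)
```

In this toy example the learning rate is constructed as an exact linear function of the first-attempt score, so that feature is chosen first and the second feature is rejected because it cannot reduce the cross-validated error further.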

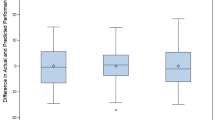

The Local Outlier Factor (LOF) algorithm with 10 neighbors was applied to detect and exclude outliers from the analysis. Three metrics commonly used to evaluate regression models were calculated to assess the predictive power of the models. R² measures the proportion of variance in the dependent variable explained by the independent variables. Mean Absolute Error (MAE) is the average of the absolute differences between the predicted and actual values. Root Mean Squared Error (RMSE) is the square root of the average of the squared differences between the predicted and actual values.
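The three metrics can be computed directly from their definitions, as in the sketch below. The function name and the example values are hypothetical and are not study results.

```python
import math


def regression_metrics(actual, predicted):
    """Return R^2, MAE, and RMSE for paired actual and predicted values."""
    n = len(actual)
    residuals = [a - p for a, p in zip(actual, predicted)]
    mae = sum(abs(r) for r in residuals) / n
    ss_res = sum(r * r for r in residuals)
    rmse = math.sqrt(ss_res / n)
    mean_actual = sum(actual) / n
    # Total variation around the mean; zero if all actual values are identical
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    r2 = 1.0 - ss_res / ss_tot
    return {"r2": r2, "mae": mae, "rmse": rmse}


# Hypothetical performance scores and model predictions (illustrative only)
metrics = regression_metrics([10, 12, 14, 16], [11, 12, 13, 16])
print({k: round(v, 3) for k, v in metrics.items()})
# {'r2': 0.9, 'mae': 0.5, 'rmse': 0.707}
```

Because RMSE squares the residuals before averaging, it penalizes large individual errors more heavily than MAE, which is why the two are usually reported together.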

Statistical analysis to find the change in performance across experience levels

A linear mixed model was fitted to the performance scores, where skill level was treated as a fixed factor with four levels (pre-medical student, resident, fellow, and faculty), and participant identifier (ID) was treated as a random effect to account for repeated measurements. Analysis of variance (ANOVA) was used to test whether measurements differed between skill levels. A p value less than 0.05 was considered to indicate a statistically significant difference between skill levels. Least Squares Means (LSM) were calculated for each skill level to support the inferential comparisons.

Results

The results of this study included the development of performance and learning rate evaluation models, employing EEG and eye gaze features along with experience-related features. The developed models are presented in Tables 2–8: Table 2 presents the evaluation model for the FLS peg transfer task; Table 3, the models for the FLS pattern cut task; Table 4, the models for the FLS suturing task; Tables 5 and 6, the performance and learning rate evaluation models, respectively, for the RAS pattern cut task; and Tables 7 and 8, the corresponding models for the RAS tissue dissection task.

Several EEG features at different brain cortices, and eye gaze features played important roles in performance and learning rate evaluation across all tasks. Experience-related features also emerged as pivotal determinants in evaluating the performance and learning rate. Specifically, hours of RAS experience showed a statistically significant association with learning rate for the FLS peg transfer. Similarly, years of clinical practice was associated with learning rate for FLS suturing. The duration of formal training in laparoscopic surgery had a strong association with the learning rate at the RAS pattern cut. Moreover, the quantity of laparoscopic surgeries where the individual was the primary surgeon demonstrated an association with the learning rate at RAS tissue dissection.

Change in performance across experience levels

When performance was compared across the four categories (faculty, fellow, resident, and pre-medical student), the results varied by task. There were no statistically significant differences among the categories in FLS peg transfer, with all p values exceeding the 0.05 threshold (Table 9).

In the FLS pattern cut, scores differed significantly between faculty and pre-medical students (p = 0.01), fellows and pre-medical students (p = 0.007), and residents and pre-medical students (p < 0.001), indicating that faculty, fellows, and residents performed this task significantly better than pre-medical students. In FLS suturing, the only significant difference was between fellows and pre-medical students (p = 0.01), suggesting that fellows performed suturing significantly better than pre-medical students. In the RAS pattern cut, significant differences were found between faculty and pre-medical students (p = 0.001) and between fellows and pre-medical students (p = 0.003), both indicating superior performance by faculty and fellows compared to pre-medical students. In RAS tissue dissection, the comparisons between faculty and pre-medical students (p < 0.001), fellows and pre-medical students (p < 0.001), and residents and pre-medical students (p = 0.03) showed significant differences, suggesting that faculty, fellows, and residents performed better at RAS tissue dissection than pre-medical students.

Discussion

Better methods for performance and learning rate evaluation are necessary to improve surgical training while ensuring patient safety. The best existing performance evaluation approaches are based on subjective rating scales, which are costly and subject to bias [3]. Objective evaluation methods are needed to enable individualized skill development, which ultimately improves surgical outcomes.

Results indicated that eye movement measures and specific neural activity patterns are significant predictors of performance and learning rate in various surgical tasks. The performance evaluation models demonstrated robust results, as evidenced by the high coefficients of determination (R²) for the FLS peg transfer, FLS pattern cut, FLS suturing, RAS pattern cut, and RAS tissue dissection tasks, which were 0.87, 0.86, 0.92, 0.94, and 0.97, respectively. The MAE for these tasks was relatively low, with values of 0.8, 0.64, 1.47, 0.74, and 0.6. Similarly, the learning rate evaluation models yielded high R² values for the FLS peg transfer, FLS pattern cut, FLS suturing, RAS pattern cut, and RAS tissue dissection tasks at 0.88, 0.82, 0.87, 0.84, and 0.85, respectively, with correspondingly low MAE (0.25, 0.24, 0.57, 0.15, and 0.28, respectively). These findings have implications for surgical training programs, which could be tailored to improve these specific aspects of surgeons’ behavior and neural patterns.

The regression analyses provided insight into the roles various features play in determining performance and learning rates in several surgical tasks. Eye-tracking metrics such as pupil diameter and pupil trajectory length were predictors of performance across different surgical tasks, suggesting a link between visual attention and surgical performance. Measures of brain function, including the recruitment and strength of channels in brain cortices, significantly influenced performance. This suggests that neural activity and how the brain processes information during a task could be key indicators of surgical performance. For learning rate, eye-tracking metrics at the first attempt of each task were significant predictors, implying that initial visual attention and processing may set the pace of learning. As with performance, brain function features at the first attempt also play a key role in learning rate evaluation. This finding supports the idea that the initial brain state might shape the trajectory of learning [41].

These findings point to a multifaceted interaction of visual and neurological factors that contribute to surgical performance and the rate of learning surgical tasks. They highlight the potential value of eye-tracking metrics and neuroimaging data in surgical education and training. By understanding these influences, surgical training programs could be tailored to individual learning patterns and optimize performance outcomes. However, further research would be beneficial to confirm these results and develop specific interventions.

The findings emphasize the significance of ocular dominance (dominant and nondominant eyes) in surgical performance, as it plays a crucial role in depth perception and precise manipulation of surgical instruments [42, 43]. However, current assessment tools for surgical skills do not explicitly consider ocular dominance. Therefore, incorporating measurements of ocular dominance could enhance the accuracy of evaluating surgical performance. Surgical training programs that incorporate simulated surgical environments and tools designed to enhance trainees’ ocular dominance and other visual and motor skills could be beneficial.

The number of years of formal training in laparoscopic surgery positively predicted performance in several tasks. This suggests that specific, focused training in a procedure may be important when it comes to skill acquisition and performance in that procedure.

Change in performance across experience levels

No statistically significant differences were observed among the categories in performing the FLS peg transfer (Table 9). This may be attributed to the straightforward nature of the task, rendering it manageable for all participants. The results indicated a superior performance by the resident group compared to the faculty group in performing FLS pattern cut (p value = 0.04). This difference might be attributable to the fact that residents regularly engage in FLS tasks, whereas the faculty members have typically not practiced these tasks since their residency programs, often many years prior. In terms of the FLS pattern cut, the faculty group demonstrated a higher performance level compared to the pre-medical student group (p value = 0.01). Similarly, the residents also outperformed the pre-medical students in the same task (p value < 0.001). As expected, fellows surpassed pre-medical students in executing the FLS suturing task (p value = 0.01).

Regarding RAS tasks, both faculty and fellows exhibited better performances in the RAS pattern cut and RAS tissue dissection tasks compared to the pre-medical students (p values for the RAS pattern cut were 0.001 for faculty and 0.003 for fellows, while for RAS tissue dissection, the p value was less than 0.001 for both groups). Moreover, residents also demonstrated superior skills in RAS tissue dissection compared to pre-medical students (p value = 0.03).

The strengths of this study include its innovative approach of utilizing functional brain network and eye gaze features to assess surgical performance and learning rate. The standardized approach to task selection and data collection enhances the reliability and validity of the findings. Furthermore, by including both FLS and RAS tasks, the study allows for a comparison of performance across different surgical modalities.

However, several limitations should be considered. The use of linear model analyses in this study does not establish causality. Additionally, the small sample size of 25 participants and the limit of five attempts per task may restrict the generalizability of the results to a broader population. The study focused solely on EEG and eye gaze data, neglecting other factors such as muscle activity that may also influence surgical performance and learning rate. Moreover, the study examined only a limited number of tasks, which may not encompass the full spectrum of surgical procedures. Finally, the controlled laboratory environment may not fully capture the complexity and variability of real-world surgical settings.

Practical implications

The developed models for evaluating performance and rate of learning using EEG and eye-tracking characteristics are promising, and they are aligned with the demands of each task. Once these models are validated for a broader population and a variety of surgical procedures, they could be utilized in surgical residency programs to enhance the RAS training process. This can be achieved in two ways: (1) By offering objective, unbiased performance evaluation of RAS trainees without the need for a RAS surgeon present during training sessions. This approach could reduce the costs associated with skill acquisition while offering trainees valuable feedback. This means trainees can correct any errors in their technique rather than repeating them, leading to a faster learning process. This increased efficiency would enable more trainees to enroll in programs, expedite the graduation of current residents, and ultimately increase the number of trained RAS surgeons each year. This proliferation of RAS skills would benefit more patients and hospitals, as RAS procedures are associated with shorter hospital stays and fewer surgical complications than traditional surgical methods [44, 45]. (2) By recording data from the initial attempt, the learning rate evaluation models could assist RAS training programs in predicting an individual trainee’s rate of learning. Equipped with this knowledge, programs could better select trainees or prepare strategies to strengthen learning among slower learners.

Overall, these results suggest that cognitive load, as inferred from eye tracking and EEG data, plays a crucial role in surgical performance and the rate of skill acquisition. This could have several implications for the way surgical training programs are designed: (1) training could be individualized based on these features, with trainees receiving feedback not only on their technical skills but also on their cognitive load management; (2) simulators and training programs could incorporate eye tracking and EEG data to provide more detailed feedback; and (3) eye tracking and EEG could be used as objective measures to assess surgical proficiency and readiness for independent practice.

Conclusion

Results provided valuable insights into the potential for the integration of eye-tracking and neuroimaging measures as objective tools for performance and learning rate evaluation in surgical training. The developed models demonstrate significant potential, as they provide an objective assessment of performance and learning rates. This is an important improvement over more subjective methods, which are costly and susceptible to biases. The results showed that several neural and visual features are meaningful predictors of performance and learning rate in the FLS and RAS surgical tasks. The findings provide insights into the factors that affect task performance and learning rate, which could inform the development of training interventions to improve surgical skill acquisition.

Data availability

The data analyzed in the current study are available on PhysioNet: Shafiei and Shadpour [46], “Integration of electroencephalogram and eye-gaze datasets for performance evaluation in fundamentals of laparoscopic surgery (FLS) tasks” (version 1.0.0).

References

Kim H-H et al (2010) Morbidity and mortality of laparoscopic gastrectomy versus open gastrectomy for gastric cancer: an interim report—a phase III multicenter, prospective, randomized Trial (KLASS Trial). Ann Surg 251(3):417–420

Hwang S-H et al (2009) Actual 3-year survival after laparoscopy-assisted gastrectomy for gastric cancer. Arch Surg 144(6):559–564

Vassiliou MC et al (2005) A global assessment tool for evaluation of intraoperative laparoscopic skills. Am J Surg 190(1):107–113

Peters JH et al (2004) Development and validation of a comprehensive program of education and assessment of the basic fundamentals of laparoscopic surgery. Surgery 135(1):21–27

Sroka G et al (2010) Fundamentals of laparoscopic surgery simulator training to proficiency improves laparoscopic performance in the operating room—a randomized controlled trial. Am J Surg 199(1):115–120

Derossis AM et al (1998) Development of a model for training and evaluation of laparoscopic skills. Am J Surg 175(6):482–487

Kahol K, Vankipuram M, Smith ML (2009) Cognitive simulators for medical education and training. J Biomed Inform 42(4):593–604

Thomaschewski M et al (2021) Changes in attentional resources during the acquisition of laparoscopic surgical skills. BJS Open 5(2):zraa012

Frutos-Pascual M, Garcia-Zapirain B (2015) Assessing visual attention using eye tracking sensors in intelligent cognitive therapies based on serious games. Sensors 15(5):11092–11117

Kuo R, Chen H-J, Kuo Y-H (2022) The development of an eye movement-based deep learning system for laparoscopic surgical skills assessment. Sci Rep 12(1):11036

Toussi MS et al (2023) MP26-09 eye movement behavior associates with expertise level in robot-assisted surgery. J Urol 209(Supplement 4):e355

Emken JL, McDougall EM, Clayman RV (2004) Training and assessment of laparoscopic skills. J Soc Laparoendosc Surg 8(2):195

Soper NJ, Fried GM (2008) The fundamentals of laparoscopic surgery: its time has come. Bull Am Coll Surg 93(9):30–32

Schindelin J et al (2012) Fiji: an open-source platform for biological-image analysis. Nat Methods 9(7):676–682

Alam M et al (2014) Objective structured assessment of technical skills in elliptical excision repair of senior dermatology residents: a multirater, blinded study of operating room video recordings. JAMA Dermatol 150(6):608–612

Goh AC et al (2012) Global evaluative assessment of robotic skills: validation of a clinical assessment tool to measure robotic surgical skills. J Urol 187(1):247–252

Garcia AAT et al (2021) Biosignal processing and classification using computational learning and intelligence: principles, algorithms, and applications. Academic Press, Cambridge

Shafiei SB et al (2023) Developing surgical skill level classification model using visual metrics and a gradient boosting algorithm. Ann Surg Open 4(2):e292

Luck SJ (2014) An introduction to the event-related potential technique. MIT Press, Cambridge

Kayser J, Tenke CE (2015) On the benefits of using surface Laplacian (current source density) methodology in electrophysiology. Int J Psychophysiol 97(3):171

Srinivasan R et al (2007) EEG and MEG coherence: measures of functional connectivity at distinct spatial scales of neocortical dynamics. J Neurosci Methods 166(1):41–52

Strotzer M (2009) One century of brain mapping using Brodmann areas. Clin Neuroradiol 19(3):179–186

Sneppen K, Trusina A, Rosvall M (2005) Hide-and-seek on complex networks. Europhys Lett 69(5):853

Rosvall M et al (2005) Searchability of networks. Phys Rev E 72(4):046117

Trusina A, Rosvall M, Sneppen K (2005) Communication boundaries in networks. Phys Rev Lett 94(23):238701

Lynn CW, Bassett DS (2019) The physics of brain network structure, function and control. Nat Rev Phys 1:318–332

Zhao H et al (2022) SCC-MPGCN: self-attention coherence clustering based on multi-pooling graph convolutional network for EEG emotion recognition. J Neural Eng 19(2):026051

Sporns O (2013) Network attributes for segregation and integration in the human brain. Curr Opin Neurobiol 23(2):162–171

Betzel RF et al (2017) Positive affect, surprise, and fatigue are correlates of network flexibility. Sci Rep 7(1):520

Radicchi F et al (2004) Defining and identifying communities in networks. Proc Natl Acad Sci USA 101(9):2658–2663

Jutla IS, Jeub LG, Mucha PJ (2011) A generalized Louvain method for community detection implemented in MATLAB. http://netwiki.amath.unc.edu/GenLouvain

Bassett DS et al (2013) Task-based core-periphery organization of human brain dynamics. PLoS Comput Biol 9(9):e1003171

Bassett DS et al (2011) Dynamic reconfiguration of human brain networks during learning. Proc Natl Acad Sci USA 108(18):7641–7646

Bassett DS et al (2015) Learning-induced autonomy of sensorimotor systems. Nat Neurosci 18(5):744–751

Mattar MG et al (2015) A functional cartography of cognitive systems. PLoS Comput Biol 11(12):e1004533

Jesan JP, Lauro DM (2003) Human brain and neural network behavior: a comparison. Ubiquity 2003(November):2

Goñi J et al (2014) Resting-brain functional connectivity predicted by analytic measures of network communication. Proc Natl Acad Sci USA 111(2):833–838

Reddy PG et al (2018) Brain state flexibility accompanies motor-skill acquisition. Neuroimage 171:135–147

Shafiei SB et al (2020) Evaluating the mental workload during robot-assisted surgery utilizing network flexibility of human brain. IEEE Access 8:204012–204019

Buckner RL, Andrews-Hanna JR, Schacter DL (2008) The brain’s default network: anatomy, function, and relevance to disease. Ann NY Acad Sci 1124(1):1–38

Gabitov E, Manor D, Karni A (2016) Learning from the other limb’s experience: sharing the ‘trained’ M1 representation of the motor sequence knowledge. J Physiol 594(1):169–188

McFadden S (1994) Binocular depth perception. In: Davies MN, Green PR (eds) Perception and motor control in birds: an ecological approach. Springer, Berlin, pp 54–73

Bogdanova R, Boulanger P, Zheng B (2016) Depth perception of surgeons in minimally invasive surgery. Surg Innov 23(5):515–524

Khorgami Z et al (2019) The cost of robotics: an analysis of the added costs of robotic-assisted versus laparoscopic surgery using the National Inpatient Sample. Surg Endosc 33:2217–2221

Bhama AR et al (2016) A comparison of laparoscopic and robotic colorectal surgery outcomes using the American College of Surgeons National Surgical Quality Improvement Program (ACS NSQIP) database. Surg Endosc 30:1576–1584

Shafiei SB, Shadpour S (2023) Integration of electroencephalogram and eye-gaze datasets for performance evaluation in Fundamentals of Laparoscopic Surgery (FLS) tasks (version 1.0.0). PhysioNet. https://doi.org/10.13026/kyjw-p786

Acknowledgements

This research was supported by the National Institute of Biomedical Imaging and Bioengineering of the National Institutes of Health under R01EB029398. The content is the sole responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health. This work was also supported by the National Cancer Institute (NCI) Grant P30CA016056, which involved the use of the Roswell Park Comprehensive Cancer Center’s Comparative Oncology Shared Resource and Applied Technology Laboratory for Advanced Surgery (ATLAS).

Funding

National Institute of Biomedical Imaging and Bioengineering of the National Institutes of Health (R01EB029398) and National Cancer Institute (P30CA016056).

Ethics declarations

Disclosures

Somayeh B. Shafiei, Saeed Shadpour, Xavier Intes, Rahul Rahul, Mehdi Seilanian Toussi, and Ambreen Shafqat have no conflicts of interest or financial ties to disclose.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix 1: Surgical performance evaluation tools

Global operative assessment of laparoscopic skills (GOALS) [3]

Depth perception

1—Constantly overshoots target, wide swings, slow to correct.

3—Some overshooting or missing target, but quick to correct.

5—Accurately directs instruments in the correct plane to target.

Bimanual dexterity

1—Uses only one hand, ignores nondominant hand, poor coordination between hands.

3—Uses both hands, but does not optimize the interaction between hands.

5—Expertly uses both hands in a complementary manner to provide optimal exposure.

Efficiency

1—Uncertain, inefficient efforts; many tentative movements; constantly changing focus or persisting without progress.

3—Slow, but planned movements are reasonably organized.

5—Confident, efficient, and safe conduct, maintains focus on the task until it is better performed by way of an alternative approach.

Tissue handling

1—Rough movements, tears tissue, injures adjacent structures, poor grasper control, grasper frequently slips.

3—Handles tissue reasonably well, with minor trauma to adjacent tissue (i.e., occasional unnecessary bleeding or slipping of the grasper).

5—Handles tissues well, applies appropriate traction, negligible injury to adjacent structures.

Autonomy

1—Unable to complete entire task, even with verbal guidance.

3—Able to complete task safely with moderate guidance.

5—Able to complete task independently without prompting.

Objective structured assessment of technical skills (OSAT) tool [15]

Global evaluative assessment of robotic skills (GEARS) [16]

| Domain | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| Depth perception | Constantly overshoots target, wide swings, slow to correct | | Some overshooting or missing of target, but quick to correct | | Accurately directs instruments in correct plane to target |
| Bimanual dexterity | Uses only one hand, ignores non-dominant hand, poor coordination | | Uses both hands, but does not optimize interactions between hands | | Expertly uses both hands in a complementary way to provide best exposure |
| Efficiency | Inefficient efforts; many uncertain movements; constantly changing focus or persisting without progress | | Slow, but planned movements are reasonably organized | | Confident, efficient and safe conduct, maintains focus on task, fluid progression |
| Force sensitivity | Rough moves, tears tissue, injures nearby structures, poor control, frequent suture breakage | | Handles tissues reasonably well, minor trauma to adjacent tissue, rare suture breakage | | Applies appropriate tension, negligible injury to adjacent structures, no suture breakage |
| Autonomy | Unable to complete entire task, even with verbal guidance | | Able to complete task safely with moderate guidance | | Able to complete task independently without prompting |
| Robotic control | Consistently does not optimize view, hand position, or repeated collisions even with guidance | | View is sometimes not optimal. Occasionally needs to relocate arms. Occasional collisions and obstruction of assistant | | Controls camera and hand position optimally and independently. Minimal collisions or obstruction of assistant |
| Use of third arm: N/A, third arm was not used in this study | Consistently does not use it, or does not use it well when required, even with verbal guidance | | Mostly uses third arm in a safe and efficient manner with moderate guidance | | Consistently uses third arm in a safe and efficient manner without prompting |

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Shafiei, S.B., Shadpour, S., Intes, X. et al. Performance and learning rate prediction models development in FLS and RAS surgical tasks using electroencephalogram and eye gaze data and machine learning. Surg Endosc 37, 8447–8463 (2023). https://doi.org/10.1007/s00464-023-10409-y

DOI: https://doi.org/10.1007/s00464-023-10409-y