Abstract

We study the problem of deterministic approximate counting of matchings and independent sets in graphs of bounded connective constant. More generally, we consider the problem of evaluating the partition functions of the monomer-dimer model (which is defined as a weighted sum over all matchings where each matching is given a weight \(\gamma ^{|V| - 2 |M|}\) in terms of a fixed parameter \(\gamma \) called the monomer activity) and the hard core model (which is defined as a weighted sum over all independent sets where an independent set I is given a weight \(\lambda ^{|I|}\) in terms of a fixed parameter \(\lambda \) called the vertex activity). The connective constant is a natural measure of the average degree of a graph which has been studied extensively in combinatorics and mathematical physics, and can be bounded by a constant even for certain unbounded degree graphs such as those sampled from the sparse Erdős–Rényi model \(\mathcal {G}(n, d/n)\). Our main technical contribution is to prove the best possible rates of decay of correlations in the natural probability distributions induced by both the hard core model and the monomer-dimer model in graphs with a given bound on the connective constant. These results on decay of correlations are obtained using a new framework based on the so-called message approach that has been extensively used recently to prove such results for bounded degree graphs. We then use these optimal decay of correlations results to obtain fully polynomial time approximation schemes (FPTASs) for the two problems on graphs of bounded connective constant. In particular, for the monomer-dimer model, we give a deterministic FPTAS for the partition function on all graphs of bounded connective constant for any given value of the monomer activity. The best previously known deterministic algorithm was due to Bayati et al. (Proc. 39th ACM Symp. Theory Comput., pp. 122–127, 2007), and gave the same runtime guarantees as our results but only for the case of bounded degree graphs. For the hard core model, we give an FPTAS for graphs of connective constant \(\varDelta \) whenever the vertex activity \(\lambda < \lambda _c(\varDelta )\), where \(\lambda _c(\varDelta ) :=\frac{\varDelta ^\varDelta }{(\varDelta - 1)^{\varDelta + 1}}\); this result is optimal in the sense that an FPTAS for any \(\lambda > \lambda _c(\varDelta )\) would imply that NP=RP (Sly and Sun, Ann. Probab. 42(6):2383–2416, 2014). The previous best known result in this direction was in a recent manuscript by a subset of the current authors (Proc. 54th IEEE Symp. Found. Comput. Sci., pp 300–309, 2013), where the result was established under the sub-optimal condition \(\lambda < \lambda _c(\varDelta + 1)\). Our techniques also allow us to improve upon known bounds for decay of correlations for the hard core model on various regular lattices, including those obtained by Restrepo et al. (Probab Theory Relat Fields 156(1–2):75–99, 2013) for the special case of \(\mathbb {Z}^2\) using sophisticated numerically intensive methods tailored to that special case.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

1.1 Background

This paper studies the problem of approximately counting independent sets and matchings in sparse graphs. We consider these problems within the more general formalism of spin systems. In this setting, one first defines a natural probability distribution over configurations (e.g., independent sets or matchings) in terms of local interactions. The counting problem then corresponds to computing the normalization constant, known as the partition function in the statistical physics literature. The partition function can also be seen as a generating function of the combinatorial structures being considered and is an interesting graph polynomial in its own right.

The first model we consider is the so called hard core model, which is defined as follows. We start with a graph \(G = (V, E)\), and specify a vertex activity or fugacity parameter \(\lambda > 0\). The configurations of the hard core model are the independent sets of the graph, and the model assigns a weight \(w(I) = \lambda ^{|I|}\) to each independent set I in G. The weights in turn determine a natural probability distribution \(\mu (I) = \frac{1}{Z}w(I)\) over the independent sets known as the Gibbs distribution. Here,

is the partition function. Clearly, the problem of counting independent sets is the special case \(\lambda = 1\).

Our next model is the monomer-dimer model, which has as its configurations all matchings of a given graph \(G = (V, E)\). For a specified dimer activity \(\gamma > 0\), the model assigns a weight \(w(M) = \gamma ^{|M|}\) to each matching M of the graph. As before, the weights define the Gibbs distribution \(\mu (M) = \frac{1}{Z} w(M)\) over matchings, where

is the partition function. The problem of counting matchings again corresponds to the special case \(\gamma = 1\).

The problem of approximating the partition function has received much attention, both as a natural generalization of counting and because of its connections to sampling.Footnote 1 Recent progress in relating the complexity of approximating the partition function to phase transitions, which we now describe, has provided further impetus to this line of research.

The first such result was due to Weitz [50], who exploited the properties of the Gibbs measure of the hard core model on the infinite d-ary tree. It was well known that this model exhibits the following phase transition: there exists a critical activity \(\lambda _c(d)\) such that the total variation distance between the marginal probability distributions induced at the root of the tree by any two fixings of the independent set on all the vertices at distance \(\ell \) from the root decays exponentially in \(\ell \) when \(\lambda < \lambda _c(d) :=\frac{d^d}{(d-1)^{d+1}}\), but remains bounded away from 0 even as \(\ell \rightarrow \infty \) when \(\lambda > \lambda _c(d)\) (the former condition is also referred to as correlation decay, since the correlation between the configuration at the root of the tree and a fixed configuration at distance \(\ell \) from the root decays exponentially in \(\ell \); it is also called spatial mixing). Weitz showed that for all \(\lambda < \lambda _c(d)\) (i.e., in the regime where correlation decay holds on the d-ary tree), there exists a fully polynomial time approximation scheme (FPTAS)for the partition function of the hard core model on all graphs of degree at most \(d + 1\) (note that the condition on \(\lambda \) is only in terms of the d-ary tree, while the FPTAS applies to all graphs). This connection to phase transitions was further strengthened by Sly [45] (see also [12, 46]), who showed that a fully polynomial randomized approximation algorithm (FPRAS) for the partition function of the hard core model with \(\lambda > \lambda _c(d)\) on graphs of degree \(d+1\) would imply NP = RP.Footnote 2

In addition to establishing a close connection between the complexity of a natural computational problem and an associated phase transition, Weitz’s algorithm had the further interesting feature of not being based on Markov chain Monte Carlo (MCMC) methods; rather, it used a deterministic procedure based on proving that decay of correlations on the d-ary tree implies decay of correlations on all graphs of degree at most \(d+1\). To date, no MCMC algorithms are known for the approximation of the partition function of the hard core model on graphs of degree at most \(d+1\) which run in polynomial time for all \(\lambda < \lambda _c(d)\).

Weitz’s algorithm led to an exploration of his approach for other problems too. For example, in the case of the monomer-dimer model—unlike that of the hard core model—there does exists a randomized polynomial time algorithm (based on MCMC) for approximating the partition function which works for every \(\gamma > 0\), without any bounds on the degree of the graph [23]. However, finding a deterministic algorithm for the problem remains open. Bayati et al. [7] made progress on this question by showing that Weitz’s approach could be used to derive a deterministic algorithm that runs in polynomial time for bounded degree graphs, and is sub-exponential on general graphs.

The algorithms of both Weitz and Bayati et al. are therefore polynomial time only on bounded degree graphs, and in particular, for a given value of the parameter \(\lambda \) (or \(\gamma \) in the case of the monomer-dimer model) the running time of these algorithms on graphs of maximum degree \(d+1\) depends upon the rate of decay of correlations on the infinite d-ary tree. Further, these results are obtained by showing that decay of correlations on the d-ary tree implies a similar decay on all graphs of maximum degree \(d+1\).

There are two important shortcomings of such results. First, in statistical physics one is often interested in special classes of graphs such as regular lattices. One can reasonably expect that the rate of decay of correlations on such graphs should be better than that predicted by their maximum degree. Second, these results have no non-trivial consequences even in very special classes of sparse unbounded degree graphs, such as graphs drawn from the Erdős-Rényi model \(\mathcal {G}(n, d/n)\) for constant d.

This state of affairs leads to the following natural question: is there a finer notion of degree that can be used in these results in place of the maximum degree? Progress in this direction was made recently for the case of the hard core model in [44], where it was shown that one can get decay of correlation results in terms of the connective constant, a natural and well-studied notion of average degree. The connective constant of a regular lattice of degree \(d+1\) is typically substantially less than d; and it is bounded even in the case of sparse random graphs such as those drawn from \(\mathcal {G}(n, d/n)\), which have unbounded maximum degree. By analogy with the bounded degree case, one might hope to get correlation decay on graphs with connective constant at most \(\varDelta \) for all \(\lambda < \lambda _c(\varDelta )\). In [44], such a result was proven under the stronger condition \(\lambda < \lambda _c(\varDelta + 1)\). The latter bound is tight asymptotically as \(\varDelta \rightarrow \infty \) (because \(\lambda _c(\varDelta +1)/\lambda _c(\varDelta ) \rightarrow 1\) as \(\varDelta \rightarrow \infty \)), but is sub-optimal in the important case of small \(\varDelta \).

1.2 Contributions

In this paper, we show that one can indeed replace the maximum degree by the connective constant in the results of both Weitz [50] and Bayati et al. [7]. In particular, we show that for both the hard core and the monomer-dimer models, decay of correlations on the d-ary tree determines the rate of decay of correlations—as well as the complexity of deterministically approximating the partition function—in all graphs of connective constant at most d, without any dependence on the maximum degree. The specific notion of decay of correlations that we establish is known in the literature as strong spatial mixing [18, 33, 34, 50], and stipulates that the correlation between the state of a vertex v and another set S of vertices at distance \(\ell \) from v should decay exponentially in \(\ell \) even when one is allowed to fix the state of vertices close to v to arbitrary values (see Sect. 2.2.3 for a precise definition). Prior to the role it played in the design of deterministic approximate counting algorithms in Weitz’s work [50], strong spatial mixing was already a widely studied notion in computer science and mathematical physics for its utility in analyzing the mixing time of Markov chains [18, 33, 34], and hence an improved understanding of conditions under which it holds is of interest in its own right.

We now give an informal description of the connective constant [19, 32]; see Sect. 2.6 for precise definitions. Given a graph G and a vertex v in G, let \(N(v, \ell )\) denote the number of self avoiding walks in G of length \(\ell \) starting at v. A graph family \(\mathcal {F}\) is said to have connective constant \(\varDelta \) if for all graphs in \(\mathcal {F}\), the number of self-avoiding walks of length at most \(\ell \) for large \(\ell \) grows as \(\varDelta ^\ell \), i.e., if \(\ell ^{-1}\log \sum _{i=1}^\ell N(v, i)\sim \log \varDelta \) (the definition can be applied to both finite and infinite graphs; see Sect. 2.6). Note that in the special case graphs of maximum degree \(d+1\), the connective constant is at most d. It can, however, be much lower than this crude bound: for any \(\epsilon > 0\), the connective constant of graphs drawn from G(n, d / n) is at most \(d (1 + \epsilon )\) with high probability (w.h.p.) (see, e.g., [44]), even though their maximum degree is \(\varOmega \left( \frac{\log n}{\log \log n}\right) \) w.h.p.

Our first main result can now be stated as follows.

Theorem 1

(Main, hard core model) Let \(\mathcal {G}\) be a family of finite graphs of connective constant at most \(\varDelta \), and let \(\lambda \) be such that \(\lambda < \lambda _c(\varDelta )\). Then there is an FPTAS for the partition function of the hard core model with vertex activity \(\lambda \) for all graphs in \(\mathcal {G}\). Further, even if \(\mathcal {G}\) contains locally finite infinite graphs, the model exhibits strong spatial mixing on all graphs in \(\mathcal {G}\).

Remark 1

In [44], the above result was proved under the stronger hypothesis \(\lambda < \lambda _c(\varDelta + 1)\). The above result therefore subsumes the main results of [44]. It is also optimal in the following sense: there cannot be an FPRAS for graphs of connective constant at most \(\varDelta \) which works for \(\lambda > \lambda _c(\varDelta )\), unless NP = RP. This follows immediately from the hardness results for the partition function of the hard core model on bounded degree graphs [45, 46] since graphs of degree at most \(d+1\) have connective constant at most d.

An immediate corollary of Theorem 1 is the following.

Corollary 1

Let \(\lambda < \lambda _c(d)\). Then, there is an algorithm for approximating the partition function of graphs drawn from \(\mathcal {G}(n, d/n)\) up to a factor of \((1 \pm \epsilon )\) which, with high probability over the random choice of the graph, runs in time polynomial in n and \(1/\epsilon \).

Similar results for \(\mathcal {G}(n,d/n)\) have appeared in the literature in the context of rapid mixing of Glauber dynamics for the ferromagnetic Ising model [38], and also for the hard core model [11, 37]. Although the authors of [37] do not supply an explicit range of \(\lambda \) for which their rapid mixing results hold, an examination of their proofs suggests that necessarily \(\lambda <O\left( 1/d^2\right) \). Similarly, the results of [11] hold when \(\lambda < 1/(2d)\). In contrast, our bound approaches (and is always better than) the conjectured optimal value e / d. Further, unlike ours, the results of [11, 37, 38] are restricted to \(\mathcal {G}(n, d/n)\) and certain other classes of sparse graphs.

A second consequence of Theorem 1 is a further improvement upon the spatial mixing bounds obtained in [44] for various lattices, as shown in Table 1. For each lattice, the table shows the best known upper bound for the connective constant and the strong spatial mixing (SSM) bounds we obtain using these values in Theorem 1. In the table, a value \(\alpha \) in the “\(\lambda \)” column means that SSM is shown to hold for the appropriate lattice whenever \(\lambda \le \alpha \). As expected, improvements over our previous results in [44] are the most pronounced for lattices with smaller maximum degree.

The table shows that except in the case of the 2D integer lattice \(\mathbb {Z}^2\), our general result immediately gives improvements on the best known SSM bounds for all lattices using only previously known estimates of the connective constant. Not unexpectedly, our bound for \(\mathbb {Z}^2\) using the connective constant as a black-box still improves upon Weitz’s bound but falls short of the bounds obtained by Restrepo et al. [42] and Vera et al. [47] using numerically intensive methods tailored to this special case. However, as we noted in [44], any improvement in the bound on the connective constant would immediately yield an improvement in our SSM bound. Indeed, in Appendix A, we use a tighter analysis of the connective constant of a suitably constructed self-avoiding walk tree of \(\mathbb {Z}^2\) to show that SSM holds on this lattice whenever \(\lambda < 2.538\), which improves upon the specialized bound \(\lambda < 2.48\), obtained in the papers [42, 47]. We note that this improvement would not be possible using only our earlier results in [44].

We also apply our techniques to the study of uniqueness of the Gibbs measure of the hard core model on general trees. Uniqueness is a weaker notion than spatial mixing, requiring correlations to decay to zero with distance but not necessarily at an exponential rate (see Sect. 6 for a formal definition). We relate the phenomenon of the uniqueness of the Gibbs measure of the hard core model on a general tree to the branching factor of the tree, another natural notion of average arity that has appeared in the study of uniqueness of Gibbs measure for models such as the ferromagnetic Ising model [30]. The details of these results can be found in Sect. 6.

Our second main result concerns the monomer-dimer model.

Theorem 2

(Main, Monomer-dimer model) Let \(\mathcal {G}\) be a family of finite graphs of connective constant at most \(\varDelta \), and let \(\gamma > 0\) be any fixed edge activity. Then there is an FPTAS for the partition function of the monomer-dimer model with edge activity \(\gamma \) for all graphs in \(\mathcal {G}\). More specifically, the running time of the FPTAS for producing an \((1\pm \epsilon )\) factor approximation is \(\left( n/\epsilon \right) ^{O( \sqrt{\gamma \varDelta } \log \varDelta )}\).

The previous best deterministic approximation algorithm for the partition function of the monomer-dimer model was due to Bayati et al. [7], and ran in time \(\left( n/\epsilon \right) ^{O( \sqrt{\gamma d} \log d)}\) for graphs of degree at most \(d+1\). Thus, our algorithm replaces the maximum degree constraint of Bayati et al. by a corresponding constraint on the connective constant, without requiring any bounds on the maximum degree. In particular, for graphs such as \(\mathcal {G}(n, d/n)\) which have bounded connective constant and unbounded degree, our analysis yields a polynomial time algorithm (for any fixed value of the edge activity \(\gamma \)) in contrast to the sub-exponential time algorithm obtained by Bayati et al. [7]. Using an observation of Kahn and Kim [25], Bayati et al. also pointed out that the \(\sqrt{d}\) factor in the exponent of their running time was optimal for algorithms which are based on Weitz’s framework and which use only the fact that the maximum degree of the graph is at most \(d+1\). A similar observation shows that the \(\sqrt{\gamma \varDelta }\) factor in the exponent of our running time is optimal for algorithms in the Weitz framework which use bounds on the connective constant (see the remark at the end of Sect. 5 for a more detailed discussion of this point). As an aside, we also note that when no bounds on the connective constant are available our FPTAS degrades to a sub-exponential algorithm, as does the algorithm of Bayati et al. in the case of unbounded degree graphs.

1.3 Techniques

The analyses by Weitz [50] and Bayati et al. [7] both begin with the standard observation that obtaining an FPTAS for the marginal probabilities of the Gibbs distribution is sufficient in order to obtain an FPTAS for the partition function. The next non-trivial step is to show that this computation of marginal probabilities at a given vertex v in a graph G can be carried out on the tree \(T_{SAW}\left( v, G\right) \) of self-avoiding walks in G starting at v. Transferring the problem to a tree allows one to write down a recurrence for the marginal probabilities of a node in the tree in terms of the marginal probabilities of its children. However, since the tree is of exponential size, one needs to truncate the tree at a small (logarithmic) depth in order to obtain a polynomial time algorithm. Such a truncation in turn introduces an “error” at the leaves. The challenge then is to show that this error contracts exponentially as the recurrence works its way up to the root.

The approach of [7, 50] (and similar results in [27, 28, 43]) for establishing this last condition takes the following general form: one shows that at each step of the recurrence, the correlation decay condition implies that the error at the parent node is less than a constant factor (less than 1) times the maximum (\(\ell _\infty \) norm) of the errors at the children of the node. Intuitively, this strategy loses information about the structure of the tree by explaining the error at the parent in terms of only one of its children, and hence it is not surprising that the results obtained from it are only in terms of a local parameter such as the maximum degree.

The main technical contribution of [44] was to show that, by analyzing the decay in terms of the \(\ell _2\) norm—rather than the \(\ell _\infty \) norm—of the errors at the children, one can get past this limitation and obtain a result in terms of the connective constant. Nevertheless, as stated above, the results obtained in [44] did not hold over the best possible range of parameters. Our main innovation in the present paper is to analyze instead a norm adapted to the parameters of the model, rather than a fixed norm such as \(\ell _2\) or \(\ell _\infty \). Specifically, we show that optimal results can be obtained by analyzing the decay in terms of a carefully picked \(\ell _q\) norm where q is chosen as a function of the connective constant and the model parameters (the fugacity \(\lambda \) in the hard core model and the edge activity \(\gamma \) in the monomer-dimer model). At a technical level, the use of these adaptive norms implies that we can no longer employ the relatively simpler convexity arguments used in [44] in order to bound the propagation of errors; characterizing the “worst case” error vectors now requires solving a more involved optimization problem, which is the main new technical challenge in this paper. In Sect. 3, we give a general framework for tackling this problem. Our model specific main results are then obtained as direct applications of this framework. We conjecture that our framework may find applications to other approximate counting problems as well.

1.4 Related work

MCMC based algorithms for approximating the partition function of the hard core model on graphs of bounded degree \(d+1\) were obtained under the condition \(\lambda < 1/(d-2)\) by Luby and Vigoda [29], and later under the weaker condition \(\lambda < 2/(d-1)\) by Dyer and Greenhill [10] and Vigoda [48]. Weitz [50] obtained an FPTAS under the much weaker condition \(\lambda < \lambda _c(d)\) by establishing a tight connection between the algorithmic problem and the decay of correlations on the d-ary tree. This connection was further tightened by Sly [45] (see also Galanis et al. [12] and Sly and Sun [46]) who showed that approximating the partition function of the hard core model on \((d+1)\)-regular graphs is NP-hard when \(\lambda > \lambda _c(d)\). Weitz’s algorithm was also one of the first deterministic algorithms for approximating partition functions (along with the contemporaneous work of Bandhopadhyay and Gamarnik [4]) which exploited decay of correlations directly—in contrast to earlier algorithms which were mostly based on MCMC techniques. To date, no MCMC based algorithms for the partition function of the hard core model are known to have as large a range of applicability as Weitz’s algorithm.

Weitz’s approach has also been used to study the correlation decay phenomenon on specific lattices. Restrepo et al. [42] supplemented Weitz’s approach with sophisticated computational arguments tailored to the special case of \(\mathbb {Z}^2\) to obtain strong spatial mixing on \(\mathbb {Z}^2\) under the condition \(\lambda < 2.38\). Using even more extensive numerical work, this bound was improved to \(\lambda < 2.48\) by Vera et al. [47]. In contrast, a direct application of Weitz’s results yields the result only under the condition \(\lambda < \lambda _c(3) = 1.6875\). Sampling from the hard core model on special classes of unbounded degree graphs has also been considered in the literature. Mossel and Sly [37] gave an MCMC based algorithm for sampling from the hard core model on graphs drawn from \(\mathcal {G}(n, d/n)\). However, their algorithm is only applicable in the range \(\lambda < O(1/d^2)\). Efthymiou [11] gave an MCMC based sampler under the much weaker condition \(\lambda < 1/(2d)\). Hayes and Vigoda [21] also considered the question of sampling from the hard core model on special classes of unbounded degree graphs. They showed that for regular graphs on n vertices of degree \(d(n) = \varOmega (\log n)\) and of girth greater than 6, the Glauber dynamics for the hard core model mixes rapidly for \(\lambda < e/d(n)\). These results are incomparable to Theorem 1. The latter neither requires the graph to be regular, nor any lower bounds on its degree or girth, but it does require additional information about the graph in the form of its connective constant. However, when the connective constant is available, then irrespective of the maximum degree of the graph or its girth, the theorem affords an FPTAS for the partition function.

In contrast to the case of the hard core model, much more progress has been made on relating spatial mixing to notions of average degree in the case of the zero field ferromagnetic Ising model. Lyons [30] showed that on an arbitrary tree, a quantity similar in flavor to the connective constant, known as the branching factor, exactly determines the threshold for uniqueness of the Gibbs measure for this model. For the ferromagnetic Ising model on general graphs, Mossel and Sly [36, 38] proved results analogous to our Theorem 1. An important ingredient in the arguments in both [30] and [36, 38] relating correlation decay in the zero field Ising model to the branching factor and the connective constant is the symmetry of the “\(+\)” and “−” spins in the zero field case. In work related to [30], Pemantle and Steif [40] defined the notion of a robust phase transition (RPT) and related the threshold for RPT for various “symmetric” models such as the zero field Potts model and the Heisenberg model on general trees to the branching factor of the tree. Again, an important ingredient in their arguments seems to be the existence of a symmetry on the set of spins under whose action the underlying measure remains invariant. In contrast, in the hard core model, the two possible spin states of a vertex (“occupied” and “unoccupied”) do not admit any such symmetry.

A preliminary version of this paper [44] investigated decay of correlations in the hard core model in graphs with possibly unbounded degree but bounded connective constant. There, it was shown that when \(\lambda < \lambda _c(\varDelta +1)\), all graphs of connective constant at most \(\varDelta \) exhibit decay of correlations and also admit an FPTAS for the partition function. The observation that for any \(\epsilon > 0\), the connective constant of graphs drawn from \(\mathcal {G}(n, d/n)\) is at most \(d(1+\epsilon )\) with high probability was then used to derive a polynomial time sampler for the hard core model on \(\mathcal {G}(n, d/n)\) all the way up to \(\lambda < e/d\) (which was the conjectured asymptotic bound). Even for the case of bounded degree graphs, it was shown in [44] that estimates on the connective constant could be used to improve the range of applicability of Weitz’s results. This latter result was then used in conjunction with well known upper bounds on the connective constants of various regular lattices to improve upon the known strong spatial mixing bounds for those lattices (these included \(\mathbb {Z}^d\) for \(d \ge 3)\). However, in the special case of \(\mathbb {Z}^2\), the bounds in [44] fell short of those obtained by Restrepo et al. [42] and Vera et al. [47].

The results of the present paper for the hard core model strengthen and unify the two distinct results of [44] mentioned above, by replacing the maximum degree constraint in Weitz’s result completely by an exactly corresponding constraint on the connective constant: in particular, we show that graphs of connective constant \(\varDelta \) admit an FPTAS (and exhibit strong spatial mixing) whenever \(\lambda < \lambda _c(\varDelta )\). Thus, for example, our results extend the range of applicability of the above quoted results of [44] for \(\mathcal {G}(n, d/n)\) to \(\lambda < \lambda _c(d)\); since \(\lambda _c(d) > e/d\) for all \(d \ge 1\), this is a strict improvement on [44]. Regarding the question of improved strong spatial mixing bounds on specific lattices, our results show that connective constant computations are sufficient to improve upon the results of Restrepo et al. [42] and Vera et al. [47] even in the case of \(\mathbb {Z}^2\), without taking recourse to any further numerical or computational work.

In contrast to the case of the hard core model, where algorithms with the largest range of applicability are already deterministic, much less is known about the deterministic approximation of the partition function of the monomer-dimer model. Jerrum and Sinclair [23] gave an MCMC-based FPRAS for the monomer-dimer model which runs in polynomial time on all graphs (without any bounds on the maximum degree) for any fixed value of the edge activity \(\lambda \). However, no deterministic algorithms with this range of applicability are known, even in the case of specific graph families such as \(\mathcal {G}(n, d/n)\). So far, the best result in this direction is due to Bayati, Gamarnik, Katz, Nair and Tetali [7], who gave an algorithm which produces a \((1\pm \epsilon )\) factor approximation for the monomer-dimer partition function in time \(\left( {n}/{\epsilon }\right) ^{\tilde{O}(\sqrt{\gamma d})}\) on graphs of maximum degree d. Their result therefore yields super-polynomial (though sub-exponential) algorithms in the case of graphs such as those drawn from \(\mathcal {G}(n, d/n)\), which have unbounded maximum degree even for constant d. In contrast, the present paper shows that the same running time can in fact be obtained for graphs of connective constant d, irrespective of the maximum degree. The running times obtained by applying the results of this paper to the case of \(\mathcal {G}(n, d/n)\) are therefore polynomial (and not merely sub-exponential) when d is a fixed constant.

The connective constant itself and various notions related to it have been the subject of an extensive and active area of research. Starting with the classical papers by Hammersley and Morton [20], Hammersley and Broadbent [8] and Hammersley [19], several natural combinatorial questions concerning the number and other properties of self-avoiding walks in various lattices have been studied in depth; see the monograph of Madras and Slade [32] for a survey. Much work has been devoted especially to finding rigorous upper and lower bounds for the connective constant of various lattices [1, 2, 22, 26, 41], and heuristic techniques from physics have also been brought to bear upon this question. For example, Nienhuis [39] conjectured on the basis of heuristic arguments that the connective constant of the honeycomb lattice \(\mathbb {H}\) must be \(\sqrt{2+\sqrt{2}}\). In 2012, Nienhuis’s conjecture was rigorously confirmed in a celebrated paper of Duminil-Copin and Smirnov [9].

2 Preliminaries

2.1 Approximation algorithms

Deterministic and randomized fully polynomial approximation schemes are standard notions of algorithmic approximation. While the definitions we give here for them are specialized to the case of quantities which are functions of graphs (since this is the setting that concerns us in this paper), there is no difficulty in generalizing them to other contexts.

Definition 1

(Fully polynomial approximation schemes) Let \(\mathcal {A}(G)\) be a quantity that depends upon an input graph G. A fully polynomial time approximation scheme (FPTAS) for \(\mathcal {A}\) is a deterministic algorithm which, on input G and a rational accuracy parameter \(\epsilon \), outputs in time polynomial in \(1/\epsilon \) and the representation length of G a quantity \(\hat{A}\) satisfying \((1-\epsilon )\mathcal {A}(G) \le \hat{A} \le (1+\epsilon )\mathcal {A}(G)\). A fully polynomial time randomized approximation scheme (FPRAS) for \(\mathcal {A}\) is a randomized algorithm that on input G, an accuracy parameter \(\epsilon \) and an error parameter \(\delta \), outputs in time polynomial in \(1/\epsilon \), \(\log (1/\delta )\) and the representation length of G a quantity \(\hat{A}\) which, with probability at least \(1-\delta \) over the randomness of the algorithm satisfies \((1-\epsilon )\mathcal {A}(G) \le \hat{A} \le (1+\epsilon )\mathcal {A}(G)\).

2.2 Probabilities and likelihood ratios

In this section, we define some standard marginals of the monomer-dimer and hard core distributions. The importance of these quantities for our work comes from the standard “self-reducibility” arguments which show that obtaining an FPTAS for these marginals is sufficient in order to obtain an FPTAS for the partition function of these models (see Sect. 2.5 for these reductions).

2.2.1 Monomer-dimer model

Definition 2

(Monomer probability) Consider the Gibbs distribution of the monomer-dimer model with dimer activity \(\gamma \) on a finite graph \(G = (V,E)\), and let v be a vertex in V. We define the monomer probability p(v, G) as

which is the probability that v is unmatched (i.e., a monomer) in a matching M sampled from the Gibbs distribution.

Remark 2

The monomer-dimer model is often described in the literature in terms of a monomer activity \(\lambda \) instead of the dimer activity \(\gamma \) used here. In this formulation, the weight of a matching M is \(\lambda ^{u(M)}\), where u(M) is the number of unmatched vertices (monomers) in M. The two formulations are equivalent: with dimer activity \(\gamma \) corresponding to monomer activity \(\lambda = \frac{1}{\gamma ^2}\).

2.2.2 Hard core model

In the case of the hard core model, we will need to define the appropriate marginals in a slightly more generalized setting. Given a graph \(G = (V,E)\), a boundary condition will refer to a partially specified independent set in G; formally, a boundary condition \(\sigma = (S, I)\) is a subset \(S \subseteq V\) along with an independent set I on S (boundary conditions may be seen as special instances of initial conditions defined below).

Definition 3

(Occupation probability and occupation ratio) Consider the hard core model with vertex activity \(\lambda > 0\) on a finite graph G, and let v be a vertex in G. Given a boundary condition \(\sigma = (S, I_S)\) on G, the occupation probability \(p_v(\sigma , G)\) at the vertex v is the probability that v is included in an independent set I sampled according to the hard core distribution conditioned on the event that I restricted to S coincides with \(I_S\). The occupation ratio \(R_v(\sigma ,G)\) is then defined as

2.2.3 Strong spatial mixing

We present the definition of strong spatial mixing for the special case of the hard core model; the definition in the case of the monomer dimer model is exactly analogous. Our definition here closely follows the version used by Weitz [50].

Definition 4

(Strong spatial mixing) The hard core model with a fixed vertex activity \(\lambda > 0\) is said to exhibit strong spatial mixing on a family \({\mathcal {F}}\) of graphs if for any graph G in \({\mathcal {F}}\), any vertex v in G, and any two boundary conditions \(\sigma \) and \(\tau \) on G which differ only at a distance of at least \(\ell \) from v, we have

for some fixed constant \(0 \le c < 1\).

An important special condition of the definition is when the family \(\mathcal {F}\) consists of a single infinite graph (e.g., \(\mathbb {Z}^2\) or another regular lattice). The constant c in the definition is often referred to as the rate of strong spatial mixing.

2.3 Truncated recurrences with initial conditions

As in the case of other correlation decay based algorithms (e.g., in [13, 50]), we will need to analyze recurrences for marginals on rooted trees with various initial conditions. We therefore set up some notation for describing such recurrences. For a vertex v in a tree T, we will denote by |v| the distance of v from the root of the tree. Similarly, for a set S of vertices, \(\delta _S :=\min _{v \in S} |v|\).

Definition 5

(Cutset) Let T be any tree rooted at \(\rho \). A cutset C is a set of vertices in T satisfying the following two conditions:

-

(1)

Any path from \(\rho \) to a leaf v with \(|v| \ge \delta _C\) must pass through C.

-

(2)

The vertices in C form an antichain, i.e., for any vertices u and v in C, neither vertex is an ancestor of the other in T.

A trivial example of a cutset is the set L of all the leaves of T. Another example we will often need is the set \(S_\ell \) of all vertices at distance \(\ell \) from \(\rho \) in T.

Remark 3

In Sect. 6, we will need to use cutsets on rooted locally finite infinite trees. In this case, the first condition in the definition changes to “any infinite path starting from the root \(\rho \) must pass through C”.

For a cutset C, we denote by \(T_{\le C}\) the subtree of T obtained by removing the descendants of vertices in C from T, and by \(T_{< C}\) the subtree of T obtained by removing the vertices in C from \(T_{\le C}\). Further, for a vertex u in T, we denote by \(T_u\) the subtree of T rooted at u, and by \(T_{u, \le C}\) and \(T_{u, < C}\) the intersections of \(T_u\) with \(T_{\le C}\) and \(T_{< C}\) respectively.

Definition 6

(Initial condition) An initial condition \(\sigma = (S, P)\) is a set S of vertices in T along with an assignment \(P: S \rightarrow [0,b]\) of bounded positive values to vertices in S.

We are now ready to describe the tree recurrences. Given an initial condition \(\sigma = (S, P)\) along with a default value \(b_0\) for the leaves, a family of functions \(f_d:[0,b]^d \rightarrow [0,b]\) for every positive integer \(d \ge 1\), and a vertex u in T, we let \(F_{u}(\sigma )\) denote the value obtained at u by iterating the tree recurrences f on the subtree \(T_{u}\) rooted at u under the initial condition \(\sigma \). Formally, we define \(F_u(\sigma ) = b_0\) when \(u\not \in S\) is a leaf, and

2.4 The self-avoiding walk tree and associated recurrences

Given a vertex v in a graph G, one can define a rooted tree \(T_{SAW}\left( v,G\right) \) of self-avoiding walks (called the self-avoiding walk tree, or SAW tree) starting at v, as follows: the root of the tree represents the trivial self-avoiding walk that ends at v, and given any node u in the tree, its children represent all possible self-avoiding walks that can be obtained by extending the self-avoiding walk represented by u by exactly one step (note that the self-avoiding walk tree for a finite graph G is of finite size, since any self-avoiding walk in G is of length at most the number of vertices in G). The importance of the self-avoiding walk tree for computation stems from the beautiful results of Godsil [16] (for the monomer-dimer model) and Weitz [50] (for the hard core model), which allow the derivation of simple recurrences for the monomer probability \(p_v(G)\) on general graphs. We begin with the case of the monomer-dimer model.

2.4.1 Monomer-dimer model

Theorem 3

(Godsil [16]) Let v be a vertex in a graph G, and consider the monomer-dimer model with dimer activity \(\gamma > 0\) on the graphs G and \(T_{SAW}\left( v, G\right) \). We then have

The promised recurrence for \(p_v(G)\) can now be derived using dynamic programming on the tree \(T_{SAW}\left( v, G\right) \). In particular, let T be any tree rooted at \(\rho \), and let \(\rho _i\), \(1 \le i \le d\) be the children of \(\rho \). Denoting by \(p_i\) the monomer probability \(p_{\rho _i}(T_{\rho _i})\) at the root of the subtree \(T_{\rho _i}\), one can then show that (see, e.g., [25])

Remark 4

In what follows, we will often suppress the dependence of \(f_{d,\gamma }\) on \(\gamma \) for convenience of notation.

In terms of our notation for tree recurrences, we note that the actual computation of \(p_\rho (T)\) corresponds to computing \(F_\rho (\varvec{1}_L)\), where the initial condition \(\varvec{1}_L\) assigns the value 1 to all vertices in L, the cutset comprising all the leaves (and with the boundary value \(b_0\) set to 1), since the base case of the recurrence comprises a single vertex which has monomer probability 1 by definition.

Note that the self-avoiding tree can be of exponential size, so that Godsil’s reduction does not immediately yield an efficient algorithm for computing \(p_\rho (G)\). In order to obtain an algorithm, we would need to consider truncated versions of the recurrence, obtained by specifying initial conditions on the cutset \(S_\ell \) comprising all vertices at distance \(\ell \) from \(\rho \). Since \(f_{d, \gamma }\) is monotonically decreasing in each of its arguments, we have

Here, the initial condition \(\varvec{0}_\ell \) (respectively, \(\varvec{1}_\ell \)) assigns the value 0 (respectively, 1) to every vertex in \(S_\ell \). Given these conditions, it is sufficient to show that the difference between \(F_\rho (\varvec{0}_\ell )\) and \(F_\rho (\varvec{1}_\ell )\) decreases exponentially in \(\ell \) in order to establish that truncated versions of the recurrence converge to the true answer \(p_\rho (T)\) exponentially fast in the “truncation length” \(\ell \).

2.4.2 Hard core model

Weitz [50] proved a reduction similar to that of Godsil for the hard core model. However, in contrast to Godsil’s reduction for the monomer-dimer model Weitz’s reduction requires a boundary condition to be applied to the self avoiding walk tree.

Theorem 4

(Weitz [50]) Let v be a vertex in a graph G, and consider the hard core model with vertex activity \(\lambda > 0\) on the graphs G and \(T_{SAW}\left( v, G\right) \). Then, there exists an efficiently computable boundary conditionFootnote 3 \(\mathcal {W}\) on \(T_{SAW}\left( v, G\right) \) such that for any boundary condition \(\sigma \) on G, we have

where (1) the boundary condition \(\sigma \) on the right hand side denotes the natural translation of the boundary condition \(\sigma \) on G to \(T_{SAW}\left( v, G\right) \), and (2) \(\mathcal {W} \cup \sigma \) is the boundary condition obtained by first applying the boundary condition \(\mathcal {W}\), and then \(\sigma \) (that is, \(\sigma \) overrides \(\mathcal {W}\) on vertices on which \(\sigma \) and \(\mathcal {W}\) are both specified and disagree).

We will often refer to a self-avoiding walk tree with Weitz’s boundary condition as “a Weitz SAW tree”.

As in the case of the monomer-dimer model, the theorem allows the computation of \(R_v(\sigma , G)\) using natural recurrences on the tree. Using the same notation as in the case of the monomer-dimer model, we denote \(R_{\rho _i}(\sigma , T_{\rho _i})\) as \(R_i\). It is well known that (see, e.g., [50]) that

We now see that in terms of our notation for tree recurrences, the computation of \(R_\rho (\sigma , T)\) corresponds to computing \(F_\rho (\varvec{\lambda }_L \cup \sigma )\) (with the boundary value \(b_0\) for leaves set to \(\lambda \)), where the initial condition \(\varvec{\lambda }_L \cup \sigma \) assigns the value \(\lambda \) to all vertices in the set L of leaves, and then applies the boundary condition \(\sigma \) (possibly overriding previously assigned values). Note that the boundary condition \(\sigma \) assigns \(R_v = \infty \) for vertices v which are set to occupied by \(\sigma \), and hence, strictly speaking, violates the requirement that initial conditions should only assign bounded values. However, this can be fixed easily by observing that an initial condition which assigns \(R_v = \infty \) is equivalent to the one which assigns \(R_u = 0\) to the parent u of v. Thus, we may assume without loss of generality that our initial conditions only assign values from the interval \([0, \lambda ]\).

Again, as in the case of the monomer-dimer model, we will need to work with truncated trees. As before, we consider initial condition specified on cutsets \(S_\ell \) of vertices at distance \(\ell \) from the root \(\rho \), and use the fact that \(f_{d, \lambda }\) is monotonically decreasing in each of its arguments to see that

Here, the initial condition \(\varvec{0}_\ell \cup \sigma \) (respectively, \(\varvec{\lambda }_\ell \cup \sigma \)) assigns the value 0 (respectively, \(\lambda \)) to every vertex in \(S_\ell \), after which the boundary condition \(\sigma \) is applied, possibly overriding the earlier assignments (from the previous discussion, we can assume that the effect of \(\sigma \) is limited to setting some more vertices to 0). As before, it is then sufficient to show that the difference between \(F_\rho (\varvec{0}_\ell \cup \sigma )\) and \(F_\rho (\varvec{1}_\ell \cup \sigma )\) decreases exponentially in \(\ell \) in order to establish that truncated versions of the recurrence converge to the true answer \(R_\rho (\sigma , T)\) exponentially fast in the “truncation length” \(\ell \).

2.5 From probabilities to the partition function

In this section, we review some standard facts on how approximation algorithms for the marginal probabilities translate into approximation algorithms for the partition function (see, e.g, [13, 50]). We provide the calculations here for the case of the monomer-dimer model, and refer to Weitz [50] for similar calculations for the hard core model.

Let \(v_1, v_2, \cdots , v_n\) be any arbitrary ordering of the vertices of G. Since the monomer-dimer partition function of the empty graph is 1, we then have

Suppose, we have an FPTAS for the probabilities \(p_\rho \) which runs in time \(t(n, 1/\epsilon )\) and produces an output \(\hat{p}\) such that \(p_\rho /(1+\epsilon ) \le \hat{p} \le p_\rho \). Now, given \(\epsilon \le 1\), we use the FPTAS in time \(t\left( n, {2n}/{\epsilon }\right) \) to compute an approximation \(\hat{p}_i\) to the \(p_{v_i}\left( G - \left\{ v_1,\cdots ,v_{i-1}\right\} \right) \). We then have for each i

By multiplying these estimates. we obtain an estimate \(\hat{Z}\) of the partition function which satisfies

where we use the condition \(\epsilon \le 1\) in the last inequality. Thus, the total running time is \(O\left( n\cdot t\left( n, {2n}/{\epsilon }\right) \right) \), which is polynomial in n and \(1/\epsilon \) whenever t is. Thus, it is sufficient to derive an FPTAS for the marginal probabilities in order to obtain an FPTAS for the partition function.

2.6 The connective constant

We now recall the definition of the connective constant of a graph. Given a vertex v in a locally finite graph, we denote by N(v, l) the number of self-avoiding walks of length l in the graph which start at v. The connective constant for infinite graphs is then defined as follows.

Definition 7

(Connective constant: infinite graphs [32]) Let \(G=(V,E)\) be a locally finite infinite graph. The connective constant \(\varDelta (G)\) of G is \(\sup _{v\in V}\limsup _{\ell \rightarrow \infty }N(v,\ell )^{1/\ell }\).

Remark 5

The supremum over v in the definition is clearly not required for vertex-transitive graphs such as Cartesian lattices. Further, in such graphs the \(\limsup \) can be replaced by a limit [32].

The definition was extended in [44] to families of finite graphs parametrized by size. As observed there, such a parametrization is natural for algorithmic applications.

Definition 8

(Connective constant: finite graphs [44]) Let \({\mathcal {F}}\) be a family of finite graphs. The connective constant of \({\mathcal {F}}\) is at most \(\varDelta \) if there exist constants a and c such that for any graph \(G = (V,E)\) in \({\mathcal {F}}\) and any vertex v in G, we have \(\sum _{i=1}^\ell N(v, i) \le c\varDelta ^\ell \) for all \(\ell \ge a\log |V|\).

It is easy to see that the connective constant of a graph of maximum degree \(d+1\) is at most d. However, the connective constant can be much smaller than the maximum degree. For example, though the maximum degree of a graph drawn from the Erdős–Rényi model \(\mathcal {G}\left( n, d/n\right) \) is \(\varTheta (\log n/\log \log n)\) w.h.p, it is not hard to show (see [44]) that for any fixed \(\epsilon > 0\), the connective constant of such a graph is at most \(d(1+\epsilon )\) w.h.p.

Remark 6

Note that the connective constant has a natural interpretation as the “average arity” of the SAW tree, since vertices in \(T_{SAW}\left( v, G\right) \) at distance \(\ell \) from the root are in bijection with self-avoiding walks of length \(\ell \) starting at v.

3 Decay of correlations on the SAW tree

In this section, we lay the groundwork for proving decay of correlations results for the tree recurrences \(F_{\rho }\) defined in Eq. (1) for both the hard core and monomer-dimer models: such a result basically affirms that truncating the recurrence at a small depth \(\ell \) is sufficient in order to approximate \(F_\rho \) with good accuracy. Our proof will use the message approach [28, 42, 43], which proceeds by defining an appropriate function \(\phi \) of the marginals being computed and then showing a decay of correlation result for this function.

Definition 9

(Message [28, 42, 43]) Given a positive real number b, a message is a strictly increasing and continuously differentiable function \(\phi : (0, b] \rightarrow \mathbb {R}\), with the property that the derivative of \(\phi \) is bounded away from 0 on its domain. A message \(\phi \) is guaranteed to admit a continuously differentiable inverse, which we will denote by \(\psi \).

In the rest of this section, we will work in the abstract framework described in Sect. 2.3, to illustrate how the message approach can be used to get strengthened decay of correlation estimates as compared to those obtained from direct analyses of one step of the recurrence. We will then instantiate our framework with appropriately chosen messages for the monomer-dimer and the hard core models in Sects. 4 and 5.

We begin by fixing the boundary value \(b_0\) for the leaves in our recurrence framework, and assume that the initial conditions specify values in the interval [0, b]. We assume that we have a set of tree recurrences \(f_d:[0,b]^d \rightarrow [0,b]\) for every positive integer \(d \ge 1\). The only constraints we put on the recurrences in this section are the following (both of which are trivially satisfied by the recurrences for the hard core and the monomer-dimer model).

Condition 5

(Consistency) We say that a set of recurrences \(\left\{ f_d\right\} _{d \ge 1}\), where \(f_d\) is d-variate, are consistent if they obey the following two conditions:

-

(1)

If \(\varvec{x} \in \mathbb {R}^d\) is a permutation of \(\varvec{y}\in \mathbb {R}^d\), then \(f_d(\varvec{x}) = f_d(\varvec{y})\).

-

(2)

If all but the first k co-ordinates of \(\varvec{x} \in \mathbb {R}^d\) are 0, then \(f_d(\varvec{x}) = f_k(x_1, x_2, x_3, \ldots , x_k)\).

Given the message \(\phi \) (and its inverse \(\psi \)), we further define \(f_d^\phi \) by

We then have the following simple consequence of the mean value theorem (a proof can be found in Appendix B).

Lemma 1

(Mean value theorem) Consider two vectors \(\varvec{x}\) and \(\varvec{y}\) in \(\phi ([0,B])^d\). Then there exists a vector \(\varvec{z} \in [0, \infty )^d\) such that

where \(\varPhi := \phi '\) is the derivative of \(\phi \), and by a slight abuse of notation we denote by \(\frac{\partial f_{d}}{\partial z_i}\) the partial derivative of \(f_{d}(R_1, R_2,\ldots ,R_d)\) with respect to \(R_i\) evaluated at \(\varvec{R} = \varvec{z}\).

The first step of our approach is similar to that taken in the papers [27, 28, 42, 43] in that we will use an appropriate message—along with the estimate in Lemma 1—to argue that the “distance” between two input message vectors \(\varvec{x}\) and \(\varvec{y}\) at the children of a vertex shrinks by a constant factor at each step of the recurrence. Previous works [27, 28, 42, 43] showed such a decay on some version of the \(\ell _\infty \) norm of the “error” vector \(\varvec{x} - \varvec{y}\): this was achieved by bounding the appropriate dual \(\ell _1\) norm of the gradient of the recurrence. Our intuition is that in order to achieve a bound in terms of a global quantity such as the connective constant, it should be advantageous to use a more global measure of the error such as an \(\ell _q\) norm for some \(q < \infty \).

In line with the above plan, we will attempt to bound the right hand side of eq. (8) in terms of \(\Vert \varvec{x}-\varvec{y} \Vert _{q}\) for an appropriate value of \(q < \infty \) by maximizing the sum while keeping \(f_{d}(\varvec{z})\) fixed. In the special case \(q=2\), it is in fact posible to carry out this maximization using relatively simple concavity arguments. This was the approach taken in [44], but the restriction \(q=2\) leads to sub-optimal results. Here we get past this limitation by using a more flexible optimization than that used in [44]. To do this, we will seek to establish the following property for our messages (the exponent a will be the Hölder conjugate of the value of q that we eventually use).

Definition 10

Given a consistent family of recurrences \(\left\{ f_d\right\} _{d \ge 1}\), a message \(\phi \) (with \(\varPhi :=\phi '\)) is said to be symmetrizable with exponent a with respect to the family if it satisfies the following two conditions:

-

(1)

\(\lim _{x_i\rightarrow {0^+}}\frac{1}{\varPhi (x_i)}\left| \frac{\partial f_d}{\partial x_i}\right| = 0\) for all \(d \ge 1\), and for any fixed values of the \(x_j\), \(j \ne i\).

-

(2)

Let \(\mathcal {D}\) be the domain of the recurrence family, so that as discussed above, it is of the form [0, b] for a positive real b. For every positive integer d and every real \(B > 0\) for which the program

$$\begin{aligned} \max \qquad&\sum _{i=1}^d \left( \frac{1}{\varPhi (x_i)} \left| \frac{\partial f_{d}}{\partial x_i}\right| \right) ^a, \qquad \text {where}\\&f_d(\varvec{x}) = B\\&x_i \in \mathcal {D},\qquad 1\le i\le d \end{aligned}$$is feasible, it also has a solution \(\varvec{x}\) in which all the non-zero entries of \(\varvec{x}\) are equal. Here, we use condition 1 to continuously extend the definition of the d functions \(\frac{1}{\varPhi (x_i)} \left| \frac{\partial f_{d}}{\partial x_i}\right| \) so that that they evaluate to 0 when \(x_i = 0\).

For symmetrizable messages, we will be able to bound the quantity \(\vert f_{d}^\phi (\varvec{x}) - f_{d}^\phi (\varvec{y})\vert \) in terms of \(\Vert \varvec{x} - \varvec{y} \Vert _{q}\), where \(1/a + 1/q = 1\), and our improved correlation decay bounds will be based on the fact that symmetrizability can be shown to hold under a wider range of values of q than that required by the concavity conditions used in [44]. Our bounds will be stated in terms of the following notion of decay.

Notation. Given a d-variate function \(f_d\) and a scalar x, we denote by \(f_d(x)\) the quantity \(f_d(x, x, \ldots , x)\).

Definition 11

(Decay factor \(\alpha \)) Let \(\phi \) be a message with derivative \(\varPhi \), and let a and q be positive reals such that \(\frac{1}{a} + \frac{1}{q} = 1\). We define the functions \(\varXi _{\phi ,q}(d, x)\) and \(\xi _{\phi ,q}(d)\) as follows:

The decay factor \(\alpha \) is then defined as

Armed with the above definitions, we are now ready to prove Lemma 2, which provides the requisite decay bound for one step of the tree recurrence. The main technical step in applying this lemma is to find a, q as in the definition and a message \(\phi \) symmetrizable with exponent a for which the decay factor \(\alpha \) is small; Lemma 3 below then shows how the decay factor comes into play in proving exponential decay of correlations over the tree.

Lemma 2

Let \(\phi \) be a message with derivative \(\varPhi \), and let a and q be positive reals such that \(\frac{1}{a} + \frac{1}{q} = 1\). If \(\phi \) is symmetrizable with exponent a, then for any two vectors \(\varvec{x}, \varvec{y}\) in \(\phi ([0, b])^d\), there exists an integer \(k \le d\) such that

Proof

We apply Lemma 1. Assuming \(\varvec{z}\) is as defined in that lemma, we have by Hölder’s inequality

Since \(\phi \) is symmetrizable with exponent a, we can replace \(\varvec{z}\) in the above inequality with a vector \(\varvec{\tilde{z}}\) all of whose non-zero entries are equal to some fixed real \(\tilde{z}\). Let \(k \le d\) be the number of non-zero entries in \(\varvec{\tilde{z}}\). Using the consistency condition, we then get

Raising both sides to the power q, and using \(\frac{1}{a} + \frac{1}{q} = 1\) and the definitions of the functions \(\varXi \) and \(\xi \), we get the claimed inequality.

Given a message \(\phi \) satisfying the conditions of Lemma 2, we can easily prove the following lemma on the propagation of errors in locally finite infinite trees. Recall that \(F_\rho (\sigma )\) denotes the value computed by the recurrence at the root \(\rho \) under an initial condition \(\sigma \). The lemma quantifies the dependence of \(F_\rho (\sigma )\) on initial conditions \(\sigma \) which are fixed everywhere except at some cutset C, in terms of the distance of C from \(\rho \).

Lemma 3

Let T be a finite tree rooted at \(\rho \). Let C be a cutset in T at distance at least 1 from the root which does not contain any leaves, and let \(C'\) be the cutset consisting of the children of vertices in C. Consider two arbitrary initial conditions \(\sigma \) and \(\tau \) on \(T_{\le C'}\) which differ only on \(C'\) (so that they agree, in particular, on the default values they assign to the leaves of \(T_{\le C'}\) that are not in \(C'\)), and which assign values from the interval [0, b]. Given a recurrence family \(\left\{ f_d\right\} _{d\ge 1}\), let a and q be positive reals such that \(\frac{1}{a} + \frac{1}{q} = 1\) and suppose \(\phi \) is a message that is symmetrizable with exponent a. We then have

where \(\alpha \) is as defined in Eq. (9), and L and M are defined as follows:

For a proof of this lemma, see Appendix B.

4 A message for the hard core model

We now instantiate the approach outlined in Sect. 3 to prove Theorem 1 for the hard core model. Our message is the same as that used in [28]; we choose

Notice that \(\phi \) is a strictly increasing, continuously differentiable function on \((0, \infty )\), and also satisfies the technical condition that the derivative \(\varPhi \) be bounded away from zero on any finite interval, as required in the definition of a message. Our improvements over the results in [44] depend upon proving the following fact about the message \(\phi \).

Lemma 4

For any \(a \ge 2\), the message \(\phi \) as defined in Eq. (10) is symmetrizable with exponent a with respect to the tree recurrence \(\left\{ f_{d,\lambda }\right\} _{d\ge 1}\) of the hard core model.

The proof of the above lemma is quite technical and is deferred to Appendix C.2. The crucial advantage of Lemma 4 is the flexibility it allows in the choice of exponent a. The decay factor \(\alpha \) in Lemma 3 which governs the rate of decay in the tree recurrences depends upon the choice of the exponent, and as we show later in this section, it is possible to obtain an optimal decay rate by choosing an appropriate exponent satisfying Lemma 4.

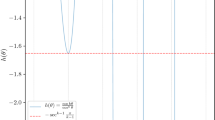

We now outline how the flexibility in the choice of the exponent afforded by Lemma 4 helps us obtain an optimal decay rate. Given the vertex activity \(\lambda \), we first define the quantity \(\varDelta _c :=\varDelta _c(\lambda )\) as the unique solution in t of \(\lambda _c(t) = \lambda \) (the existence and uniqueness of \(\varDelta _c\) follow from the easily verified fact that \(\lambda _c\) is a strictly decreasing function and maps the interval \((1, \infty )\) onto \((0, \infty )\)). To get sufficient stepwise decay when Lemma 3 is applied, we need to show that for all \(d > 0\), \(\xi _{\phi ,q}(d) \le \frac{1}{\varDelta _c}\). On the other hand, irrespective of the choice of q (and hence a), it is easy to show that \(\xi _{\phi ,q}(\varDelta _c) = \frac{1}{\varDelta _c}\). Thus, in order to fulfill our requirements for the decay rate, we need to choose a value of q such that \(\xi _{\phi , q}(d)\) is maximized at \(d = \varDelta _c\). Now, it turns out that although symmetrizability is easier to establish when \(q \ge 2\) (i.e., when \(a \le 2\)), \(\xi _{\phi , q}(d)\) is a strictly increasing function of d for such q. Hence, in order to produce a maximum at \(d = \varDelta _c\), we need to choose \(q \le 2\) (equivalently, \(a \ge 2\)). In fact, as seen in [44], even \(q = a = 2\) is not sufficient for an optimal result, and we actually need \(q < 2\) to fulfill the requirements on the decay rate. The hypotheses of Lemma 4 then require symmetrizability to be established for this more challenging range of values of a and q. In contrast, the simpler concavity arguments used in [44] restricted the choice of the exponent to \(a = 2\), and hence led to a suboptimal decay rate.

Our analysis of the function \(\xi _{\phi ,q}(d)\) now begins with the following simple lemma, the special case \(q=2\) of which was proven in [44]. The lemma merely shows how to perform one of the maximizations needed in the definition of the decay factor \(\alpha \). In what follows, we drop the subscript \(\phi \) for simplicity of notation.

Lemma 5

Consider the hard core model with any fixed vertex activity \(\lambda > 0\). For any \(q \ge 1\) and with \(\phi \) as defined in Eq. (10), we have \(\xi _q(d) = \varXi _q(d, \tilde{x}_\lambda (d))\), where \(\tilde{x}_\lambda (d)\) is the unique solution to

Proof

Plugging in \(\varPhi \) from Eq. (10) in the definition of \(\varXi \), we get

Taking the partial derivative with respect to the second argument, we get

For fixed d, the quantity outside the square brackets is always positive, while the expression inside the square brackets is strictly decreasing in x. Thus, any zero of the expression in the brackets will be a unique maximum of \(\varXi _q\). The fact that such a zero exists follows by noting that the partial derivative is positive at \(x = 0\) and negative as \(x \rightarrow \infty \). Thus, \(\varXi _q(d, x)\) is maximized at \(\tilde{x}_{\lambda }(d)\) as defined above, and hence \(\xi _q(d) = \varXi _q(d, \tilde{x}_\lambda (d))\), as claimed.

We now choose a and q as follows:

Note that since \(\log (1+y) \le y\) for all \(y \ge 0\), we get that \(q \le 2\) (and hence \(a \ge 2\)) by using \(y = \frac{1}{\varDelta _c - 1}\), and noting that \(\varDelta _c > 1\). As noted above, \(\xi _{\phi , q}(\varDelta _c) = 1/\varDelta _c\) holds for all q. The above value of q is derived by maximizing \(\xi _{\phi , q}(d)\) over d for an arbitrary q, and then stipulating that this maximum occurs at \(d = \varDelta _c\). The details of this computation justifying this choice of q appear in Appendix C.1. Here, we record this property of our choice of q in the following lemma. To simplify notation and to emphasize dependence of q upon \(\lambda \), we define the function \(\nu _\lambda (d)\) as follows:

Lemma 6

Fix \(\lambda > 0\) and let \(\varDelta _c(\lambda ) > 1\) be the unique solution to \(\lambda _c(t) = \lambda \). The function \(\nu _\lambda : \mathbb {R}^+ \rightarrow \mathbb {R}^{+}\) is maximized at \(d = \varDelta _c :=\varDelta _c(\lambda )\). Further,

The proof of the above lemma is somewhat technical, and is deferred to Appendix C.1. The lemma shows that when \(\lambda < \lambda _c(\varDelta )\), the decay factor \(\alpha < \frac{1}{\varDelta }\). We now proceed with the proof of Theorem 1. This only requires some standard arguments, with the only new ingredient being the improved estimate on decay factor proved in Lemma 6.

Proof of Theorem 1

Let \({\mathcal {G}}\) be any family of finite or infinite graphs with connective constant \(\varDelta \). We prove the result for any fixed \(\lambda \) such that \(\lambda < \lambda _c(\varDelta )\). For such \(\lambda \), we have \(\varDelta _c(\lambda ) > \varDelta \) (since \(\lambda _c\) is a decreasing function). Using Lemma 6 we then see that there is an \(\epsilon > 0\) such that \(\nu _{\lambda }(d)\varDelta \le 1 - \epsilon \) for all \(d > 0\).

We first prove that the hard core model with these parameters exhibits strong spatial mixing on this family of graphs. Let G be any graph from \({\mathcal {G}}\), v any vertex in G, and consider any boundary conditions \(\sigma \) and \(\tau \) on G which differ only at a distance of at least \(\ell \) from v. We consider the Weitz self-avoiding walk tree \(T_{SAW}\left( v, G\right) \) rooted at v (as defined in Sect. 2.6). As before, we denote again by \(\sigma \) (respectively, \(\tau \)) the translation of the boundary condition \(\sigma \) (respectively, \(\tau )\) on G to \(T_{SAW}\left( v, G\right) \). From Weitz’s theorem, we then have that \(R_v(\sigma , G) = R_v(\mathcal {W} \cup \sigma , T_{SAW}\left( v, G\right) )\) (respectively, \(R_v(\tau , G) = R_v(\mathcal {W} \cup \tau , T_{SAW}\left( v, G\right) )\)).

Consider first the case where G is infinite. Let \(C_\ell \) denote the cutset in \(T_{SAW}\left( v, G\right) \) consisting of all vertices at distance \(\ell \) from v. Since G has connective constant at most \(\varDelta \), it follows that for \(\ell \) large enough, we have \(|C_\ell | \le \varDelta ^\ell (1-\epsilon /2)^{-\ell }\). Further, in the notation of Lemma 3, \(\nu _\lambda (d)\varDelta = 1-\epsilon \) implies that the decay factor \(\alpha \) [defined in Eq. (9)] is at most \((1-\epsilon )/\varDelta \). We now apply Lemma 3. We first observe that given our message \(\phi \), we can bound the quantities L and M in the lemma as

The bounds on L and M follows from the fact that the values of the occupation ratio computed at any internal node of the tree lie in the range \([0, \lambda ]\). Setting \(c_0 = (L/M)^q\), we can the apply the lemma to get

which establishes strong spatial mixing in G, since \(1-\epsilon < 1-\epsilon /2\).

We now consider the case when \({\mathcal {G}}\) is a family of finite graphs, and G is a graph from \({\mathcal {G}}\) of n vertices. Since the connective constant of the family is \(\varDelta \), there exist constants a and c (not depending upon G) such that for \(\ell \ge a \log n\), \(\sum _{i=1}^\ell N(v, \ell ) \le c\varDelta ^\ell \). We now proceed with the same argument as in the infinite case, but choosing \(\ell \ge a \log n\). The cutset \(C_\ell \) is again chosen to be the set of all vertices at distance \(\ell \) from v in \(T_{SAW}\left( v,G\right) \), so that \(|C_\ell | \le c\varDelta ^{\ell }\). As before, we then have for \(\ell > a \log n\),

which establishes the requisite strong spatial mixing bound.

In order to prove the algorithmic part, we first recall an observation of Weitz [50] that an FPTAS for the “non-occupation” probabilities \(1 - p_v\) under arbitrary boundary conditions is sufficient to derive an FPTAS for the partition function. We further note that if the vertex v is not already fixed by a boundary condition, then \(1-p_v = \frac{1}{1 + R_v} \ge \frac{1}{1+\lambda }\), since \(R_v\) lies in the interval \([0,\lambda ]\) for any such vertex. Hence, an additive approximation to \(R_v\) with error \(\mu \) implies a multiplicative approximation to \(1-p_v\) within a factor of \(1\pm \mu (1+\lambda )\). Thus, an algorithm that produces in time polynomial in n and \(1/\mu \) an additive approximation to \(R_v\) with error at most \(\mu \) immediately gives an FPTAS for \(1-p_v\), and hence, by Weitz’s observation, also for the partition function. To derive such an algorithm, we again use the tree \(T_{SAW}\left( v, G\right) \) considered above. Suppose we require an additive approximation with error at most \(\mu \) to \(R_v(\sigma , G) = R_v(\sigma , T_{SAW}\left( v, G\right) )\). We notice first that \(R_v = 0\) if and only if there is a neighbor of v that is fixed to be occupied in the boundary condition \(\sigma \). In this case, we simply return 0. Otherwise, we expand \(T_{SAW}\left( v, G\right) \) up to depth \(\ell \) for some \(\ell \ge a\log n\) to be specified later. Notice that this subtree can be explored in time \(O\left( \sum _{i=1}^\ell N(v,i)\right) \) which is \(O(\varDelta ^\ell )\) since the connective constant is at most \(\varDelta \).

We now consider two extreme boundary conditions \(\sigma _+\) and \(\sigma _-\) on \(C_\ell \): in \(\sigma _+\) (respectively, \(\sigma _-\)) all vertices in \(C_\ell \) that are not already fixed by \(\sigma \) are fixed to “occupied” (respectively, unoccupied). The form of the recurrence ensures that the true value \(R_v(\sigma )\) lies between the values \(R_v(\sigma _+)\) and \(R_v(\sigma _-)\). We compute the recurrence for both these boundary conditions on the tree. The analysis leading to eq. (13) ensures that, since \(\ell \ge a \log n\), we have

for some fixed positive constants \(M_1\) and \(M_2\). Now, assume without loss of generality that \(R_v(\sigma _+) \ge R_v(\sigma _-)\). By the preceding observations, we then have

By choosing \(\ell = a\log n + O(1) +O(\log (1/\mu ))\), we get the required \(\pm \mu \) approximation. Further, by the observation above, the algorithm runs in time \(O\left( \varDelta ^{\ell }\right) \), which is polynomial in n and \(1/\mu \) as required.

We now prove Corollary 1.

Proof of Corollary 1

Since \(\lambda < \lambda _c(d)\), there exists an \(\epsilon > 0\) such that \(\lambda < \lambda _c(d(1+\epsilon ))\). Fix \(\beta > 0\). In order to prove the corollary, we only need to show that graphs drawn from \(\mathcal {G}(n, d/n)\) have connective constant at most \(d(1+\epsilon )\) with probability at least \(1 - n^{-\beta }\).

Recall that \(N(v,\ell )\) is the number of self-avoiding walks of length \(\ell \) starting at v. Suppose \(\ell \ge a\log n\), where a is a constant depending upon the parameters \(\epsilon \), \(\beta \) and d which will be specified later. We first observe that

and hence by Markov’s inequality, we have \(\sum _{i=1}^\ell N(v,i) \le d^\ell \frac{d}{d-1}(1+\epsilon )^\ell \) with probability at least \(1 - (1+\epsilon )^{-\ell }\). By choosing a such that \(a\log (1+\epsilon ) \ge \beta + 2\), we see that this probability is at least \(1 - n^{-\left( \beta +2\right) }\). By taking a union bound over all \(\ell \) with \(a\log n \le \ell \le n\) and over all vertices v, we see that the connective constant \(\varDelta \) is at most \(d(1+\epsilon )\) with probability at least \(1-n^{-\beta }\). We therefore see that with probability at least \(1-n^{-\beta }\), the conditions of Theorem 1 are satisfied. This completes the proof.

5 A message for the monomer-dimer model

In this section, we apply the general framework of Sect. 3 to the monomer-dimer model. As in the case of the hard core model, the first step is to choose an appropriate message. Unfortunately, unlike the case of the hard core model where we could show that an already known message was sufficient, we need to find a new message function in this case. We claim that the following message works:

Note that \(\phi \) is strictly increasing and continuously differentiable on the interval (0, 1], and its derivative is bounded away from 0 on that interval. Thus, \(\phi \) satisfies the conditions required in the definition of a message (note that the bound b used in the definition is 1 in the case of the monomer-dimer model). Now, in order to apply Lemma 3, we first study the symmetrizability of \(\phi \) in the following technical lemma.

Lemma 7

Fix \(r \in (1, 2]\). The message \(\phi \) as defined in Eq. (14) is symmetrizable with exponent r with respect to the tree recurrences \(\left\{ f_{d,\gamma }\right\} _{d \ge 1}\) of the monomer-dimer model.

We defer the proof of the above lemma to Appendix D.1. As in the case of the hard core model, we will need to make a careful choice of the exponent r in order to obtain an optimal decay factor. We begin with a technical lemma which characterizes the behavior of the function \(\xi \) used in the definition of the decay factor. For ease of notation, we drop the subscript \(\phi \) from the notation for \(\xi \).

Lemma 8

Consider the monomer-dimer model with edge activity \(\gamma \), and let \(\phi \) be the message chosen in (14). For any \(q > 1\), we have \(\xi _q(d) = \varXi _q(d, \tilde{p}_\gamma (d))\), where \(\tilde{p}_\gamma (d)\) satisfies \(\varXi _q^{(0,1)}(d, \tilde{p}_\gamma (d)) = 0\) and is given by

Proof

Plugging in \(\varPhi \) from eq. (14) in the definition of \(\varXi \), we get

Taking the partial derivative with respect to the second argument, we get

For fixed d, and \(0\le x \le 1\), the quantity outside the square brackets is always positive, while the expression inside the square brackets is strictly decreasing in x. Thus, any zero of the expression in the brackets in the interval [0, 1] will be a unique maximum of \(\varXi _q\). By solving the quadratic, we see that \(\tilde{p}_\gamma (d)\) as defined above is such a solution. Thus, \(\varXi _q(d, x)\) is maximized at \(\tilde{p}_{\gamma }(d)\) as defined above, and hence \(\xi _q(d) = \varXi _q(d, \tilde{p}_\gamma (d))\).

Given the edge activity \(\gamma \) and an upper bound \(\varDelta \) on the connective constant of the graph family being considered, we now choose \(D > \max (\varDelta , 3/(4\gamma ))\). We claim that we can get the required decay factor by choosing

Note that the choice of D implies that \(1 < r \le 2\), so that \(\phi \) is symmetrizable with respect to r. The following lemma shows that this choice of r indeed gives us the required decay factor. As in the case of the hard core model, we emphasize the dependence of the decay parameter on the model parameters by setting

Lemma 9

Fix \(\gamma > 0\) and \(D > 3/{4\gamma }\), and let q be as chosen in (15). Then the function \(\nu _\gamma : \mathbb {R}^+ \rightarrow \mathbb {R}^{+}\) is maximized at \(d = D\). Further, the decay factor \(\alpha \) is given by

Proof

We consider the derivative of \(\nu _\gamma (d)\) with respect to d. Recalling that \(\nu _\gamma (d) = \xi (d)= \varXi (d, \tilde{p}_\gamma (d))\) and using the chain rule, we have

where we in the last line we substitute the values \(\tilde{p}_\gamma (d) = (\sqrt{1 + 4\gamma d} - 1)/(2\gamma d)\) from Lemma 8 and \(q = \sqrt{1 + 4\gamma D}\) from eq. (15). Now, we note that in eq. (16), the quantity outside the square brackets is always positive, while the quantity inside the square brackets is a strictly decreasing function of d which is positive for \(d < D\) and negative for \(d > D\). It follows that \(\nu _\gamma '(d)\) has a unique zero at \(d = D\) for \(d \ge 0\), and this zero is a global maximum of \(\nu _\gamma \).

We are now ready to prove our main result for the monomer-dimer model, Theorem 2 from the introduction. Given Lemmas 3 and 9, only some standard arguments are needed to finish the proof.

Proof of Theorem 2

Let \({\mathcal {F}}\) be any family of finite graphs with connective constant at most \(\varDelta \). Given the vertex activity \(\gamma \) of the monomer-dimer model, we choose \(D = \max (\varDelta , 3/(4\gamma ))\). Using Lemma 9, we then see that the decay factor \(\alpha \) appearing in Lemma 3 can be chosen to be

Now, let G be any graph (with n vertices) from \({\mathcal {F}}\), and let v be a vertex in G. As observed in Sect. 2.2, it is sufficient to construct an FPTAS for \(p_v(G)\) in order to derive an FPTAS for the partition function.

Consider the self-avoiding walk tree \(T_{SAW}\left( v, G\right) \) rooted at v (as defined in Sect. 3). From Godsil’s theorem (Theorem 3), we know that \(p_v(G) = p_v(T_{SAW}\left( v, G\right) )\). Let \(C_\ell \) denote the cutset in \(T_{SAW}\left( v, G\right) \) consisting of all vertices at distance \(\ell \) from v. Since \(\mathcal {F}\) has connective constant at most \(\varDelta \), there exist constants a and c such that if \(\ell \ge a \log n\), we have \(\sum _{i=1}^\ell N(v, \ell ) \le c\varDelta ^\ell \). We will now apply Lemma 3 with q as defined in eq. (15). We first observe that the quantities L and M in the lemma can be taken to be

since the degree of any vertex in G is at most n.Footnote 4 Now, defining \(c_0 :=(M/L)^q\), we have

Raising both sides to the power 1 / q and substituting for \(c_0\) and q, we then have

To analyze the running time, we note that in order to obtain a \((1 \pm \epsilon )\) multiplicative approximation to \(p_v(G)\), it is sufficient to obtain a \(\pm \epsilon /(1 +\gamma n)\) additive approximation; this is because \(p_v(G) \ge 1/(1 +\gamma n))\) since the degree of each vertex in G is at most n. Now, as observed in Sect. 2.3, \(p_v(G)\) always lies between the quantities \(F_v(\varvec{0}_\ell )\) and \(F_v(\varvec{1}_\ell )\), so in order to obtain a \(\pm \epsilon /(1+\gamma n)\) approximation, it is sufficient to choose \(\ell \ge a \log n\) large enough so that the right hand side of eq. (18) is at most \(\epsilon /(1 + \gamma n)\). Denoting by \(\beta \) the quantity in the parenthesis on the right hand side of eq. (18), we can ensure this by choosing

Further, given such an \(\ell \), the running time of the algorithm is \(O(\sum _{i=1}^\ell N(v, \ell )) = O(\varDelta ^\ell )\), since this is the time it takes to expand the self-avoiding walk tree up to depth \(\ell \). Noting that \(1/(\log (1/\beta )) = \sqrt{\gamma \varDelta } + \varTheta (1)\), we obtain an algorithm running in time

which provides a \((1\pm \epsilon )\) multiplicative approximation for \(p_v(G)\). Recalling the arguments in Sect. 2.5, we see that this yields an algorithm for approximating the partition function up to a multiplicative factor of \((1 \pm \epsilon )\) with the same asymptotic exponent in the running time. This completes the proof.

Remark 7