Abstract

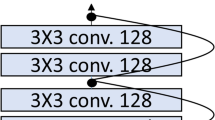

Training a neural network is relatively easy when it has only a few layers, but the situation changes rapidly as more layers are added and a deeper architecture is built. The vanishing gradient problem and the added complexity make deeper networks harder to train, and training becomes more time consuming and resource intensive. Adding residual blocks makes training more effective even for more complex architectures: skip connections between layers improve the efficiency of a residual network (ResNet) in what would otherwise be a time-consuming procedure. This study covers the implementation of residual networks, their operation, their formulae, and how they address the vanishing gradient problem. ResNet is observed to obtain good accuracy on an image recognition task and to be easier to optimize. In this study, a ResNet with a depth of 34 layers, which is both denser than VGG nets and less complicated, is tested on the CIFAR-10 dataset. With this architecture, ResNet achieves error rates of up to 20% on the CIFAR-10 test set after 80 epochs of training; more epochs can decrease the error further. The results of ResNet are compared with those of its corresponding convolutional network (ConvNet) without skip connections. The findings indicate that ResNet offers higher accuracy but is more prone to overfitting. To improve accuracy, overfitting-prevention techniques have been used, including stochastic augmentation of the training data and the addition of dropout layers to the network.
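The effect of a skip connection on gradient flow can be illustrated with a toy scalar model. The sketch below is not the 34-layer network from the study: it assumes a chain of identical one-unit layers, and the names `plain_layer`, `residual_layer`, and `input_gradient`, the weight `w = 0.5`, and the `tanh` activation are all hypothetical choices made for illustration. A plain layer computes `w * tanh(h)`, so each local derivative is at most `w`; a residual layer computes `h + w * tanh(h)`, so the identity path adds 1 to each local derivative.

```python
import math


def plain_layer(h, w):
    """Plain layer h_next = w * tanh(h); returns (output, d output / d h)."""
    t = math.tanh(h)
    return w * t, w * (1.0 - t * t)


def residual_layer(h, w):
    """Residual layer h_next = h + w * tanh(h); the skip path adds 1 to the derivative."""
    out, grad = plain_layer(h, w)
    return h + out, 1.0 + grad


def input_gradient(layer, depth, h0=0.5, w=0.5):
    """Apply the chain rule through `depth` layers to get d h_depth / d h0."""
    h, grad = h0, 1.0
    for _ in range(depth):
        h, local = layer(h, w)
        grad *= local  # product of per-layer derivatives
    return grad


if __name__ == "__main__":
    # Toy assumption: every layer shares the same scalar weight w = 0.5.
    for depth in (5, 20, 34):
        plain = input_gradient(plain_layer, depth)
        residual = input_gradient(residual_layer, depth)
        print(f"depth={depth:2d}  plain={plain:.3e}  residual={residual:.3e}")
```

In this toy setting the plain chain's gradient shrinks geometrically with depth, while the residual chain's gradient never drops below 1 because every factor in the product is at least 1. Real networks use matrices, convolutions, and normalization, so this only conveys the intuition behind the skip connection.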

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Borawar, L., Kaur, R. (2023). ResNet: Solving Vanishing Gradient in Deep Networks. In: Mahapatra, R.P., Peddoju, S.K., Roy, S., Parwekar, P. (eds) Proceedings of International Conference on Recent Trends in Computing. Lecture Notes in Networks and Systems, vol 600. Springer, Singapore. https://doi.org/10.1007/978-981-19-8825-7_21

DOI: https://doi.org/10.1007/978-981-19-8825-7_21

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-8824-0

Online ISBN: 978-981-19-8825-7

eBook Packages: Intelligent Technologies and Robotics (R0)