Abstract

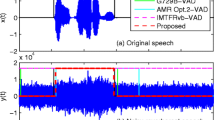

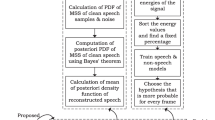

Speech is degraded in the presence of background noise. Accurately detecting voiced segments in the degraded signal is crucial for many speech processing applications. This paper addresses the problem of separating speech and non-speech (noise/silence) segments in non-stationary noisy environments by means of a Voice Activity Detector (VAD). A VAD detects speech and non-speech segments by extracting speech features and comparing them to a threshold. In this paper, the VAD algorithms are based on two speech features: energy and spectral centroid. The NOIZEUS speech corpus, which contains speech degraded by non-stationary noises at four different SNRs, is used. The performance of the VAD algorithms is evaluated using F-score and Euclidean distance in comparison with the ground-truth VAD. Results demonstrate that, for the different noise conditions tested, a weighted spectral centroid VAD achieves outstanding performance.
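As a rough illustration of the feature-and-threshold idea described in the abstract, the following sketch computes short-time energy and a standard (unweighted) spectral centroid per frame and compares a feature to a fixed threshold. The function names, frame length, hop size, and threshold are assumptions made for this example and are not taken from the chapter. The weighting used by the weighted spectral centroid VAD is not specified here, so it is not reproduced.

```python
import cmath
import math


def frame_signal(x, frame_len, hop):
    """Split a signal into frames of frame_len samples, hop samples apart."""
    return [x[i:i + frame_len] for i in range(0, len(x) - frame_len + 1, hop)]


def short_time_energy(frame):
    """Mean squared amplitude of one frame."""
    return sum(s * s for s in frame) / len(frame)


def spectral_centroid(frame, sample_rate):
    """Magnitude-weighted mean frequency (Hz) over the non-negative DFT bins.

    Uses a plain O(n^2) DFT to stay dependency-free; returns 0.0 for an
    all-zero frame, where the centroid is undefined.
    """
    n = len(frame)
    num = 0.0
    den = 0.0
    for k in range(n // 2 + 1):
        coeff = sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n)
                    for t in range(n))
        mag = abs(coeff)
        num += (k * sample_rate / n) * mag
        den += mag
    return num / den if den > 0 else 0.0


def threshold_vad(features, threshold):
    """Binary decision per frame: 1 if the feature exceeds the threshold."""
    return [1 if f > threshold else 0 for f in features]


# Hypothetical test signal: 64 samples of silence, then a 1 kHz tone at 8 kHz.
signal = [0.0] * 64 + [math.sin(2 * math.pi * 1000 * t / 8000) for t in range(64)]
frames = frame_signal(signal, frame_len=32, hop=32)
energies = [short_time_energy(f) for f in frames]
centroids = [spectral_centroid(f, 8000) for f in frames]
decisions = threshold_vad(energies, threshold=0.1)
```

In practice the threshold would be chosen from the data rather than fixed by hand, and an FFT routine would replace the direct DFT for realistic frame sizes.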


Notes

1. A median filter is a non-linear filtering technique used to remove noise from a signal [9].

2. A binary mask is the binary decision taken by a VAD. If the measured value exceeds a threshold, then VAD = 1 (voiced segment); otherwise, VAD = 0 (noise/silence).
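The two notes above, together with the evaluation measures named in the abstract, can be sketched as follows. This is a minimal example under stated assumptions: the median filter is applied here to the frame-level binary mask (the chapter may apply it at a different stage), the kernel size of 3 is arbitrary, and the Euclidean distance is taken between the predicted and ground-truth masks, which is one plausible reading rather than the chapter's confirmed definition. All function names and the sample masks are hypothetical.

```python
import math
from statistics import median


def median_filter(values, kernel=3):
    """Sliding-window median with edge replication; kernel must be odd."""
    half = kernel // 2
    padded = [values[0]] * half + list(values) + [values[-1]] * half
    return [median(padded[i:i + kernel]) for i in range(len(values))]


def f_score(predicted, reference):
    """F1 score of a binary mask against a ground-truth mask."""
    tp = sum(1 for p, r in zip(predicted, reference) if p == 1 and r == 1)
    fp = sum(1 for p, r in zip(predicted, reference) if p == 1 and r == 0)
    fn = sum(1 for p, r in zip(predicted, reference) if p == 0 and r == 1)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def euclidean_distance(predicted, reference):
    """Euclidean distance between two masks (assumed definition)."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)))


# Hypothetical frame-level decisions with two isolated errors.
raw_mask = [0, 0, 1, 0, 0, 1, 1, 0, 1, 1, 0, 0]
ground_truth = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 0, 0]

smoothed_mask = median_filter(raw_mask, kernel=3)
raw_f = f_score(raw_mask, ground_truth)
smoothed_f = f_score(smoothed_mask, ground_truth)
raw_dist = euclidean_distance(raw_mask, ground_truth)
```

A higher F-score and a smaller distance both indicate closer agreement with the ground-truth mask; in this toy case the median filter removes the isolated spurious detection and fills the one-frame gap.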

References

Romero-Fresco, P.: Subtitling through speech recognition: Respeaking (2020)

Yadav, S., Rai, A.: Learning Discriminative Features for Speaker Identification and Verification. In: Interspeech, pp. 2237–2241 (2018)

Vincent, E., Virtanen, T., Gannot, S.: Audio Source Separation and Speech Enhancement. John Wiley & Sons (2018)

Benyassine, A., et al.: ITU-T Recommendation G.729 Annex B: a silence compression scheme for use with G.729 optimized for V.70 digital simultaneous voice and data applications. IEEE Commun. Mag. 35(9), 64–73 (1997)

Kristjansson, T., Deligne, S., Olsen, P.: Voicing features for robust speech detection. In: 9th European Conference on Speech Communication & Technology (2005)

Chang, J.H., Kim, N.S., Mitra, S.K.: Voice activity detection based on multiple statistical models. IEEE Trans. Signal Process. 54(6), 1965–1976 (2006)

Alam, J., et al.: Supervised/unsupervised voice activity detectors for text-dependent speaker recognition on the RSR2015 corpus. In: Odyssey Speaker and Language Recognition Workshop, pp. 123–130 (2014)

Loizou, P.C.: Speech Enhancement: Theory and Practice. CRC Press, Boca Raton (2013)

Deligiannidis, L., Arabnia, H.R.: Emerging Trends in Image Processing, Computer Vision and Pattern Recognition. Morgan Kaufmann, Burlington (2014)

Hu, Y., Loizou, P.C.: Subjective comparison of speech enhancement algorithms. In: IEEE International Conference on Acoustics Speech and Signal Processing (ICASSP) Proceedings, vol. 1, pp. 153–156 (2006)


Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Jaiswal, R. (2022). Performance Analysis of Voice Activity Detector in Presence of Non-stationary Noise. In: Mahyuddin, N.M., Mat Noor, N.R., Mat Sakim, H.A. (eds) Proceedings of the 11th International Conference on Robotics, Vision, Signal Processing and Power Applications. Lecture Notes in Electrical Engineering, vol 829. Springer, Singapore. https://doi.org/10.1007/978-981-16-8129-5_10


DOI: https://doi.org/10.1007/978-981-16-8129-5_10


Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-8128-8

Online ISBN: 978-981-16-8129-5

eBook Packages: Intelligent Technologies and Robotics (R0)