Abstract

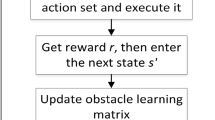

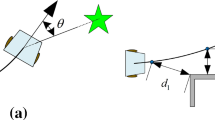

To solve the path planning problem for a robot in an unknown environment, this paper proposes a path planning algorithm that combines direction-detection reinforcement learning with virtual sub-target optimization. First, a direction-detection method is proposed to replace the grid method for discretizing the state, which reduces the state dimension. Second, a virtual sub-target optimization algorithm, embedded in the reinforcement learning process, is proposed to optimize path nodes as the iterations proceed. Finally, simulation experiments show that the convergence speed of the proposed algorithm is 98.2% higher than that of traditional reinforcement learning, and that the resulting path is more stable and smoother.
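The abstract does not give the details of the direction-detection state, so the following is only a minimal sketch of the general idea, not the authors' method: tabular Q-learning in which the state is what the robot detects around it rather than its grid cell. The map `GRID`, the four-direction `MOVES`, the helper names `blocked`, `encode_state` and `q_update`, and the values of `alpha` and `gamma` are all assumptions made for illustration.

```python
# Hypothetical 4x4 map (1 = obstacle, 0 = free); not taken from the paper.
GRID = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 0],
]
# Assumed action set: up, right, down, left.
MOVES = [(-1, 0), (0, 1), (1, 0), (0, -1)]


def blocked(pos, move):
    """Return True if stepping from pos by move leaves the map or hits an obstacle."""
    r, c = pos[0] + move[0], pos[1] + move[1]
    in_bounds = 0 <= r < len(GRID) and 0 <= c < len(GRID[0])
    return (not in_bounds) or GRID[r][c] == 1


def sign(x):
    """Return -1, 0 or 1 according to the sign of x."""
    return (x > 0) - (x < 0)


def encode_state(pos, goal):
    """Assumed direction-based state: obstacle flags for the four neighbours
    plus the sign of the row and column offsets to the goal.
    There are at most 2**4 * 9 = 144 such states, whatever the map size."""
    obstacles = tuple(blocked(pos, m) for m in MOVES)
    return obstacles + (sign(goal[0] - pos[0]), sign(goal[1] - pos[1]))


def q_update(q, s, a, reward, s_next, alpha=0.1, gamma=0.9):
    """Standard tabular Q-learning update on a dict keyed by (state, action)."""
    best_next = max(q.get((s_next, b), 0.0) for b in range(len(MOVES)))
    old = q.get((s, a), 0.0)
    q[(s, a)] = old + alpha * (reward + gamma * best_next - old)
    return q[(s, a)]


if __name__ == "__main__":
    q_table = {}
    start, goal = (0, 0), (3, 3)
    s = encode_state(start, goal)
    # Take the assumed action 1 (right) with a step penalty of -1.
    s_next = encode_state((0, 1), goal)
    print(s, q_update(q_table, s, 1, -1.0, s_next))
```

In this sketch the Q-table is indexed by the detected surroundings instead of by cell coordinates, so its size does not grow with the map. The virtual sub-target optimization step is not sketched here because the abstract does not describe how sub-targets are chosen.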

Acknowledgments

The work is supported by the National Natural Science Foundation of China (61673200, 61903172), the Major Basic Research Project of Natural Science Foundation of Shandong Province of China (ZR2018ZC0438) and the Key Research and Development Program of Yantai City of China (2019XDHZ085).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Ning, X., Yang, H., Fan, Z., Han, Y. (2022). Path Planning in Unknown Environment Based on Reinforcement Learning. In: Jia, Y., Zhang, W., Fu, Y., Yu, Z., Zheng, S. (eds) Proceedings of 2021 Chinese Intelligent Systems Conference. Lecture Notes in Electrical Engineering, vol 805. Springer, Singapore. https://doi.org/10.1007/978-981-16-6320-8_24

DOI: https://doi.org/10.1007/978-981-16-6320-8_24

Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-6319-2

Online ISBN: 978-981-16-6320-8

eBook Packages: Intelligent Technologies and Robotics (R0)