Abstract

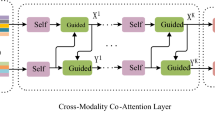

Visual Question Answering (VQA) is a relatively new and challenging multi-modal task that aims to find an answer for a given pair of an image and a related question. This AI-complete task has attracted numerous researchers from the areas of computer vision (CV) and natural language processing (NLP) because of its many potential applications. The general flow of VQA algorithms consists of image feature extraction, question feature extraction, and joint comprehension of the two to generate an appropriate answer. Existing VQA systems have paid little attention to input feature extraction and have instead focused on different ways of multi-modal embedding. This paper proposes to improve VQA through feature-level fusion of visual information. The goal of feature fusion is to consolidate relevant information from two or more feature vectors into a single vector with greater discriminative power. Instead of simple concatenation, this paper uses discriminant correlation analysis (DCA) for fusion, a method that incorporates the class structure into feature-level fusion. Since VQA systems are generally modeled as classification systems that treat the correct answers as classes, class-specific DCA is well suited here. The resulting fused feature vectors lie close to the right answers and thus strengthen the role of image understanding in VQA. The experimental results show the effectiveness of the new approach on the DAQUAR dataset, with mutual information (MI) as the evaluation metric.
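The fusion idea described above can be illustrated with a minimal NumPy sketch of DCA-style fusion of two feature sets that share class labels. This is an illustration under stated assumptions, not the authors' implementation: the function names `between_class_whitener` and `dca_fuse` are hypothetical, the data are synthetic, samples are stored as rows, and the scaling is chosen so that the projected between-class scatter is exactly the identity.

```python
import numpy as np

def between_class_whitener(X, labels, r):
    """Return W (p x r) with W.T @ S_b @ W = I, where S_b is the
    between-class scatter of X (rows are samples)."""
    classes = np.unique(labels)
    mean = X.mean(axis=0)
    # Columns of Phi are sqrt(n_i) * (class mean - global mean); S_b = Phi @ Phi.T
    Phi = np.stack(
        [np.sqrt((labels == c).sum()) * (X[labels == c].mean(axis=0) - mean)
         for c in classes],
        axis=1,
    )
    # Eigen-decompose the small (classes x classes) matrix instead of S_b itself
    evals, evecs = np.linalg.eigh(Phi.T @ Phi)
    top = np.argsort(evals)[::-1][:r]          # r largest eigenvalues
    return Phi @ evecs[:, top] / evals[top]    # scale so S_b becomes identity

def dca_fuse(X, Y, labels, r=None):
    """Fuse two feature sets (n x p and n x q) into one n x 2r matrix."""
    labels = np.asarray(labels)
    if r is None:
        # At most (number of classes - 1) discriminative directions exist
        r = min(np.unique(labels).size - 1, X.shape[1], Y.shape[1])
    # Step 1: project each centered set so its between-class scatter is identity
    Xp = (X - X.mean(axis=0)) @ between_class_whitener(X, labels, r)
    Yp = (Y - Y.mean(axis=0)) @ between_class_whitener(Y, labels, r)
    # Step 2: diagonalise the between-set covariance with an SVD
    U, s, Vt = np.linalg.svd(Xp.T @ Yp)
    Xs = Xp @ (U / np.sqrt(s))
    Ys = Yp @ (Vt.T / np.sqrt(s))
    # Step 3: fuse by concatenation (summation is the other common choice)
    return np.hstack([Xs, Ys])

# Synthetic demo: 4 answer classes, 10 samples each, two hypothetical feature sets
rng = np.random.default_rng(0)
labels = np.repeat(np.arange(4), 10)
X = rng.normal(size=(40, 10)) + rng.normal(size=(4, 10))[labels]
Y = rng.normal(size=(40, 8)) + rng.normal(size=(4, 8))[labels]
fused = dca_fuse(X, Y, labels)
```

With four classes the sketch keeps three directions per feature set, so `fused` has six columns. In a VQA setting the class labels would be the answer classes and the two inputs would be image feature vectors from different extractors, which is an assumption here since the abstract does not name them.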

Copyright information

© 2021 The Editor(s) (if applicable) and The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Manmadhan, S., Kovoor, B.C. (2021). Optimal Image Feature Ranking and Fusion for Visual Question Answering. In: Bhateja, V., Peng, SL., Satapathy, S.C., Zhang, YD. (eds) Evolution in Computational Intelligence. Advances in Intelligent Systems and Computing, vol 1176. Springer, Singapore. https://doi.org/10.1007/978-981-15-5788-0_10

DOI: https://doi.org/10.1007/978-981-15-5788-0_10

Publisher Name: Springer, Singapore

Print ISBN: 978-981-15-5787-3

Online ISBN: 978-981-15-5788-0

eBook Packages: Intelligent Technologies and Robotics (R0)