Abstract

Social media platforms, forums, blogs, and opinion sites generate vast amounts of data. Such data, in the form of opinions, emotions, and views about services, politics, and products, are largely unstructured. End users, business industries, and politicians are highly influenced by the sentiments people express on social media platforms. Therefore, extracting, analyzing, summarizing, and predicting sentiments from large volumes of unstructured data requires automated sentiment analysis. Sentiment analysis is an automated process of extracting opinionated content from data and classifying the sentiments as positive, negative, or neutral. The lack of sufficient labeled data for sentiment analysis is one of the crucial challenges in natural language processing. Deep learning has emerged as one of the most sought-after solutions to this challenge owing to the automated, hierarchical learning capability inherent in deep learning models. Considering the application of deep learning approaches to sentiment analysis, this chapter aims to put forth a taxonomy of traits to be considered for deep learning-based sentiment analysis and to demystify the role of deep learning approaches in sentiment analysis.



Keywords

- Deep learning

- Deep neural networks

- Opinion mining

- Sentiment analysis

- Social media data

- Taxonomy

- Text representation

1 Introduction

The drastic shift from read-only to read-write access to the Web has led people to interact with each other through social media such as wikis, blogs, online forums, and communities. As a result, user-generated content on social media platforms is increasing tremendously. In particular, Web-based opinionated text and reviews of products and services have been among the largest contributors to social big data [1].

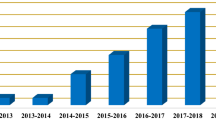

Analyzing people's sentiments in such opinionated data helps both end users and business industries make decisions about purchasing products, launching new products, assessing the industry's reputation among customers, etc. Sentiment analysis, also termed opinion mining, is an automated process of extracting the polarity of opinionated text. Alongside polarity, the subject and the opinion holders can also be identified using sentiment analysis. Sentiment analysis has been one of the most active research areas in natural language processing (NLP) since 2000 and continues to be a highly sought-after research domain. The NLP market is forecast to reach $22.3 billion by 2025 [2].

Because of the proliferation of diverse opinion sites, it is difficult to find and monitor all the relevant sites, collect the information pertaining to a given domain, and perform sentiment analysis on it. Moreover, it is difficult for human personnel to separate the opinionated content in long blogs and forums and to summarize the opinions. This gives rise to the need for automated sentiment analysis systems.

Numerous techniques based on supervised and unsupervised learning have been proposed to date to perform sentiment analysis. Among supervised approaches, early literature focused on machine learning techniques such as naïve Bayes, support vector machines, and feature learning algorithms [3]. Unsupervised methods include the use of sentiment lexicons, grammatical analysis, etc.

Deep learning has emerged as a powerful technique for solving a multitude of problems in computer vision [4,5,6,7,8], topic modeling [9,10,11], natural language processing [12,13,14], speech recognition [15], social media analytics [16,17,18], etc. Inspired by these successes, deep learning-based sentiment analysis has gained great popularity over the last five years. This book chapter sheds light on the progress made in deep learning-based sentiment analysis by giving an overview of deep learning-based sentiment analysis models. Figure 1 gives a glimpse of the main topics covered to demystify the application of deep learning to sentiment analysis.

2 Taxonomy of Sentiment Analysis

Figure 2 shows the taxonomy of the traits to be considered while designing the sentiment analysis models.

2.1 Sentiment Analysis, Polarity, and Output

Sentiment analysis is an automated process that predicts the polarity of opinionated text as positive, negative, or neutral [19]. Fine-grained sentiment analysis uses the following categories: very positive, positive, neutral, negative, and very negative. These categories can be mapped to a rating score; for example, "very positive" can be mapped to 5 stars and "very negative" to 1 star. For multiple documents, the polarity predicted for each document can be mapped to a rating, and these ratings can then be aggregated into an overall score.
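As a rough illustration of the rating mapping and aggregation just described, the Python sketch below maps each document's fine-grained label to a star rating and averages the ratings. The `LABEL_TO_STARS` table extends the 5-star/1-star example above to all five classes, and using a simple mean as the aggregate is an assumption; a weighted mean or a median would be equally reasonable choices.

```python
from statistics import mean

# Assumed mapping of the five fine-grained classes to a 1-5 star scale
# (the text only fixes the endpoints: "very positive" -> 5, "very negative" -> 1).
LABEL_TO_STARS = {
    "very negative": 1,
    "negative": 2,
    "neutral": 3,
    "positive": 4,
    "very positive": 5,
}


def aggregate_rating(document_labels):
    """Map each document's predicted label to stars and return the mean rating."""
    stars = [LABEL_TO_STARS[label] for label in document_labels]
    return mean(stars)


predictions = ["very positive", "positive", "neutral", "very negative"]
print(aggregate_rating(predictions))  # (5 + 4 + 3 + 1) / 4 = 3.25
```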

2.2 Levels of Sentiment Analysis

Sentiment analysis is performed at various levels of granularity: document, sentence, and aspect. These levels are discussed in this subsection.

Document level

This level determines the sentiment of a complete paragraph or document. The sentiment analysis model assumes that the document contains opinionated text about a single entity, so this level does not support documents that compare multiple entities. Determining whether a document has positive or negative polarity is framed as a binary classification problem. It can also be handled as a regression problem, for instance, assigning a rating score of 1 to 5 stars to a movie review, or modeled as a five-class classification problem.

Sentence level

This level of sentiment classification aims to determine the sentiment of a single sentence. Subjectivity classification and polarity classification can be used to infer the sentiment of a sentence. Subjectivity classification determines whether a sentence is subjective or objective, whereas polarity classification determines whether a given subjective sentence is positive or negative. Existing deep learning techniques focus on predicting the polarity of a sentence as positive, negative, or neutral. Because sentences are shorter than documents, semantic and syntactic features obtained via POS taggers, parse trees, and lexicons can be used for sentence-level sentiment classification. Similar to the document-level assumption, sentence-level sentiment classification assumes that each sentence expresses sentiment about a single entity.
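The two-stage pipeline described above (subjectivity first, then polarity) can be sketched in Python as follows. To keep the example self-contained, it uses a tiny, hypothetical word lexicon in place of trained classifiers; in a deep learning system, each stage would instead be a learned model.

```python
import re

# Hypothetical toy lexicon (word -> polarity weight), for illustration only.
LEXICON = {"good": 1, "great": 2, "tasty": 1, "prompt": 1,
           "bad": -1, "awful": -2, "slow": -1}


def tokenize(sentence):
    return re.findall(r"[a-z']+", sentence.lower())


def is_subjective(tokens):
    # Stage 1 (subjectivity): a sentence with no opinion word is treated as objective.
    return any(token in LEXICON for token in tokens)


def classify_sentence(sentence):
    tokens = tokenize(sentence)
    if not is_subjective(tokens):
        return "objective"
    # Stage 2 (polarity): only subjective sentences are scored.
    score = sum(LEXICON.get(token, 0) for token in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


for s in ["The hotel is in the city centre.",
          "The food is great.",
          "The service was slow and bad."]:
    print(s, "->", classify_sentence(s))
```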

Aspect-based sentiment analysis (ABSA)

At this level, the sentiments that users express toward aspects (features) of entities (objects) such as movies and restaurants are extracted. The goal is to find aspect and polarity pairs in a given text. This level assumes that a single entity is present per document. As mentioned in [20], aspect-level sentiment analysis can be divided into four sub-tasks: aspect term extraction, aspect term polarity, aspect category detection, and aspect category polarity. Aspect term extraction identifies the aspect terms in a set of sentences about pre-defined entities (e.g., laptops) and returns the list of distinct aspect terms. The second sub-task, aspect term polarity, determines the polarity of each aspect term detected in the first sub-task. Aspect category detection identifies the aspect categories discussed in each sentence, based on a pre-defined set of aspect categories (e.g., general, price). The fourth sub-task, aspect category polarity, determines the polarity of each aspect category in a given set of sentences. Table 1 gives an example and the output of each sub-task in ABSA.

Targeted ABSA is an extension of aspect-based sentiment analysis. ABSA assumes a single entity per document, whereas targeted ABSA assumes a single sentiment toward each aspect of one or more entities. Targeted ABSA extracts the target entities, their aspects, and the corresponding sentiments. For example, "The ambience is good in Viceroy but the service is bad, on the other hand, the staff in Novotel is very prompt and the food is tasty as usual." This sentence discusses aspects of two different hotels. Targeted ABSA recognizes "Viceroy" and "Novotel" as two target entities and outputs the labels {Viceroy, ambience, positive}, {Viceroy, service, negative}, {Novotel, service, positive}, and {Novotel, food, positive}.

2.3 Domain Applicability, Training, and Testing Strategy

Domain applicability indicates whether a sentiment analysis model performs in-domain or cross-domain sentiment analysis. For in-domain sentiment analysis, training and testing are done on the same target domain, i.e., a domain-specific training and testing strategy is applied. Sometimes, the target domain has little or no data labeled with sentiment classes, which makes it difficult to train a model on that domain. In such cases, domain adaptation [21] (transfer learning) is applied for cross-domain sentiment analysis: a model is trained on a source domain with labeled data and tested on a target domain with little or no labeled data.

2.4 Language Support

Based on their language support, sentiment analysis models can be categorized as monolingual, multilingual, or cross-lingual. Cross-lingual sentiment analysis models are trained on a resource-rich language and then tested on a resource-poor language.

2.5 Evaluation Measures

Evaluation metrics commonly used for sentiment analysis include accuracy, F1 score, average recall (AvgRec), macro-averaged F1 score, ranking loss, macro-averaged mean absolute error, least absolute error (LAE), mean squared error (MSE), Pearson correlation coefficient, Kullback-Leibler divergence (KLD), and area under the ROC curve (AUC). These metrics are discussed in Sect. 5.

3 Text Representation for Sentiment Analysis

Figure 3 depicts the various traits to be considered when representing text for deep learning-based sentiment analysis. Each trait is discussed in the subsequent sections.

3.1 Embedded Vectors

Most machine learning algorithms, which map inputs to outputs by function approximation, require a numerical representation of the input data. Embedding methods (also called vectorizing or encoding) convert input data (i.e., words, sentences, paragraphs, documents, dates, emoji, graphs, etc.) into real-valued vectors that capture hidden semantic relations in the input. Embedding models are one of the successful applications of unsupervised learning and have been widely used in deep learning-based NLP tasks. Bengio et al. [22] introduced the concept of word embeddings. Some noteworthy models for representing input text are discussed below.

Collobert and Weston (C&W) model

The C&W model proposed in [23] is a multi-layer neural network trained on a large dataset, and its word representations carry syntactic and semantic meaning. Because the model is designed to be agnostic to task-specific feature engineering, it serves as a useful word representation model for a wide variety of NLP tasks.

Word2vec

The vectors used to represent words are neural word embeddings. Word2vec [24] learns distributed representations of words, i.e., word embeddings, by training each word against its neighboring words in the input corpus. This training can use either of two models: continuous bag-of-words (CBOW) or skip-gram. The CBOW model predicts a target word from its surrounding context, whereas the skip-gram model predicts the words in the surrounding context given the central word.
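The two word2vec training schemes differ in which side of each example serves as the input. The sketch below, assuming whitespace-tokenized text and a symmetric context window (the `window` parameter is illustrative), generates the (center, context) pairs used by skip-gram and the (context, target) examples used by CBOW; the neural network that consumes these examples is omitted.

```python
def skipgram_pairs(tokens, window=2):
    """Skip-gram examples: predict each context word from the center word."""
    pairs = []
    for i, center in enumerate(tokens):
        start, end = max(0, i - window), min(len(tokens), i + window + 1)
        for j in range(start, end):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs


def cbow_examples(tokens, window=2):
    """CBOW examples: predict the center (target) word from its context words."""
    examples = []
    for i, target in enumerate(tokens):
        start, end = max(0, i - window), min(len(tokens), i + window + 1)
        context = [tokens[j] for j in range(start, end) if j != i]
        examples.append((context, target))
    return examples


sentence = "the food is very tasty".split()
print(skipgram_pairs(sentence, window=1))
print(cbow_examples(sentence, window=1))
```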

fastText

Facebook's AI research laboratory developed the fastText library [25], which efficiently learns word representations. Because it makes use of character-level information, fastText can also produce representations for rare words.
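To illustrate how character-level information yields vectors for rare or unseen words, the sketch below extracts character n-grams in the style of fastText (the word is wrapped in `<` and `>` boundary markers, and n ranges from 3 to 6) and averages their vectors. The n-gram vector table is a hypothetical stand-in filled with random vectors; the real library learns these vectors and hashes n-grams into a fixed number of buckets, which this sketch does not model.

```python
import numpy as np


def char_ngrams(word, n_min=3, n_max=6):
    """Character n-grams of '<word>' plus the whole bracketed word itself."""
    wrapped = f"<{word}>"
    grams = [wrapped[i:i + n]
             for n in range(n_min, n_max + 1)
             for i in range(len(wrapped) - n + 1)]
    if wrapped not in grams:
        grams.append(wrapped)  # the full word is kept as its own unit
    return grams


DIM = 8
_rng = np.random.default_rng(0)
ngram_vectors = {}  # hypothetical table of n-gram vectors (random stand-ins)


def ngram_vector(gram):
    # Stand-in for a trained lookup: create a random vector on first use.
    if gram not in ngram_vectors:
        ngram_vectors[gram] = _rng.normal(size=DIM)
    return ngram_vectors[gram]


def word_vector(word):
    """Vector for any word, including OOV ones, as the mean of its n-gram vectors."""
    return np.mean([ngram_vector(g) for g in char_ngrams(word)], axis=0)


print(char_ngrams("where", 3, 3))
print(word_vector("tastyyy").shape)  # (8,)
```

Because a misspelled or unseen word such as "tastyyy" shares many n-grams with "tasty", its averaged vector is built largely from the same pieces.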

Global Vectors for Word Representation (GloVe)

The GloVe model [26] learns vector representations of words in an unsupervised manner. It combines global matrix factorization with local context window methods to obtain word representations.

Embeddings from Language Models (ELMo)

Traditional word embedding models such as word2vec and GloVe cannot handle the contextual meaning of words and therefore assign the same vector to a word regardless of its meaning in context. For instance, the word "stick" has different meanings in the following two sentences.

Sentence 1: This stick is made up of wooden material

Sentence 2: Let’s stick to one goal at a time

The ELMo model [27] handles the multiple meanings of words, as in the sentences above, by representing each word's embedding as a function of the entire sentence containing that word. ELMo representations model the syntactic and semantic characteristics of words and handle words whose meaning depends on context (polysemy modeling). ELMo word vectors are learned functions of the hidden states of a bi-directional language model. Because ELMo representations are character-based, the model can also represent out-of-vocabulary words unseen during training by exploiting morphological clues.

Sentiment-Specific Word Embeddings (SSWE)

Tang et al. [28] proposed SSWE by incorporating sentiment knowledge into the continuous representation of words. To this end, three neural network-based models were designed: SSWEh, SSWEr, and SSWEu. SSWEh is trained with a strict constraint to predict the sentiment distribution [1, 0] for positive n-grams and [0, 1] for negative n-grams. SSWEr removes this strict softmax constraint. Neither SSWEh nor SSWEr generates corrupted n-grams. The unified model, SSWEu, captures both the sentiment of sentences and the syntactic contexts of words.

Graphs from LOw-level unit Modeling (GLoMo)

The Graphs from LOw-level unit Modeling (GLoMo) framework is based on unsupervised latent graph learning [29]. It is also a transfer learning framework, developed to improve performance on tasks such as sentiment analysis, natural language inference, question answering, and image classification.

Universal Language Model Fine-tuning (ULMFiT)

ULMFiT [30] is a transfer learning method that can be applied to any natural language processing task, and its pre-trained models can be leveraged for sentiment analysis. A language model is first pre-trained on a general-domain corpus and then fine-tuned on the target domain. It works across tasks that vary in document size, number of documents, and label type, and it therefore claims to be universal. It uses a single architecture and training process for diverse tasks and does not require additional in-domain documents or labels.

OpenAITransformer

OpenAITransformer [31] first trains a transformer model on a large corpus in an unsupervised manner, using language modeling as the training signal. The model is then fine-tuned on a small supervised dataset to solve the specific task.

Bi-directional Encoder Representations from Transformers (BERT)

BERT [32] pre-trains deep bi-directional representations from unlabeled text by jointly conditioning on both left and right context in all layers. As a result, the pre-trained model can be fine-tuned for a wide range of NLP tasks by adding just one output layer.

3.2 Strategy of Initializing the Embedded Vectors

Table 2 gives details of pre-trained models that can be leveraged for sentiment analysis. Word embeddings can be initialized with random values (random initialization). Alternatively, the model can be initialized with pre-trained word embeddings, which are then fine-tuned.

Pre-trained models have been developed on various corpora, such as Wikipedia (C&W), Google News (Google), Twitter with emoticons (SSWE), the Amazon corpus (Amazon), and Wikipedia and Twitter (GloVe). Applying word2vec to a specific corpus yields customized embeddings [37, 38]. As noted in [33], random initialization may cause stochastic gradient descent (SGD) to get stuck in local minima, and if pre-trained embeddings are not fine-tuned, the automatic feature learning capacity of deep neural networks cannot be leveraged. Therefore, using pre-trained embeddings as the initializer and then fine-tuning them helps to make the model efficient [39].
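The initialization strategies above can be sketched as building an embedding matrix in which words found in a pre-trained model receive their pre-trained vectors and all remaining words are initialized randomly. The uniform range of ±0.25 and the dictionary form of `pretrained` are assumptions for illustration. Whether the matrix is then fine-tuned or kept frozen depends only on whether the training loop updates it.

```python
import numpy as np


def build_embedding_matrix(vocab, pretrained, dim, scale=0.25, seed=0):
    """Rows for words in `pretrained` copy the pre-trained vector; others stay random.

    vocab: list of words (row i belongs to vocab[i]).
    pretrained: dict mapping word -> vector of length `dim` (assumed format).
    """
    rng = np.random.default_rng(seed)
    matrix = rng.uniform(-scale, scale, size=(len(vocab), dim))  # random initialization
    found = 0
    for row, word in enumerate(vocab):
        vector = pretrained.get(word)
        if vector is not None:
            matrix[row] = vector  # pre-trained initialization
            found += 1
    return matrix, found


vocab = ["good", "bad", "tastyyy"]
pretrained = {"good": np.full(4, 0.5), "bad": np.full(4, -0.5)}
E, found = build_embedding_matrix(vocab, pretrained, dim=4)
print(found, "of", len(vocab), "words initialized from pre-trained vectors")
# With fine-tuning, E would be passed to the optimizer as a trainable parameter;
# with frozen embeddings, it would be excluded from gradient updates.
```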

3.3 Enhancing the Embedded Vectors

To enhance the effectiveness of an embedded vector, additional features (from a word, sentence, or document) can be extracted and appended to a pre-trained embedded vector. For example, a word vector can be appended with the word's sentiment, part-of-speech (POS) tag, subjectivity, total syllable count, number of characters with or without punctuation, etc.
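A minimal sketch of appending extra features to a pre-trained word vector, assuming a hypothetical five-tag POS set, a precomputed sentiment score for the word, and the character count as the additional features:

```python
import numpy as np

POS_TAGS = ["NOUN", "VERB", "ADJ", "ADV", "OTHER"]  # hypothetical reduced tag set


def enhanced_vector(word_vec, word, pos_tag, sentiment_score):
    """Append a one-hot POS tag, a sentiment score, and the character count."""
    pos_one_hot = np.zeros(len(POS_TAGS))
    tag = pos_tag if pos_tag in POS_TAGS else "OTHER"
    pos_one_hot[POS_TAGS.index(tag)] = 1.0
    extra = np.array([sentiment_score, float(len(word))])
    return np.concatenate([word_vec, pos_one_hot, extra])


v = enhanced_vector(np.zeros(4), "tasty", "ADJ", sentiment_score=0.8)
print(v.shape)  # (11,): 4 embedding dims + 5 POS dims + 2 extra features
```

In practice, the appended features are often scaled to a range comparable to the embedding values so that no single feature dominates.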

Words that are out-of-vocabulary (OOV) for the embedding model lack a vector representation. For such OOV words, a vector representation is approximated from the word's context. Some solutions for handling OOV words are as follows. (1) Given a sentence and its OOV word, a language model sequences the words in the sentence and then predicts the meaning of the OOV word by comparing it with similar sentences. (2) Character- or n-gram-level embeddings obtained from fastText can be used. (3) Embeddings can be trained from scratch on the text; however, this approach suffers from overfitting and cannot handle sentences with complex structure. Tang et al. [40] handled OOV words in the domain of users and products by averaging the representations of the available data related to users and products. Creating a domain-specific word embedding model also helps to improve performance [28, 41, 42].

3.4 Approximation Methods

Reducing the computational complexity of the final softmax layer is one of the crucial challenges in designing better word embedding models. The research community has therefore devised approximation algorithms, which fall into sampling-based and softmax-based approaches. These approaches are discussed in the following subsections.

3.5 Sampling-Based Approaches

Sampling-based approaches approximate the normalization term in the denominator of the softmax with a computationally inexpensive alternative loss function. Sampling-based methods are useful only during training; at test time, the full softmax must still be computed to obtain normalized probabilities.

- Importance sampling: Traditional importance sampling is based on Monte Carlo sampling. It approximates the target distribution by drawing samples from a simpler proposal distribution, typically the unigram distribution.

- Adaptive importance sampling: Approximation using importance sampling works better with large sample sizes [43]. Bengio and Senécal proposed adaptive importance sampling [44], which uses an n-gram distribution as the proposal distribution.

- Target sampling: The approximate training algorithm of Jean et al. [45] is based on biased importance sampling, called target sampling, which makes it possible to train a neural machine translation model with a much larger target vocabulary. They limit the set of sampled target words by partitioning the training data and selecting a pre-defined subset of the vocabulary for each partition.

- Noise contrastive estimation (NCE): NCE [46] is more stable than importance sampling, which risks the proposal distribution diverging from the target distribution. Unlike importance sampling, NCE does not estimate the probability of a word directly; instead, it optimizes an auxiliary loss that maximizes the probability of the correct words.

- Negative sampling: Negative sampling, a simplification of NCE, minimizes the negative log-likelihood of the words in the training set using a logistic loss function and focuses on learning high-quality word representations.
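For concreteness, the sketch below writes out the per-pair negative sampling loss in its commonly used skip-gram form, -log σ(u_o·v_c) - Σ_k log σ(-u_k·v_c), where v_c is the center-word vector, u_o the true context-word vector, and u_k the vectors of K sampled noise words. Drawing noise words from the unigram distribution raised to the 3/4 power follows the original word2vec setup; the vectors here are random NumPy arrays rather than trained parameters.

```python
import numpy as np


def log_sigmoid(x):
    # Numerically stable log(sigmoid(x)) = -log(1 + exp(-x)).
    return -np.logaddexp(0.0, -x)


def negative_sampling_loss(v_center, u_context, u_negatives):
    """Loss for one (center, context) pair with K noise words.

    v_center: (d,) center-word vector; u_context: (d,) true context vector;
    u_negatives: (K, d) vectors of sampled noise words.
    """
    positive_term = log_sigmoid(u_context @ v_center)
    negative_term = log_sigmoid(-(u_negatives @ v_center)).sum()
    return -(positive_term + negative_term)


def sample_negatives(word_counts, k, rng):
    """Draw k noise words from the unigram distribution raised to the 3/4 power."""
    words = list(word_counts)
    probs = np.array([word_counts[w] for w in words], dtype=float) ** 0.75
    probs /= probs.sum()
    return rng.choice(words, size=k, p=probs)


rng = np.random.default_rng(0)
d, k = 5, 3
noise_words = sample_negatives({"the": 50, "food": 5, "tasty": 2, "hotel": 3}, k, rng)
loss = negative_sampling_loss(rng.normal(size=d), rng.normal(size=d), rng.normal(size=(k, d)))
print(noise_words, loss)
```

The cost per training pair grows with K rather than with the vocabulary size, which is where the speed-up over the full softmax comes from.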

3.6 Softmax-Based Approaches

- Hierarchical softmax (H-Softmax): Hierarchical softmax [47] replaces the flat softmax layer with a hierarchical tree whose leaves correspond to words. The tree decomposes the probability calculation into a sequence of binary decisions along the path from the root to a leaf, which removes the need to compute the expensive normalization over all words and therefore speeds up word prediction.

- Differentiated softmax (D-softmax): Differentiated softmax [48] is a variant of the traditional softmax layer based on the idea that words need different numbers of parameters depending on how frequently they occur. Because rare words are given fewer parameters, D-softmax is faster at test time. However, assigning fewer parameters to rarely occurring words limits the model's ability to handle rare words well.

- CNN-softmax: Kim et al. [49] used a character-level convolutional neural network (CNN) to produce the input word embeddings. Building on this idea, Jozefowicz et al. [50] modified the traditional softmax layer with a character-level CNN, yielding CNN-softmax. However, character-based models struggle to distinguish similarly spelled words with different meanings, because characters are represented in a continuous space and the model tends to learn a smooth mapping from characters to word embeddings. A correction factor learned per word can therefore be introduced.
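The speed-up of hierarchical softmax comes from replacing one normalization over V words with about log2 V binary decisions. The sketch below assumes a complete binary tree over a vocabulary whose size is a power of two (word2vec, for example, uses a Huffman tree instead) and computes a word's probability as a product of sigmoids along its root-to-leaf path. The node vectors and hidden state `h` are random placeholders.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def path_for_leaf(leaf, depth):
    """(inner node id, branch bit) pairs from the root to `leaf`.

    Inner nodes are numbered heap-style (root = 0, children of n are 2n+1 and 2n+2);
    bit 0 means "go left" and bit 1 means "go right".
    """
    node, path = 0, []
    for level in reversed(range(depth)):
        bit = (leaf >> level) & 1
        path.append((node, bit))
        node = 2 * node + 1 + bit
    return path


def hsoftmax_prob(h, leaf, node_vectors, depth):
    """P(word = leaf | h) as a product of `depth` binary sigmoid decisions."""
    p = 1.0
    for node, bit in path_for_leaf(leaf, depth):
        score = node_vectors[node] @ h
        p *= sigmoid(score) if bit == 0 else sigmoid(-score)
    return p


rng = np.random.default_rng(0)
depth, dim = 3, 4                              # 2**3 = 8 words, 7 inner nodes
node_vectors = rng.normal(size=(2**depth - 1, dim))
h = rng.normal(size=dim)                       # hidden state / context vector
probs = [hsoftmax_prob(h, w, node_vectors, depth) for w in range(2**depth)]
print(sum(probs))  # approximately 1.0, since sigmoid(x) + sigmoid(-x) = 1 at every node
```

The probabilities sum to one without any explicit normalization, because each inner node splits its probability mass between its two children.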

4 Deep Learning Approaches for Sentiment Analysis

This section discusses prominent deep learning approaches for sentiment analysis at the document, sentence, and aspect levels. Table 3 compares these approaches in terms of text representation, neural network model, dataset, and the crux of each approach.

Document-level sentiment analysis approaches

Zhai and Zhang [34] proposed a semi-supervised denoising autoencoder model for document-level sentiment analysis. It incorporates sentiment information during the learning phase to obtain better document representations. It learns a task-oriented data representation by using a Bregman divergence as the autoencoder's loss and deriving a discriminative loss function from the class labels.

Zhou et al. [52] proposed bilingual sentiment embeddings for cross-lingual sentiment classification. Here, a denoising autoencoder first learns bilingual embeddings in an unsupervised way. Then, through supervised learning, sentiment information from the document labels is incorporated into these embeddings to obtain bilingual sentiment word embeddings.

To learn document representations, Tang et al. [51] exploited the relationships between sentences. They first used a CNN or a long short-term memory (LSTM) network to learn sentence representations, and then applied a gated recurrent unit (GRU) to adaptively encode the semantics of the sentences and their relations into a document representation for sentiment analysis.

To overcome the shortcomings of the bag-of-words model, Le and Mikolov proposed an unsupervised algorithm, the paragraph vector [54], which learns fixed-length representations from variable-length text such as sentences, paragraphs, and documents. It learns a representation by predicting surrounding words from the contextual information in the text. After the vector representations are learned, a logistic regression classifier is trained to predict the sentiments. At test time, the trained network is frozen and the representation of the test data (sentence, paragraph, or document) is learned using gradient descent. The learned vector representation is then fed to the logistic regression classifier to predict the sentiment.

Tang et al. [40] proposed a supervised learning framework that incorporates user- and product-level information into a neural network model for document-level sentiment classification. User-level and product-level information help capture the individual preferences of users and the overall quality of products, respectively, yielding better text representations.

Like [51], Chen et al. [52] incorporated user- and product-level information into a hierarchical LSTM model via word- and sentence-level attention mechanisms. Based on the principle of compositionality [80], they modeled document semantics hierarchically at the word, sentence, and document levels, using word-level user-product attention to obtain sentence representations and sentence-level user-product attention to obtain document representations.

Dou [53] also proposed a user-product deep memory network (UPDMN) to capture user and product information. A document is first represented using an LSTM, and then a deep memory network with multiple computational layers and a content-based attention mechanism is applied to predict the review rating.

To handle semantic knowledge in long texts, Xu et al. [76] put forth a cached LSTM model. Its cache mechanism divides the memory into groups with different forgetting rates, enabling the model to capture emotional information both locally and globally for improved sentiment classification. Compared to a standard LSTM, this model converges faster.

The hierarchical attention network proposed in [55], built on a GRU-based sequence encoder, applies attention at the word and sentence levels for document-level sentiment classification. It incrementally constructs a document vector by aggregating the significant words into sentence vectors and, in turn, the significant sentence vectors into a document vector. Song et al. [56] proposed a hierarchical iterative attention model using bi-directional LSTMs, which captures the interaction between documents and aspects at the word and sentence levels to learn aspect-specific document representations. This model performs multi-aspect sentiment classification.

Zhou et al. [57] proposed a bi-directional LSTM with sentence-level attention for cross-lingual sentiment classification. First, a machine translation tool translates the training data into the target language. Bi-directional LSTMs then model the document representations in the source and target languages. To reduce the noise introduced by machine translation, a hierarchical attention mechanism is trained jointly with the LSTM network. Li et al. [58] addressed the problem of selecting pivots for cross-domain sentiment analysis in a transfer learning setting. They used an adversarial memory network and jointly trained two networks for sentiment classification and domain classification.

Huang et al. [59] proposed two variants of document representation to be used with an LSTM for document-level sentiment classification. In the first variant, a document is represented by capturing the semantics of sentences from sentence vectors. In the second variant, a document is represented using sorted sentence vectors; to obtain this sorted representation, the dataset is pre-processed to remove irrelevant sentences that do not carry sentiment information.
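The word-to-sentence-to-document aggregation of the hierarchical attention network in [55] can be sketched with one attention-pooling function applied twice. In this sketch the GRU encoders are omitted: random arrays stand in for encoder hidden states, and the parameters `W`, `b`, and `u` are random placeholders for what the model would learn. The pooling follows the commonly described form: score each hidden state with a small tanh layer, normalize the scores with a softmax against a context vector, and take the weighted sum.

```python
import numpy as np


def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()


def attention_pool(H, W, b, u):
    """Attention pooling over the rows of H (shape (T, d)).

    Each hidden state is scored as tanh(W h + b) . u; the softmax-normalized scores
    weight the sum of hidden states. Returns the pooled vector and the weights.
    """
    scores = np.tanh(H @ W.T + b) @ u   # (T,)
    alpha = softmax(scores)
    return alpha @ H, alpha


rng = np.random.default_rng(0)
d, a = 6, 4  # hidden size and attention size (illustrative)
# Random placeholders for learned word-level and sentence-level parameters.
W_w, b_w, u_w = rng.normal(size=(a, d)), np.zeros(a), rng.normal(size=a)
W_s, b_s, u_s = rng.normal(size=(a, d)), np.zeros(a), rng.normal(size=a)

# Stand-ins for word-encoder outputs: three sentences of 5, 2, and 4 words.
sentences = [rng.normal(size=(n, d)) for n in (5, 2, 4)]

# Word level: aggregate the words of each sentence into a sentence vector.
sentence_vecs = np.stack([attention_pool(H, W_w, b_w, u_w)[0] for H in sentences])
# Sentence level: aggregate sentence vectors into one document vector
# (HAN would first pass sentence_vecs through a sentence encoder; omitted here).
doc_vec, sentence_weights = attention_pool(sentence_vecs, W_s, b_s, u_s)
print(doc_vec.shape, sentence_weights.round(3))
```

The sentence-level weights indicate which sentences contributed most to the document vector, which is one reason attention-based models are considered easier to inspect.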

Sentence-level sentiment analysis approaches

Socher et al. [60] first put forth a semi-supervised recursive autoencoder network for sentence-level sentiment classification. This approach obtains reduced-dimensional vector representations of multi-word phrases. Because it is based on a single-vector space model, it cannot capture the compositional meaning of long phrases.

Socher et al. [61] put forth a recursive matrix-vector model, which associates a matrix, in addition to a vector, with every word in a tree structure. By representing words and phrases with both a vector and a matrix, this approach alleviates the problem of capturing the compositional meaning of long sentences with arbitrary syntax and length: the vector captures a word's inherent meaning, whereas the matrix captures how it changes the meaning of neighboring words. An external parser is used to build the tree structure.

To perform supervised training and evaluation of sentiment compositional models, Socher et al. [81] developed the Stanford Sentiment Treebank dataset [82]. They also proposed the recursive neural tensor network, which uses tensor-based compositional features to efficiently capture interactions among the words of a sentence. The model was tested on a movie reviews dataset with five sentiment classes ranging from very negative to very positive.

Qian et al. [62] proposed two models based on compositional functions: the tag-guided recursive neural network (TG-RNN) and the tag-embedded recursive neural network/recursive neural tensor network (TE-RNN/RNTN). The former selects a composition function based on the POS tags of a phrase, whereas the latter combines tag and word embeddings. They evaluated the models on the Sentiment Treebank corpus, where they significantly outperformed baseline models.

The dynamic CNN (DCNN) proposed by Kalchbrenner et al. [63] uses a dynamic k-max pooling operator to capture the semantics of sentences. They experimented with DCNN by varying the initialization of the word embeddings: CNN with random initialization, CNN with pre-trained and fine-tuned embeddings, and CNN with multiple sets of word embeddings. The character to sentence CNN model proposed in [64] uses two CNN layers to extract word- and sentence-level features from input sentences of varying length for sentiment analysis. Wang et al. [65] used the gates and constant error carousels in the memory structure of the LSTM to model the interaction among words via a compositional function. A regional CNN-LSTM model [66] performs dimensional sentiment analysis, in which the regional CNN captures local sentence-level information and the LSTM captures long-distance dependencies.
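k-max pooling keeps the k largest activations of each feature over the sequence while preserving their original order, and in DCNN the value of k depends on the layer depth and the sentence length. The sketch below implements both pieces with NumPy, using the dynamic-k rule k_l = max(k_top, ⌈(L - l)/L · s⌉) for layer l of L convolutional layers and sentence length s; the convolutions themselves are omitted.

```python
import math

import numpy as np


def k_max_pooling(x, k):
    """Keep the k largest values of each column of x (shape (seq_len, channels)),
    preserving their original order in the sequence."""
    top_idx = np.argsort(x, axis=0)[-k:]      # row indices of the k largest per column
    top_idx = np.sort(top_idx, axis=0)        # restore sequence order
    return np.take_along_axis(x, top_idx, axis=0)


def dynamic_k(layer, total_layers, sentence_len, k_top):
    """k for convolutional layer `layer` (1-based) out of `total_layers`."""
    return max(k_top, math.ceil((total_layers - layer) * sentence_len / total_layers))


features = np.array([[1.0, 9.0], [5.0, 2.0], [3.0, 8.0], [4.0, 7.0], [2.0, 1.0]])
print(k_max_pooling(features, 3))
print([dynamic_k(l, 3, 18, 3) for l in (1, 2, 3)])  # [12, 6, 3]
```

Unlike max-over-time pooling, which keeps a single value per feature, k-max pooling retains several strong activations together with their relative order.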

Motivated by structural correspondence learning, a method commonly used for domain adaptation [83], Yu and Jiang [41] proposed learning generalized sentence embeddings for cross-domain sentence-level sentiment analysis and designed CNN models that jointly learn hidden feature representations from labeled and unlabeled data.

Aspect-based sentiment analysis approaches

Ruder et al. [67] captured intra- and inter-sentence relations using a hierarchical bi-directional LSTM for aspect-based sentiment analysis. Because the approach relies only on the sentences and their structure, it is language-independent and thus supports multilingual ABSA.

Wang et al. [68] proposed integrating recursive neural networks with conditional random fields to jointly extract explicit aspect terms and opinion terms as the first step toward ABSA. Xu et al. [69] applied a double embedding mechanism with a CNN model for aspect extraction. This approach (DE-CNN) uses both general-purpose embeddings (GloVe) and domain-specific embeddings for aspect extraction without any extra supervision.

The attention-over-attention mechanism proposed in [70] jointly models the representations of aspects and sentences to capture the interaction between aspects and sentence context. It uses two bi-directional LSTM networks to learn the hidden semantics of the words in the sentence and in the target. Target-specific transformation networks (TNet) [71] adapt a convolutional neural network to target-level sentiment classification, using a target-specific transformation component to integrate target information into the word representations.

Wang et al. [72] proposed an attention-based LSTM for ABSA, with two ways of incorporating aspect information into the attention mechanism. The interactive attention network (IAN) [73] leverages both target and context information to compute attention vectors and learns target and context representations; by concatenating the target representation with the context representation, IAN predicts the polarity of the target. Zhang et al. [74] proposed gated recurrent neural networks for targeted sentiment analysis. First, a bi-directional gated neural network is used to obtain better representations of the target and its context by applying pooling over hidden layers instead of over words. A three-way gated neural network then models the interaction between the surrounding context and the target. Saeidi et al. [84] introduced the SentiHood dataset for targeted ABSA and used bi-directional LSTM and logistic regression models to learn a classifier for each aspect.
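IAN's interaction between target and context can be sketched as two attention steps, each using the other side's average-pooled hidden states as the query, followed by concatenation. In this sketch the LSTM outputs are replaced by random arrays, the bilinear score tanh(h W q + b) is an assumed form, and all parameters are random placeholders; a final softmax layer over the concatenated vector (not shown) would predict the polarity.

```python
import numpy as np


def softmax(x):
    e = np.exp(x - x.max())
    return e / e.sum()


def attend(H, query, W, b=0.0):
    """Attention over the rows of H using a pooled query vector from the other side."""
    scores = np.tanh(H @ W @ query + b)   # one score per row of H
    alpha = softmax(scores)
    return alpha @ H


def ian_representation(H_context, H_target, W_c, W_t):
    c_avg = H_context.mean(axis=0)            # average-pooled context states
    t_avg = H_target.mean(axis=0)             # average-pooled target states
    c_rep = attend(H_context, t_avg, W_c)     # context attended by the target
    t_rep = attend(H_target, c_avg, W_t)      # target attended by the context
    return np.concatenate([t_rep, c_rep])     # input to the final polarity classifier


rng = np.random.default_rng(0)
d = 5
H_context = rng.normal(size=(9, d))  # stand-in for LSTM outputs over 9 context words
H_target = rng.normal(size=(2, d))   # stand-in for LSTM outputs over a 2-word target
W_c, W_t = rng.normal(size=(d, d)), rng.normal(size=(d, d))
rep = ian_representation(H_context, H_target, W_c, W_t)
print(rep.shape)  # (10,)
```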

Ma et al. [77] addressed targeted ABSA by applying attention in a two-step model at the target and sentence level and by extending the LSTM to incorporate commonsense knowledge associated with sentiments. Inspired by memory-augmented models in machine reading, Liu et al. [78] used external memory chains with a delayed memory update mechanism, which enables tracking of multiple target entities for targeted ABSA. Sun et al. [79] utilized the pre-trained BERT language model for targeted ABSA. Since BERT can represent both a single sentence and a pair of sentences, they constructed auxiliary sentences and thereby transformed targeted ABSA into a sentence-pair classification task, which was then solved by fine-tuning the pre-trained BERT model.
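Sun et al.'s reformulation can be illustrated by generating the sentence pairs directly. The sketch below builds auxiliary sentences in four styles, two that only name the target and aspect (QA-M, NLI-M) and two that also name a candidate polarity (QA-B, NLI-B), and pairs each with the original sentence in BERT's `[CLS] ... [SEP] ... [SEP]` layout. The template wording follows our reading of [79] and should be treated as an assumption; tokenization and the fine-tuned BERT classifier are not shown, and `auxiliary_sentence`, `sentence_pairs`, and the example sentence are hypothetical.

```python
def auxiliary_sentence(target, aspect, method, label=None):
    """Build an auxiliary sentence in one of four styles (templates are illustrative)."""
    if method == "QA-M":
        return f"what do you think of the {aspect} of {target} ?"
    if method == "NLI-M":
        return f"{target} - {aspect}"
    if method == "QA-B":
        return f"the polarity of the aspect {aspect} of {target} is {label}"
    if method == "NLI-B":
        return f"{target} - {aspect} - {label}"
    raise ValueError(f"unknown method: {method}")


def sentence_pairs(sentence, target, aspects, method="QA-M",
                   labels=("positive", "negative", "none")):
    """Return BERT-style sentence-pair inputs for every aspect of one target.

    *-M styles: one pair per aspect, later classified into one of `labels`.
    *-B styles: one pair per (aspect, label), later classified as yes/no.
    """
    pairs = []
    for aspect in aspects:
        if method.endswith("-M"):
            aux_list = [auxiliary_sentence(target, aspect, method)]
        else:
            aux_list = [auxiliary_sentence(target, aspect, method, lab) for lab in labels]
        for aux in aux_list:
            # Tokenization omitted; [CLS]/[SEP] mark the two segments.
            pairs.append(f"[CLS] {sentence} [SEP] {aux} [SEP]")
    return pairs


for p in sentence_pairs("LOCATION1 is central but very expensive",
                        "LOCATION1", ["price", "safety"], method="NLI-M"):
    print(p)
```

With the -M styles the classifier predicts one label per aspect; with the -B styles it answers yes or no for every (aspect, polarity) combination, which multiplies the number of inputs by the number of labels.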

5 Evaluation Metrics for Sentiment Analysis

This section discusses the evaluation metrics commonly used for sentiment analysis.

- Accuracy: Accuracy measures how often the sentiment label predicted by the model is correct; the higher the accuracy, the better the model. Accuracy is calculated as

$$ \text{Acc} = \frac{\text{TP} + \text{TN}}{\text{TP} + \text{TN} + \text{FP} + \text{FN}} \tag{1} $$

where TP, TN, FP, and FN denote true positives, true negatives, false positives, and false negatives, respectively.

- F1 score: The F1 score is the harmonic mean of the precision and recall measured on the test data:

$$ F_{1} = \frac{2\,(\text{Precision} \times \text{Recall})}{\text{Precision} + \text{Recall}} \tag{2} $$

where \( \text{Precision} = \text{TP}/(\text{TP} + \text{FP}) \) and \( \text{Recall} = \text{TP}/(\text{TP} + \text{FN}) \).

- Average recall (AvgRec): Average recall is used for models that predict the overall sentiment of a document or text. It is calculated by averaging the recall across the sentiment classes (positive, negative, and neutral):

$$ \text{AvgRec} = \frac{1}{3}\left( R^{P} + R^{N} + R^{U} \right) \tag{3} $$

where \( R^{P} \), \( R^{N} \), and \( R^{U} \) refer to the recall of the positive, negative, and neutral class, respectively. AvgRec lies in the range [0, 1] and is more robust to class imbalance than standard accuracy. The higher the AvgRec, the better the model.

- Macro-average F1 score: The macro-averaged F1 score over the positive and negative classes is calculated as

$$ F_{1}^{PN} = \frac{1}{2}\left( F_{1}^{P} + F_{1}^{N} \right) \tag{4} $$

where \( F_{1}^{P} \) and \( F_{1}^{N} \) denote the \( F_{1} \) score of the positive and negative class, respectively.

- Ranking loss: Ranking loss averages the distance between the actual and predicted ranks [85, 86]. It is calculated as

$$ \text{Ranking loss} = \sum_{i=1}^{n} \frac{\left| t_{i} - \hat{t}_{i} \right|}{k \times n} \tag{5} $$

where \( t_{i} \) and \( \hat{t}_{i} \) denote the actual and predicted sentiment values of instance i, k is the number of sentiment classes, and n is the number of test instances.

- Macro-averaged mean absolute error (\( MAE^{M} \)): This measure is robust to imbalanced datasets [87]. It is calculated as

$$ MAE^{M}(t, \hat{t}) = \frac{1}{k} \sum_{j=1}^{k} \frac{1}{\left| t_{j} \right|} \sum_{t_{i} \in t_{j}} \left| t_{i} - \hat{t}_{i} \right| \tag{6} $$

where t and \( \hat{t} \) denote the vectors of actual and predicted sentiment values, respectively, \( t_{j} = \{ t_{i} : t_{i} \in t, t_{i} = j \} \) is the set of test instances whose actual class is j, and k denotes the number of sentiment classes in t.

- Least absolute error (LAE) [88]: LAE is a widely used measure of sentiment classification error. It is given as

$$ \text{LAE} = \sum_{i=1}^{n} \left| \hat{t}_{i} - t_{i} \right| \tag{7} $$

where \( \hat{t}_{i} \) and \( t_{i} \) denote the predicted and actual sentiment values of instance i.

- Mean squared error (MSE) [89]: MSE evaluates the sentiment prediction error and is used specifically for regression. MSE and root MSE (RMSE) are computed as follows:

$$ \text{MSE} = \frac{1}{n} \sum_{i=1}^{n} \left( \hat{t}_{i} - t_{i} \right)^{2} \tag{8} $$

$$ \text{RMSE} = \sqrt{ \frac{1}{n} \sum_{i=1}^{n} \left( \hat{t}_{i} - t_{i} \right)^{2} } \tag{9} $$

where n denotes the number of test instances, and \( \hat{t}_{i} \) and \( t_{i} \) denote the predicted and actual sentiment values of instance i. Lower values of MSE and RMSE indicate better performance of the prediction model.

- Pearson correlation coefficient: It is calculated as

$$ r = \frac{1}{n-1} \sum_{i=1}^{n} \left( \frac{t_{i} - \bar{t}}{\sigma_{t}} \right) \left( \frac{\hat{t}_{i} - \bar{\hat{t}}}{\sigma_{\hat{t}}} \right) \tag{10} $$

where n denotes the number of test instances, \( \hat{t}_{i} \) and \( t_{i} \) denote the predicted and actual sentiment values, \( \bar{\hat{t}} \) and \( \bar{t} \) denote the arithmetic means of the predicted and actual values, and \( \sigma_{\hat{t}} \) and \( \sigma_{t} \) denote their sample standard deviations. A higher value of r indicates closer agreement between predicted and actual sentiments.

- Discounted cumulative gain (DCG): When sentiment analysis is performed with topic modeling, topics (aspects) are detected first and the sentiments associated with them are predicted afterwards. To evaluate the relevance of the returned topics (aspects), normalized discounted cumulative gain (nDCG) is used [90]. The regular DCG is computed as

$$ \text{DCG}_{m} = \sum_{i=1}^{m} \frac{2^{\text{rel}(i)} - 1}{\log_{2}(i + 1)} \tag{11} $$

where m denotes the number of top-ranked topics (aspects) considered and \( \text{rel}(i) \) denotes the relevance score of the topic (aspect) at rank i. nDCG divides \( \text{DCG}_{m} \) by the DCG of the ideal ranking, so that it lies in [0, 1]; for models that rank the detected topics (aspects), it summarizes the quality of the ranking.

- Kullback-Leibler divergence (KLD): KLD [91] measures the error incurred when the actual distribution t over a set \( \mathcal{K} \) of sentiment classes is estimated by a predicted distribution \( \hat{t} \). As with \( MAE^{M} \), the lower the KLD, the better the model. KLD is calculated as

$$ \text{KLD}(\hat{t}, t, \mathcal{K}) = \sum_{k_{j} \in \mathcal{K}} t(k_{j}) \log_{e} \frac{t(k_{j})}{\hat{t}(k_{j})} \tag{12} $$

- Area under the ROC curve (AUC): Saeidi et al. [84] proposed using AUC for the aspect detection and sentiment detection tasks. AUC measures the quality of the ranking induced by the output scores without relying on a decision threshold.
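The classification metrics in Eqs. (1)-(4) and AUC can be computed with a few lines of Python. The sketch below is illustrative rather than a reference implementation: accuracy is written for any number of classes (share of correct predictions), per-class precision and recall are obtained one-vs-rest, undefined ratios are set to 0, and AUC is computed in its pairwise form (the probability that a randomly chosen positive instance is scored above a randomly chosen negative one, with ties counting one half). The function names and toy labels are hypothetical.

```python
def per_class_counts(y_true, y_pred, cls):
    """One-vs-rest counts (TP, FP, FN) for class `cls`."""
    tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
    fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
    fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
    return tp, fp, fn


def accuracy(y_true, y_pred):
    """Eq. (1) generalised to any number of classes: share of correct predictions."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision_recall_f1(y_true, y_pred, cls):
    """Precision, recall and Eq. (2) F1 for one class; 0.0 when a ratio is undefined."""
    tp, fp, fn = per_class_counts(y_true, y_pred, cls)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def avg_recall(y_true, y_pred, classes=("positive", "negative", "neutral")):
    """Eq. (3): unweighted mean of per-class recall."""
    return sum(precision_recall_f1(y_true, y_pred, c)[1] for c in classes) / len(classes)


def f1_pn(y_true, y_pred):
    """Eq. (4): mean F1 over the positive and negative classes only."""
    f1_p = precision_recall_f1(y_true, y_pred, "positive")[2]
    f1_n = precision_recall_f1(y_true, y_pred, "negative")[2]
    return (f1_p + f1_n) / 2


def auc(labels, scores):
    """Pairwise AUC: P(score of a positive > score of a negative), ties count 1/2.
    labels are 1 (positive) or 0 (negative)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


y_true = ["positive", "negative", "neutral", "positive", "negative", "neutral"]
y_pred = ["positive", "negative", "positive", "neutral", "negative", "neutral"]
print(accuracy(y_true, y_pred), avg_recall(y_true, y_pred), f1_pn(y_true, y_pred))
print(auc([1, 1, 0, 0], [0.9, 0.4, 0.6, 0.1]))
```

In this toy example the negative class is predicted perfectly while the positive and neutral classes are confused with each other; AvgRec and \( F_{1}^{PN} \) expose that imbalance, whereas accuracy alone does not show which classes were confused.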
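Similarly, the error, correlation, ranking, and divergence measures in Eqs. (5)-(12) translate directly into code. In this sketch, sentiment values are assumed to be numeric (e.g., ratings on a hypothetical 1-5 scale), `pearson` uses sample standard deviations to match the \( 1/(n-1) \) factor in Eq. (10), `ndcg` normalizes by the ideal ordering of the same returned items, and `kld` assumes that the predicted distribution is non-zero wherever the actual one is (in practice a smoothing step would be added). All function names and toy values are hypothetical.

```python
import math


def ranking_loss(t, t_hat, k):
    """Eq. (5): sum_i |t_i - t_hat_i| / (k * n)."""
    n = len(t)
    return sum(abs(a - b) for a, b in zip(t, t_hat)) / (k * n)


def macro_mae(t, t_hat):
    """Eq. (6): MAE per actual class, averaged over the classes present in t."""
    classes = sorted(set(t))
    total = 0.0
    for j in classes:
        errors = [abs(a - b) for a, b in zip(t, t_hat) if a == j]
        total += sum(errors) / len(errors)
    return total / len(classes)


def lae(t, t_hat):
    """Eq. (7): sum of absolute errors."""
    return sum(abs(b - a) for a, b in zip(t, t_hat))


def mse(t, t_hat):
    """Eq. (8): mean squared error."""
    return sum((b - a) ** 2 for a, b in zip(t, t_hat)) / len(t)


def rmse(t, t_hat):
    """Eq. (9): root mean squared error."""
    return math.sqrt(mse(t, t_hat))


def pearson(t, t_hat):
    """Eq. (10) with sample standard deviations (divisor n - 1)."""
    n = len(t)
    mt, mp = sum(t) / n, sum(t_hat) / n
    st = math.sqrt(sum((a - mt) ** 2 for a in t) / (n - 1))
    sp = math.sqrt(sum((b - mp) ** 2 for b in t_hat) / (n - 1))
    return sum(((a - mt) / st) * ((b - mp) / sp) for a, b in zip(t, t_hat)) / (n - 1)


def dcg(rels, m):
    """Eq. (11): rels[i - 1] is the relevance of the topic returned at rank i."""
    return sum((2 ** r - 1) / math.log2(i + 1) for i, r in enumerate(rels[:m], start=1))


def ndcg(rels, m):
    """DCG normalised by the DCG of the ideal (descending) ordering of the same items."""
    ideal = dcg(sorted(rels, reverse=True), m)
    return dcg(rels, m) / ideal if ideal > 0 else 0.0


def kld(t_dist, t_hat_dist):
    """Eq. (12): sum_k t(k) * ln(t(k) / t_hat(k)); classes with t(k) = 0 add 0.
    Assumes t_hat(k) > 0 wherever t(k) > 0 (otherwise smoothing is needed)."""
    return sum(p * math.log(p / q) for p, q in zip(t_dist, t_hat_dist) if p > 0)


t = [5, 4, 3, 1, 2]        # actual ratings (hypothetical)
t_hat = [4, 4, 3, 2, 2]    # predicted ratings (hypothetical)
print(ranking_loss(t, t_hat, k=5), macro_mae(t, t_hat), lae(t, t_hat))
print(mse(t, t_hat), rmse(t, t_hat), pearson(t, t_hat))
print(ndcg([2, 3, 0], m=3), kld([0.5, 0.3, 0.2], [0.4, 0.4, 0.2]))
```

Note that `macro_mae` averages per class before averaging across classes, so a rare class carries the same weight as a frequent one; this is what makes \( MAE^{M} \) robust to imbalanced test sets.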

6 Benchmarked Datasets and Tools

Table 4 gives a glimpse of the standard benchmark datasets used for sentiment analysis at the document, sentence, aspect, and targeted aspect level.

There are numerous tools that offer sentiment analysis as one of their services. Details of these tools are given in Table 5.

Owing to the popularity of sentiment analysis, dedicated search engines have been developed, such as Social Mention [116], Social Searcher [117], and Talkwalker’s Quick Search [118]. Social Mention [116] aggregates user-generated data across the Web and reports the sentiment toward a given keyword based on how many positive, negative, and neutral mentions of the keyword appear in the collected data. Social Searcher [117] is a real-time search engine that quickly pulls recent mentions from popular social networks and displays analytics (mentions, users, and sentiments) for the topic entered in the search box. It also offers sentiment filters for retrieving a subset of mentions.

7 Conclusion

This chapter demystifies state-of-the-art approaches for sentiment analysis. The proposed graphical taxonomy sets out the traits to be considered when designing sentiment analysis systems. Providing suitable input to deep learning models plays a crucial role in achieving good performance. Therefore, parameters associated with text representation techniques, such as the use of embedding vectors, language models, ways of improving the functionality of embedding vectors, and approximations of the computationally expensive softmax function in embedding models, have been discussed thoroughly.

The chapter also gives a comparative overview of noteworthy research papers on sentiment analysis at the document, sentence, and aspect level using deep learning approaches. We also shed light on state-of-the-art benchmark datasets and on the tools and services available for sentiment analysis.

References

Pathak, A.R., M. Pandey, and S. Rautaray. 2018. Construing the big data based on taxonomy, analytics and approaches. Iran Journal of Computer Science 1: 237–259.

NLP market. https://www.tractica.com/newsroom/press-releases/natural-language-processing-market-to-reach-22–3-billion-by-2025/.

Agarwal, B., and N. Mittal. 2016. Prominent feature extraction for sentiment analysis. In Springer Book Series: Socio-Affective Computing series, 1–115. Springer International Publishing, ISBN: 978-3-319-25343-5. https://doi.org/10.1007/978-3-319-25343-5.

Pathak, A.R., M. Pandey, and S. Rautaray. 2018. Application of deep learning for object detection. Procedia Computer Science 132: 1706–1717.

Pathak, A.R., M. Pandey, and S. Rautaray. 2018. Deep learning approaches for detecting objects from images: A review. In Progress in Computing, Analytics and Networking, ed. P.K. Pattnaik, S.S. Rautaray, H. Das, and J. Nayak, 491–499. Springer Singapore.

Pathak, A.R., M. Pandey, S. Rautaray, and K. Pawar. 2018. Assessment of object detection using deep convolutional neural networks. In Advances in Intelligent Systems and Computing, 673.

Pawar, K., and V. Attar. 2019. Deep learning approaches for video-based anomalous activity detection. World Wide Web 22: 571–601.

Pawar, K., and V. Attar. 2019. Deep Learning approach for detection of anomalous activities from surveillance videos. In CCIS. Springer (in press).

Pathak, A.R., M. Pandey, and S. Rautaray. 2019. Adaptive model for dynamic and temporal topic modeling from big data using deep learning architecture. International Journal of Intelligent Systems and Applications 11: 13–27. https://doi.org/10.5815/ijisa.2019.06.02.

Bhat, M.R., M.A. Kundroo, T.A. Tarray, and B. Agarwal. 2019. Deep LDA: A new way to topic model. Journal of Information and Optimization Sciences: 1–12.

Pathak, A.R., M. Pandey, and S. Rautaray. 2019. Adaptive framework for deep learning based dynamic and temporal topic modeling from big data. Recent Patents on Engineering, Bentham Science 13: 1. https://doi.org/10.2174/1872212113666190329234812.

Pathak, A.R., M. Pandey, and S. Rautaray. 2019. Empirical evaluation of deep learning models for sentiment analysis. Journal of Statistics and Management Systems 22: 741–752.

Pathak, A.R., M. Pandey, and S. Rautaray. 2019. Adaptive model for sentiment analysis of social media data using deep learning. In International Conference on Intelligent Computing and Communication Technologies, 416–423.

Ram, S., S. Gupta, and B. Agarwal. 2018. Devanagri character recognition model using deep convolution neural network. Journal of Statistics and Management Systems 21: 593–599.

Hinton, G., et al. 2012. Deep neural networks for acoustic modeling in speech recognition. IEEE Signal Processing Magazine 29.

Jain, G., M. Sharma, and B. Agarwal. 2019. Spam detection in social media using convolutional and long short term memory neural network. Annals of Mathematics and Artificial Intelligence 85: 21–44.

Agarwal, B., H. Ramampiaro, H. Langseth, and M. Ruocco. 2018. A deep network model for paraphrase detection in short text messages. Information Processing & Management 54: 922–937.

Jain, G., M. Sharma, and B. Agarwal. 2019. Optimizing semantic LSTM for spam detection. International Journal of Information Technology 11: 239–250.

Liu, B. 2012. Sentiment Analysis and Opinion Mining, 1–108. https://doi.org/10.2200/s00416ed1v01y201204hlt016.

SemEval-2014. http://alt.qcri.org/semeval2014/task4/.

Glorot, X., A. Bordes, and Y. Bengio. 2011. Domain adaptation for large-scale sentiment classification: A deep learning approach. In Proceedings of the 28th International Conference on Machine Learning (ICML-11), 513–520.

Bengio, Y., R. Ducharme, P. Vincent, and C. Jauvin. 2003. A neural probabilistic language model. Journal of Machine Learning Research 3: 1137–1155.

Collobert, R., et al. 2011. Natural language processing (almost) from scratch. Journal of Machine Learning Research 12: 2493–2537.

Mikolov, T., K. Chen, G. Corrado, and J. Dean. 2013. Efficient estimation of word representations in vector space. arXiv Prepr. arXiv1301.3781.

Bojanowski, P., E. Grave, A. Joulin, and T. Mikolov. 2017. Enriching word vectors with subword information. Transactions of the Association for Computational Linguistics 5: 135–146.

Pennington, J., R. Socher, and C. Manning. 2014. GloVe: Global vectors for word representation. In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), 1532–1543.

Peters, M.E., et al. 2018. Deep contextualized word representations. In Proceedings of NAACL.

Tang, D., et al. 2014. Learning sentiment-specific word embedding for Twitter sentiment classification. In Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 1555–1565.

Yang, Z., et al. 2018. GloMo: Unsupervisedly learned relational graphs as transferable representations. arXiv Prepr. arXiv1806.05662.

Howard, J., and S. Ruder. 2018. Universal language model fine-tuning for text classification. arXiv Prepr. arXiv1801.06146.

Radford, A., K. Narasimhan, T. Salimans, and I. Sutskever. 2018. Improving language understanding by generative pre-training. URL https://s3-us-west-2.amazonaws.com/openai-assets/research-covers/language-unsupervised/language_understanding_paper.pdf.

Devlin, J., M.-W. Chang, K. Lee, and K. Toutanova. 2018. BERT: Pre-training of deep bidirectional transformers for language understanding. arXiv Prepr. arXiv1810.04805.

Liu, P., S. Joty, and H. Meng. 2015. Fine-grained opinion mining with recurrent neural networks and word embeddings. In Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing, 1433–1443.

Zhai, S., and Z.M. Zhang. 2016. Semisupervised autoencoder for sentiment analysis. In Thirtieth AAAI Conference on Artificial Intelligence.

ELMo. https://allennlp.org/elmo.

Zhu, Y., et al. 2015. Aligning books and movies: Towards story-like visual explanations by watching movies and reading books. In Proceedings of the IEEE International Conference on Computer Vision, 19–27.

Poria, S., E. Cambria, and A. Gelbukh. 2016. Aspect extraction for opinion mining with a deep convolutional neural network. Knowledge-Based Systems 108: 42–49.

Wang, W., S.J. Pan, D. Dahlmeier, and X. Xiao. 2016. Recursive neural conditional random fields for aspect-based sentiment analysis. arXiv Prepr. arXiv1603.06679.

Jebbara, S., and P. Cimiano. 2016. Aspect-based relational sentiment analysis using a stacked neural network architecture. In Proceedings of the Twenty-second European Conference on Artificial Intelligence, 1123–1131.

Tang, D., B. Qin, and T. Liu. 2015. Learning semantic representations of users and products for document level sentiment classification. In Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), 1014–1023.

Yu, J., and J. Jiang. 2016. Learning sentence embeddings with auxiliary tasks for cross-domain sentiment classification. In Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing, 236–246.

Sarma, P.K., Y. Liang, and W.A. Sethares. 2018. Domain adapted word embeddings for improved sentiment classification. arXiv Prepr. arXiv1805.04576.

Bengio, Y., J.-S. Senécal, et al. 2003. Quick training of probabilistic neural nets by importance sampling. In AISTATS, 1–9.

Bengio, Y., and J.-S. Senécal. 2008. Adaptive importance sampling to accelerate training of a neural probabilistic language model. IEEE Transactions on Neural Networks 19: 713–722.

Jean, S., K. Cho, R. Memisevic, and Y. Bengio. 2014. On using very large target vocabulary for neural machine translation. arXiv Prepr. arXiv1412.2007.

Mnih, A., and Y.W. Teh. 2012. A fast and simple algorithm for training neural probabilistic language models. arXiv Prepr. arXiv1206.6426.

Morin, F., and Y. Bengio. 2005. Hierarchical probabilistic neural network language model. AISTATS 5: 246–252.

Chen, W., D. Grangier, and M. Auli. 2015. Strategies for training large vocabulary neural language models. arXiv Prepr. arXiv1512.04906.

Kim, Y., Y. Jernite, D. Sontag, and A.M. Rush. 2016. Character-aware neural language models. In Thirtieth AAAI Conference on Artificial Intelligence.

Jozefowicz, R., O. Vinyals, M. Schuster, N. Shazeer, and Y. Wu. 2016. Exploring the limits of language modeling. arXiv Prepr. arXiv1602.02410.

Tang, D., B. Qin, and T. Liu. 2015. Document modeling with gated recurrent neural network for sentiment classification. In Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing, 1422–1432.

Zhou, H., L. Chen, F. Shi, and D. Huang. 2015. Learning bilingual sentiment word embeddings for cross-language sentiment classification. In Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), 430–440.

Dou, Z.-Y. 2017. Capturing user and product information for document level sentiment analysis with deep memory network. In Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing, 521–526.

Le, Q., and T. Mikolov. 2014. Distributed representations of sentences and documents. In International Conference on Machine Learning, 1188–1196.

Yang, Z., et al. 2016. Hierarchical attention networks for document classification. In Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 1480–1489.

Yin, Y., Y. Song, and M. Zhang. 2017. Document-level multi-aspect sentiment classification as machine comprehension. In Proceedings of the 2017 Conference on Empirical Methods in Natural Language Processing, 2044–2054.

Zhou, X., X. Wan, and J. Xiao. 2016. Attention-based LSTM network for cross-lingual sentiment classification. In Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing, 247–256.

Li, Z., Y. Zhang, Y. Wei, Y. Wu, and Q. Yang. 2017. End-to-end adversarial memory network for cross-domain sentiment classification. In IJCAI, 2237–2243.

Rao, G., W. Huang, Z. Feng, and Q. Cong. 2018. LSTM with sentence representations for document-level sentiment classification. Neurocomputing 308: 49–57.

Socher, R., J. Pennington, E.H. Huang, A.Y. Ng, and C.D. Manning. 2011. Semi-supervised recursive autoencoders for predicting sentiment distributions. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, 151–161.

Socher, R., B. Huval, C.D. Manning, and A.Y. Ng. 2012. Semantic compositionality through recursive matrix-vector spaces. In Proceedings of the 2012 Joint Conference on Empirical Methods in Natural Language Processing and Computational Natural Language Learning, 1201–1211.

Qian, Q., et al. 2015. Learning tag embeddings and tag-specific composition functions in recursive neural network. In Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), 1365–1374.

Kalchbrenner, N., E. Grefenstette, and P. Blunsom. 2014. A convolutional neural network for modelling sentences. arXiv Prepr. arXiv1404.2188.

dos Santos, C., and M. Gatti. 2014. Deep convolutional neural networks for sentiment analysis of short texts. In Proceedings of COLING 2014, the 25th International Conference on Computational Linguistics: Technical Papers, 69–78.

Wang, X., Y. Liu, C. Sun, B. Wang, and X. Wang. 2015. Predicting polarities of tweets by composing word embeddings with long short-term memory. In Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), vol. 1, 1343–1353.

Wang, J., L.-C. Yu, K. Lai, and X. Zhang. 2016. Dimensional sentiment analysis using a regional CNN-LSTM model. In Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), 225–230.

Ruder, S., P. Ghaffari, and J.G. Breslin. 2016. A hierarchical model of reviews for aspect-based sentiment analysis. arXiv Prepr. arXiv1609.02745.

Wang, W., S.J. Pan, D. Dahlmeier, and X. Xiao. 2016. Recursive neural conditional random fields for aspect-based sentiment analysis. arXiv Prepr. arXiv1603.06679.

Xu, H., B. Liu, L. Shu, and P.S. Yu. 2018. Double embeddings and CNN-based sequence labeling for aspect extraction. arXiv Prepr. arXiv1805.04601.

Huang, B., Y. Ou, and K.M. Carley. 2018. Aspect level sentiment classification with attention-over-attention neural networks. In International Conference on Social Computing, Behavioral-Cultural Modeling and Prediction and Behavior Representation in Modeling and Simulation, 197–206.

Li, X., L. Bing, W. Lam, and B. Shi. 2018. Transformation networks for target-oriented sentiment classification. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 946–956.

Wang, Y., M. Huang, L. Zhao, et al. 2016. Attention-based LSTM for aspect-level sentiment classification. In Proceedings of the 2016 Conference on Empirical Methods in Natural Language Processing, 606–615.

Ma, D., S. Li, X. Zhang, and H. Wang. 2017. Interactive attention networks for aspect-level sentiment classification. arXiv Prepr. arXiv1709.00893.

Zhang, M., Y. Zhang, and D.-T. Vo. 2016. Gated neural networks for targeted sentiment analysis. In Thirtieth AAAI Conference on Artificial Intelligence.

Mitchell et al. Corpus. http://www.m-mitchell.com/code/index.html.

Xu, J., D. Chen, X. Qiu, and X. Huang. 2016. Cached long short-term memory neural networks for document-level sentiment classification. arXiv Prepr. arXiv1610.04989.

Ma, Y., H. Peng, and E. Cambria. 2018. Targeted aspect-based sentiment analysis via embedding commonsense knowledge into an attentive LSTM. In Thirty-Second AAAI Conference on Artificial Intelligence.

Liu, F., T. Cohn, and T. Baldwin. 2018. Recurrent entity networks with delayed memory update for targeted aspect-based sentiment analysis. In Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 2 (Short Papers), 278–283.

Sun, C., L. Huang, and X. Qiu. 2019. Utilizing BERT for aspect-based sentiment analysis via constructing auxiliary sentence. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), 380–385.

Pelletier, F.J. 1994. The principle of semantic compositionality. Topoi 13: 11–24.

Socher, R., et al. 2013. Recursive deep models for semantic compositionality over a sentiment treebank. In Proceedings of the 2013 Conference on Empirical Methods in Natural Language Processing, 1631–1642.

Sentiment Treebank. https://nlp.stanford.edu/sentiment/treebank.html.

Blitzer, J., M. Dredze, and F. Pereira. 2007. Biographies, Bollywood, boom-boxes and blenders: Domain adaptation for sentiment classification. In Proceedings of the 45th Annual Meeting of the Association of Computational Linguistics, 440–447.

Saeidi, M., G. Bouchard, M. Liakata, and S. Riedel. 2016. SentiHood: Targeted aspect based sentiment analysis dataset for urban neighbourhoods. In Proceedings of COLING 2016, the 26th International Conference on Computational Linguistics: Technical Papers, 1546–1556.

Crammer, K., and Y. Singer. 2002. Pranking with ranking. In Advances in Neural Information Processing Systems, 641–647.

Moghaddam, S., and M. Ester. 2010. Opinion digger: an unsupervised opinion miner from unstructured product reviews. In Proceedings of the 19th ACM International Conference on Information and Knowledge Management, 1825–1828.

Marcheggiani, D., O. Täckström, A. Esuli, and F. Sebastiani. 2014. Hierarchical multi-label conditional random fields for aspect-oriented opinion mining. In European Conference on Information Retrieval, 273–285.

Lu, B., M. Ott, C. Cardie, and B.K. Tsou. 2011. Multi-aspect sentiment analysis with topic models. In 2011 IEEE 11th International Conference on Data Mining Workshops (ICDMW), 81–88.

Wang, H., Y. Lu, and C. Zhai. 2011. Latent aspect rating analysis without aspect keyword supervision. In Proceedings of the 17th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 618–626.

Wang, Q., J. Xu, H. Li, and N. Craswell. 2013. Regularized latent semantic indexing: A new approach to large-scale topic modeling. ACM Transactions on Information Systems (TOIS) 31: 5.

Kullback, S., and R.A. Leibler. 1951. On information and sufficiency. The Annals of Mathematical Statistics 22: 79–86.

Yelp Dataset. https://www.yelp.com/dataset/challenge.

Diao, Q., et al. 2014. Jointly modeling aspects, ratings and sentiments for movie recommendation (JMARS). In Proceedings of the 20th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 193–202.

Zhang, X., J. Zhao, and Y. LeCun. 2015. Character-level convolutional networks for text classification. In Advances in Neural Information Processing Systems, 649–657.

NLP and CC 2013. http://tcci.ccf.org.cn/conference/2013/index.html.

Movie Reviews. http://www.cs.cornell.edu/people/pabo/movie-review-data/.

MPQA Opinion. http://mpqa.cs.pitt.edu.

Go, A., R. Bhayani, and L. Huang. 2009. Twitter sentiment classification using distant supervision. In CS224N Project Report, Stanford, 1.

Yu, L.-C., et al. 2016. Building Chinese affective resources in valence-arousal dimensions. In Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 540–545.

Camera Review. https://www.cs.uic.edu/~liub/FBS/sentiment-analysis.html.

Pontiki, M., et al. 2016. SemEval-2016 task 5: Aspect based sentiment analysis. In Proceedings of the 10th International Workshop on Semantic Evaluation (SemEval-2016), 19–30.

Dong, L., et al. 2014. Adaptive recursive neural network for target-dependent Twitter sentiment classification. In Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers), 49–54.

Brand24. https://brand24.com.

Clarabridge. https://www.clarabridge.com/platform/analytics/.

Repustate. https://www.repustate.com.

ParallelDots. https://www.paralleldots.com/sentiment-analysis.

Lexalytics. https://www.lexalytics.com/technology/sentiment-analysis.

Hi-Tech BPO. https://www.hitechbpo.com/sentiment-analysis.php.

Sentiment Analyzer. https://www.danielsoper.com/sentimentanalysis/.

SentiStrength. http://sentistrength.wlv.ac.uk.

Meaning Cloud. https://www.meaningcloud.com/products/sentiment-analysis.

Tweet Sentiment Visualization. https://www.csc2.ncsu.edu/faculty/healey/tweet_viz/tweet_app/.

Rapidminer. https://rapidminer.com/solutions/text-mining/.

Brandwatch. https://www.brandwatch.com/products/analytics/.

Social Mention. http://www.socialmention.com.

Social Searcher. https://www.social-searcher.com/social-buzz/.

Talkwalker’s Quick Search. https://www.talkwalker.com/quick-search-form.

Sentigem. https://sentigem.com.

Copyright information

© 2020 Springer Nature Singapore Pte Ltd.

About this chapter

Cite this chapter

Pathak, A.R., Agarwal, B., Pandey, M., Rautaray, S. (2020). Application of Deep Learning Approaches for Sentiment Analysis. In: Agarwal, B., Nayak, R., Mittal, N., Patnaik, S. (eds) Deep Learning-Based Approaches for Sentiment Analysis. Algorithms for Intelligent Systems. Springer, Singapore. https://doi.org/10.1007/978-981-15-1216-2_1

DOI: https://doi.org/10.1007/978-981-15-1216-2_1

Publisher Name: Springer, Singapore

Print ISBN: 978-981-15-1215-5

Online ISBN: 978-981-15-1216-2

eBook Packages: Engineering, Engineering (R0)