Abstract

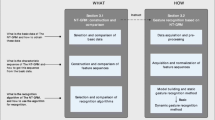

We present a new gesture recognition method that uses multi-modal data. Our approach casts recognition as a labeling problem, so that gesture categories and their temporal ranges are determined simultaneously. To this end, we formulate a generative probabilistic model, which is constructed by nonparametrically estimating multi-modal densities from a training dataset. In addition to conventional features based on skeletal joints, we exploit appearance information near the active hand in the RGB image to capture the detailed motion of the fingers. The estimated log-likelihood function serves as the unary term of our Markov random field (MRF) model, and a smoothness term is incorporated to enforce temporal coherence. The labeling is then obtained efficiently by dynamic programming. Experimental results demonstrate that our method produces effective gesture labeling on a large-scale gesture dataset. Our method achieves a mean Jaccard index of \(0.8268\) and is ranked 3rd in the gesture recognition track of the ChaLearn Looking at People (LAP) Challenge in 2014.
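As an illustration of how a chain-structured MRF with a unary log-likelihood term and a temporal smoothness term can be labeled by dynamic programming, the following is a minimal sketch and not the paper's implementation. It assumes a Potts-style smoothness penalty (a constant cost whenever adjacent frames take different labels), which the abstract does not specify; the function name `label_sequence` and the parameter `smooth_weight` are hypothetical.

```python
def label_sequence(log_lik, smooth_weight):
    """Label each frame by minimizing
         sum_t -log_lik[t][label_t] + smooth_weight * [label_t != label_{t-1}]
    with Viterbi-style dynamic programming.

    log_lik[t][k] is the (assumed precomputed) log-likelihood of label k at frame t.
    The Potts penalty is an assumption made for this sketch.
    """
    num_labels = len(log_lik[0])
    # Accumulated cost of the best labeling ending in each label at frame 0.
    cost = [-v for v in log_lik[0]]
    backpointers = []

    for t in range(1, len(log_lik)):
        # With a Potts penalty, the best predecessor is either the same label
        # or the globally cheapest previous label plus the switching cost.
        best_prev = min(range(num_labels), key=lambda k: cost[k])
        new_cost, pointers = [], []
        for k in range(num_labels):
            stay = cost[k]
            switch = cost[best_prev] + smooth_weight
            if stay <= switch:
                prev, c = k, stay
            else:
                prev, c = best_prev, switch
            new_cost.append(c - log_lik[t][k])
            pointers.append(prev)
        cost = new_cost
        backpointers.append(pointers)

    # Backtrack from the cheapest final label.
    label = min(range(num_labels), key=lambda k: cost[k])
    labels = [label]
    for pointers in reversed(backpointers):
        label = pointers[label]
        labels.append(label)
    labels.reverse()
    return labels


# Toy example: two labels, five frames, one weakly contradicting frame.
toy = [[0, -2], [0, -2], [-1, 0], [0, -2], [0, -2]]
unsmoothed = label_sequence(toy, smooth_weight=0)
smoothed = label_sequence(toy, smooth_weight=3)
```

With `smooth_weight=0` each frame simply takes its most likely label, whereas a larger weight suppresses the isolated label change in the middle frame, which is the temporal coherence effect the smoothness term is meant to provide.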

Copyright information

© 2015 Springer International Publishing Switzerland

About this paper

Cite this paper

Chang, J.Y. (2015). Nonparametric Gesture Labeling from Multi-modal Data. In: Agapito, L., Bronstein, M., Rother, C. (eds) Computer Vision - ECCV 2014 Workshops. ECCV 2014. Lecture Notes in Computer Science, vol 8925. Springer, Cham. https://doi.org/10.1007/978-3-319-16178-5_35

DOI: https://doi.org/10.1007/978-3-319-16178-5_35

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-16177-8

Online ISBN: 978-3-319-16178-5

eBook Packages: Computer Science, Computer Science (R0)