Abstract

In recent years, most developed countries have been facing serious issues with an increasing number of lifestyle-related diseases. Recognizing human emotions and their strength is an essential challenge in improving healthcare services. In this research, we propose a purely segment-level approach that entirely abandons utterance-level features. We focus on better extracting emotional information from a number of selected segments within an utterance and on establishing a method for recognizing the emotion of the utterance. The proposed method was validated on a 50-person emotional speech database that was specifically designed for this research, and the average accuracy improved significantly, by more than 20 %, compared with existing utterance-level approaches. Moreover, test results based on speech signals stimulated by the International Affective Picture System (IAPS) database showed that the proposed method could also be used in emotion strength analysis.

1 Introduction

Recently, increasing attention has been drawn to identifying emotions by using speech signals. There are many reasons for the popularity of speech signals in emotion recognition. One main reason is that speech is the most natural and important means of human communication. However, despite the tremendous amount of research on speech recognition conducted since the late 1950s, emotion is still one of the largest differences between humans and machines [1]. Recognizing human emotion from speech enables promising applications such as healthcare services, commercial conversations, virtual humans, emotion-based indexing, and information retrieval.

An utterance (phrase, short sentence, etc.) is often considered to be a fundamental unit and is recognized on the basis of the global utterance-wise statistics of derived segments, so the segment features are transformed into a single feature vector for each emotional utterance [2–6]. However, in recent research, an increasing number of scientists and psychologists have been arguing that changes in emotional activity occur within a very short period of time. Several studies have emphasized the importance of the temporal dynamics of emotions [7, 8]. Furthermore, one study illustrates that emotions are inherently dynamic [9]. The paper contains an illustration showing that, within 2.6 s, a person went through several emotional activities, such as surprise, fear, aggressive stance, and relaxation. In addition, another study demonstrates that the emotion effect occurs within hundreds of milliseconds [10].

Motivated by these findings, we focused on a novel scheme to improve speech emotion recognition by using segment-level features instead of utterance-wise features [11–13]. Many researchers have recently been questioning whether the utterance-level approach is the right choice for modeling emotions [14]. Their concern is that utterance-wise statistics have difficulty avoiding the influence of spoken content. Moreover, a segment-level feature extraction approach could utilize valuable information that is neglected when only the utterance-wise statistics are calculated. This hypothesis is also supported by several studies [15, 16], which show that improvements can be made by adding segment-level features to the common utterance-level features.

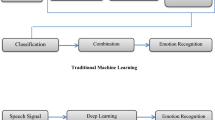

In this study, we adopted a purely segment-level strategy for recognizing speech emotion and abandoned utterance-wise features, both to reduce noise such as the influence of spoken content and to utilize information that is neglected when only utterance-wise statistics are calculated. One issue with segment-level speech emotion recognition is that it makes training considerably more difficult because a single utterance is divided into a number of segments. The aim of this paper is to design an approach that recognizes utterance-level emotion by aggregating segment-level labels and that extracts additional information such as emotion strength. The concept is illustrated in Fig. 1.

2 Experimental Design for Emotion Database

A well-annotated database is needed to construct a robust method for recognizing emotions by using speech signals [17]. Our experiment emphasizes “natural speech.” The participants were prevented from becoming aware that they were in an experimental environment during the experiments, which is much more realistic than experiments that were conducted with scripted speech. Natural speech is difficult to analyze but more suitable than scripted speech for validating the robustness of an emotion analysis method.

2.1 Experimental Procedure

The experimental setup is composed of one instructor, one coordinator, and two subjects. The coordinator cooperates with the subjects in order to help better stimulate their emotions. However, the coordinator pretends to be one of the participants in the experiment to avoid being an extra obstruction for the subjects. The stimulation process unfolds through conversations with the aid of videos. The steps are demonstrated as follows, and the experiment is illustrated in Fig. 2.

- The instructor sets up the experimental environment, such as a projector for the videos and microphones for collecting the speech signals, and gives instructions to the participants.

- The instructor also explains the steps to the participants, including the coordinator, for freely providing their impressions related to the videos.

- Self-introductions are made to create an easy speaking atmosphere.

- After watching each emotion evoking video, which lasts several minutes, the speech signals are recorded from the impressions.

The emotion corresponding to each utterance in the recorded speech signals is assessed after the experiment not only by the subjects themselves (self-assessment) but also by ten other people (others-assessment). Therefore, it is possible to evaluate how reliable the emotion label of each utterance is.

2.2 Data Information

Ninety-six people (53 males and 43 females), ranging in age from their early teens to their 40s, participated in the experiments. We selected samples in two steps to obtain reliable data. First, only the samples that received the same label (pleasure or displeasure) in both the self-assessment and the others-assessment were taken into consideration. Second, to balance the number of samples for each label, we selected the 300 utterances ranked highest in the others-assessment: 150 pleasure utterances and 150 displeasure utterances obtained from 50 participants. Ten specialists labeled each utterance in the others-assessment, and the rank of each utterance was calculated as the ratio of specialists whose label was consistent with the label from the self-assessment.
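The two-step selection described above can be sketched as follows. This is a minimal illustration, not the authors' procedure: the record layout (`id`, `self`, `others`), the function names, and the use of a simple majority to derive the others-assessment label are all assumptions, and the demo records are toy values.

```python
from collections import Counter

def others_majority(labels):
    """Most common label among the specialists (assumed rule; ties go to the first label seen)."""
    return Counter(labels).most_common(1)[0][0]

def agreement_ratio(self_label, others_labels):
    """Fraction of specialists whose label matches the self-assessment label."""
    matches = sum(1 for label in others_labels if label == self_label)
    return matches / len(others_labels)

def select_samples(utterances, per_label):
    """Keep consistent utterances, then take the top-ranked ones for each label."""
    # Step 1: self-assessment and others-assessment must agree.
    consistent = [u for u in utterances if others_majority(u["others"]) == u["self"]]
    selected = []
    # Step 2: balance the labels by taking the highest agreement ratios per label.
    for label in ("pleasure", "displeasure"):
        pool = [u for u in consistent if u["self"] == label]
        pool.sort(key=lambda u: agreement_ratio(u["self"], u["others"]), reverse=True)
        selected.extend(pool[:per_label])
    return selected

# Toy records (hypothetical): ten specialist labels per utterance.
demo = [
    {"id": "u1", "self": "pleasure", "others": ["pleasure"] * 9 + ["displeasure"]},
    {"id": "u2", "self": "pleasure", "others": ["pleasure"] * 6 + ["displeasure"] * 4},
    {"id": "u3", "self": "pleasure", "others": ["displeasure"] * 8 + ["pleasure"] * 2},
    {"id": "u4", "self": "displeasure", "others": ["displeasure"] * 10},
]
picked = select_samples(demo, per_label=1)
picked_ids = [u["id"] for u in picked]
```

With `per_label=150`, the same function would yield the balanced 300-utterance set, provided enough consistent utterances exist for each label.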

3 Emotion Recognition Method Based on Purely Segment-Level Features

The proposed methodology for emotion recognition is based on purely segment-level speech frames. Two issues must be considered here: the increased number of samples, which raises the computational burden in terms of both memory capacity and execution speed, and the decline in the generalization ability of the classifier. In this work, we quantitatively analyze various analytical schemes related to segment-level emotion recognition, and we propose an automatic approach for decreasing the number of samples in order to reduce the computational complexity and improve the generalization ability of the classifier. The algorithm is illustrated in Fig. 3.

3.1 Segmentation Approach

We propose novel segmentation strategies based on analysis of short time speech segments. The proposed approach is illustrated in Fig. 4.

A classifier is trained by using the information contained in the input feature vectors. In practice, the final uncertainty after training is never exactly zero because the input information is insufficient. In addition, the classifier might be “confused” by ambiguities in the input information. The most obvious remedy is to increase the number of training samples, but this is not desirable in our case because splitting an utterance into segments already increases the number of training samples considerably and imposes a great computational burden. A more efficient way is to find more informative segments by minimizing the amount of mutual information between two feature vectors. In this study, fixed-length segments are constructed at selected positions on the basis of designed indexes. Because a much smaller number of selected segments is taken into consideration, more precise segment labels can be defined. Thus, not all parts of the utterance are used in the analysis. A sliding window with no overlap is adopted to process the utterance signal for calculating the ranking of the fixed-length segments. A correlation coefficient is adopted to obtain a smaller, fixed number of segments from an utterance.
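The non-overlapping sliding window can be sketched as below. The function name, the 16 kHz sampling rate in the demo, and the choice to drop a trailing partial window are assumptions for illustration; the 50 ms default mirrors the segment length reported in the results.

```python
def split_fixed_segments(signal, sample_rate_hz, segment_ms=50):
    """Cut a signal into consecutive fixed-length segments with no overlap.

    A trailing remainder shorter than one segment is dropped (an assumption).
    """
    seg_len = int(sample_rate_hz * segment_ms / 1000)
    if seg_len <= 0:
        raise ValueError("segment length must be at least one sample")
    n_segments = len(signal) // seg_len
    return [signal[i * seg_len:(i + 1) * seg_len] for i in range(n_segments)]

# Toy signal: 2000 samples at an assumed 16 kHz gives 800-sample windows.
demo_signal = list(range(2000))
demo_segments = split_fixed_segments(demo_signal, sample_rate_hz=16000)
```

Each returned segment can then be passed to feature extraction and ranked, so that only a small, fixed number of segments per utterance reaches the classifier.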

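The correlation coefficient [18], which is also known as the Pearson product-moment correlation coefficient, is a measure of the linear dependence between two feature vectors. It is defined as

$$ r = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2}\,\sqrt{\sum_{i=1}^{n} (y_i - \bar{y})^2}} $$

where n is the number of features, x_i and y_i are the i-th components of the two feature vectors, and \bar{x} and \bar{y} are their means.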

The concept of the proposed segmentation methods is illustrated in Fig. 5.
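As a rough illustration of correlation-based segment selection, the sketch below computes the Pearson coefficient between segment feature vectors and greedily keeps segments that are weakly correlated with those already chosen. The text does not specify the exact ranking rule, so the greedy criterion, the starting segment, and the function names are assumptions rather than the authors' algorithm.

```python
import math

def pearson(x, y):
    """Pearson product-moment correlation between two equal-length vectors."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("vectors must have the same length of at least 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # undefined for a constant vector; treated as 0 here (assumption)
    return cov / math.sqrt(var_x * var_y)

def select_low_correlation(features, k):
    """Greedily pick k segment indices with low mutual correlation (assumed rule)."""
    if not features:
        return []
    selected = [0]  # start from the first segment (assumption)
    while len(selected) < min(k, len(features)):
        best_idx, best_score = None, None
        for i in range(len(features)):
            if i in selected:
                continue
            # Redundancy of candidate i: strongest |r| against any chosen segment.
            score = max(abs(pearson(features[i], features[j])) for j in selected)
            if best_score is None or score < best_score:
                best_idx, best_score = i, score
        selected.append(best_idx)
    return sorted(selected)

# Toy feature vectors (hypothetical), one per segment.
demo_features = [[1, 2, 3, 4], [2, 4, 6, 8], [4, 1, 3, 2], [1, 3, 2, 4]]
demo_selected = select_low_correlation(demo_features, k=2)
```

In the toy data, the second vector is a scaled copy of the first (r = 1), so it is treated as redundant and the third vector is kept instead.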

3.2 Feature Extraction

We focused on a set of 162 acoustic features obtained from speech segments: 50 mel-frequency cepstral coefficients (MFCC); 50 linear predictive coefficients (LPC); 10 statistical features (mode, median, mean, range, interquartile range, standard deviation, variation, absolute deviation, skewness, and kurtosis) calculated from each of the five levels of detail wavelet coefficients obtained by the discrete wavelet transform (DWT), giving 50 features; pitch; energy; zero-crossing rate (ZCR); the first seven formants; and the centroid and 95 % roll-off point of the FFT spectrum (50 + 50 + 50 + 3 + 7 + 2 = 162).
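The feature count and a few of the simpler descriptors can be checked with a short sketch. The dictionary keys and function names are hypothetical, "variation" is interpreted here as the population variance (an assumption), and the MFCC, LPC, DWT, pitch, and formant computations are omitted because they require a signal-processing library.

```python
import math
import statistics

# Feature groups as listed in the text; the keys are illustrative names.
FEATURE_COUNTS = {
    "mfcc": 50, "lpc": 50, "dwt_stats": 10 * 5,
    "pitch": 1, "energy": 1, "zcr": 1,
    "formants": 7, "centroid": 1, "rolloff_95": 1,
}

def ten_statistics(values):
    """Ten descriptors for one level of wavelet detail coefficients."""
    n = len(values)
    mean = statistics.fmean(values)
    q1, _, q3 = statistics.quantiles(values, n=4)
    centered = [v - mean for v in values]
    m2 = sum(c ** 2 for c in centered) / n  # population variance
    m3 = sum(c ** 3 for c in centered) / n
    m4 = sum(c ** 4 for c in centered) / n
    return {
        "mode": statistics.mode(values),
        "median": statistics.median(values),
        "mean": mean,
        "range": max(values) - min(values),
        "iqr": q3 - q1,
        "std": math.sqrt(m2),
        "variance": m2,  # "variation" in the text, read as variance (assumption)
        "abs_dev": sum(abs(c) for c in centered) / n,
        "skewness": m3 / m2 ** 1.5 if m2 > 0 else 0.0,
        "kurtosis": m4 / m2 ** 2 if m2 > 0 else 0.0,
    }

def zero_crossing_rate(segment):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum(1 for a, b in zip(segment, segment[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(segment) - 1)

def short_time_energy(segment):
    """Mean squared amplitude of the segment."""
    return sum(s * s for s in segment) / len(segment)

demo_stats = ten_statistics([1.0, 2.0, 2.0, 3.0, 7.0])
total_features = sum(FEATURE_COUNTS.values())
```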

3.3 Decision Model

The label of an utterance is decided on the basis of the labels of its segments, which are predicted by a classifier. We simply use a majority vote, which assigns the utterance the label held by the majority of its segments, so that the evaluation can concentrate on the effectiveness of the proposed segment-level approaches for speech emotion recognition. The decision model is shown in Fig. 6. Our decision model is based on a classifier called the “probabilistic neural network” (PNN) [19]. PNN operations are organized into a multilayered feed-forward network with four layers. The network has many advantages compared with other kinds of artificial neural networks and nonlinear learning algorithms, including a very fast learning speed and a small number of parameters.
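The decision model can be approximated by the following sketch of a PNN with a Gaussian kernel followed by a majority vote over segment predictions. The class and function names, the smoothing parameter `sigma`, the equal-prior averaging, and the toy training points are assumptions for illustration, not the authors' configuration.

```python
import math
from collections import Counter

class ProbabilisticNeuralNetwork:
    """Minimal PNN: input, pattern (kernel), summation, and output layers."""

    def __init__(self, sigma=1.0):
        self.sigma = sigma   # kernel width; the single tunable parameter
        self.patterns = {}   # label -> list of stored training vectors

    def fit(self, vectors, labels):
        # "Training" only stores the samples, which is why learning is fast.
        self.patterns = {}
        for vec, label in zip(vectors, labels):
            self.patterns.setdefault(label, []).append(list(vec))
        return self

    def class_scores(self, x):
        scores = {}
        for label, stored in self.patterns.items():
            total = 0.0
            for p in stored:  # pattern layer: one Gaussian unit per sample
                sq_dist = sum((a - b) ** 2 for a, b in zip(x, p))
                total += math.exp(-sq_dist / (2 * self.sigma ** 2))
            scores[label] = total / len(stored)  # summation layer (equal priors assumed)
        return scores

    def predict(self, x):
        scores = self.class_scores(x)
        return max(scores, key=scores.get)  # output layer: highest class density

def majority_vote(segment_labels):
    """Utterance label = most frequent segment label (ties go to the first seen)."""
    return Counter(segment_labels).most_common(1)[0][0]

def classify_utterance(model, segment_features):
    return majority_vote([model.predict(f) for f in segment_features])

# Toy two-dimensional data (hypothetical).
demo_model = ProbabilisticNeuralNetwork(sigma=1.0).fit(
    [[0, 0], [0, 1], [5, 5], [6, 5]],
    ["pleasure", "pleasure", "displeasure", "displeasure"],
)
demo_segments = [[0.2, 0.1], [0.1, 0.9], [5.5, 5.0]]
demo_label = classify_utterance(demo_model, demo_segments)
```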

4 Results

Tenfold cross validation was used to evaluate and test our proposed approaches and to make comparisons with previous research because it is used in many other emotion recognition studies to validate general models [15]. We reviewed recent research on classifiers and found that the support vector machine (SVM) is one of the most robust and popular classifiers in the field of affective research and that it outperforms many other kinds of classifiers in terms of recognition accuracy [20]. Thus, our evaluation results based on PNN are compared with those based on SVM (Fig. 7). Twenty segments with a length of 50 ms were used for voting in our proposal.
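A tenfold split over the 300 selected utterances might look like the sketch below. Folding at the utterance level, so that all segments of one utterance fall on the same side of the split, is an assumption; the text does not state how the folds were formed. The function name and the fixed seed are also illustrative.

```python
import random

def kfold_indices(n_items, k=10, seed=0):
    """Yield (train, test) index lists for k-fold cross validation."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)      # reproducible shuffle
    folds = [indices[i::k] for i in range(k)]  # k nearly equal folds
    for i in range(k):
        test = sorted(folds[i])
        train = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train, test

# 300 utterances, as in the database described earlier.
demo_splits = list(kfold_indices(300, k=10))
```

Each iteration would train the segment classifier on the segments of the 270 training utterances and apply the vote to the 30 held-out utterances; the reported accuracy is then the average over the ten folds.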

5 Discussion on Segment-Level Features

Previous research has reported on strategies for improving the accuracy of speech emotion recognition by utilizing segment-level features together with global features extracted from utterances. The effectiveness of these strategies has been demonstrated in several reports [14, 15]. This research goes further and develops a new approach that abandons the global utterance features altogether. The analytical results indicate the robustness of this approach, which achieves a higher level of recognition accuracy by using only segment-level features in the proposed decision model. We proposed a segmentation method that adopts a correlation coefficient in order to select an appropriate number of segments within an utterance. As a result, the generated segments carry less redundant information into the decision model, which contributes to a better determination of the utterance label.

We used a 162-dimension feature set for a complete analysis, but one remaining point is that we did not include a feature selection procedure before the segmentation. Instead, the full set of extracted features is used, and the segmentation algorithm is left to decide which segments are more appropriate for representing the utterance labels. This is acceptable given the feature dimension relative to the large number of samples. However, the interaction between the feature selection and segmentation approaches, and its meaning, will be discussed as a future issue.

6 Application for Emotion Strength Analysis

A very interesting potential application is emotion strength analysis by segment-level speech emotion recognition. We use majority voting to decide the utterance label (pleasure or displeasure) with the assumption that the segment label in the majority represents the utterance label. To better understand segment labels, we looked further into the ratio of the predicted segment labels that can represent the strength of an utterance emotion. All speech frames are used for examining all segments in terms of emotions.
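The idea of reading emotion strength from the ratio of predicted segment labels can be sketched as follows. The function name and the toy label sequence are assumptions; the 20-segment count mirrors the number used for voting in the results.

```python
from collections import Counter

def label_ratios(segment_labels):
    """Share of each predicted label among the segments of one utterance."""
    counts = Counter(segment_labels)
    total = len(segment_labels)
    return {label: count / total for label, count in counts.items()}

# Toy predictions for one utterance with 20 segments (hypothetical).
demo_labels = ["pleasure"] * 15 + ["displeasure"] * 5
demo_ratios = label_ratios(demo_labels)
demo_utterance_label = max(demo_ratios, key=demo_ratios.get)
```

The majority label gives the utterance decision, while the size of the majority (0.75 here) is the quantity interpreted as a hint of emotion strength.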

6.1 Experimental Data

The International Affective Picture System (IAPS) [21] is used for evoking emotions with different strengths. The IAPS is an emotion stimulation system built from the results of many emotion experiments and is composed of about 1,000 pictures labeled with a standard scale of valence (pleasure-displeasure) and arousal (exciting-sleepy). Therefore, it meets our requirement for stimulating emotions with different strengths. Figure 8 shows the four kinds of emotion strengths we defined by using the IAPS.

The experimental approach was made up of four parts in accordance with the pleasure and displeasure emotion stimulation, which includes the defined emotion strength (weak and strong). The detailed experimental procedure is shown in Fig. 9. The pictures selected from the IAPS during the stimulation period were projected on a screen to evoke emotions. Then, speech signals were collected when the participants were reading designed scripts with their evoked emotion. The participants were requested to close their eyes to relax during the control time. Seven Japanese males took part in the experiment. Data were collected by using the previously described procedures for estimating four emotional strengths and contained 312 samples including 156 pleasure (78 strong, 78 weak) and 156 displeasure (78 strong, 78 weak) data.

6.2 Results of Emotion Strength Analysis

We statistically analyzed the components of all of the samples. We then visualized the results with a bar chart with a standard deviation to illustrate the correlations between stimulations and emotion components, which were represented by segment-level predictions within an utterance by using the proposed segment-level speech emotion recognition method (Fig. 10).
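The per-condition summary behind such a bar chart can be sketched as below: the pleasure ratio of each utterance is grouped by stimulation condition, and the mean and sample standard deviation are computed per group. The function name, the condition names, and the numbers are toy values for illustration only and are not results from the experiment.

```python
import statistics

def summarize_by_condition(samples):
    """Return {condition: (mean, sample standard deviation)} of the ratios."""
    grouped = {}
    for condition, ratio in samples:
        grouped.setdefault(condition, []).append(ratio)
    return {
        condition: (statistics.mean(values), statistics.stdev(values))
        for condition, values in grouped.items()
    }

# Toy (condition, pleasure ratio) pairs; each condition needs at least two samples.
demo_samples = [
    ("strong pleasure", 0.9), ("strong pleasure", 0.7),
    ("weak pleasure", 0.6), ("weak pleasure", 0.6),
]
demo_summary = summarize_by_condition(demo_samples)
```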

Recognizing the emotion of utterances is one of the more attractive topics in speech analysis for human–computer interaction (HCI), healthcare, etc. However, emotion strength analysis has been an essential but difficult research area. We discussed the potential of using segment-level frames for such analysis. As shown in Fig. 10, the proposed method can indeed reflect the strengths of emotions in utterance clusters over a short period of time. However, it is difficult to apply to a single utterance because the emotional components vary from utterance to utterance. Although additional research is necessary to collect more solid findings on the emotion strength analysis of utterances, segment-level speech emotion analysis offers a new method for better recognizing human emotion strength with machines.

7 Conclusion

An emotion recognition method using short time speech analysis was proposed. To make the proposed method more efficient and accurate, an advanced relative segmentation method was introduced that uses correlation coefficients for fixed-length segment selection, which is essential for realizing the purely segment-level approach. The proposed method increased the accuracy of emotion recognition by more than 20 % compared with the conventional method of using the global features of utterances, which was validated by using a database with speech signals from 50 participants. The proposed method was also shown to be effective in determining the emotion strength of utterances over a period of time. It can provide hints about emotion strength information according to our validation results with the IAPS database.

References

1. Picard RW, Vyzas E, Healey J (2001) Toward machine emotional intelligence: analysis of affective physiological state. IEEE Trans Pattern Anal Mach Intell 23:1175–1191

2. Nicholson J, Takahashi K, Nakatsu R (2000) Emotion recognition in speech using neural networks. Neural Comput Appl 9:290–296

3. Ververidis D, Kotropoulos C (2005) Emotional speech classification using Gaussian mixture models and the sequential floating forward selection algorithm. In: IEEE international conference on multimedia and expo, pp 1500–1503

4. Pierre-Yves O (2003) The production and recognition of emotions in speech: features and algorithms. Int J Hum Comput Stud 59:157–183

5. Kim EH, Hyun KH, Kim SH, Kwak YK (2009) Improved emotion recognition with a novel speaker-independent feature. In: IEEE/ASME transactions on mechatronics, p 14

6. Shan MK, Kuo FF, Chiang MF, Lee SY (2009) Emotion-based music recommendation by affinity discovery from film music. Expert Syst Appl 36:7666–7674

7. Hemenover SH (2003) Individual differences in rate of affect change: studies in affective chronometry. J Pers Soc Psychol 85:121–131

8. Eaton LG, Funder DC (2001) Emotional experience in daily life: valence, variability, and rate of change. Emotion 1:413–421

9. Marsella SC, Gratch J (2009) EMA: a process model of appraisal dynamics. Cogn Syst Res 10:70–90

10. Ye J, Li Y, Wei L, Tang Y, Wang J (2009) The race effect on the emotion-induced gamma oscillation in the EEG. In: 2nd international conference on biomedical engineering and informatics, pp 1–4

11. Lee CM, Narayanan SS (2005) Toward detecting emotions in spoken dialogs. IEEE Trans Speech Audio Process 13:293–303

12. Morrison D, De Silva LC (2007) Voting ensembles for spoken affect classification. J Netw Comput Appl 30:1356–1365

13. Schuller B, Reiter S, Muller R, Al-Hames M, Lang M, Rigoll G (2005) Speaker independent speech emotion recognition by ensemble classification. In: IEEE international conference on multimedia and expo

14. Schuller B, Rigoll G (2006) Timing levels in segment-based speech emotion recognition. In: Proceedings of INTERSPEECH

15. Yeh JH, Pao TL, Lin CY, Tsai YW, Chen YT (2011) Segment-based emotion recognition from continuous Mandarin Chinese speech. Comput Hum Behav 27:1545–1552

16. Vogt T, Andre E (2005) Comparing feature sets for acted and spontaneous speech in view of automatic emotion recognition. In: IEEE international conference on multimedia and expo

17. Shuzo M, Yamamoto T, Shimura M, Monma F, Mitsuyoshi S, Yamada I (2011) Construction of natural voice database for analysis of emotion and feeling. J Inf Process 53:1185–1194

18. Steuer R, Kurths J, Daub CO, Weise J, Selbig J (2002) The mutual information: detecting and evaluating dependencies between variables. Bioinformatics 18(Suppl 2):S231–S240

19. Specht DF (1990) Probabilistic neural networks. Neural Netw 3:109–118

20. Morrison D, Wang R, De Silva LC (2007) Ensemble methods for spoken emotion recognition in call-centres. Speech Commun 49:98–112

21. Lang PJ, Bradley MM, Cuthbert BN (2008) International affective picture system (IAPS): affective ratings of pictures and instruction manual. University of Florida, Gainesville

© 2015 Springer International Publishing Switzerland

About this chapter

Cite this chapter

Zhang, H., Warisawa, S., Yamada, I. (2015). Emotion Recognition Using Short Time Speech Analysis. In: Fukuda, S. (eds) Emotional Engineering (Vol. 3). Springer, Cham. https://doi.org/10.1007/978-3-319-11555-9_7

DOI: https://doi.org/10.1007/978-3-319-11555-9_7

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-11554-2

Online ISBN: 978-3-319-11555-9
