Abstract

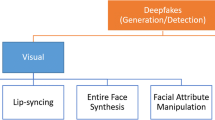

Deepfakes combine the latest in machine learning and Artificial Intelligence (AI) to create hyper-realistic audio-visual forgeries. Deepfakes materialise from a specific type of deep learning (hence the name) in which sets of algorithms compete in a generative adversarial network or GAN (Goodfellow et al. Advances in Neural Information Processing Systems. Cambridge, MA: MIT Press, 2014). Deep learning is a form of AI in which sets of algorithms (or neural networks) learn to simulate rules and replicate patterns by analysing large data sets. In a GAN, the generator algorithm creates content modelled on source material that is already readily available (e.g. social media content), whilst the discriminator algorithm seeks to detect flaws in the fake. This is an iterative process in which each round of detection prompts rapid improvements that address the flaws, meaning the GAN is able to produce highly realistic fake video content. This field of AI continues to advance at a rapid rate, so it is ever easier to create sophisticated and compelling videos. This has sparked concerns among politicians and international organisations about how these types of videos may be weaponised for malicious ends. These fears have fed into the post-truth era of the second decade of the twenty-first century, in which social media and information platforms have become increasingly uncanny spaces, creating “abtruth” environments that often toxify individual and collective memory to destabilise the idea of provable facts and the nature of the “real” world.
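
For readers who want the generator/discriminator contest in formal terms, it is commonly written as the minimax objective given in Goodfellow et al. (2014). The notation below is that paper's, not this chapter's, and is offered only as a compact illustration of the adversarial process the abstract describes:

$$
\min_{G} \max_{D} V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_{z}(z)}\left[\log\left(1 - D(G(z))\right)\right]
$$

Here $G(z)$ is the content the generator produces from random noise $z$, and $D(x)$ is the discriminator's estimate of the probability that $x$ is real rather than generated. The discriminator is trained to increase $V$ (catching fakes), while the generator is trained to decrease it (producing fakes the discriminator accepts).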

Works Cited

Agarwal, Shruti, Hany Farid, Yuming Gu, Mingming He, Koki Nagano, and Hao Li. 2019. “Protecting World Leaders Against Deep Fakes.” In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, 38–45.

Ajder, Henry, Giorgio Patrini, Francesco Cavalli, and Laurence Cullen. 2019. The State of Deepfakes: Landscape, Threats and Impact. Amsterdam: Deeptrace.

Appel, Markus, and Fabian Prietzel. 2022. “The Detection of Political Deepfakes.” Journal of Computer-Mediated Communication 27 (4): 1–13.

Ayers, Drew. 2021. “The Limits of Transactional Identity: Whiteness and Embodiment in Digital Facial Replacement.” Convergence: The International Journal of Research into New Media Technologies 27 (4): 1018–37.

Bessi, Alessandro, and Emilio Ferrara. 2016. “Social Bots Distort the 2016 US Presidential Election Online Discussion.” First Monday 21 (11), November 7. https://firstmonday.org/ojs/index.php/fm/article/view/7090/5653. Accessed 6 June 2023.

Bianchi, Federico, Pratyusha Kalluri, Esin Durmus, Faisal Ladhak, Myra Cheng, Debora Nozza, Tatsunori Hashimoto, Dan Jurafsky, James Zou, and Aylin Caliskan. 2022. “Easily Accessible Text-to-Image Generation Amplifies Demographic Stereotypes at Large Scale.” In Computer Science. November 11, 1–17.

Bode, Lisa, Dominic Lees, and Dan Golding. 2021. “The Digital Face and Deepfakes on Screen.” Convergence: The International Journal of Research into New Media Technologies 27 (4): 849–54.

Cameron, Allan. 2021. “Dimensions of the Digital Face: Flatness, Contour and the Grid.” Convergence: The International Journal of Research into New Media Technologies 27 (4): 868–81.

Chavoshi, Nikan, Hossein Hamooni, and Abdullah Mueen. 2016. “DeBot: Twitter Bot Detection Via Warped Correlation.” In IEEE 16th International Conference on Data Mining (ICDM), 817–22. Barcelona, Spain.

Chesney, Robert, and Danielle Citron. 2019. “Deep Fakes: A Looming Challenge for Privacy, Democracy, and National Security.” California Law Review 107: 1753–1820.

Choi, Yunjey, Minje Choi, Munyoung Kim, Jung-Woo Ha, Sunghun Kim, and Jaegul Choo. 2018. “StarGAN: Unified Generative Adversarial Networks for Multi-Domain Image-to-Image Translation.” In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). Salt Lake City, Utah, June 18–23. https://ieeexplore.ieee.org/document/8579014. Accessed 28 July 2023.

Coats, D. R. 2019. “Worldwide Threat Assessment of the US Intelligence Community.” Senate Select Committee on Intelligence. Office of the Director of National Intelligence. https://www.dni.gov/index.php/newsroom/congressional-testimonies/congressional-testimonies-2019/item/1947-statement-for-the-record-worldwide-threat-assessment-of-the-us-intelligence-community. Accessed 28 July 2023.

Cook, Jesselyn. 2019. “Here’s What It’s Like to See Yourself in a Deepfake Porn Video.” In Huffington Post. https://www.huffingtonpost.co.uk/entry/deepfake-porn-heres-what-its-like-to-see-yourself_n_5d0d0faee4b0a3941861fced. Accessed 28 July 2023.

Dahlgren, Peter. 2018. “Media, Knowledge and Trust: The Deepening Epistemic Crisis of Democracy.” Javnost—The Public 25 (1): 20–27.

Diakopoulos, Nicholas. 2019. Automating the News: How Algorithms Are Rewriting the Media. Cambridge, MA: Harvard University Press.

Diakopoulos, Nicholas, and Deborah Johnson. 2021. “Anticipating and Addressing the Ethical Implications of Deepfakes in the Context of Elections.” New Media & Society 23 (7): 2072–98.

Dobber, Tom, Nadia Metoui, Damien Trilling, Natali Helberger, and Claes de Vreese. 2021. “Do (Microtargeted) Deepfakes Have Real Effects on Political Attitudes?” The International Journal of Press/Politics 26 (1): 69–91.

Domke, David, Dhavan V. Shah, and Daniel B. Wackman. 1998. “Media Priming Effects: Accessibility, Association, and Activation.” International Journal of Public Opinion Research 10 (1): 51–74.

Fallis, Don. 2021. “The Epistemic Threat of Deepfakes.” Philosophy & Technology 34 (4): 623–43.

Freedman, Des. 2018. “Populism and Media Policy Failure.” European Journal of Communication 33 (6): 604–18.

Future Advocacy. N.D. https://futureadvocacy.com/. Accessed 28 July 2023.

Goldberg, Matthew, Sander van der Linden, Matthew T. Ballew, Seth A. Rosenthal, Abel Gustafson, and Anthony Leiserowitz. 2019. “The Experience of Consensus: Video as an Effective Medium to Communicate Scientific Agreement on Climate Change.” Science Communication 41 (5): 659–73.

Golding, Dan. 2021. “The Memory of Perfection: Digital Faces and Nostalgic Franchise Cinema.” Convergence: The International Journal of Research into New Media Technologies 27 (4): 855–67.

Goodfellow, Ian, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. 2014. “Generative Adversarial Nets.” Advances in Neural Information Processing Systems 27, edited by Z. Ghahramani, M. Welling, C. Cortes, N. D. Lawrence, and K. Q. Weinberger, 2672–80. Cambridge, MA: MIT Press.

Harjuniemi, Timo. 2021. “Post-truth, Fake News and the Liberal ‘Regime of Truth’: The Double Movement Between Lippmann and Hayek.” European Journal of Communication 37 (3): 269–83.

Harris, Douglas. 2019. “Deepfakes: False Pornography Is Here and the Law Cannot Protect You.” Duke Law & Technology Review 17 (1): 99–128.

Hurley, Kelly. 1996. The Gothic Body: Sexuality, Materialism, and Degeneration at the Fin de Siècle. Cambridge: Cambridge University Press.

Karras, Tero, Timo Aila, Samuli Laine, and Jaakko Lehtinen. 2018. “Progressive Growing of GANs for Improved Quality, Stability, and Variation.” In Proceedings of the International Conference on Learning Representations (ICLR). https://arxiv.org/abs/1710.10196. Accessed 28 July 2023.

Kats, Daniel. 2020. “Identifying Sockpuppet Accounts on Social Media Platforms.” Norton Labs. https://www.nortonlifelock.com/blogs/norton-labs/identifying-sockpuppet-accounts-social-media. Accessed 28 July 2023.

Kietzmann, J., A. J. Mills, and K. Plangger. 2020. “Deepfakes: Perspectives on the Future ‘Reality’ of Advertising and Branding.” International Journal of Advertising 40 (3): 473–85.

Kim, Hyeongwoo, Pablo Garrido, Ayush Tewari, Weipeng Xu, Justus Thies, Matthias Nießner, Patrick Pérez, Christian Richardt, Michael Zollhöfer, and Christian Theobalt. 2018. “Deep Video Portraits.” ACM Transactions on Graphics (TOG) 37: 1–14.

Köbis, Nils, Barbora Doležalová, and Ivan Soraperra. 2021. “Fooled Twice: People Cannot Detect Deepfakes But Think They Can.” iScience 24 (11): n.p.

Kovic, Marko, Adrian Rauchfleisch, Marc Sele, and Christian Caspar. 2018. “Digital Astroturfing in Politics: Definition, Typology, and Countermeasures.” Studies in Communication Sciences 18 (1): 69–85.

Lefevere, Jonas, Knut De Swert, and Stefaan Walgrave. 2021. “Effects of Popular Exemplars in Television News.” Communication Research 39 (1): 103–19.

Levin, Sam. 2017. “Google ‘Segregates’ Women into Lower-paying Jobs, Stifling Careers, Lawsuit Says.” In The Guardian, September 14. https://www.theguardian.com/technology/2017/sep/14/google-women-promotions-lower-paying-jobs-lawsuit. Accessed 28 July 2023.

Levy, Susan. 2018. “Gender Bias in Artificial Intelligence: The Need for Diversity and Gender Theory in Machine Learning.” In International Conference on Software Engineering, 14–16.

Mihailova, Mihaela. 2021. “To Dally with Dalí: Deepfake (Inter)faces in the Art Museum.” Convergence: The International Journal of Research into New Media Technologies 27 (4): 882–98.

Morstatter, Fred, Do Own (Donna) Kim, Natalie Jonckheere, Calvin Liu, Malilka Seth, and Dmitri Williams. 2021. “I’ll Play on My Other Account: The Network and Behavioural Differences of Sybils.” Proceedings of the ACM on Human-Computer Interaction 5, Chi Play: 1–18.

Mourão, R. R., and C. T. Robertson. 2019. “Fake News as Discursive Integration: An Analysis of Sites That Publish False, Misleading, Hyperpartisan and Sensational Information.” Journalism Studies 20 (14): 2077–95.

Pennycook, Gordon, Robert M. Ross, Derek J. Koehler, and Jonathan A. Fugelsang. 2016. “Atheists and Agnostics Are More Reflective Than Religious Believers: Four Empirical Studies and a Meta-Analysis.” PLoS ONE 11: 1–18.

Petty, Richard E., John T. Cacioppo, and David Schumann. 1983. “Central and Peripheral Routes in Advertising Effectiveness: The Moderating Role of Involvement.” Journal of Consumer Research 10 (2): 135–46.

Pickard, Victor. 2018. “When Commercialism Trumps Democracy: Media Pathologies and the Rise of Misinformation Society.” Trump and the Media, edited by Pablo J. Boczkowski and Zizi Papacharissi, 195–201. Cambridge, MA: MIT Press.

Satter, Raphael. 2020. “Deepfake Used to Attack Activist Couple Shows New Disinformation Frontier.” Reuters, July 15. https://www.reuters.com/article/us-cyber-deepfake-activist/deepfake-used-to-attack-activist-couple-shows-new-disinformation-frontier-idUSKCN24G15. Accessed 28 July 2023.

Taylor, Shelley E., and Suzanne C. Thompson. 1982. “Stalking the Elusive ‘Vividness’ Effect.” Psychological Review 89 (2): 155–81.

Tormala, Zakary L., Richard E. Petty, and Pablo Briñol. 2002. “Ease of Retrieval Effects in Persuasion: A Self-validation Analysis.” Personality and Social Psychology Bulletin 28 (12): 1700–12.

Turner, Graeme. 2010. “Redefining Journalism: Citizen Journalism, Blogs and the Rise of Opinion.” Ordinary People and the Media, 71–97. London: Sage.

Vosoughi, Soroush, Deb Roy, and Sinan Aral. 2018. “The Spread of True and False News Online.” Science 359 (6380): 1146–51.

Waisbord, Silvio. 2018. “Truth Is What Happens to News: On Journalism, Fake News and Post-truth.” Journalism Studies 19 (13): 1866–78.

Williams, Apryl. 2020. “Black Memes Matter: #LivingWhileBlack With Becky and Karen.” Social Media and Society 6 (4): n.p.

Yang, Zhi, Christo Wilson, Xiao Wang, Tingting Gao, Ben Zhao, and Yafei Dai. 2014. “Uncovering Social Network Sybils in the Wild.” ACM Transactions on Knowledge Discovery from Data 8 (1): 1–29.

Yoon, InJeong. 2016. “Why Is It Not Just a Joke? Analysis of Internet Memes Associated with Racism and Hidden Ideology of Colorblindness.” Journal of Cultural Research in Art Education 33: 92–123.

Zelenkauskaite, Asta, and Marcello Balduccini. 2017. “‘Information Warfare’ and Online News Commenting: Analyzing Forces of Social Influence Through Location-based Commenting User Typology.” Social Media and Society 3 (3): 1–13.

Zerback, Thomas, Florian Töpfl, and Maria Knöpfle. 2021. “The Disconcerting Potential of Online Disinformation: Persuasive Effects of Astroturfing Comments and Three Strategies for Inoculation Against Them.” New Media & Society 25 (5): 1080–98.

Zillmann, Dolf. 1999. “Exemplification Theory: Judging the Whole by Some of Its Parts.” Media Psychology 1 (1): 69–94.

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this chapter

Cite this chapter

Morris, K.W. (2024). Deepfake Sockpuppets: The Toxic “Realities” of a Weaponised Internet. In: Bacon, S., Bronk-Bacon, K. (eds) Gothic Nostalgia. Palgrave Gothic. Palgrave Macmillan, Cham. https://doi.org/10.1007/978-3-031-43852-3_5

DOI: https://doi.org/10.1007/978-3-031-43852-3_5

Publisher Name: Palgrave Macmillan, Cham

Print ISBN: 978-3-031-43851-6

Online ISBN: 978-3-031-43852-3

eBook Packages: Literature, Cultural and Media Studies (R0)