Abstract

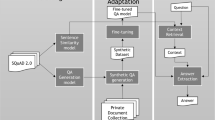

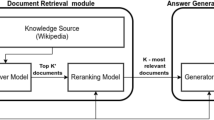

In this paper, we propose a new framework called Document-Retrieval Question Generation (DR.QG). The goal of DR.QG is to generate a question corresponding to a given answer. Unlike the common question generation setting, which takes both a context passage and an answer as input, DR.QG takes only the answer. To achieve this goal, we explore the possibility of incorporating document retrieval. We demonstrate the feasibility of DR.QG by evaluating its effect on the Question Answering (QA) task. The results show that our method improves QA performance by up to 13%. Furthermore, we simulate a closed-domain setting on an open-domain dataset and show a performance improvement of 3%.
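The answer-only setting described above can be illustrated with a minimal sketch. The abstract does not specify the retriever, the generator, or the input format, so everything below is an assumption made for illustration: the token-overlap scorer stands in for whatever document retrieval the authors use, and the hypothetical `build_qg_input` helper only assembles the (answer, context) string that a conventional question generation model would then consume. No question generation model is implemented here.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_score(query, passage):
    """Count tokens shared by query and passage (assumed toy scorer)."""
    q = Counter(tokenize(query))
    p = Counter(tokenize(passage))
    return sum(min(q[t], p[t]) for t in q)


def retrieve(answer, corpus, k=1):
    """Return the k passages that best match the answer string."""
    ranked = sorted(corpus, key=lambda p: overlap_score(answer, p), reverse=True)
    return ranked[:k]


def build_qg_input(answer, corpus, k=1):
    """Pair the answer with retrieved context (hypothetical input format)."""
    context = " ".join(retrieve(answer, corpus, k))
    return f"answer: {answer} context: {context}"


# Toy corpus, invented for this example only.
corpus = [
    "The Eiffel Tower was completed in 1889 in Paris.",
    "Mount Everest is the highest mountain above sea level.",
    "Python was created by Guido van Rossum.",
]

qg_input = build_qg_input("Guido van Rossum", corpus)
print(qg_input)
```

In this sketch the retrieval step recovers the context passage that the common question generation setting would receive as input, so the downstream generator can stay unchanged. A real system would likely replace the overlap scorer with a stronger retriever.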

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Tong, ZW., Fan, YC., Leu, FY. (2023). DR.QG: Enhancing Closed-Domain Question Answering via Retrieving Documents for Question Generation. In: Barolli, L. (eds) Innovative Mobile and Internet Services in Ubiquitous Computing. IMIS 2023. Lecture Notes on Data Engineering and Communications Technologies, vol 177. Springer, Cham. https://doi.org/10.1007/978-3-031-35836-4_31

DOI: https://doi.org/10.1007/978-3-031-35836-4_31

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-35835-7

Online ISBN: 978-3-031-35836-4

eBook Packages: Intelligent Technologies and Robotics (R0)