Abstract

Controllable image synthesis with user scribbles is a topic of keen interest in the computer vision community. In this paper, for the first time we study the problem of photorealistic image synthesis from incomplete and primitive human paintings. In particular, we propose a novel approach paint2pix, which learns to predict (and adapt) “what a user wants to draw” from rudimentary brushstroke inputs, by learning a mapping from the manifold of incomplete human paintings to their realistic renderings. When used in conjunction with recent works in autonomous painting agents, we show that paint2pix can be used for progressive image synthesis from scratch. During this process, paint2pix allows a novice user to progressively synthesize the desired image output, while requiring just few coarse user scribbles to accurately steer the trajectory of the synthesis process. Furthermore, we find that our approach also forms a surprisingly convenient approach for real image editing, and allows the user to perform a diverse range of custom fine-grained edits through the addition of only a few well-placed brushstrokes. Source code and demo is available at https://github.com/1jsingh/paint2pix.

Access provided by Autonomous University of Puebla. Download conference paper PDF

Similar content being viewed by others

1 Introduction

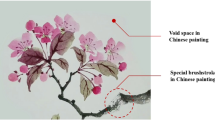

The human painting process represents a powerful mechanism for the expression of our inner visualizations. However, accurate depiction of the same is often quite time consuming and limited to those with sufficient artistic skill. Conditional image synthesis provides a popular solution to this problem, and simplifies output image synthesis based on higher-level input modalities (segmentation, sketch) which can be easily expressed using coarse user scribbles. For instance, segmentation based image generation methods [8, 9, 27, 40] allow for control over output image attributes based on user-editable semantic segmentation maps. However, they have obvious disadvantage of requiring large-scale dense semantic segmentation annotations for training, which makes them not easily scalable to new domains. Unsupervised sketch based image synthesis has also been explored [6, 12, 24], but they do not provide control over non-edge image areas.

Overview. We propose paint2pix which helps the user directly express his/her ideas in visual form by learning to predict user-intention from a few rudimentary brushstrokes. The proposed approach can be used for (a) synthesizing a desired image output directly from scratch wherein it allows the user to control the overall synthesis trajectory using just few coarse brushstrokes (blue arrows) at key points, or, (b) performing a diverse range of custom edits directly on real image inputs. Best viewed zoom-in. (Color figure online)

In this paper, we explore the use of another modality in this direction, by studying the problem of photorealistic image synthesis from incomplete and primitive human paintings. This is motivated from the observation that when constrained to a particular domain (e.g., faces), a lot of information about the final image output can be inferred from fairly rudimentary and partially drawn human paintings. We thus propose a novel approach paint2pix, which learns to predict (and adapt) “what the user intends to draw” from rudimentary brushstroke inputs, by learning a mapping from the manifold of incomplete human paintings to their realistic renderings. However, learning the manifold of incomplete human paintings is challenging as it would require extensive collection of human painting trajectories for each target domain. For this challenge, we show that a fair approximation of this manifold can still be obtained by using painting trajectories from recent works on autonomous human-like painting agents [31].

While predicting photo-realistic outputs from partially drawn paintings might be helpful for capturing certain parts of a user’s visualization (e.g., face shape, hairstyle), fine grain control over different image attributes might be missing. In order to address this need for fine-grained control, we introduce an interactive synthesis strategy, wherein paint2pix when used in conjunction with an autonomous painting agent, allows a novice user to progressively synthesize and refine the desired image output using just few rudimentary brushstrokes. The overall image synthesis (refer Fig. 1a) is performed in a progressive fashion wherein paint2pix and the autonomous painting agent are used in successive steps. Starting with an empty canvas, the user begins by making few rudimentary strokes (e.g., describing face shape, color) to obtain an initial user-intention prediction (through paint2pix). The painting agent then uses this prediction to paint until a user-controlled timestep, at which point, the user again provides a coarse brushstroke input (e.g., describing finer details like hair color) to change the trajectory of the synthesis process. By iterating between these steps till the end of painting trajectory, the human artist is able to gain significant control over final image contents whilst requiring to input only few coarse scribbles (blue arrows in Fig. 1a) at key points of the autonomous painting process.

In addition to progressive image synthesis, the proposed approach can also be used to perform fine-grained editing on real-images (Fig. 1b). As compared with previous latent space manipulation methods [3, 28, 30], we find that our approach forms a surprisingly convenient alternative for making a diverse range of custom fine-grained modifications through the use of a few user scribbles. Furthermore, we show that once the user is satisfied with a custom edit on one image (e.g., adding smile, changing makeup), the same edit can then be transferred to another image in a semantically-consistent manner (refer Sect. 6).

To summarize, the main contributions of this paper are 1) We introduce a novel task of photorealistic image synthesis from incomplete and primitive human paintings. 2) We propose paint2pix which learns to predict (and adapt) “what a user wants to ultimately draw” from rudimentary brushstroke inputs. 3) We finally demonstrate the efficacy of our approach for (a) progressively synthesizing an output image from scratch, and, (b) performing a diverse range of custom edits directly on real image inputs.

2 Related Work

Autonomous Painting Agents. In recent years, substantial research efforts [15, 19, 25, 31, 32, 35, 41] have been focused on developing autonoumous painting agents which can learn an unsupervised stroke decomposition for the recreation of a given target image. Despite their efficacy, previous works in this area are often limited to the non-photorealistic recreation of a provided target image. This assumes that the user already has a fixed reference image that he/she wants to recreate. However, in practical applications the intended image output may not be available and has to be synthesized in a progressive fashion. Our work thus proposes to develop a new application for autonomous painting agents by predicting user-intention from incomplete canvas frames.

Segmentation Based Image Generation. Image to image translation frameworks have been extensively studied for controllable generation of highly realistic image outputs based on a more simplified image representation. For instance, [11, 16, 21, 26, 27, 33, 40] use conditional generative adversarial networks for controllable image synthesis using user-provided semantic segmentation maps. While effective, these works require large-scale semantic segmentation annotations for training, which limits their scalability to new domains. Furthermore, making fine-grained changes within each semantic contour after image synthesis is non-trivial and often relies on style encoding methods [9, 40], which require the user to first find a set of reference images which best describe the nature of each intended change (e.g. adding makeup or changing hair style for facial images). In contrast, our work allows for a range of custom fine-grained image editions through the addition of just few well placed brush strokes.

Sketch based image generation has also been explored [5, 6, 22,23,24, 36, 37]. For instance, Ghosh et al. [12] predict possible image outputs from rudimentary sketches of simple objects. While effective in controlling initial aspects of the image output, the use of sketches (compared to paintings) is less effective as it offers limited control and sensitivity to changes made in non-edge areas.

GAN Inversion. Interactive image generation and editing with user scribbles has also been explored in the context of GAN-inversion [1, 2, 39]. Zhu et al. [39] propose a hybrid optimization approach for projecting user-given strokes onto the natural image manifold. Similarly, [1, 2] use GAN-inversion to perform local image edits with user scribbles. While effective for small-scale photorealistic manipulations, these methods often lack means to learn the distribution of user-inputs (manifold of rudimentary paintings in our case) and thus are limited to performing a pure color-based optimization. As shown in Sect. 5, this leads to poor performance on from-scratch synthesis and semantic edits on real images.

Positioning Our Work. Table 1 summarizes the positioning of our approach with respect to previous methods performing controllable image synthesis using user-given brushstrokes/scribbles. In particular, we posit the comparative benefits of our approach with respect to the following desirable properties.

-

Image synthesis from scratch. While paint2pix, segmentation and sketch based methods allow for direct synthesis of the primary image from scratch, GAN-inversion methods perform a more color-based optimization and thereby show poor performance on image synthesis from scratch (Sect. 5.1).

-

Responsiveness (control) over all image areas. Due to the one-to-many nature of learned mappings, segmentation based methods fail to provide fine-grained control over attributes within each semantic region. Similarly, sketch-based methods lack sensitivity to changes in non-edge areas.

-

Usability by novice artists. A key advantage of our method is that it allows a novice artist to control the synthesis process while using fairly rudimentary brushstrokes. In contrast, GAN-inversion based methods require the user to make sufficiently detailed strokes in order to preserve closeness to the real image manifold (refer Sect. 5.2 for more details).

-

Data efficiency. Our method is largely self-supervised and uses [31] to approximate the manifold of incomplete human paintings. In contrast, segmentation based methods require large-scale dense semantic maps for training on each target domain, which limits their scalability.

Model Overview. The paint2pix model helps simplify the image synthesis task by predicting user-intention from rudimentary canvas state \(C_t\), while also allowing the user to accurately steer the synthesis trajectory using coarse brushstroke inputs in \(C_{t+1}\). This is done in two steps. First, the canvas encoder \(\mathbf {E_1}\) learns a mapping between the manifold of incomplete paintings and real images to predict realistic user-intention predictions \(\{y_t,y_{t+1}\}\) from \(\{C_t,C_{t+1}\}\) respectively. These intermediate predictions are then fed into a second identity encoder \(\textbf{E}_2\) to predict a latent-space correctional term \(\varDelta _t\), which ensures that the final prediction \(\tilde{y}_{t+1}\) preserves the identity of the prediction from the original canvas \(C_t\), while at the same time incorporating changes made by the user input brushstrokes in \(C_{t+1}\). The progressive synthesis process can then be continued by feeding final prediction \(\tilde{y}_{t+1}\) to an autonomous painting agent which paints it till a user-controlled timestep, at which point, the user can again add coarse brushstroke inputs in order to better express her inner ideas in the final image output.

3 Our Method

The paint2pix model uses a two-step decoupled encoder-decoder architecture (refer Fig. 2) for predicting user intention from incomplete user paintings.

3.1 Canvas Encoding Stage

The goal of the canvas encoding stage is two-fold: 1) predict user-intention by learning a mapping between the manifold of incomplete user paintings to their realistic output renderings, while at the same time 2) allow for modification in the progressive synthesis trajectory based on coarse user-brushstrokes.

In particular, given current canvas state \(C_t\) and the updated canvas state after coarse user-brushstroke input \(C_{t+1}\), we first use a canvas encoder \(\textbf{E}_1\) to predict a tuple of initial latent vector predictions \(\{\textbf{w}_t, \textbf{w}_{t+1}\}\) as,

These latent predictions are then fed into a StyleGAN [18] decoder network \(\textbf{G}\), in order to get realistic user-intention predictions \(\{y_t,y_{t+1}\}\) corresponding to input canvas tuple \(\{C_t,C_{t+1}\}\) respectively, i.e. \(\textbf{y}_t, \textbf{y}_{t+1} = G(\textbf{w}_t), G(\textbf{w}_{t+1}).\)

Losses. Given realistic output ground-truth annotation \(\hat{y}_t\) corresponding to canvas \(C_t\) (refer Sect. 3.3), the canvas encoder \(\textbf{E}_1\) is trained to learn to predict user-intention with the following prediction loss \(\mathcal {L}_{pred}\),

where \(\mathcal {L}_{lpips}\) is the perceptual similarity loss [38] and \(\mathcal {L}_{id}\) represents the Arcface [10] / MoCo-v2 [7] features based identity similarity loss from Tov et al. [34].

As previously mentioned, we would also like to ensure that the output predictions are modified in order to reflect the changes added by the user in \(C_{t+1}\). This is then achieved by the following edition loss \(\mathcal {L}_{edit}\),

where \(\varDelta C_t = C_{t+1} - C_{t}\) and \(\varDelta y_t = y_{t+1} - y_{t}\) represent the changes in the original canvas and output predictions respectively. \(\mathcal {L}_{adv}\) refers to the latent discriminator loss from e4e [34] to ensure realism of the latent space prediction. Finally, the last term ensures that the codes \(\{w_{t},w_{t+1}\}\) for consecutive image outputs \(\{y_{t},y_{t+1}\}\) lie close in the StyleGAN [18] latent space.

3.2 Identity Embedding Stage

While enforcing closeness of consecutive latent vector codes \(\{w_{t},w_{t+1}\}\) (Eq. 3), helps in ensuring that the updated output prediction \(y_{t+1}\) is derived from the original prediction \(y_t\), inconsistencies might still arise due to subtle changes in the identity of the underlying prediction (Fig. 2). Thus, the goal of the second stage is to preserve the underlying identity between consecutive image predictions and thereby ensure semantic consistency of the overall image synthesis process.

To address this, we train a second identity encoder \(\textbf{E}_2\) which ensures that the final prediction \(\tilde{y}_{t+1}\) preserves identity of the original prediction \(y_t\) while still reflecting the changes made by the user in canvas \(C_{t+1}\). In particular, given output image predictions \(\{y_t, y_{t+1}\}\) from the canvas encoding stage, the identity encoder \(\textbf{E}_2\) predicts a correctional term \(\varDelta _t\) to update the latent codes as,

The updated latent code \(\tilde{w}_{t+1}\) is then used to predict the final output prediction \(\tilde{y}_{t+1}\) using the StyleGAN [18] decoder \(\textbf{G}\) as,

Losses. The identity encoder is trained using the following loss,

where the first three terms ensure the preservation of edits made by the user in \(C_{t+1}\), while the last term enforces that the final prediction \(\tilde{y}_{t+1}\) preserves the identity of the original image prediction \(y_{t}\), thereby ensuring consistency of the overall progressive synthesis process.

Reason for Decoupled Encoders. While its feasible to design a model architecture wherein both \(\mathcal {L}_{canvas}\) and \(\mathcal {L}_{embed}\) are applied using a single encoder, the use of a decoupled identity encoder offers several practical advantages. For instance, while ensuring identity consistency is usually important (e.g., making fine-grained changes), a change in underlying identity might sometimes be actually desirable, especially at the beginning of the progressive synthesis process. The decoupling of canvas encoding and identity embedding stage is therefore useful, as it allows the user to apply identity correction depending on the nature of the intended change. Furthermore, as shown in Sect. 7, decoupling the two stages allows our model to perform multi-modal synthesis without requiring any special architecture for producing multiple output predictions.

3.3 Overall Training

Total Loss. The overall paint2pix model is trained using a combination of canvas-encoding \(\{\mathcal {L}_{pred},\mathcal {L}_{edit}\}\) and identity embedding \(\mathcal {L}_{embed}\) losses,

Ground Truth Painting Annotations. As discussed before, a key requirement of our approach is the ability to learn a mapping between the manifold of incomplete human-user paintings to their ideal realistic outputs. This requirement is challenging as it would need large-scale collection of human painting trajectories for each target domain, making our method intractable for most practical applications. To address this, we propose to instead use the recent works on autonomous painting agents for obtaining a decent approximation for the manifold of incomplete user paintings. The accuracy of such an approximation would depend highly on the domain gap between the incomplete paintings made by human users as compared to those made by a painting agent. We reduce this domain gap by using the recently proposed Intelli-paint [31] method, which has been shown to generate intermediate canvas frames which are more intelligible to actual human artists as opposed to previous works [15, 25, 32, 41].

In particular, for each painting trajectory trying to recreate a given target image \(I_{target} \in \mathcal {D}\) (\(\mathcal {D}\) is input domain, e.g., FFHQ [17] for faces), we collect input canvas annotations by uniformly sampling 20 tuples of consecutive canvas frames \(\{C_t,C_{t+1}\}\) observed during the painting process. The output image annotation \(\hat{y}_t\) for all sampled canvas tuples (from the same trajectory) is then set to the original target image \(I_{target}\). Furthermore, we collect painting annotations under various brushstroke counts \(N_{strokes} \in [200, 500]\), as it helps capture the diverse degrees of abstraction observed in paintings made by actual human artists.

4 Paint2pix for Progressive Image Synthesis

Figure 3 demonstrates the use of paint2pix for progressive image synthesis from scratch. A potential user would start the painting process by adding a few rudimentary brushstrokes on the canvas (e.g., background scene for cars or face shape, color for faces). The paint2pix network then outputs a set of possible realistic image renderings (refer Sect. 7 for more details on multi-modal synthesis) that the user might be interested in drawing. The user may then select the image that most closely resembles his/her idea to obtain a user-intention prediction. The progressive synthesis process can then be continued by feeding this prediction to an autonomous painting agent which paints it till a user-controlled timestep, at which point, the user can again add coarse scribbles (e.g., describing finer details like sky color for cars or hairstyle for faces) in order to steer the synthesis trajectory according to his/her ideas. By continuing this iterative process till the end of the painting process, a novice user can gain significant control over the final image contents while requiring to only input few rudimentary brushstrokes at key points in the autonomous painting trajectory.

5 Comparison with Inversion Methods

Interactive image generation and editing with user brushstrokes has also been explored in the context of GAN-inversion methods [1, 2, 39], which use an encoder or optimization based inversion approach in order to project user scribbles onto the real image manifold. In this section, we present extensive quantitative and qualitative results comparing our approach with existing GAN-inversion methods for image manipulation with user-scribbles. In particular, we demonstrate the efficacy of our approach in terms of both 1) from scratch synthesis: i.e., predicting user intention from fairly rudimentary paintings (Sect. 5.1), and 2) real image editing: allowing a potential user to make a range of custom fine-grain edits directly by just using a few coarse input brushstrokes (Sect. 5.2).

Baselines. We compare our results with recent state-of-the-art encoder based methods from Restyle [4], e4e [34] and pSp [29]. In addition, we report results for optimization based encoding approach from Karras et al. [18] and hybrid strategy from Zhu et al. [39]. Please note that in order to get best output quality, results for [39] are reported while using a pretrained ReStyle [4] encoder.

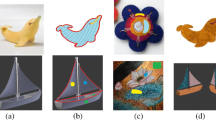

5.1 Predicting User-Intention from Rudimentary Paintings

Qualitative Results. Figure 4a shows qualitative comparisons while predicting photo-realistic (user-intention) outputs from rudimentary paintings. We clearly see that our approach results in much more photorealistic predictions for the user-intended final output. In contrast, the predominantly color-based optimization nature of previous GAN-inversion works leads to non-photorealistic projections when all color details are yet to be added by the user. For instance, while drawing a human face, it is quite common for an artist to first draw a coarse brushstroke for the face region without adding in the finer facial details. However, this leads to poor performance while using color-based optimization as it leads the model to instead predict an output face where the finer facial details are hardly noticeable. Adversarial loss in e4e [29] helps improve the realism of output images but it still performs worse than paint2pix for this task.

Quantitative Results. We also report quantitative results for this task (and image editing tasks from Sect. 5.2) in Table 2. Results are reported in terms of the Fréchet inception distance (FID) [14], which is used capture the output image quality from different methods. Furthermore, we perform a human user-study (details in supp. material) and report the percentage of human users which prefer our method as opposed to competing works. As shown in Table 2, we observe that paint2pix produces better quality images (lower FID scores) and is preferred by majority of human users over competing methods.

5.2 Real-Image Editing

In addition to being able to perform progressive synthesis from scratch, paint2pix also offers a surprisingly convenient approach for making a diverse range of custom semantic edits (e.g., add smile for faces) on real images by simply initializing the canvas input \(C_t\) with a real image. We next compare our method with previous GAN-inversion works on performing real image editing with user scribbles.

Semantic Image Edits. As shown in Fig. 4b, we observe that our approach performs much better when the nature of the underlying edit is not purely color-based. For instance, consider the first example from Fig. 4b. Our method is able to correctly interpret that coarse white brushstrokes near the mouth region implies that the user is trying to add smile to the underlying facial image. In contrast, due to the predominantly color-based-optimization, gan-inversion methods fail to understand the change in semantics of face, and thus predict output faces in which the mouth region has been artificially-colored white.

Color-Based Custom Edits. Even when the custom-edits are color-based, we show that paint2pix leads to outputs which are 1) more photorealistic, 2) exhibit a greater level of detail at the edit locations, 3) modify non-edit locations (in addition to edit locations) in order to maintain coherence of the resulting image and 4) better preserve the identity of the original image input.

Results are shown in Fig. 4c. Consider the first example (row-1). The increased realism of paint2pix outputs can be clearly seen by the more photorealistic and detailed representation at edit locations (e.g., hair, eyebrows). Furthermore, note that our method shows a more global understanding of image semantics and subtly modifies the skin tone and the eye shading of the face to maintain consistency with user-given edits. In contrast, the color-based optimization of GAN-inversion methods exhibits a lower level of detail at edit locations (e.g., hair in row 1–3 and makeup in row 2,3). Furthermore, we find that our method shows better performance in preserving the identity of the original image in the final output (e.g.row 2,3), which is highly essential for real-image editing.

6 Inferring Global Edit Directions

We next show that the custom edits (e.g., adding glasses, changing makeup) learned through paint2pix are not limited to the image on which the modifications were originally performed but instead show semantically-consistent generalization across the input domain. Put another way, once the user is satisfied with the output of a given custom edit on one image, the same edit can then be applied across different images from the input data distribution without requiring the user to repeat similar brushstrokes on each individual image.

In particular, consider \(\{x_0,x_1\}\) be the original and edited image tuple with stylegan latent space vectors \(\{\textbf{w}_0,\textbf{w}_1\}\) respectively. The custom edit \(x_0 \rightarrow x_1\) can then be applied to another image x (with stylegan latent code \(\textbf{w}\)) by computing a modified latent space edit direction \(\delta _{edit}(x)\) as,

where \(\delta _{edit}(x_0) = \textbf{w}_1 - \textbf{w}_0\) represents the original edit direction from \(x_0 \rightarrow x_1\), and the second term ensures identity preservation in the transferred edit.

The original edit can then be transferred to the input image x as,

where \(\alpha \) is the edit strength and \(\textbf{G}\) is the StyleGAN [18] decoder network.

Results are shown in Fig. 5. We are able to clearly see that custom edits learned on one image can be extended to different images in a semantic-consistent manner. Furthermore, we observe that the strength of the intended edit can be varied by simply adjusting the edit-strength parameter \(\alpha \). This helps us to use extrapolation in order to achieve edits which would be otherwise difficult to draw using coarse scribbles alone. For instance, while adding smile (using white brushstrokes) is easy, drawing a fully laughing face might be difficult for a novice artist. However, the same can be easily achieved by using a higher edit strength which allows us to extrapolate the original smiling edit to a laughing face edit (ow-1, Fig. 5). Similarly, different levels of facial wrinkles (or aging) can also be achieved in an analogous fashion (row-2, Fig. 5).

7 Multi-modal Synthesis

Predicting a single output for inferring user intention from an incomplete painting might not be always useful if the user’s ideas are vastly different from the output prediction. The use of decoupled encoders in paint2pix is helpful in this regard, as it allows our approach to perform multi-modal synthesis for the final output without requiring special architecture changes.

In practice, given an incomplete canvas \(C_t\), multi-modal synthesis is achieved by sampling a random image as the identity input (\(y_t\)) to the identity encoder network. Results are shown in Fig. 6. We observe that the above approach forms a convenient method for predicting multiple possible image completions from incomplete paintings. This provides the user with a wider range of choices to select the best direction for the synthesis process. Furthermore, we note that this idea can also be used to perform identity conditioned synthesis, by using the same identity image (e.g., Chris Hemsworth) throughout the painting trajectory.

8 Ablation Study

In this section, we perform several ablation studies in order to study the importance of different losses \(\{\mathcal {L}_{pred},\mathcal {L}_{edit},\mathcal {L}_{embed}\}\) in the performance of paint2pix. Please note that in order to still get meaningful results, the experiments without \(\mathcal {L}_{pred}\) are performed while using a pretrained restyle [4] network for independently predicting intermediate outputs \(\{y_{t+1},y_{t}\}\) from canvas frames \(\{C_{t+1},C_{t}\}\), and, the ablation \(\{\mathrm {w/o} \ \mathcal {L}_{edit}\}\) is done using \(\textbf{w}_{t+1}\) = \(\textbf{w}_{t}\) for the canvas encoder.

Results are shown in Fig. 7. We observe that \(\{\mathrm {w/o} \ \mathcal {L}_{pred}\}\) the model lacks an understanding of the manifold of incomplete paintings and thus produces outputs which are not fully photorealistic. In contrast, \(\{\mathrm {w/o} \ \mathcal {L}_{edit}\}\) shows high quality outputs but does not incorporate the edits made by user brushstrokes. Finally, we see that the use of \(\mathcal {L}_{embed}\) helps the model produce images which preserve the identity of the original image in the final prediction.

9 Discussion and Limitations

In-distribution Predictions. A key advantage of paint2pix is that allows a novice user to synthesize and manipulate an output image on the real image manifold, while using fairly rudimentary and crude brushstrokes. While this is desirable in most scenarios, it also limits our method as it prevents a potential user from intentionally performing out-of-distribution (or non-realistic) facial manipulations (e.g., blue eyebrows, ghost like faces etc.).

Invertibility for Real-Image Editing. Much like other GAN-inversion and latent space manipulation methods [3, 4, 13, 28, 29, 39], accurate real-image editing with paint2pix is highly dependent on the ability of used encoder architecture to invert the original real image into StyleGAN [18] latent space.

Advanced Edits. Another limitation is that paint2pix does not provide a direct approach for achieving advanced semantic edits like age, gender manipulation. Nevertheless, as show in Fig. 8, age variation edits can still be achieved using extrapolation of edit strength \(\alpha \). Similarly, gender variation edits are possible by using progressive synthesis to infer the gender edit direction. Further details and analysis for gender variation edits are provided in the supp. material.

10 Conclusion

In this paper, we explore a novel task of performing photorealistic image synthesis and editing using primitive user paintings and brushstrokes. To this end, we propose paint2pix which can be used for 1) progressively synthesizing a desired image output from scratch using just few rudimentary brushstrokes, or, 2) real image editing: wherein it allows a human user to directly perform a range of custom edits without requiring any artistic expertise. As shown through extensive experimentation, we find that paint2pix forms a highly convenient and simple approach for directly expressing a potential user’s inner ideas in visual form.

References

Abdal, R., Qin, Y., Wonka, P.: Image2styleGAN: how to embed images into the styleGAN latent space? In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 4432–4441 (2019)

Abdal, R., Qin, Y., Wonka, P.: Image2styleGAN++: how to edit the embedded images? In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 8296–8305 (2020)

Abdal, R., Zhu, P., Mitra, N.J., Wonka, P.: StyleFlow: attribute-conditioned exploration of styleGAN-generated images using conditional continuous normalizing flows. ACM Trans. Graph. (TOG) 40(3), 1–21 (2021)

Alaluf, Y., Patashnik, O., Cohen-Or, D.: ReStyle: a residual-based StyleGAN encoder via iterative refinement. In: Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), October 2021

Chen, T., Cheng, M.M., Tan, P., Shamir, A., Hu, S.M.: Sketch2Photo: internet image montage. ACM Trans. Graph. (TOG) 28(5), 1–10 (2009)

Chen, W., Hays, J.: SketchyGAN: towards diverse and realistic sketch to image synthesis. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 9416–9425 (2018)

Chen, X., Fan, H., Girshick, R., He, K.: Improved baselines with momentum contrastive learning. arXiv preprint arXiv:2003.04297 (2020)

Choi, Y., Choi, M., Kim, M., Ha, J.W., Kim, S., Choo, J.: StarGAN: unified generative adversarial networks for multi-domain image-to-image translation. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 8789–8797 (2018)

Choi, Y., Uh, Y., Yoo, J., Ha, J.W.: StarGAN v2: diverse image synthesis for multiple domains. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 8188–8197 (2020)

Deng, J., Guo, J., Xue, N., Zafeiriou, S.: ArcFace: additive angular margin loss for deep face recognition. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 4690–4699 (2019)

Esser, P., Rombach, R., Ommer, B.: Taming transformers for high-resolution image synthesis. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 12873–12883 (2021)

Ghosh, A., et al.: Interactive sketch & fill: Multiclass sketch-to-image translation. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 1171–1180 (2019)

Härkönen, E., Hertzmann, A., Lehtinen, J., Paris, S.: GANSpace: discovering interpretable GAN controls. In: Advances in Neural Information Processing Systems, vol. 33, pp. 9841–9850 (2020)

Heusel, M., Ramsauer, H., Unterthiner, T., Nessler, B., Hochreiter, S.: GANs trained by a two time-scale update rule converge to a local nash equilibrium. In: Advances in Neural Information Processing Systems 30 (2017)

Huang, Z., Heng, W., Zhou, S.: Learning to paint with model-based deep reinforcement learning. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 8709–8718 (2019)

Isola, P., Zhu, J.Y., Zhou, T., Efros, A.A.: Image-to-image translation with conditional adversarial networks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1125–1134 (2017)

Karras, T., Laine, S., Aila, T.: A style-based generator architecture for generative adversarial networks. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 4401–4410 (2019)

Karras, T., Laine, S., Aittala, M., Hellsten, J., Lehtinen, J., Aila, T.: Analyzing and improving the image quality of StyleGAN. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 8110–8119 (2020)

Kotovenko, D., Wright, M., Heimbrecht, A., Ommer, B.: Rethinking style transfer: from pixels to parameterized brushstrokes. arXiv preprint arXiv:2103.17185 (2021)

Krause, J., Stark, M., Deng, J., Fei-Fei, L.: 3D object representations for fine-grained categorization. In: 4th International IEEE Workshop on 3D Representation and Recognition (3dRR-13), Sydney, Australia (2013)

Lee, C.H., Liu, Z., Wu, L., Luo, P.: MaskGAN: towards diverse and interactive facial image manipulation. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 5549–5558 (2020)

Lee, J., Kim, E., Lee, Y., Kim, D., Chang, J., Choo, J.: Reference-based sketch image colorization using augmented-self reference and dense semantic correspondence. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 5801–5810 (2020)

Li, X., Zhang, B., Liao, J., Sander, P.V.: Deep sketch-guided cartoon video synthesis. CoRR (2020)

Liu, R., Yu, Q., Yu, S.X.: Unsupervised sketch to photo synthesis. In: Vedaldi, A., Bischof, H., Brox, T., Frahm, J.-M. (eds.) ECCV 2020. LNCS, vol. 12348, pp. 36–52. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-58580-8_3

Liu, S., et al.: Paint transformer: feed forward neural painting with stroke prediction. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 6598–6607 (2021)

Liu, X., Yin, G., Shao, J., Wang, X., et al.: Learning to predict layout-to-image conditional convolutions for semantic image synthesis. In: Advances in Neural Information Processing Systems 32 (2019)

Park, T., Liu, M.Y., Wang, T.C., Zhu, J.Y.: Semantic image synthesis with spatially-adaptive normalization. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 2337–2346 (2019)

Patashnik, O., Wu, Z., Shechtman, E., Cohen-Or, D., Lischinski, D.: Styleclip: text-driven manipulation of StyleGAN imagery. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 2085–2094 (2021)

Richardson, E., et al.: Encoding in style: a StyleGAN encoder for image-to-image translation. In: IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), June 2021

Shen, Y., Zhou, B.: Closed-form factorization of latent semantics in GANs. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 1532–1540 (2021)

Singh, J., Smith, C., Echevarria, J., Zheng, L.: Intelli-paint: towards developing human-like painting agents. In: European Conference on Computer Vision. Springer (2022)

Singh, J., Zheng, L.: Combining semantic guidance and deep reinforcement learning for generating human level paintings. In: Proceedings of the IEEE/CVF International Conference on Computer Vision (2021)

Sushko, V., Schönfeld, E., Zhang, D., Gall, J., Schiele, B., Khoreva, A.: You only need adversarial supervision for semantic image synthesis. arXiv preprint arXiv:2012.04781 (2020)

Tov, O., Alaluf, Y., Nitzan, Y., Patashnik, O., Cohen-Or, D.: Designing an encoder for stylegan image manipulation. arXiv preprint arXiv:2102.02766 (2021)

Wang, Q., Guo, C., Dai, H.N., Li, P.: Self-stylized neural painter. In: SIGGRAPH Asia 2021 Posters, pp. 1–2 (2021)

Xiang, X., Liu, D., Yang, X., Zhu, Y., Shen, X., Allebach, J.P.: Adversarial open domain adaptation for sketch-to-photo synthesis. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pp. 1434–1444 (2022)

Yang, S., Wang, Z., Liu, J., Guo, Z.: Controllable sketch-to-image translation for robust face synthesis. IEEE Trans. Image Process. 30, 8797–8810 (2021)

Zhang, R., Isola, P., Efros, A.A., Shechtman, E., Wang, O.: The unreasonable effectiveness of deep features as a perceptual metric. In: CVPR (2018)

Zhu, J.-Y., Krähenbühl, P., Shechtman, E., Efros, A.A.: Generative visual manipulation on the natural image manifold. In: Leibe, B., Matas, J., Sebe, N., Welling, M. (eds.) ECCV 2016. LNCS, vol. 9909, pp. 597–613. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-46454-1_36

Zhu, P., Abdal, R., Qin, Y., Wonka, P.: Sean: Image synthesis with semantic region-adaptive normalization. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 5104–5113 (2020)

Zou, Z., Shi, T., Qiu, S., Yuan, Y., Shi, Z.: Stylized neural painting. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 15689–15698 (2021)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

1 Electronic supplementary material

Below is the link to the electronic supplementary material.

Rights and permissions

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Singh, J., Zheng, L., Smith, C., Echevarria, J. (2022). Paint2Pix: Interactive Painting Based Progressive Image Synthesis and Editing. In: Avidan, S., Brostow, G., Cissé, M., Farinella, G.M., Hassner, T. (eds) Computer Vision – ECCV 2022. ECCV 2022. Lecture Notes in Computer Science, vol 13674. Springer, Cham. https://doi.org/10.1007/978-3-031-19781-9_39

Download citation

DOI: https://doi.org/10.1007/978-3-031-19781-9_39

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-19780-2

Online ISBN: 978-3-031-19781-9

eBook Packages: Computer ScienceComputer Science (R0)