Abstract

Corrosion detection on metal constructions is a major challenge in civil engineering for quick, safe and effective inspection. Existing image analysis approaches tend to place bounding boxes around the defected region which is not adequate both for structural analysis and prefabrication, an innovative construction concept which reduces maintenance cost, time and improves safety. In this paper, we apply three semantic segmentation-oriented deep learning models (FCN, U-Net and Mask R-CNN) for corrosion detection, which perform better in terms of accuracy and time and require a smaller number of annotated samples compared to other deep models, e.g. CNN. However, the final images derived are still not sufficiently accurate for structural analysis and prefabrication. Thus, we adopt a novel data projection scheme that fuses the results of color segmentation, yielding accurate but over-segmented contours of a region, with a processed area of the deep masks, resulting in high-confidence corroded pixels.

This paper is supported by the H2020 PANOPTIS project “Development of a Decision Support System for Increasing the Resilience of Transportation Infrastructure based on combined use of terrestrial and airborne sensors and advanced modelling tools,” under grant agreement 769129.

Access provided by Autonomous University of Puebla. Download conference paper PDF

Similar content being viewed by others

Keywords

1 Introduction

Metal constructions are widely used in transportation infrastructures, including bridges, highways and tunnels. Rust and corrosion may result in severe problems in safety. Hence, metal defect detection is a major challenge in civil engineering to achieve quick, effective but also safe inspection, assessment and maintenance of the infrastructure [9] and deal with materials’ deterioration phenomena that derive from several factors, such as climate change, weather events and ageing.

Current approaches in image analysis for detecting defects are through bounding boxes placed around defected areas to assist engineers to rapidly focus on damages [2, 13, 14, 18]. Such approaches, however, are not adequate for a structural analysis since several metrics (e.g. area, aspect ratio, maximum distance) are required to assess the defect status. Thus, we need a more precise pixel-level classification which can also trigger the novel ideas in construction of prefabrication [5]. Prefabrication allows components to be built outside the infrastructure, decreasing maintenance cost and time, and improving traffic flows and working risks. Additionally, real-time classification response is necessary to achieve fast inspection of the critical infrastructure, especially on large-scale structures. Finally, a small number of training samples is available, due to the fact that specific traffic arrangements, specialized equipment and extra manpower are required, increasing the cost dramatically.

1.1 Related Work

Recently, deep learning algorithms [20] have been proposed for defect detection. Since the data received as 2D image inputs, convolutional neural networks (CNNs) have been applied to identify regions of interest [14]. Other approaches exploit the CNN structure to detect cracks in concrete and steel infrastructures [2, 6], road damages [15, 23] and railroad defects on metal surfaces [18]. Finally, the work of [3] combines a CNN and a Naïve Bayes data fusion scheme to detect crack patches on nuclear power plants.

The main problem of all the above-mentioned approaches is that they employ conventional deep models, such as CNNs, which require a large number of annotated data [11, 12]. In our case, such a collection is an arduous task since the annotation should be carried out at pixel level by experts. For this reason, most of existing methods estimate the defected regions through boundary boxes. In addition, the computational complexity of the above methods is high, a crucial factor when inspecting large-scale infrastructures.

To address these constraints, we exploit alternative approaches in deep learning proposed for semantic segmentation but for applications different than defect detection in transportation networks such as Fully Convolutional Networks (FCN) [10], U-Nets [16] and Mask R-CNN [7]. The efficiency of the specific methods has been already verified in medical imaging (e.g. brain tumour and COVID-19 symptoms detection) [4, 21, 22].

1.2 Paper Contribution

In this paper, we apply three semantic segmentation-oriented deep models (FCN, U-Net and Mask R-CNN) to detect corrosion in metal structures since they perform more efficiently than traditional deep models. However, the masks derived are still inadequate for structural analysis and prefabrication because salient parts of a defected region, especially at the contours, are misclassified. Thus, a detailed, pixel-based mask should be extracted so that civil engineers can take precise measurements on it.

To overcome this problem, we combine through projection the results of color segmentation, which yields accurate contours but oversegments regions, with a processed area of the deep masks (through morphological operators), which indicate only high confident pixels of a defected area. The projection merges color segments belonging to a damaged area improving pixel-based classification accuracy. Experimental results on real-life corroded images, captured in European H2020 Panoptis project, prove the outperformance of the proposed scheme than using segmentation-oriented deep networks or traditional deep models.

2 The Proposed Overall Architecture

Let \(I \in \mathbb {R}^{w \times h \times 3}\) an RGB image of size \(w \times h\). Our problem involves a traditional binary classification: areas with intense corrosion grades (rust grade categories B, C and D) and areas of no or minor corrosion (category A). The rust grade categories stems from the standard ISO 8501-1 of civil engineering and are described in Sect. 5.1 and depicted in Fig. 3.

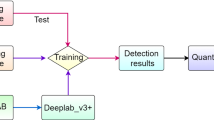

Figure 1 depicts the overall architecture of our approach. The RGB images are fed as inputs to the FCN, U-Net and Mask R-CNN deep models to carry out the semantic segmentation. Despite the effectiveness of these networks, inaccuracies still appear on the contours of the detected objects. Although these errors are small, when one measures them as a percentage of the total corroded region, they are very important for structural analysis and prefabrication.

To increase pixel-level accuracy of the derived masks, we combine color segmentation with the regions of the deep models. Color segmentation precisely localizes the contours of an object, but it over-segments it into multiple color areas. Instead, the masks of the deep networks correctly localize the defects, but fail to accurately segment the boundaries. Therefore, we shrink the masks of the deep models to find out the most confident regions, i.e., pixels indicating a defect with high probability. This is done through an erosion morphological operator applied on the initial detections. We also morphologically dilate the deep model regions to localize vague areas which we need to decide in what region they belong to. On that extended mask, we apply the watershed segmentation to generate color segments. Finally, we project the results of the color segmentation onto the high confident regions to merge together different color clusters of the same corrosion.

3 Deep Semantic Segmentation Models

Three types of deep networks are applied to obtain the semantic segments. The first is a Fully Convolutional Network (FCN) [10] which does not have any fully-connected layers, reducing the loss of spatial information and allowing faster computation. The second is a U-Net built for medical imaging segmentation [16]. The architecture is heavily based on FCN, though they have some key differences: U-Net (i) is symmetrical by having multiple upsampling layers and (ii) uses skip connections that apply a concatenation operator instead of adding up. Finally, the third model is the Mask R-CNN [7], which extends the Faster R-CNN by using a FCN. This model is able to define bounding boxes around the corroded areas and then segments the rust inside the predicted boxes.

To detect the defects, the models receive as input RGB data and generate, as outputs, binary masks, providing a pixel-level corrosion detection. However, the models fail to generate high fidelity annotations on a boundary level; contours over the detected regions fail to fully encapsulate the rusted regions of the object. As such, a region-growing approach, over these low confidence boundary regions, is applied to improve outcome’s robustness and provide refined masks. For training the models, we use an annotated dataset which have been built by civil engineers under the framework of EU project H2020 PANOPTIS.

4 Refined Detection by Projection - Fusion with a Color Segmentation

The presence of inaccuracies in the contours of outputs of the aforementioned deep models is due to the multiple down/up-scaling processes within the convolutional layers of models. To refine the initially detected masks, the following steps are adopted: (i) Localizing a region of high-confident pixels to belong to a defect as a subset of the deep masked outputs. (ii) Localizing fuzzy regions which we cannot decide with confidence if they belong to a corroded area or not, through an extension of the deep output masks. (iii) Applying a color segmentation algorithm in the extended masks which contains both the fuzzy and the high-confident regions. (iv) Finally projecting the results of the color segmentation onto the high-confident area. The projection retains the accuracy in the contours (stemming from color segmentation) while merging different color segments of the same defect together.

Let us assume that, in an RGB image \(I \in \mathbb {R}^{w \times h \times 3}\), we have a set of N corroded regions \(R=\{R_1,...,R_N\}\), generated using a deep learning approach. Each partition is a set of pixels \(R_j=\{(x_i,y_i)\}_{i=1}^{m_j}\), \({j=1,...,N}\), where \(m_j\) is the number of pixels in each set. The remaining pixels represent the no-detection or background areas, denoted by \(R^B\), so that \(R \cup R^B=I\) (see Fig. 2a). Subsequently, for each \(R_j\), \({j=1,...,N}\), we consider two subsets \(R_j^T\) and \(R_j^F\), so that \(R_j^T \cup R_j^F=R_j\). The first set \(R_j^T\), corresponds to inner pixels of the region \(R_j\), which are considered as true foreground and indicate high-confidence corroded areas. They can be obtained using an erosion morphological operator on \(R_j\). The second set \(R_j^F\), contains the remaining pixels \((x_i,y_i) \in R_j\) and their status is considered fuzzy.

We now define a new region \(R_j^{F+} \supset R_j^{F}\), which is a slightly extended area of pixels of \(R_j^{F}\), obtained using the dilation morphological operator. The implementation is carried out so that \(R_j^T\) and \(R_j^{F+}\) are adjacent, but non-overlapping. That is, \(R_j^T \cap R_j^{F+}=\varnothing \). Summarizing, we have three sets of areas (see Fig. 2b): (i) True foreground or corroded areas \(R^T=\{R_1^T,...,R_N^T\}\) (green region in Fig. 2b), (ii) fuzzy areas \(R^{F+}=\{R_1^{F+},...,R_N^{F+}\}\) (yellow region of Fig. 2b) and (iii) the remaining image areas \(R^B\), denoting the background or no-detection areas (black region of Fig. 2b).

In the extended fuzzy region \(R^{F+}\), we apply the watershed color segmentation algorithm [1]. Let us assume that the color segmentation algorithm produces \(M_j\) segments \(s_{i,j}\), with \(i=1,...,M_j\) for the j-th defected region (see the red region in Fig. 2c), all of which form a set \(R_j^w=\bigcup \limits _{i=1}^{M_j}s_{i,j}\). Then, we project segments \(R_j^w\) onto \(R_j^T\) in a way that:

Ultimately, the final detected region (see the blue region in Fig. 2c) is defined as the union of all sets \(R_j^T\) and \(R_j^{(a)}\) over all corroded regions \(j=1,...,N\).

5 Experimental Evaluation

5.1 Dataset Description

The dataset is obtained from heterogeneous sources (DSLR cameras, UAVs, cellphones) and contains 116 images of various resolutions, ranging from 194 \(\times \) 259 to 4248 \(\times \) 2852. All data have been collected under the framework of H2020 Panoptis project. For the dataset, 80% is used for training and validation, while the remaining 20% is for testing. Among the training data, 75% of them is used for training and the remaining 25% for validation. The images vary in terms of corrosion type, illumination conditions (e.g. overexposure, underexposure) and environmental landscapes (e.g. highways, rivers, structures). Furthermore, some images contain various types of occlusions, making detection more difficult.

All images of the dataset were manually annotated by engineers within Panoptis project. Particularly, corrosion has been classified according to the ISO 8501-1 standard (see Fig. 3): (i) Type A: Steel surface largely covered with adhering mill scale but little, if any, rust. (ii) Type B: Steel surface which has begun to rust and from which the mill scale has begun to flake. (iii) Type C: Steel surface on which the mill scale has rusted away or from which it can be scraped, but with slight pitting visible under normal vision. (iv) Type D: Steel surface on which the mill scale has rusted away and on which general pitting is visible under normal vision.

5.2 Models Setup

A common case is the use of pretrained networks of specified topology. Generally, transfer learning techniques serve as starting points, allowing for fast initialization and minimal topological interventions. The FCN-8s variant [10, 17], served as the main detector for the FCN model. Additionally, the Mask R-CNN detector was based on Inception V2 [19], pretrained over COCO [8] dataset. On the other hand, U-Net was designed from scratch. The contracting part, of the adopted variation, had the following setup: Input \(\rightarrow \) 2@Conv \(\rightarrow \) Pool \(\rightarrow \) 2@Conv \(\rightarrow \) Pool \(\rightarrow \) 2@Conv \(\rightarrow \) Drop \(\rightarrow \) Pool, where 2@Conv denotes that two consecutive convolutions, of size \(3 \times 3\), took place. Finally, for the decoder three corresponding upsampling layers were used.

5.3 Experimental Results

Figure 4 demonstrates two corroded images along with the respective ground truth annotation for all of the three deep models, without applying boundary refinement. In general, the results depict the semantic segmentation capabilities of the core models. Nevertheless, boundary regions are rather coarse. To refine these areas, we implement the color projection methodology as described in Sect. 4 and visualized in Fig. 5. In particular, it shows the defected regions before and after the boundary refinement (see the last two columns of Fig. 5). The corroded regions are illustrated in green for better clarification.

Objective results are depicted in Fig. 6 using precision and F1-score. The proposed fusion method improves precision and slightly the F1-score. U-Net performs slightly better in terms of precision than the rest ones, while for F1-score all deep models yield almost the same performance. Investigating the time complexity, U-Net is the fastest approach, followed by FCN (1.79 times slower) and last is Mask R-CNN (18.25 times slower). Thus, even if Mask R-CNN followed by boundary refinement performs better than the rest in terms of F1-score, it may not be used as the main detection mechanism due to the high execution times.

6 Conclusions

In this paper, we propose a novel data projection scheme to yield accurate pixel-based detection of corrosion regions on metal constructions. This projection/fusion exploits the results of deep models, which correctly identify the semantic area of a defect but fail on the boundaries, and a color segmentation algorithm which over-segments the defect into multiple color areas but retains contour accuracy. The deep models were FCN, U-Net and Mask R-CNN. Experimental results and comparisons on real datasets verify the out-performance of the proposed scheme, even for very tough image content of multiple types of defects. The performance is evaluated on a dataset annotated by engineer experts. Though the increase in accuracy is relatively small, the new defected areas can significantly improve structural analysis and prefabrication than other traditional methods.

References

Beucher, S., et al.: The watershed transformation applied to image segmentation. In: Scanning Microscopy-Supplement, pp. 299–299 (1992)

Cha, Y.J., Choi, W., Büyüköztürk, O.: Deep learning-based crack damage detection using convolutional neural networks. Comput.-Aided Civil Infrastruct. Eng. 32(5), 361–378 (2017)

Chen, F.C., Jahanshahi, M.R.: NB-CNN: deep learning-based crack detection using convolutional neural network and Naïve Bayes data fusion. IEEE Trans. Industr. Electron. 65(5), 4392–4400 (2017)

Dong, H., Yang, G., Liu, F., Mo, Y., Guo, Y.: Automatic brain tumor detection and segmentation using U-net based fully convolutional networks. In: Valdés Hernández, M., González-Castro, V. (eds.) MIUA 2017. CCIS, vol. 723, pp. 506–517. Springer, Cham (2017). https://doi.org/10.1007/978-3-319-60964-5_44

Dong, Y., Hou, Y., Cao, D., Zhang, Y., Zhang, Y.: Study on road performance of prefabricated rollable asphalt mixture. Road Mater. Pavement Des. 18(Suppl. 3), 65–75 (2017)

Doulamis, A., Doulamis, N., Protopapadakis, E., Voulodimos, A.: Combined convolutional neural networks and fuzzy spectral clustering for real time crack detection in tunnels. In: 2018 25th IEEE International Conference on Image Processing (ICIP), pp. 4153–4157. IEEE (2018)

He, K., Gkioxari, G., Dollár, P., Girshick, R.: Mask R-CNN. In: IEEE International Conference on Computer Vision, pp. 2961–2969 (2017)

Lin, T.Y., et al.: Microsoft coco: common objects in context. In: Fleet, D., Pajdla, T., Schiele, B., Tuytelaars, T. (eds.) European Conference on Computer Vision, pp. 740–755. Springer, Cham (2014). https://doi.org/10.1007/978-3-319-10602-1_48

Liu, Z., Lu, G., Liu, X., Jiang, X., Lodewijks, G.: Image processing algorithms for crack detection in welded structures via pulsed eddy current thermal imaging. IEEE Instrum. Meas. Mag. 20(4), 34–44 (2017)

Long, J., Shelhamer, E., Darrell, T.: Fully convolutional networks for semantic segmentation. In: IEEE Conference on Computer Vision and Pattern Recognition, pp. 3431–3440 (2015)

Makantasis, K., Doulamis, A., Doulamis, N., Nikitakis, A., Voulodimos, A.: Tensor-based nonlinear classifier for high-order data analysis. In: 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 2221–2225, April 2018. https://doi.org/10.1109/ICASSP.2018.8461418

Makantasis, K., Doulamis, A.D., Doulamis, N.D., Nikitakis, A.: Tensor-based classification models for hyperspectral data analysis. IEEE Trans. Geosci. Remote Sens. 56(12), 6884–6898 (2018)

Protopapadakis, E., Voulodimos, A., Doulamis, A.: Data sampling for semi-supervised learning in vision-based concrete defect recognition. In: 2017 8th International Conference on Information, Intelligence, Systems Applications (IISA), pp. 1–6, August 2017. https://doi.org/10.1109/IISA.2017.8316454

Protopapadakis, E., Voulodimos, A., Doulamis, A., Doulamis, N., Stathaki, T.: Automatic crack detection for tunnel inspection using deep learning and heuristic image post-processing. Appl. Intell. 49(7), 2793–2806 (2019). https://doi.org/10.1007/s10489-018-01396-y

Protopapadakis, E., Katsamenis, I., Doulamis, A.: Multi-label deep learning models for continuous monitoring of road infrastructures. In: Proceedings of the 13th ACM International Conference on PErvasive Technologies Related to Assistive Environments, pp. 1–7 (2020)

Ronneberger, O., Fischer, P., Brox, T.: U-net: convolutional networks for biomedical image segmentation. In: Navab, N., Hornegger, J., Wells, W.M., Frangi, A.F. (eds.) MICCAI 2015. LNCS, vol. 9351, pp. 234–241. Springer, Cham (2015). https://doi.org/10.1007/978-3-319-24574-4_28

Shuai, B., Liu, T., Wang, G.: Improving fully convolution network for semantic segmentation. arXiv preprint arXiv:1611.08986 (2016)

Soukup, D., Huber-Mörk, R.: Convolutional neural networks for steel surface defect detection from photometric stereo images. In: Bebis, G., et al. (eds.) ISVC 2014. LNCS, vol. 8887, pp. 668–677. Springer, Cham (2014). https://doi.org/10.1007/978-3-319-14249-4_64

Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z.: Rethinking the inception architecture for computer vision. In: IEEE Conference on Computer Vision and Pattern Recognition, pp. 2818–2826 (2016)

Voulodimos, A., Doulamis, N., Doulamis, A., Protopapadakis, E.: Deep learning for computer vision: a brief review. Comput. Intell. Neurosci. 2018 (2018)

Voulodimos, A., Protopapadakis, E., Katsamenis, I., Doulamis, A., Doulamis, N.: Deep learning models for COVID-19 infected area segmentation in CT images. medRxiv (2020)

Vuola, A.O., Akram, S.U., Kannala, J.: Mask-RCNN and U-net ensembled for nuclei segmentation. In: 2019 IEEE 16th International Symposium on Biomedical Imaging (ISBI 2019), pp. 208–212. IEEE (2019)

Zhang, L., Yang, F., Zhang, Y., Zhu, Y.J.: Road crack detection using deep convolutional neural network. In: 2016 IEEE International Conference on Image Processing (ICIP), pp. 3708–3712. IEEE (2016)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Katsamenis, I., Protopapadakis, E., Doulamis, A., Doulamis, N., Voulodimos, A. (2020). Pixel-Level Corrosion Detection on Metal Constructions by Fusion of Deep Learning Semantic and Contour Segmentation. In: Bebis, G., et al. Advances in Visual Computing. ISVC 2020. Lecture Notes in Computer Science(), vol 12509. Springer, Cham. https://doi.org/10.1007/978-3-030-64556-4_13

Download citation

DOI: https://doi.org/10.1007/978-3-030-64556-4_13

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-64555-7

Online ISBN: 978-3-030-64556-4

eBook Packages: Computer ScienceComputer Science (R0)