Abstract

Between preoperative computed tomography (CT) image acquisition and endoscopic sinus surgery, the nasal cavity of a patient undergoes changes. These changes make it challenging for non-deformable vision-based registration algorithms to find accurate alignments between the CT image and intraoperative video. Large alignment errors can lead to injuries to critical structures. In this paper, we present a deformable video-CT registration that deforms the patient shape extracted from CT according to statistics learned from a population. We also associate confidence with regions of deformed shapes based on the location of matched video features. Experiments on both simulation and in vivo data produced errors below 1 mm (statistically significantly lower than prior work).

1 Introduction

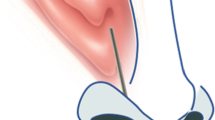

Since the step-by-step procedure for endoscopic sinus surgery (ESS) was first developed [14] in the early 1980s, interventions through the nasal cavities have become predominantly minimally invasive due to faster recovery times and reduced facial scarring. However, ESS comes with its own challenges. For instance, the 3D operating field is transformed into a 2D video display and the field of view (FOV) of the surgeon is limited to that of the endoscope. These can cause difficulty in estimating nearby anatomy that is not in the FOV of the endoscope. Knowledge of nearby anatomy is especially critical during surgeries through the nasal cavity since nasal cavities are small and complex with thin boundaries separating them from critical structures like the brain, eyes, carotid arteries, optic nerves, etc. Therefore, minimally invasive ESS through the nasal cavity requires a preoperative patient computed tomography (CT) scan, which is used by surgical navigation systems to orient surgeons with respect to critical anatomy.

Several navigation systems have been introduced that register endoscopic views to the preoperative patient CT image. Systems that use electromagnetic or optical trackers require markers to be attached to the endoscope, which can interfere with surgical workflow. Vision-based navigation systems, however, do not add any hardware to the surgical space. Many vision-based navigation systems compute rigid registrations between features from endoscopic video and the CT image. The iterative closest point (ICP) algorithm [2] is a standard two-step registration algorithm that alternates between finding correspondences between feature sets and finding the transformation that best aligns the matched points, until convergence. Several ICP variants have also been explored [21]. In addition to position, orientation [6, 20], contours [4], and noise models [11, 22] have been used to improve matches. However, patient anatomy changes between CT image acquisition and surgery [12]. Patients are also administered decongestants before surgery, which further modifies anatomy. Rigid registration methods have shown deterioration in performance in the presence of tissues that change due to decongestants [15, 16]. Prior work has shown that principal component analysis (PCA) modes can capture the physiological changes that occur in the nasal cavity, i.e., the expanding and contracting of erectile tissues on the nasal turbinates [26]. Therefore, deformable variants of ICP that use PCA-based statistical shape models (SSMs) [9] to additionally solve for shape parameters have also been explored [13, 27]. However, these methods compute registrations to the mean shape, deforming the mean shape to estimate the patient shape, and do not take prior patient information into consideration. Further, they do not provide confidence measures on the estimated shape.
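The two-step ICP loop described above can be sketched as follows. This is a minimal point-to-point illustration in NumPy, assuming brute-force nearest-neighbour matching and an SVD-based rigid fit; the function names are hypothetical, and it is not the oriented-point, noise-aware registration used later in this paper.

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Least-squares rotation R and translation t mapping src onto dst (SVD-based)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)          # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    # Guard against reflections so that det(R) = +1.
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])
    R = Vt.T @ D @ U.T
    t = dst_c - R @ src_c
    return R, t

def icp(samples, model, max_iters=50, tol=1e-10):
    """Rigidly align `samples` (n, 3) to `model` (m, 3); returns R, t, mean residual."""
    R, t = np.eye(3), np.zeros(3)
    prev_err = np.inf
    for _ in range(max_iters):
        moved = samples @ R.T + t
        # Step 1: correspondences (closest model point for each moved sample).
        d2 = ((moved[:, None, :] - model[None, :, :]) ** 2).sum(axis=2)
        matches = model[d2.argmin(axis=1)]
        # Step 2: transformation that best aligns the matched points.
        R, t = best_rigid_transform(samples, matches)
        err = np.mean(np.linalg.norm(samples @ R.T + t - matches, axis=1))
        if abs(prev_err - err) < tol:            # stop when the residual stalls
            break
        prev_err = err
    return R, t, err
```

Brute-force matching keeps the sketch self-contained; for meshes of realistic size a spatial index such as a k-d tree would normally replace the dense distance matrix.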

Our registration simultaneously computes video-CT registration and deforms patient shape using SSMs. We also estimate how errors in deformed patient shape estimation increase as distance from video features increases and provide confidence measures. We evaluate our method on simulated and in vivo data.

2 Method

The method in [27] is formulated according to the following likelihood function:

where \(f_{\mathrm {match}}\) finds the oriented point \(\mathbf {y}= (\mathbf {y}_\mathbf {p}, \hat{\mathbf {y}}_\mathbf {n})\) on the current shape, \(\psi \), that maximizes the likelihood of a match with oriented sample point \(\mathbf {x}= (\mathbf {x}_\mathbf {p}, \hat{\mathbf {x}}_\mathbf {n})\) from video, and \(f_{\mathrm {shape}}\) is the likelihood of shape deformation. \(\theta \) represents parameters of the non-deformable registration (i.e., rotation, \(\mathbf {R}\), translation, \(\mathbf {t}\), and scale, \(a\)), \(\mathbf {s}= \{s_i\}\) represents the shape parameters, \(\bar{\mathbf {V}}\) is the mean shape, \(\mathbf {W}\) the weighted modes of variation, and \(\mathrm {T_{ssm}}\) is the deformable transformation applied to \(\bar{\mathbf {V}}\). \(f_{\mathrm {match}}\) can be any likelihood-based registration objective, such as those presented in [3, 5, 6]. In this paper, we use the \(f_{\mathrm {match}}\) defined in [6, 27], which incorporates an anisotropic Gaussian noise model and an anisotropic Kent noise model to account for errors in position and orientation, respectively [6]. Assuming both position and orientation errors are zero-mean and i.i.d., \(f_{\mathrm {match}}\) for each \(\mathbf {x}\) transformed by a current similarity transform, \([a,\mathbf {R},\mathbf {t}]\), is defined as [27]:

where \(\mathbf {\Sigma }= \mathbf {R}\mathbf {\Sigma _{\mathrm {x}}}\mathbf {R}\mathbf {^T}+ \mathbf {\Sigma _{\mathrm {y}}}\), \(\mathbf {\Sigma _{\mathrm {x}}}\) and \(\mathbf {\Sigma _{\mathrm {y}}}\) are the covariance matrices representing noise in \(\mathbf {x}\) and \(\mathbf {y}\), \(\kappa = \frac{1}{\sigma ^2}\) is the concentration parameter of orientation noise, \(\sigma \) is the standard deviation, and \(\beta = e\frac{\kappa }{2}\) (\(e \in [0, 1]\) is the eccentricity) controls the anisotropy of orientation noise along with \(\hat{\mathbf {\gamma }}_{1}\) and \(\hat{\mathbf {\gamma }}_{2}\), which are the major and minor axes of the elliptical level sets of the Kent distribution on the unit sphere [6, 18]. \(\hat{\mathbf {y}}_\mathbf {n}\), \(\hat{\mathbf {\gamma }}_{1}\), \(\hat{\mathbf {\gamma }}_{2}\) are orthogonal. Similarly, assuming each vertex, \(\mathbf {v}\in \mathbf {V}\), on a shape deforms independently and deformations are Gaussian distributed [23]:

where \(n_\mathbf {v}\) is the number of vertices in the shape and \(n_\mathbf {m}\) is the number of PCA modes used to estimate deformation.
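For orientation, the three expressions referred to in this section can be written out as below. This is a sketch assembled from the definitions in the text, the standard multivariate Gaussian density, and the standard Kent density [18]; it may differ from [6, 27] in typesetting or sign convention. The sample count \(n_{\mathrm {data}}\), the Kent normalizing constant \(c(\kappa , \beta )\), and the per-vertex factor \(f_{\mathrm {vertex}}\) are labels assumed here, not symbols taken from the source.

```latex
% Joint objective over registration parameters \theta and shape parameters \mathbf{s}
\hat{\theta}, \hat{\mathbf{s}} =
  \operatorname*{argmax}_{\theta,\,\mathbf{s}}
  \left[ \prod_{i=1}^{n_{\mathrm{data}}}
         f_{\mathrm{match}}(\mathbf{x}_i;\, \mathbf{y}_i, \theta) \right]
  f_{\mathrm{shape}}(\mathbf{V};\, \mathbf{s}),
\qquad
\mathbf{y}_i \in \psi = \mathrm{T_{ssm}}(\bar{\mathbf{V}}, \mathbf{W}, \mathbf{s})

% Match likelihood: anisotropic Gaussian (position) times Kent (orientation)
f_{\mathrm{match}} =
  \frac{1}{\sqrt{(2\pi)^3 |\mathbf{\Sigma}|}\; c(\kappa, \beta)}
  \exp\!\Big( -\tfrac{1}{2}
     (\mathbf{y}_\mathbf{p} - a\mathbf{R}\mathbf{x}_\mathbf{p} - \mathbf{t})^T
     \mathbf{\Sigma}^{-1}
     (\mathbf{y}_\mathbf{p} - a\mathbf{R}\mathbf{x}_\mathbf{p} - \mathbf{t}) \Big)
  \exp\!\Big( \kappa\, \hat{\mathbf{y}}_\mathbf{n}^T \mathbf{R} \hat{\mathbf{x}}_\mathbf{n}
     + \beta \big[ (\hat{\mathbf{\gamma}}_1^T \mathbf{R} \hat{\mathbf{x}}_\mathbf{n})^2
                 - (\hat{\mathbf{\gamma}}_2^T \mathbf{R} \hat{\mathbf{x}}_\mathbf{n})^2 \big] \Big)

% Shape likelihood: each mode weight treated as a standard normal variable
f_{\mathrm{shape}}(\mathbf{V};\, \mathbf{s}) =
  \prod_{j=1}^{n_\mathbf{v}} f_{\mathrm{vertex}}(\mathbf{v}_j;\, \mathbf{s}) =
  \prod_{i=1}^{n_\mathbf{m}} \frac{1}{(2\pi)^{1/2}}\, e^{-\frac{\Vert s_i \Vert_2^2}{2}}
```

Taking the negative logarithm turns the product into a sum, so the position term becomes a Mahalanobis distance and the shape term becomes a quadratic penalty on the mode weights.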

This formulation forces the mean shape to be the most likely shape [27] and cannot accommodate prior patient information. That is, in a generalized formulation for the exponent in Eq. 1, \(e^{-\frac{\Vert s_i- \mu _i\Vert _2^2}{2}}\), the weights \(\mu _i\), which are the mode weights corresponding to the most likely shape, are simply set to \(0,\,\forall i\), since the mean shape produces \(\mathbf {0}\) mode weights. This is a good assumption when patient CT is unavailable [25, 27]. However, if patient CT is available, then patient shape should be assumed to be the most likely shape. If patient shape, \(\mathbf {V}^*\), is segmented such that it has correspondences with the mean shape [26, 28], \(\mu \) can be computed by projecting the mean-subtracted patient shape onto the SSM modes,

where \(\mathbf {m}\) and \(\lambda \) are the modes and mode weights of variation. \(\mathbf {m}\) and \(\lambda \) can be obtained by performing PCA on a set of shapes with corresponding vertices [9]:
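Written out under the usual SSM conventions, the projection and the PCA decomposition take the following form. This is a sketch that assumes orthonormal modes \(\mathbf {m}_i\) with eigenvalues \(\lambda _i\), shapes stacked as column vectors, and \(n_\mathbf {s}\) training shapes (a symbol introduced here for illustration); the covariance normalization (\(1/n_\mathbf {s}\) versus \(1/(n_\mathbf {s}-1)\)) is a convention choice.

```latex
% Projection of the mean-subtracted patient shape onto the SSM modes
\mu_i = \frac{\mathbf{m}_i^T (\mathbf{V}^* - \bar{\mathbf{V}})}{\sqrt{\lambda_i}},
\qquad i = 1, \dots, n_\mathbf{m}

% PCA of the training shapes: eigen-decomposition of the shape covariance
\mathbf{\Sigma}_{\mathrm{SSM}}
  = \frac{1}{n_\mathbf{s}} \sum_{j=1}^{n_\mathbf{s}}
      (\mathbf{V}_j - \bar{\mathbf{V}})(\mathbf{V}_j - \bar{\mathbf{V}})^T
  = [\mathbf{m}_1 \cdots \mathbf{m}_{n_\mathbf{s}}]\,
    \mathrm{diag}(\lambda_1, \dots, \lambda_{n_\mathbf{s}})\,
    [\mathbf{m}_1 \cdots \mathbf{m}_{n_\mathbf{s}}]^T
```

Dividing by \(\sqrt{\lambda _i}\) expresses each weight in units of standard deviations of its mode, which is why the mean shape corresponds to all-zero weights.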

Finally, the deformation applied to \(\bar{\mathbf {V}}\) based on the current \(\mathbf {s}\) is defined as

\(\eta _i^{(j)}\) are the 3 barycentric coordinates that define the position of \(\mathbf {y}_i\) on a triangle on \(\psi \), and \(\mathbf {w}_i = \sqrt{\lambda _i}\mathbf {m}_i\) are the weighted modes of variation [27].
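The SSM operations described in this section (building the model, projecting a patient shape onto it, and deforming the mean shape) can be sketched in NumPy as follows. This is an illustration under stated assumptions: shapes are flattened into rows of a matrix, the covariance uses a \(1/n_\mathbf {s}\) normalization, and all function names are hypothetical rather than taken from the authors' implementation.

```python
import numpy as np

def build_ssm(shapes):
    """PCA on corresponding shapes.

    shapes: (n_s, 3 * n_v) array, one flattened shape per row.
    Returns the mean shape, orthonormal modes (one per row), and eigenvalues.
    """
    mean_shape = shapes.mean(axis=0)
    centered = shapes - mean_shape
    # SVD of the centered data yields the covariance eigenvectors without
    # forming the (3 n_v x 3 n_v) covariance matrix explicitly.
    _, sing, vt = np.linalg.svd(centered, full_matrices=False)
    eigvals = sing ** 2 / shapes.shape[0]        # 1/n_s normalization (assumed)
    keep = eigvals > 1e-12 * eigvals.max()       # drop numerically null modes
    return mean_shape, vt[keep], eigvals[keep]

def project_shape(shape, mean_shape, modes, eigvals):
    """Mode weights mu_i = m_i^T (V* - V_mean) / sqrt(lambda_i)."""
    return modes @ (shape - mean_shape) / np.sqrt(eigvals)

def deform_mean_shape(mean_shape, modes, eigvals, s):
    """V = V_mean + sum_i s_i w_i, with w_i = sqrt(lambda_i) m_i."""
    weighted_modes = np.sqrt(eigvals)[:, None] * modes
    return mean_shape + s @ weighted_modes

def point_on_triangle(eta, triangle_vertices):
    """Matched point from 3 barycentric coordinates and 3 deformed vertices (3, 3)."""
    return eta @ triangle_vertices
```

Projecting a training shape and deforming the mean shape with the resulting weights reproduces that shape when all non-null modes are kept; truncating to fewer modes gives an approximation.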

Finally, we associate confidence with regions of the estimated shape. We expect errors to be lower where sampled points are matched to the shape and higher as distance from these points increases since these areas are unobserved. To verify this, we associate per vertex errors with distance from the centroid of inlying matched points,

and model our confidence based on observations in simulation. \(n_{\mathrm {inliers}}\) is the number of inlying matched points.
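The distance used here can be written as follows. This is a sketch in which \(\mathbf {c}\) and \(d_\mathbf {v}\) are symbols introduced for illustration, and \(\mathbf {y}_{\mathbf {p},i}\) is assumed to denote the position of the \(i\)-th inlying match on the estimated shape.

```latex
\mathbf{c} = \frac{1}{n_{\mathrm{inliers}}}
             \sum_{i=1}^{n_{\mathrm{inliers}}} \mathbf{y}_{\mathbf{p},i},
\qquad
d_\mathbf{v} = \Vert \mathbf{v} - \mathbf{c} \Vert_2
\quad \forall\, \mathbf{v} \in \mathbf{V}
```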

3 Experiments and Analysis

Since our method initializes the shape estimation problem closer to the optimal solution, we expect our registrations to converge faster and produce lower mean errors since it is less likely for our optimization to converge to a non-optimal solution. To verify these expectations, we evaluate our method on simulated and in vivo data. All experiments are run on a 3.4 GHz Intel Core i7 CPU, 16 GB RAM.

3.1 Simulated Data

We perform a leave-one-out experiment using right nasal cavity meshes from a 53 CT dataset [1, 7, 8, 10]. The left-out shape is perturbed to simulate a patient with modified anatomy. Points are sampled from regions of the perturbed left-out shape that would be visible to an endoscope (Fig. 3A). Position noise with \(\sigma = 1\times 1\) mm\(^2\) in plane and 2 mm out of plane (i.e., \(1\times 1\times 2\) mm\(^3\)) and orientation noise with \(\sigma =10^{\circ }\) and \(e=0.5\) are added to the sampled points. Offsets in intervals [0, 10] mm and \([0, 10]^{\circ }\) are applied to the sample positions and orientations, respectively. The perturbed left-out shape is estimated using our method, i.e., with \(\mu \) set to weights from the original left-out shape, and using prior work, i.e., with \(\mu =\mathbf {0}\). Estimation of \(\mathbf {s}\) is constrained to \([-3, 3]\) standard deviations. Both registrations are run with two noise assumptions: first, assuming the noise in the samples is known, and second, assuming the noise is unknown and initializing the noise estimates to \(2\times 2\times 4\) mm\(^3\) and \(20^{\circ }\ (e=0.5)\) for position and orientation, respectively. To evaluate our results, we first compute the total registration error (tRE) by computing the Hausdorff distance (HD) between the deformed left-out shape and the estimated shape transformed to the coordinate frame of the registered sample points. Next, we compute the total shape error (tSE) by computing the HD between the two shapes in the same coordinate frame.
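The Hausdorff distance used for tRE and tSE can be illustrated with a short NumPy sketch. It assumes the distance is evaluated between mesh vertices (the source does not say whether vertices or surfaces are used) and uses a dense distance matrix for brevity; the function names are hypothetical.

```python
import numpy as np

def directed_max_distance(a, b):
    """Largest distance from any point in `a` to its nearest point in `b`."""
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    return d.min(axis=1).max()

def hausdorff(a, b):
    """Symmetric Hausdorff distance between point sets a (n, 3) and b (m, 3)."""
    return max(directed_max_distance(a, b), directed_max_distance(b, a))

# Toy example: the only unmatched point is 3 units from its nearest neighbour.
a = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
b = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [4.0, 0.0, 0.0]])
print(hausdorff(a, b))  # 3.0
```

Under this reading, tSE compares the two shapes in a common frame, while tRE first maps the estimated shape through the recovered similarity transform, so tRE also reflects errors in rotation, translation, and scale.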

In both cases, we observed a statistically significant (see Note 1) decrease in errors when registration is initialized to patient weights, with errors lower when noise is known (Fig. 1A and B) compared to when noise is unknown (Fig. 2A and B). We also observe a statistically significant decrease in the number of iterations required for convergence, which also leads to a decrease in runtime. Although the decrease in the number of iterations and runtime to convergence is larger with known noise (Fig. 1C and D), results with unknown noise (Fig. 2C and D) show that, for a similar number of iterations and runtime, we achieve lower errors when registration is initialized to patient shape. Our current CPU implementation is embarrassingly parallelizable and can be further optimized to improve runtime.

We also observe, as expected, that shape estimation errors are lower where correspondences to sample points are found (Fig. 3B). Per vertex tSE shows little change near the centroid of the matched points, but quickly increases away from it (Fig. 4A). Therefore, we model our confidence in shape estimation as an exponential decay as distance from matched points increases. Figure 4B shows an estimated left-out shape from the experiment with unknown noise with ground truth errors, while Fig. 4C shows our estimated confidence in shape estimation.
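An exponential-decay confidence of the kind described above can be sketched as follows. The decay length `decay_length_mm` is a hypothetical parameter chosen for illustration; the source states only that the decay was modeled on simulation results and does not give its constants.

```python
import numpy as np

def shape_confidence(vertices, inlier_matches, decay_length_mm=10.0):
    """Per-vertex confidence in (0, 1], decaying with distance from the match centroid.

    vertices:       (n_v, 3) vertex positions of the estimated shape, in mm.
    inlier_matches: (n_inliers, 3) positions of inlying matched points, in mm.
    """
    centroid = inlier_matches.mean(axis=0)
    dist = np.linalg.norm(vertices - centroid, axis=1)
    return np.exp(-dist / decay_length_mm)
```

A vertex at the centroid receives confidence 1, and confidence falls by a factor of e for every `decay_length_mm` of additional distance.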

3.2 In Vivo Data

Our clinical evaluation was performed on anonymized endoscopic videos of the nasal cavity collected from 4 consenting patients under an IRB approved protocol. These videos were used to train a self-supervised depth-estimation network that leverages established multi-view stereo methods like structure from motion (SfM) for learning [17]. This method produces dense and accurate point clouds from single frames of monocular endoscopic videos. Reconstructions from nearby frames were aligned using relative camera motion to produce dense reconstructions covering large areas in the nasal cavities. 3000 randomly sampled points from 14 such reconstructions were deformably registered both to the mean shape and the patient shape assuming noise with \(\sigma = 1\times 1\times 2\) mm\(^3\) and \(30^\circ \ (e=0.5)\) in position and orientation, respectively. The SSM used is pre-built using our 53 CT dataset and does not include CTs from any of the patients scoped for clinical evaluation. Rigid registrations to the respective patient shapes with the same parameters were also computed for comparison [6]. All registrations were manually initialized.

Fig. 3. (A) Right nasal cavity mesh with an example of points (yellow) sampled from the inferior turbinate, lateral and septal walls, and cavity floor, i.e., regions visible to an endoscope when entering the cavity. (B) Mean tSE over registrations (from L-R) initialized at patient shape and mean shape, with known and unknown noise each, and run with 50 modes plotted on the mean shape. Shape estimation errors are higher away from matched points as well as when mean shape is used for initialization (arrow). (Color figure online)

Since in vivo data lacks ground truth, we evaluate our registration using residual errors between matches computed by the registration. However, residual error can be misleading since it does not take into consideration the orientations of matched points. Therefore, we also report registration confidence based on the agreement in the orientations of corresponding points. [27] showed that registration confidence decreased with increasing chi-square CDF values, p, computed using orientation agreement scores. For simplicity, we show increasing confidence with increasing \(q = 1-p\).

Fig. 5. (A) Confidence based on orientation agreement plotted for registrations computed with reconstructions from different video sequences. Initializations at patient shape (green) produce higher confidence in registration, only showing no confidence for sequences 01 and 04 from Patient 4. Several registrations initialized at mean shape (red) show no confidence. (B) Deformed Patient 2 with confidence estimates when aligned with features extracted from (C) video sequence 02. (D) Points (red with blue normals) overlaid on the deformed shape (gray). (Color figure online)

Both deformable registration methods produced statistically significant improvements over rigid registration to patient shape, which produced a mean residual error of 0.7 (±0.26) mm. Deformable registrations initialized at the mean shape and at the patient shape produced the same residual error of 0.44 (±0.14) mm. However, confidence in registration using orientation agreement was higher when initialized to patient shape (Fig. 5A), implying that our method produced better alignment. We are also able to visualize confidence in estimated shapes. Confidence in the estimated shape of Patient 2 is shown in Fig. 5B along with the alignment that produced the estimated shape (Fig. 5C and D).

4 Discussion and Future Work

In this work, we show that we can deformably register endoscopic videos to patient CT using PCA modes to deform patient shape. This method produces statistically significant improvements in registration errors as well as iterations and runtime to convergence compared to prior PCA-based deformable registration methods [24]. We believe that these results bring us a step closer to providing accurate patient-specific navigation during endoscopic sinus procedures without using any external tools like electromagnetic or optical trackers.

We did not compare our method to other prior registration methods like coherent point drift (CPD) due to memory limitations [19]. CPD computes a \(n_\mathbf {v}\times n_\mathbf {v}\) matrix which is stored in memory, resulting in large memory overhead even for medium sized meshes. Our method does not suffer from such limitations. Further, we expect our method to perform as well [24] or better since CPD only deforms the parts of meshes where sample points are matched which can result in unnatural deformations in the mesh. Since our method is driven by statistics learned from population, it is much less likely for our method to produce unnatural deformations. Our method also computes confidence falloff in estimated shapes as distance from registered feature points increases. Providing this information during surgical navigation is critical since it allows surgeons to modulate their trust in the navigation system.
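The memory argument can be made concrete with a quick calculation. The vertex counts below are hypothetical (the source does not report \(n_\mathbf {v}\)), and the estimate assumes one dense double-precision matrix with no sparsity or low-rank approximation.

```python
def dense_matrix_gib(n_rows, n_cols, bytes_per_entry=8):
    """Memory needed to hold a dense matrix, in GiB (8 bytes = float64)."""
    return n_rows * n_cols * bytes_per_entry / 2**30

# Hypothetical mesh sizes, for illustration only.
for n_v in (10_000, 50_000, 100_000):
    print(f"n_v = {n_v:>7,}: {dense_matrix_gib(n_v, n_v):7.2f} GiB")
```

Because the cost grows quadratically, a mesh of 50,000 vertices already needs about 18.6 GiB for a single such matrix, more than the 16 GB of RAM on the machine used for the experiments.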

In the future, we will analytically evaluate the uncertainty in different regions of the estimated shape and improve the runtime of our algorithm. We are also working on building SSMs from a larger population in order to better capture the extent of variations in the nasal cavity. Another goal we hope to work towards is automating registration initialization so our method can be seamlessly integrated into the surgical navigation workflow. Finally, we would like to emphasize that our method can enable highly accurate navigation and reduce risk of damage to critical structures towards the start of a procedure. We hope that future work that can account for non-physiological changes that occur during surgery can be integrated with our method to enable accurate navigation throughout endoscopic sinus procedures.

Notes

1. All statistical significance figures reported in this paper are evaluated using the paired-sample Student’s t-test and indicate \(p<0.001\).

References

1. Beichel, R.R., et al.: Data from QIN-HEADNECK. The Cancer Imaging Archive (2015)

2. Besl, P.J., McKay, N.D.: A method for registration of 3-D shapes. IEEE Trans. Pattern Anal. Mach. Intell. 14(2), 239–256 (1992)

3. Billings, S.D., Boctor, E.M., Taylor, R.H.: Iterative most-likely point registration (IMLP): a robust algorithm for computing optimal shape alignment. PLoS ONE 10(3), 1–45 (2015)

4. Billings, S.D., et al.: Anatomically constrained video-CT registration via the V-IMLOP algorithm. In: Ourselin, S., Joskowicz, L., Sabuncu, M.R., Unal, G., Wells, W. (eds.) MICCAI 2016. LNCS, vol. 9902, pp. 133–141. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-46726-9_16

5. Billings, S., Taylor, R.: Iterative most likely oriented point registration. In: Golland, P., Hata, N., Barillot, C., Hornegger, J., Howe, R. (eds.) MICCAI 2014. LNCS, vol. 8673, pp. 178–185. Springer, Cham (2014). https://doi.org/10.1007/978-3-319-10404-1_23

6. Billings, S.D., Taylor, R.H.: Generalized iterative most likely oriented-point (G-IMLOP) registration. Int. J. Comput. Assist. Radiol. Surg. 10(8), 1213–1226 (2015)

7. Bosch, W.R., Straube, W.L., Matthews, J.W., Purdy, J.A.: Data from Head-Neck-Cetuximab. The Cancer Imaging Archive (2015)

8. Clark, K., et al.: The cancer imaging archive (TCIA): maintaining and operating a public information repository. J. Digit. Imaging 26(6), 1045–1057 (2013)

9. Cootes, T.F., Taylor, C.J., Cooper, D.H., Graham, J.: Active shape models - their training and application. Comput. Vis. Image Underst. 61, 38–59 (1995)

10. Fedorov, A., et al.: DICOM for quantitative imaging biomarker development: a standards based approach to sharing clinical data and structured PET/CT analysis results in head and neck cancer research. PeerJ 4, e2057 (2016)

11. Granger, S., Pennec, X.: Multi-scale EM-ICP: a fast and robust approach for surface registration. In: Heyden, A., Sparr, G., Nielsen, M., Johansen, P. (eds.) ECCV 2002. LNCS, vol. 2353, pp. 418–432. Springer, Heidelberg (2002). https://doi.org/10.1007/3-540-47979-1_28

12. Hasegawa, M., Kern, E.B.: The human nasal cycle. Mayo Clin. Proc. 52(1), 28–34 (1977)

13. Hufnagel, H., Pennec, X., Ehrhardt, J., Ayache, N., Handels, H.: Computation of a probabilistic statistical shape model in a maximum-a-posteriori framework. Methods Inf. Med. 48(04), 314–319 (2009)

14. Kennedy, D.W.: Functional endoscopic sinus surgery. Technique. Arch. Otolaryngol. 111(10), 643–649 (1985). (Chicago, Ill.: 1960)

15. Leonard, S., et al.: Evaluation and stability analysis of video-based navigation system for functional endoscopic sinus surgery on in vivo clinical data. IEEE Trans. Med. Imaging 37(10), 2185–2195 (2018)

16. Leonard, S., Reiter, A., Sinha, A., Ishii, M., Taylor, R.H., Hager, G.D.: Image-based navigation for functional endoscopic sinus surgery using structure from motion. In: Proceedings SPIE Medical Imaging, vol. 9784, pp. 97840V–97840V-7 (2016)

17. Liu, X., et al.: Self-supervised learning for dense depth estimation in monocular endoscopy. In: Stoyanov, D., et al. (eds.) CARE/CLIP/OR 2.0/ISIC -2018. LNCS, vol. 11041, pp. 128–138. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-01201-4_15

18. Mardia, K.V., Jupp, P.E.: Directional Statistics. Wiley Series in Probability and Statistics, pp. 1–432. Wiley, Hoboken (2008)

19. Myronenko, A., Song, X.: Point set registration: coherent point drift. IEEE Trans. Pattern Anal. Mach. Intell. 32(12), 2262–2275 (2010)

20. Pulli, K.: Multiview registration for large data sets. In: Proceedings 2nd International Conference on 3-D Digital Imaging and Modeling, pp. 160–168 (1999)

21. Rusinkiewicz, S., Levoy, M.: Efficient variants of the ICP algorithm. In: Proceedings 3rd International Conference on 3-D Digital Imaging and Modeling, pp. 145–152 (2001)

22. Segal, A., Haehnel, D., Thrun, S.: Generalized-ICP. In: Proceedings Robotics: Science & Systems (2009)

23. Sinha, A.: Deformable registration using shape statistics with applications in sinus surgery. Ph.D. thesis, The Johns Hopkins University (2018)

24. Sinha, A., et al.: The deformable most-likely-point paradigm. Med. Image Anal. 55, 148–164 (2019)

25. Sinha, A., Ishii, M., Hager, G.D., Taylor, R.H.: Endoscopic navigation in the clinic: registration in the absence of preoperative imaging. Int. J. Comput. Assist. Radiol. Surg. 14, 12 (2019). IJCARS-MICCAI 2018

26. Sinha, A., Leonard, S., Reiter, A., Ishii, M., Taylor, R.H., Hager, G.D.: Automatic segmentation and statistical shape modeling of the paranasal sinuses to estimate natural variations. In: Proceedings SPIE Medical Imaging, vol. 9784, pp. 97840D–97840D-8 (2016)

27. Sinha, A., Liu, X., Reiter, A., Ishii, M., Hager, G.D., Taylor, R.H.: Endoscopic navigation in the absence of CT imaging. In: Frangi, A.F., Schnabel, J.A., Davatzikos, C., Alberola-López, C., Fichtinger, G. (eds.) MICCAI 2018. LNCS, vol. 11073, pp. 64–71. Springer, Cham (2018). https://doi.org/10.1007/978-3-030-00937-3_8

28. Sinha, A., Reiter, A., Leonard, S., Ishii, M., Hager, G.D., Taylor, R.H.: Simultaneous segmentation and correspondence improvement using statistical modes. In: Proceedings SPIE Medical Imaging, vol. 10133, pp. 101331B–101331B-8 (2017)

Acknowledgment

This work was funded by the Johns Hopkins University (JHU) Provost’s Postdoctoral Fellowship and other JHU internal funds. We would also like to thank Seth D. Billings for his invaluable feedback.

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Sinha, A., Liu, X., Ishii, M., Hager, G.D., Taylor, R.H. (2019). Recovering Physiological Changes in Nasal Anatomy with Confidence Estimates. In: Greenspan, H., et al. Uncertainty for Safe Utilization of Machine Learning in Medical Imaging and Clinical Image-Based Procedures. CLIP 2019, UNSURE 2019. Lecture Notes in Computer Science, vol 11840. Springer, Cham. https://doi.org/10.1007/978-3-030-32689-0_12

DOI: https://doi.org/10.1007/978-3-030-32689-0_12

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-32688-3

Online ISBN: 978-3-030-32689-0

eBook Packages: Computer Science, Computer Science (R0)