Abstract

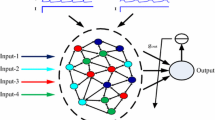

A chaotic spiking neural network serves as the main component (the “liquid”) of a liquid state machine (LSM), a very promising approach to applying neural networks to the online analysis of dynamic data streams. The ability of an LSM to recognize complex dynamic patterns rests on the “memory” of its liquid component, that is, the prolonged reaction of its neural network to input stimuli. This paper studies a generalization of the LSM called the self-organizing LSM, in which the spiking neural network has synaptic plasticity switched on. It is demonstrated that memory appears in such networks under certain locality conditions on their connectivity. A genetic algorithm is used to determine the parameters of the neuron model, the synaptic plasticity rule, and the connectivity that are optimal with respect to memory characteristics.
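The parameter search mentioned above can be pictured with a minimal genetic-algorithm sketch. Everything in it is an assumption made for illustration: the abstract does not describe the encoding, the operators, or the memory criterion that were actually used. Here `toy_memory_score` is a hypothetical placeholder fitness, and each parameter set is encoded as a list of numbers in [0, 1]. In a real study the fitness would be obtained by simulating the network and measuring how long its response to a stimulus persists.

```python
import random

def toy_memory_score(params):
    # Placeholder fitness (an assumption, not the paper's criterion):
    # reaches its maximum of 1.0 when every parameter equals 0.5.
    return 1.0 - sum((p - 0.5) ** 2 for p in params) / len(params)

def evolve(fitness, n_params=4, pop_size=20, generations=40, seed=0):
    rng = random.Random(seed)
    # Random initial population of parameter vectors in [0, 1].
    pop = [[rng.random() for _ in range(n_params)] for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        elite = pop[: pop_size // 4]          # keep the best quarter unchanged
        children = []
        while len(elite) + len(children) < pop_size:
            a, b = rng.sample(elite, 2)       # pick two distinct parents
            cut = rng.randrange(1, n_params)  # one-point crossover
            child = a[:cut] + b[cut:]
            i = rng.randrange(n_params)       # mutate one gene with Gaussian noise
            child[i] = min(1.0, max(0.0, child[i] + rng.gauss(0.0, 0.1)))
            children.append(child)
        pop = elite + children
    return max(pop, key=fitness)

best = evolve(toy_memory_score)
print(best, toy_memory_score(best))
```

Because the best quarter of each generation is carried over unchanged, the best score never decreases from one generation to the next. This choice favors stability over exploration and is one of several reasonable options.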

Acknowledgements

I would like to thank Andrey Lavrentyev and Artyom Nechiporuk for valuable discussion. I am grateful to Kaspersky Lab for the powerful GPU computer provided.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Kiselev, M. (2020). Chaotic Spiking Neural Network Connectivity Configuration Leading to Memory Mechanism Formation. In: Kryzhanovsky, B., Dunin-Barkowski, W., Redko, V., Tiumentsev, Y. (eds) Advances in Neural Computation, Machine Learning, and Cognitive Research III. NEUROINFORMATICS 2019. Studies in Computational Intelligence, vol 856. Springer, Cham. https://doi.org/10.1007/978-3-030-30425-6_47

DOI: https://doi.org/10.1007/978-3-030-30425-6_47

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-30424-9

Online ISBN: 978-3-030-30425-6

eBook Packages: Intelligent Technologies and Robotics (R0)